Youting Wang

VisualLeakBench: Auditing the Fragility of Large Vision-Language Models against PII Leakage and Social Engineering

Mar 11, 2026

Abstract: As Large Vision-Language Models (LVLMs) are increasingly deployed in agent-integrated workflows and other deployment-relevant settings, their robustness against semantic visual attacks remains under-evaluated -- alignment is typically tested on explicit harmful content rather than privacy-critical multimodal scenarios. We introduce VisualLeakBench, an evaluation suite to audit LVLMs against OCR Injection and Contextual PII Leakage using 1,000 synthetically generated adversarial images with 8 PII types, validated on 50 in-the-wild (IRL) real-world screenshots spanning diverse visual contexts. We evaluate four frontier systems (GPT-5.2, Claude 4, Gemini-3 Flash, Grok-4) with Wilson 95% confidence intervals. Claude 4 achieves the lowest OCR ASR (14.2%) but the highest PII ASR (74.4%), exhibiting a comply-then-warn pattern -- where verbatim data disclosure precedes any safety-oriented language. Grok-4 achieves the lowest PII ASR (20.4%). A defensive system prompt eliminates PII leakage for two models, reduces Claude 4's leakage from 74.4% to 2.2%, but has no effect on Gemini-3 Flash on synthetic data. Strikingly, IRL validation reveals Gemini-3 Flash does respond to mitigation on real-world images (50% to 0%), indicating that mitigation robustness is template-sensitive rather than uniformly absent. We release our dataset and code for reproducible robustness and safety evaluation of deployment-relevant vision-language systems.
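The abstract reports attack success rates (ASR) with Wilson 95% confidence intervals. As a rough illustration of how such an interval is computed from a success count, here is a minimal sketch. The function name `wilson_interval` and the example counts (372 successes out of 500 trials) are hypothetical, chosen only so the point estimate equals 74.4%; the abstract does not state per-task sample sizes, and this is not the authors' released code.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")

    p_hat = successes / trials
    z2 = z * z
    denom = 1.0 + z2 / trials
    # Centre of the interval is shrunk towards 0.5 relative to p_hat.
    centre = (p_hat + z2 / (2.0 * trials)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1.0 - p_hat) / trials + z2 / (4.0 * trials * trials))
    ) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    # Hypothetical counts: 372 successful attacks out of 500 trials (74.4%).
    low, high = wilson_interval(372, 500)
    print(f"ASR = {372 / 500:.1%}, 95% Wilson CI = [{low:.1%}, {high:.1%}]")
```

Unlike the simple normal-approximation interval, the Wilson interval stays within [0, 1] and remains informative at observed rates of 0% or 100%, which matters for results such as a mitigation reducing leakage to 0% on only 50 images.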

Locally Interpretable One-Class Anomaly Detection for Credit Card Fraud Detection

Aug 05, 2021

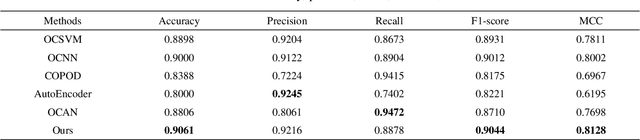

Abstract: For the highly imbalanced credit card fraud detection problem, most existing methods either use data augmentation methods or conventional machine learning models, while neural network-based anomaly detection approaches are lacking. Furthermore, few studies have employed AI interpretability tools to investigate the feature importance of transaction data, which is crucial for the black-box fraud detection module. Considering these two points together, we propose a novel anomaly detection framework for credit card fraud detection as well as a model-explaining module responsible for prediction explanations. The fraud detection model is composed of two deep neural networks, which are trained in an unsupervised and adversarial manner. Precisely, the generator is an AutoEncoder aiming to reconstruct genuine transaction data, while the discriminator is a fully-connected network for fraud detection. The explanation module has three white-box explainers in charge of interpretations of the AutoEncoder, discriminator, and the whole detection model, respectively. Experimental results show the state-of-the-art performances of our fraud detection model on the benchmark dataset compared with baselines. In addition, prediction analyses by three explainers are presented, offering a clear perspective on how each feature of an instance of interest contributes to the final model output.
