Youquan He

Thinking in Structures: Evaluating Spatial Intelligence through Reasoning on Constrained Manifolds

Feb 08, 2026

Abstract: Spatial intelligence is crucial for vision-language models (VLMs) in the physical world, yet many benchmarks evaluate largely unconstrained scenes where models can exploit 2D shortcuts. We introduce SSI-Bench, a VQA benchmark for spatial reasoning on constrained manifolds, built from complex real-world 3D structures whose feasible configurations are tightly governed by geometric, topological, and physical constraints. SSI-Bench contains 1,000 ranking questions spanning geometric and topological reasoning and requiring a diverse repertoire of compositional spatial operations, such as mental rotation, cross-sectional inference, occlusion reasoning, and force-path reasoning. It is created via a fully human-centered pipeline: ten researchers spent over 400 hours curating images, annotating structural components, and designing questions to minimize pixel-level cues. Evaluating 31 widely used VLMs reveals a large gap to humans: the best open-source model achieves 22.2% accuracy and the strongest closed-source model reaches 33.6%, while humans score 91.6%. Encouraging models to think yields only marginal gains, and error analysis points to failures in structural grounding and constraint-consistent 3D reasoning. Project page: https://ssi-bench.github.io.

Misregistration Measurement and Improvement for Sentinel-1 SAR and Sentinel-2 Optical images

May 22, 2020

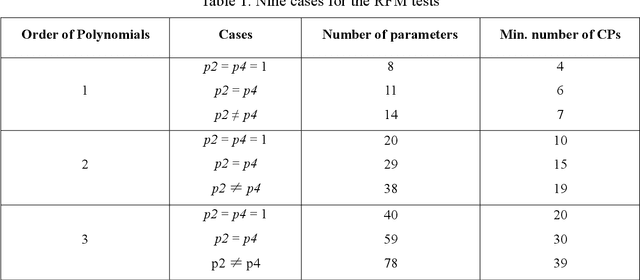

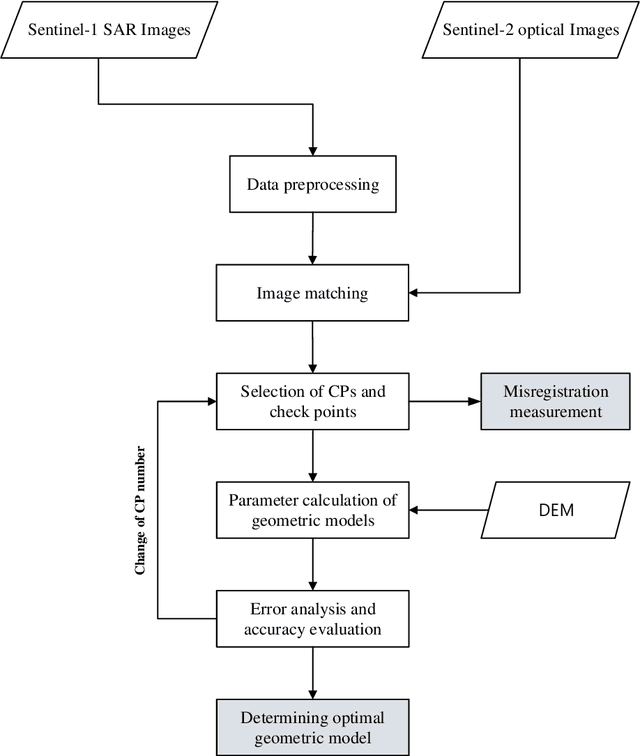

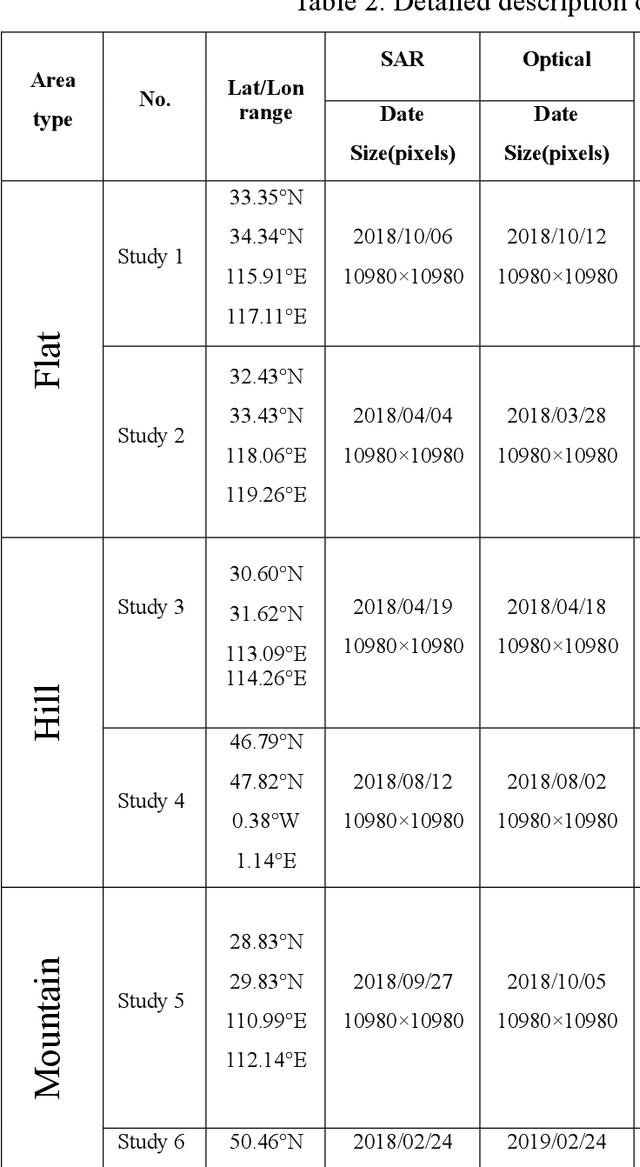

Abstract: Co-registering the Sentinel-1 SAR and Sentinel-2 optical data of the European Space Agency (ESA) is of great importance for many remote sensing applications. ESA's Sentinel-1 and Sentinel-2 product specifications state that Sentinel-1 SAR L1 and Sentinel-2 optical L1C images have a co-registration accuracy within 2 pixels. However, we find that the actual misregistration errors between such images are much larger. This paper measures the misregistration errors by applying a block-based multimodal image matching strategy to six pairs of Sentinel-1 SAR and Sentinel-2 optical images, located in China and Europe and covering three terrain types: flat, hilly, and mountainous areas. Our experimental results show that the misregistration errors are 20-30 pixels in flat areas and 20-40 pixels in hilly areas, increasing to 50-60 pixels in mountainous areas. To eliminate the misregistration, we use several representative geometric transformation models, such as polynomial models, projective models, and rational function models, to co-register the two types of images, and we compare and analyze their registration accuracy under different numbers of control points and different terrains. Our analysis shows that the third-order polynomial achieves the most satisfactory registration results: its registration accuracy is better than 1.0 pixel (10 m pixels) in flat areas, about 1.5 pixels in hilly areas, and between 1.8 and 2.3 pixels in mountainous areas. In summary, this paper is the first to reveal and measure the misregistration between Sentinel-1 SAR L1 and Sentinel-2 optical L1C images. Moreover, we identify a relatively optimal geometric transformation model for co-registering the two types of images.
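The abstract compares transformation models fitted to control points and singles out the third-order polynomial. As a rough illustration only, the sketch below fits a full 2D third-order polynomial (10 terms per output coordinate) by ordinary least squares with NumPy and reports the RMSE, in pixels, on held-out check points. The function names (`fit_poly3`, `apply_poly3`, `rmse`), the coordinate normalization, and the synthetic control points are hypothetical assumptions, not the authors' implementation; in practice the control points would come from the block-based matching step.

```python
import numpy as np


def poly3_terms(x, y):
    """Design matrix for a full 2D third-order polynomial (10 terms)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return np.column_stack([
        np.ones_like(x), x, y,
        x**2, x * y, y**2,
        x**3, x**2 * y, x * y**2, y**3,
    ])


def fit_poly3(src, dst):
    """Least-squares fit of a third-order polynomial mapping src (N, 2) -> dst (N, 2).

    Coordinates are centred and scaled first (an assumption for numerical
    stability, since cubed pixel coordinates are very large).
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    if len(src) < 10:
        raise ValueError("a third-order 2D polynomial needs at least 10 control points")
    center = src.mean(axis=0)
    scale = src.std(axis=0)
    scale[scale == 0] = 1.0  # avoid division by zero for degenerate layouts
    q = (src - center) / scale
    coeffs, *_ = np.linalg.lstsq(poly3_terms(q[:, 0], q[:, 1]), dst, rcond=None)
    return coeffs, center, scale  # coeffs has shape (10, 2)


def apply_poly3(model, pts):
    """Map points (N, 2) through a fitted model returned by fit_poly3."""
    coeffs, center, scale = model
    q = (np.asarray(pts, dtype=float) - center) / scale
    return poly3_terms(q[:, 0], q[:, 1]) @ coeffs


def rmse(pred, ref):
    """Root-mean-square Euclidean error between two (N, 2) point sets."""
    d = np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float)
    return float(np.sqrt(np.mean(np.sum(d**2, axis=1))))


if __name__ == "__main__":
    # Hypothetical synthetic control points: a shift plus a mild quadratic warp.
    rng = np.random.default_rng(0)
    src = rng.uniform(0, 10000, size=(60, 2))
    dst = src + np.array([25.0, -30.0]) + 1e-5 * src**2
    model = fit_poly3(src[:40], dst[:40])  # 40 control points
    print("check-point RMSE (pixels):", rmse(apply_poly3(model, src[40:]), dst[40:]))
```

Because the synthetic warp is itself a low-order polynomial, the check-point RMSE should be essentially zero; with real SAR-optical matches, terrain-induced distortion and matching noise would leave a residual, which is what the abstract's per-terrain accuracy figures describe.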
