Xutong Mu

When Convenience Becomes Risk: A Semantic View of Under-Specification in Host-Acting Agents

Mar 22, 2026

Abstract: Host-acting agents promise a convenient interaction model in which users specify goals and the system determines how to realize them. We argue that this convenience introduces a distinct security problem: semantic under-specification in goal specification. User instructions are typically goal-oriented, yet they often leave process constraints, safety boundaries, persistence, and exposure insufficiently specified. As a result, the agent must complete missing execution semantics before acting, and this completion can produce risky host-side plans even when the user-stated goal is benign. In this paper, we develop a semantic threat model, present a taxonomy of semantic-induced risky completion patterns, and study the phenomenon through an OpenClaw-centered case study and execution-trace analysis. We further derive defense design principles for making execution boundaries explicit and constraining risky completion. These findings suggest that securing host-acting agents requires governing not only which actions are allowed at execution time, but also how goal-only instructions are translated into executable plans.
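To make "making execution boundaries explicit" concrete, here is a minimal sketch assuming an agent's plan can be represented as a list of typed steps. `ExecutionBoundary`, `PlanStep`, `check_plan`, and the action names are hypothetical illustrations, not OpenClaw's API and not the authors' defense. The point it shows: a goal like "get my app running" says nothing about persistence or network exposure, so the boundary keeps both at restrictive defaults and flags any step where the agent filled them in on its own.

```python
from dataclasses import dataclass

# Hypothetical action vocabulary: which plan actions touch which risk dimension.
PERSISTENT_ACTIONS = {"install_cron", "enable_service", "edit_shell_rc"}
EXPOSING_ACTIONS = {"bind_port", "open_firewall"}


@dataclass(frozen=True)
class ExecutionBoundary:
    """Constraints stated explicitly instead of being left for the agent to complete."""
    writable_prefixes: tuple = ()
    allow_persistence: bool = False
    allow_network_exposure: bool = False


@dataclass(frozen=True)
class PlanStep:
    action: str  # e.g. "write_file", "install_cron", "bind_port"
    target: str  # the path, schedule, or address the step acts on


def check_plan(steps, boundary):
    """Return (step, reason) pairs for steps that go beyond the explicit boundary."""
    violations = []
    for step in steps:
        if step.action == "write_file":
            if not any(step.target.startswith(p) for p in boundary.writable_prefixes):
                violations.append((step, "writes outside declared paths"))
        elif step.action in PERSISTENT_ACTIONS:
            if not boundary.allow_persistence:
                violations.append((step, "introduces persistence"))
        elif step.action in EXPOSING_ACTIONS:
            # In this sketch, loopback-only listeners are not counted as exposure.
            if not boundary.allow_network_exposure and not step.target.startswith("127.0.0.1:"):
                violations.append((step, "exposes a network service"))
    return violations


# Goal: "get my app running". Persistence and exposure were never mentioned,
# so the boundary leaves both at their restrictive defaults.
boundary = ExecutionBoundary(writable_prefixes=("/home/user/project/",))
plan = [
    PlanStep("write_file", "/home/user/project/app.py"),
    PlanStep("install_cron", "@reboot python /home/user/project/app.py"),
    PlanStep("bind_port", "0.0.0.0:8080"),
]
for step, reason in check_plan(plan, boundary):
    print(f"{step.action}: {reason}")
# prints:
# install_cron: introduces persistence
# bind_port: exposes a network service
```

Note that actions outside the known sets pass silently here; a stricter variant would default-deny any action it cannot classify, which better matches the idea of constraining risky completion rather than enumerating known-bad steps.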

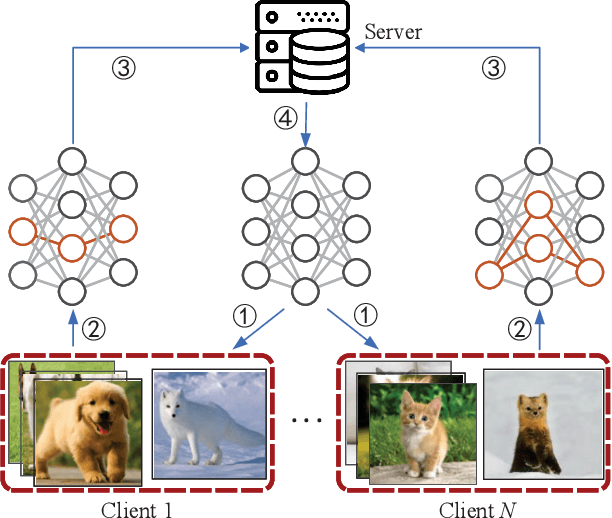

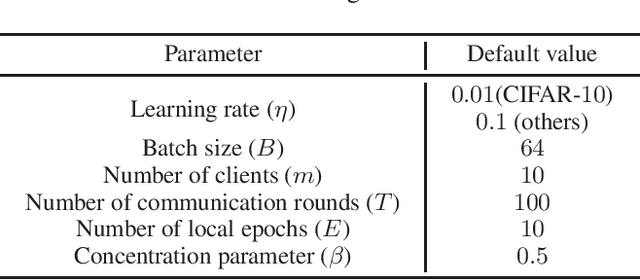

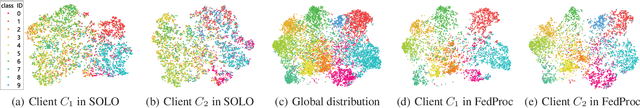

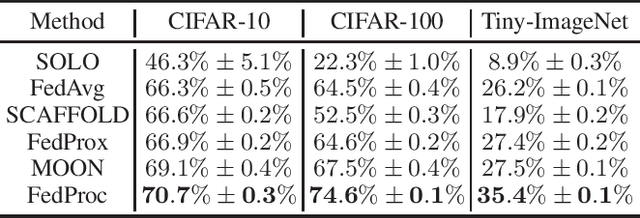

FedProc: Prototypical Contrastive Federated Learning on Non-IID data

Sep 25, 2021

Abstract: Federated learning allows multiple clients to collaboratively train high-performance deep learning models while keeping their training data local. However, when the clients' local data are not independent and identically distributed (i.e., non-IID), this form of collaborative learning is difficult to carry out efficiently. Although significant effort has been devoted to this challenge, performance on image classification tasks remains unsatisfactory. In this paper, we propose FedProc (prototypical contrastive federated learning), a simple and effective federated learning framework. The key idea is to use prototypes as global knowledge to correct the local training of each client. We design a local network architecture and a global prototypical contrastive loss to regulate the training of local models, which makes local objectives consistent with the global optimum. As a result, the converged global model achieves good performance on non-IID data. Experimental results show that, compared with state-of-the-art federated learning methods, FedProc improves accuracy by $1.6\%$ to $7.9\%$ with acceptable computational cost.
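The idea of using prototypes as global knowledge can be sketched in a few functions. This is a minimal illustration under stated assumptions, not FedProc's exact formulation: it assumes a prototype is the per-class mean feature vector on a client, that the server averages client prototypes weighted by sample count, and that the contrastive term is an InfoNCE-style loss over cosine similarity with a temperature `tau`. The function names, the weighting, and `tau=0.5` are all hypothetical choices.

```python
import math
from collections import defaultdict


def class_prototypes(features, labels):
    """Client side (assumed): mean feature vector per class, plus per-class counts."""
    sums, counts = {}, defaultdict(int)
    for f, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(f)
        sums[y] = [s + v for s, v in zip(sums[y], f)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}, dict(counts)


def aggregate_prototypes(client_results):
    """Server side (assumed): sample-weighted average of each class's client prototypes."""
    total, weight = {}, defaultdict(int)
    for protos, counts in client_results:
        for y, p in protos.items():
            n = counts[y]
            acc = total.setdefault(y, [0.0] * len(p))
            total[y] = [a + n * v for a, v in zip(acc, p)]
            weight[y] += n
    return {y: [a / weight[y] for a in total[y]] for y in total}


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / max(na * nb, 1e-12)  # guard against zero-norm vectors


def proto_contrastive_loss(feature, label, global_protos, tau=0.5):
    """InfoNCE-style term (assumed form): pull a local feature toward its class's
    global prototype and push it away from the other classes' prototypes."""
    logits = {y: cosine(feature, p) / tau for y, p in global_protos.items()}
    m = max(logits.values())  # subtract the max for numerical stability
    log_denom = m + math.log(sum(math.exp(v - m) for v in logits.values()))
    return log_denom - logits[label]


# Two clients with skewed (non-IID) label counts.
client_a = class_prototypes([[1.0, 0.0], [0.0, 1.0]], [0, 1])
client_b = class_prototypes([[3.0, 0.0], [3.0, 0.0], [0.0, 1.0]], [0, 0, 1])
global_protos = aggregate_prototypes([client_a, client_b])

# A feature close to class 0's prototype: the loss is lower when labelled 0.
print(proto_contrastive_loss([1.0, 0.1], 0, global_protos))
print(proto_contrastive_loss([1.0, 0.1], 1, global_protos))
```

In a real training loop this term would be computed on a mini-batch of network features and combined with the usual classification loss; how FedProc weights the two and which layer supplies the features are details this sketch does not attempt to reproduce.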
