Xiaowu Sun

ODySSeI: An Open-Source End-to-End Framework for Automated Detection, Segmentation, and Severity Estimation of Lesions in Invasive Coronary Angiography Images

Mar 20, 2026

Abstract: Invasive Coronary Angiography (ICA) is the clinical gold standard for the assessment of coronary artery disease. However, its interpretation remains subjective and prone to intra- and inter-operator variability. In this work, we introduce ODySSeI: an Open-source end-to-end framework for automated Detection, Segmentation, and Severity estimation of lesions in ICA images. ODySSeI integrates deep learning-based lesion detection and lesion segmentation models trained using a novel Pyramidal Augmentation Scheme (PAS) to enhance robustness and real-time performance across diverse patient cohorts (2149 patients from Europe, North America, and Asia). Furthermore, we propose a quantitative coronary angiography-free Lesion Severity Estimation (LSE) technique that directly computes the Minimum Lumen Diameter (MLD) and diameter stenosis from the predicted lesion geometry. Extensive evaluation on both in-distribution and out-of-distribution clinical datasets demonstrates ODySSeI's strong generalizability. Our PAS yields larger performance gains on highly complex tasks than on relatively simpler ones: notably, a 2.5-fold increase in lesion detection performance versus a 1-3% increase in lesion segmentation performance over their respective baselines. Our LSE technique achieves high accuracy, with predicted MLD values differing by only ±2-3 pixels from the corresponding ground truths. On average, ODySSeI processes a raw ICA image within a few seconds on a CPU and in a fraction of a second on a GPU, and it is available as a plug-and-play web interface at swisscardia.epfl.ch. Overall, this work establishes ODySSeI as a comprehensive, open-source framework that supports automated, reproducible, and scalable ICA analysis for real-time clinical decision-making.
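The abstract states that LSE computes MLD and diameter stenosis directly from the predicted lesion geometry. The conventional quantitative definition relates the two as %DS = (1 − MLD / D_ref) × 100, where D_ref is a reference vessel diameter. The Python sketch below assumes a lumen-diameter profile (in pixels) has already been extracted along the vessel centerline and takes D_ref as the mean of a few proximal and distal samples; both that input format and the reference rule are hypothetical choices, not ODySSeI's documented method.

```python
from typing import Sequence, Tuple


def diameter_stenosis(diameters_px: Sequence[float], ref_window: int = 3) -> Tuple[float, float, float]:
    """Return (MLD, reference diameter, percent diameter stenosis).

    Hypothetical sketch: `diameters_px` is a lumen-diameter profile sampled along
    the vessel centerline, and the reference diameter is assumed to be the mean of
    the first and last `ref_window` samples (presumed healthy segments).
    """
    if ref_window < 1:
        raise ValueError("ref_window must be at least 1")
    if len(diameters_px) < 2 * ref_window + 1:
        raise ValueError("profile too short for the chosen reference window")
    mld = min(diameters_px)  # minimum lumen diameter
    proximal = diameters_px[:ref_window]
    distal = diameters_px[-ref_window:]
    d_ref = (sum(proximal) + sum(distal)) / (2 * ref_window)
    percent_ds = (1.0 - mld / d_ref) * 100.0
    return mld, d_ref, percent_ds


mld, d_ref, ds = diameter_stenosis([10, 10, 10, 8, 5, 8, 10, 10, 10])
print(mld, d_ref, ds)  # 5 10.0 50.0
```

Because the formula is a ratio, the result is the same whether diameters are in pixels or millimetres, which fits a pipeline that works in image coordinates.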

CM-UNet: A Self-Supervised Learning-Based Model for Coronary Artery Segmentation in X-Ray Angiography

Jul 22, 2025

Abstract: Accurate segmentation of coronary arteries remains a significant challenge in clinical practice, hindering the ability to effectively diagnose and manage coronary artery disease. The lack of large, annotated datasets for model training exacerbates this issue, limiting the development of automated tools that could assist radiologists. To address this, we introduce CM-UNet, which leverages self-supervised pre-training on unannotated datasets and transfer learning on limited annotated data, enabling accurate disease detection while minimizing the need for extensive manual annotations. Fine-tuning CM-UNet with only 18 annotated images instead of 500 resulted in a 15.2% decrease in Dice score, compared to a 46.5% drop in baseline models without pre-training. This demonstrates that self-supervised learning can enhance segmentation performance and reduce dependence on large datasets. This is one of the first studies to highlight the importance of self-supervised learning in improving coronary artery segmentation from X-ray angiography, with potential implications for advancing diagnostic accuracy in clinical practice. By enhancing segmentation accuracy in X-ray angiography images, the proposed approach aims to improve clinical workflows, reduce radiologists' workload, and accelerate disease detection, ultimately contributing to better patient outcomes. The source code is publicly available at https://github.com/CamilleChallier/Contrastive-Masked-UNet.
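The abstract reports its results as changes in Dice score. For reference, the Dice coefficient of a predicted mask A and a ground-truth mask B is 2|A ∩ B| / (|A| + |B|). A minimal pure-Python sketch for flattened binary masks follows; the small `eps` smoothing term (so that two empty masks score 1.0) is a common convention assumed here, not something the abstract specifies.

```python
from typing import Sequence


def dice(pred: Sequence[int], target: Sequence[int], eps: float = 1e-8) -> float:
    """Dice coefficient 2|A ∩ B| / (|A| + |B|) for flattened binary masks."""
    if len(pred) != len(target):
        raise ValueError("masks must contain the same number of pixels")
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    # eps keeps the ratio defined when both masks are empty
    return (2.0 * intersection + eps) / (total + eps)


print(round(dice([1, 1, 0, 0], [1, 0, 1, 0]), 4))  # 0.5
```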

Biomedical image analysis competitions: The state of current participation practice

Dec 16, 2022

Abstract: The number of international benchmarking competitions is steadily increasing in various fields of machine learning (ML) research and practice. So far, however, little is known about the common practice as well as the bottlenecks faced by the community in tackling the research questions posed. To shed light on the status quo of algorithm development in the specific field of biomedical imaging analysis, we designed an international survey that was issued to all participants of challenges conducted in conjunction with the IEEE ISBI 2021 and MICCAI 2021 conferences (80 competitions in total). The survey covered participants' expertise and working environments, their chosen strategies, as well as algorithm characteristics. A median of 72% of challenge participants took part in the survey. According to our results, knowledge exchange was the primary incentive (70%) for participation, while the reception of prize money played only a minor role (16%). While a median of 80 working hours was spent on method development, a large portion of participants (32%) stated that they did not have enough time for method development. 25% perceived the infrastructure to be a bottleneck. Overall, 94% of all solutions were deep learning-based. Of these, 84% were based on standard architectures. 43% of the respondents reported that the data samples (e.g., images) were too large to be processed at once. This was most commonly addressed by patch-based training (69%), downsampling (37%), and solving 3D analysis tasks as a series of 2D tasks. K-fold cross-validation on the training set was performed by only 37% of the participants, and only 50% of the participants performed ensembling, based on either multiple identical models (61%) or heterogeneous models (39%). 48% of the respondents applied postprocessing steps.

Neurosymbolic Motion and Task Planning for Linear Temporal Logic Tasks

Oct 11, 2022

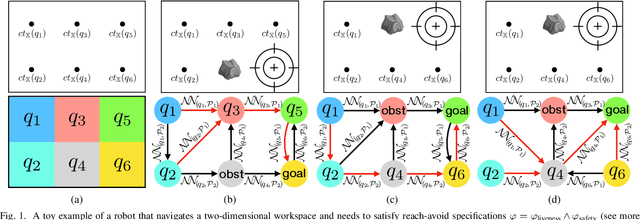

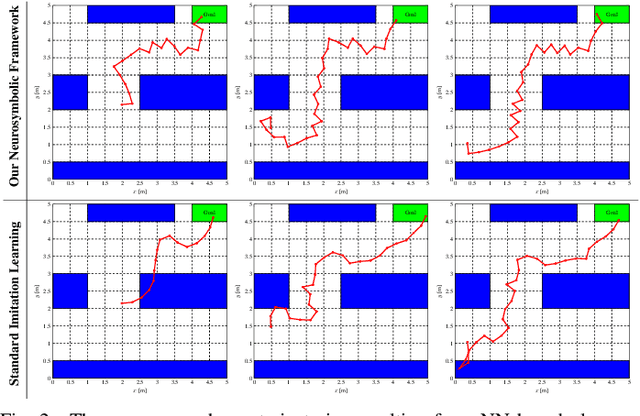

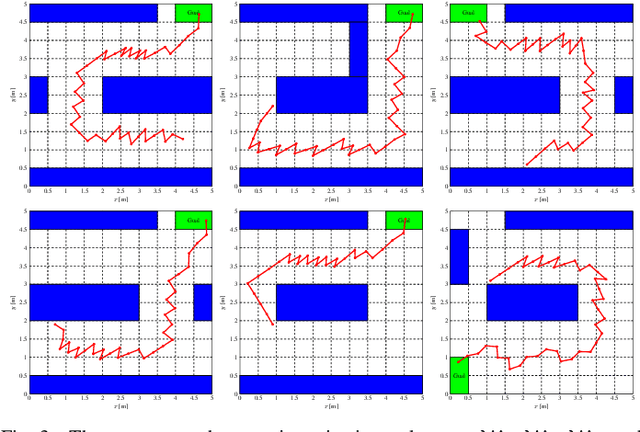

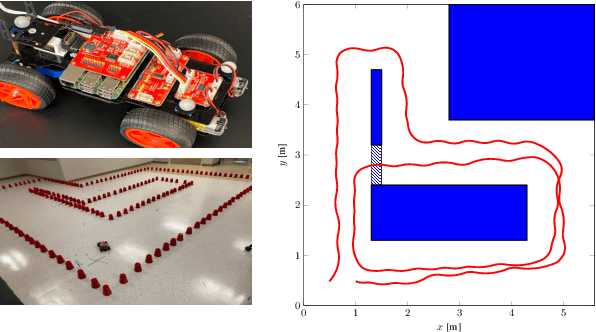

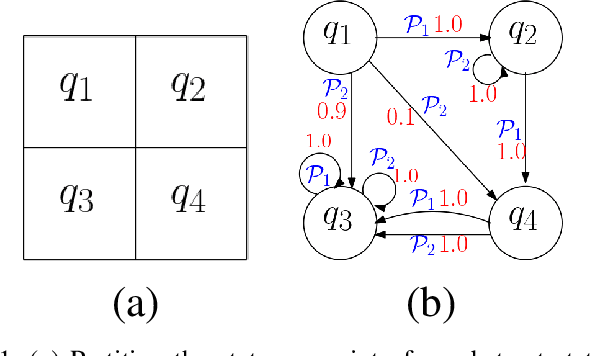

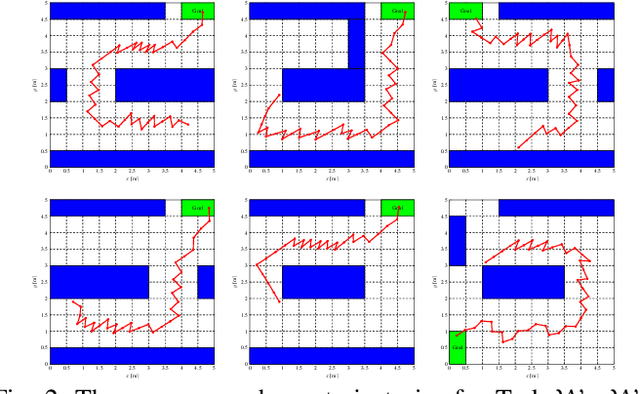

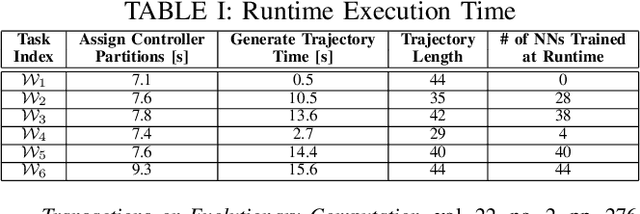

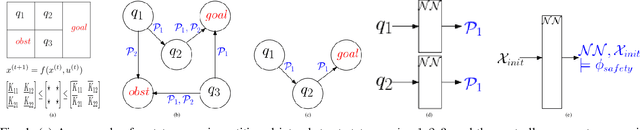

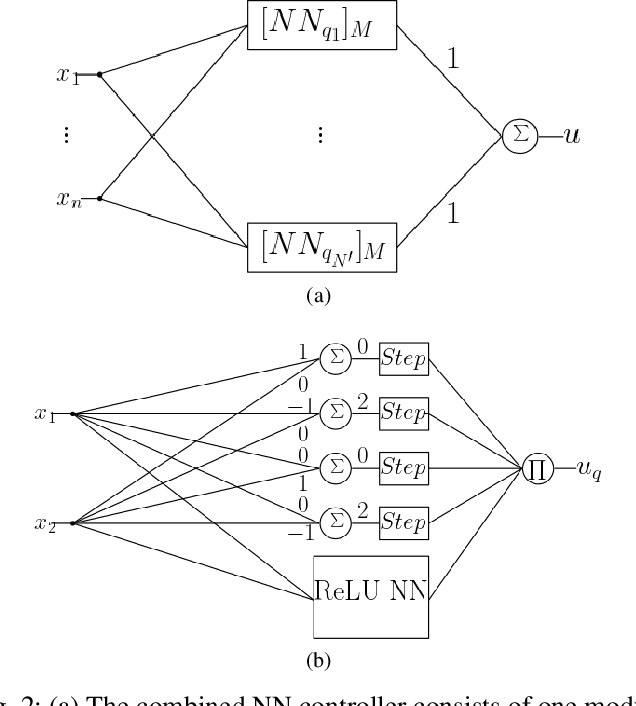

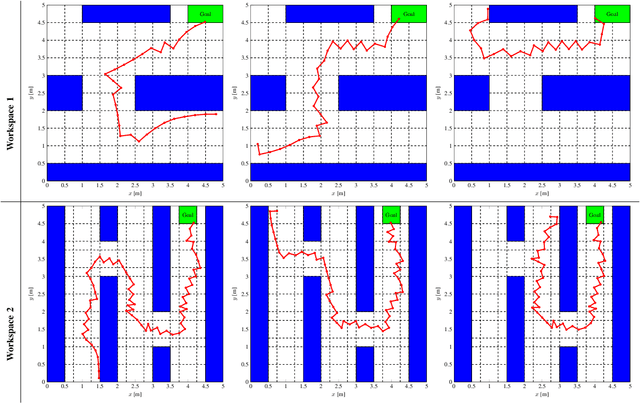

Abstract: This paper presents a neurosymbolic framework to solve motion planning problems for mobile robots involving temporal goals. The temporal goals are described using temporal logic formulas such as Linear Temporal Logic (LTL) to capture complex tasks. The proposed framework trains Neural Network (NN)-based planners that enjoy strong correctness guarantees when applied to unseen tasks, i.e., tasks whose exact specification (including the workspace, the LTL formula, and the dynamic constraints of the robot) is unknown during the training of the NNs. Our approach to achieving theoretical guarantees and computational efficiency is based on two insights. First, we incorporate a symbolic model into the training of NNs such that the resulting NN-based planner inherits the interpretability and correctness guarantees of the symbolic model. Moreover, the symbolic model serves as a discrete "memory", which is necessary for satisfying temporal logic formulas. Second, we train a library of neural networks offline and combine a subset of the trained NNs into a single NN-based planner at runtime when a task is revealed. In particular, we develop a novel constrained NN training procedure, named formal NN training, to enforce that each neural network in the library represents a "symbol" in the symbolic model. As a result, our neurosymbolic framework enjoys the scalability and flexibility benefits of machine learning and inherits the provable guarantees of control-theoretic and formal-methods techniques. We demonstrate the effectiveness of our framework both in simulations and on an actual robotic vehicle, and show that our framework can generalize to unknown tasks where state-of-the-art meta-reinforcement learning techniques fail.
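The abstract argues that the symbolic model serves as a discrete "memory" for temporal goals. The toy Python sketch below illustrates only that point and is not the paper's framework: a hand-written automaton for the LTL formula F(a ∧ F b) ("reach a, then reach b") decides which of two controllers to run, so the same robot position calls for different actions depending on the automaton state. The 1D single-integrator dynamics, the regions, and the proportional `go_to` controllers (standing in for trained NNs) are all hypothetical.

```python
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical 1D workspace with two labelled regions.
REGIONS: Dict[str, Tuple[float, float]] = {"a": (1.5, 2.5), "b": (-2.5, -1.5)}


def labels(x: float) -> Set[str]:
    """Atomic propositions true at position x."""
    return {name for name, (lo, hi) in REGIONS.items() if lo <= x <= hi}


def dfa_step(q: int, props: Set[str]) -> int:
    """DFA for F(a & F b): q0 = a not yet seen, q1 = waiting for b, q2 = accepting."""
    if q == 0 and "a" in props:
        q = 1
    if q == 1 and "b" in props:
        q = 2
    return q


def go_to(target: float, gain: float = 0.5) -> Callable[[float], float]:
    """Stand-in for one trained controller in the library: steer toward `target`."""
    return lambda x: gain * (target - x)


# The automaton state selects which controller runs: this is the discrete memory.
PLAN: Dict[int, Callable[[float], float]] = {0: go_to(2.0), 1: go_to(-2.0)}


def run(x: float = 0.0, max_steps: int = 50) -> Tuple[int, List[Tuple[float, int]]]:
    """Simulate x_{k+1} = x_k + u_k until the DFA accepts or max_steps is reached."""
    q = dfa_step(0, labels(x))
    trace = [(x, q)]
    for _ in range(max_steps):
        if q == 2:
            break
        x = x + PLAN[q](x)
        q = dfa_step(q, labels(x))
        trace.append((x, q))
    return q, trace


final_q, trace = run()
print(final_q)  # 2
print(PLAN[0](0.0), PLAN[1](0.0))  # 1.0 -1.0
```

A memoryless controller that sees only the position x could not reproduce this behavior, since at x = 0.0 it must push right before visiting a and left afterwards.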

Provably Safe Model-Based Meta Reinforcement Learning: An Abstraction-Based Approach

Sep 03, 2021

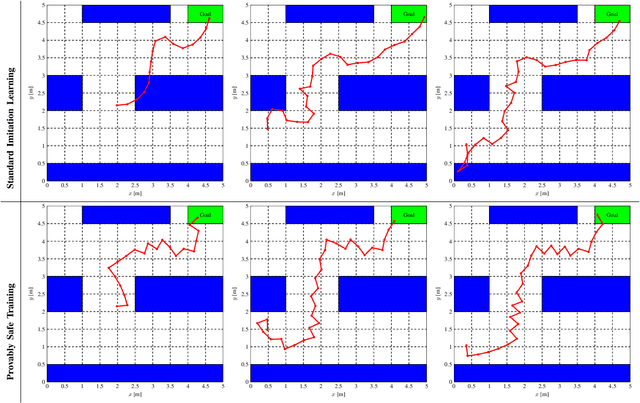

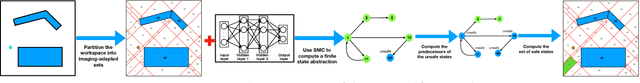

Abstract: While conventional reinforcement learning focuses on designing agents that can perform one task, meta-learning aims instead to design agents that can generalize to different tasks (e.g., environments, obstacles, and goals) that were not considered during their design or training. In this spirit, this paper considers the problem of training a provably safe Neural Network (NN) controller for uncertain nonlinear dynamical systems that can generalize to new tasks that were not present in the training data while preserving strong safety guarantees. Our approach is to learn a set of NN controllers during the training phase. When the task becomes available at runtime, our framework carefully selects a subset of these NN controllers and composes them to form the final NN controller. Critical to our approach is the ability to compute a finite-state abstraction of the nonlinear dynamical system. This abstract model captures the behavior of the closed-loop system under all possible NN weights, and is used both to train the NNs and to compose them when the task becomes available. We provide theoretical guarantees that govern the correctness of the resulting NN. We evaluated our approach on the problem of controlling a wheeled robot in cluttered environments that were not present in the training data.
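A finite-state abstraction is central to the approach described in this abstract. As a generic illustration, and not the paper's construction (which captures the closed-loop behavior under all possible NN weights), the Python sketch below grids a 1D state space, computes the successor cells of each cell under each of a few discrete inputs, and then keeps the largest set of cells from which some input can stay safe forever. The dynamics `f` and the input set are hypothetical; `f` is chosen to be increasing in x, so the image of an interval cell is exactly the interval between the images of its endpoints.

```python
import math
from typing import Dict, Optional, Sequence, Set, Tuple

INPUTS: Tuple[float, ...] = (-0.5, 0.0, 0.5)  # hypothetical finite input set


def f(x: float, u: float) -> float:
    """Hypothetical nonlinear dynamics, increasing in x since df/dx = 1 + 0.2*cos(x) > 0."""
    return x + 0.2 * math.sin(x) + u


Transitions = Dict[Tuple[int, float], Optional[Set[int]]]


def build_abstraction(lo: float, hi: float, n: int, inputs: Sequence[float]) -> Transitions:
    """Map (cell, input) to the set of successor cells, or None if the image leaves [lo, hi]."""
    width = (hi - lo) / n
    trans: Transitions = {}
    for i in range(n):
        a, b = lo + i * width, lo + (i + 1) * width
        for u in inputs:
            # Monotonicity in x: the image of [a, b] is exactly [f(a, u), f(b, u)].
            ya, yb = f(a, u), f(b, u)
            if ya < lo or yb > hi:
                trans[(i, u)] = None  # some successor state leaves the domain
                continue
            first = min(int((ya - lo) // width), n - 1)
            last = min(int((yb - lo) // width), n - 1)
            trans[(i, u)] = set(range(first, last + 1))
    return trans


def safe_cells(n: int, inputs: Sequence[float], trans: Transitions) -> Set[int]:
    """Greatest fixed point: cells from which some input keeps every successor safe."""
    safe = set(range(n))
    changed = True
    while changed:
        changed = False
        for i in sorted(safe):  # iterate over a copy so we can discard safely
            if not any(trans[(i, u)] is not None and trans[(i, u)] <= safe for u in inputs):
                safe.discard(i)
                changed = True
    return safe


trans = build_abstraction(-3.0, 3.0, 12, INPUTS)
safe = safe_cells(12, INPUTS, trans)
print(len(safe))  # 12
```

With these particular dynamics every cell has at least one input that keeps it inside the domain, so all 12 cells survive; tighter domains or weaker inputs would shrink the set.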

Provably Correct Training of Neural Network Controllers Using Reachability Analysis

Feb 22, 2021

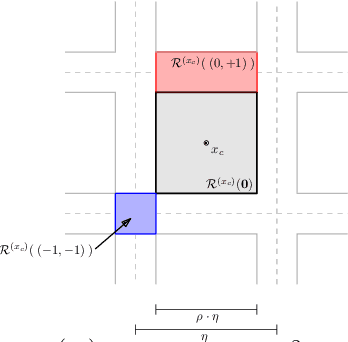

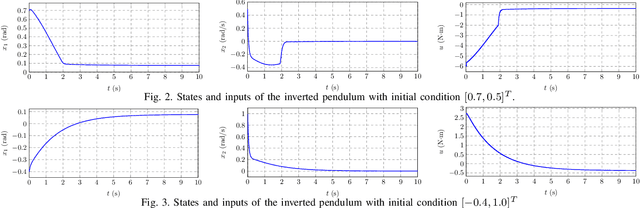

Abstract: In this paper, we consider the problem of training neural network (NN) controllers for cyber-physical systems (CPS) that are guaranteed to satisfy safety and liveness properties. Our approach combines model-based design methodologies for dynamical systems with data-driven approaches to achieve this target. Given a mathematical model of the dynamical system, we compute a finite-state abstract model that captures the closed-loop behavior under all possible neural network controllers. Using this finite-state abstract model, our framework identifies the subset of NN weights that are guaranteed to satisfy the safety requirements. During training, we augment the learning algorithm with an NN weight projection operator that ensures the resulting NN is provably safe. To account for the liveness properties, the proposed framework uses the finite-state abstract model to identify candidate NN weights that may satisfy the liveness properties. Using such candidate NN weights, the proposed framework biases the NN training toward achieving the liveness specification. The guarantees above cannot be ensured without correctness guarantees on the NN architecture, which controls the NN's expressiveness. Therefore, a cornerstone of the proposed framework is the ability to automatically select provably correct NN architectures.
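The weight projection operator in this abstract can be pictured as projected gradient descent: take an ordinary gradient step, then map the weights back onto the set deemed safe. In the paper that set comes from the finite-state abstract model; in the minimal Python sketch below it is simply assumed to be a box of per-weight bounds, for which the Euclidean projection reduces to elementwise clipping. The quadratic loss and the bounds are hypothetical.

```python
from typing import Callable, List, Sequence


def project_box(w: Sequence[float], lower: Sequence[float], upper: Sequence[float]) -> List[float]:
    """Euclidean projection onto the box [lower, upper], i.e. elementwise clipping."""
    return [min(max(wi, lo), hi) for wi, lo, hi in zip(w, lower, upper)]


def projected_gd(
    grad: Callable[[List[float]], List[float]],
    w0: Sequence[float],
    lower: Sequence[float],
    upper: Sequence[float],
    lr: float = 0.1,
    steps: int = 100,
) -> List[float]:
    """Gradient descent in which every iterate is projected back onto the box."""
    w = project_box(w0, lower, upper)
    for _ in range(steps):
        g = grad(w)
        w = project_box([wi - lr * gi for wi, gi in zip(w, g)], lower, upper)
    return w


def quad_grad(w: List[float]) -> List[float]:
    """Gradient of the hypothetical loss (w0 - 3)^2 + (w1 + 1)^2."""
    return [2 * (w[0] - 3), 2 * (w[1] + 1)]


# The unconstrained minimum (3, -1) lies outside the box [0, 1] x [0, 1].
w = projected_gd(quad_grad, [0.5, 0.5], lower=[0.0, 0.0], upper=[1.0, 1.0])
print(w)  # [1.0, 0.0]
```

Every iterate stays inside the assumed safe set, which is the property a projection-based training loop relies on; for non-box safe sets the projection generally requires solving a small optimization problem instead of clipping.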

Two-Level Lattice Neural Network Architectures for Control of Nonlinear Systems

Apr 20, 2020

Abstract: In this paper, we consider the problem of automatically designing a Rectified Linear Unit (ReLU) Neural Network (NN) architecture (number of layers and number of neurons per layer) with the guarantee that it is sufficiently parametrized to control a nonlinear system. Whereas current state-of-the-art techniques rely on hand-picked architectures or heuristic-based search to find such NN architectures, our approach exploits the given model of the system to design an architecture; as a result, we provide a guarantee that the resulting NN architecture is sufficient to implement a controller that satisfies an achievable specification. Our approach exploits two basic ideas. First, assuming that the system can be controlled by an unknown Lipschitz-continuous state-feedback controller with some Lipschitz constant upper-bounded by $K_\text{cont}$, we bound the number of affine functions needed to construct a Continuous Piecewise Affine (CPWA) function that can approximate the unknown Lipschitz-continuous controller. Second, we utilize the authors' recent results on a novel NN architecture named the Two-Level Lattice (TLL) NN architecture, which was shown to be capable of implementing any CPWA function given only the number of affine functions that comprise that CPWA function.
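TLL networks rest on the lattice representation of CPWA functions: given affine functions ℓ_1 to ℓ_N and selector sets s_1 to s_M, f(x) = max_j min_{i ∈ s_j} ℓ_i(x). The Python sketch below evaluates that formula while building the min and max only from ReLUs, via max(a, b) = a + ReLU(b − a) and min(a, b) = a − ReLU(a − b), to show why such a function fits in a ReLU network. The example functions and selectors are hypothetical; this is not the authors' code or their procedure for choosing N and M.

```python
from functools import reduce
from typing import List, Sequence, Tuple

Affine = Tuple[Sequence[float], float]  # (w, b) represents l(x) = w·x + b


def relu(z: float) -> float:
    return max(z, 0.0)


def relu_max(a: float, b: float) -> float:
    return a + relu(b - a)  # equals max(a, b)


def relu_min(a: float, b: float) -> float:
    return a - relu(a - b)  # equals min(a, b)


def affine(l: Affine, x: Sequence[float]) -> float:
    w, b = l
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def tll_eval(local_fns: List[Affine], selectors: List[List[int]], x: Sequence[float]) -> float:
    """Two-level lattice: min within each selector group, then max across groups."""
    values = [affine(l, x) for l in local_fns]
    mins = [reduce(relu_min, (values[i] for i in group)) for group in selectors]
    return reduce(relu_max, mins)


# Saturation sat(x) = max(min(x, 1), 0) with l0 = x, l1 = 1, l2 = 0.
fns = [([1.0], 0.0), ([0.0], 1.0), ([0.0], 0.0)]
groups = [[0, 1], [2]]
print([tll_eval(fns, groups, [x]) for x in (-1.0, 0.5, 2.0)])  # [0.0, 0.5, 1.0]
```

The number of affine functions N and groups M fixes the size of such a network, which is why a bound on N, as in the first idea above, translates into an architecture.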

Formal Verification of Neural Network Controlled Autonomous Systems

Oct 31, 2018

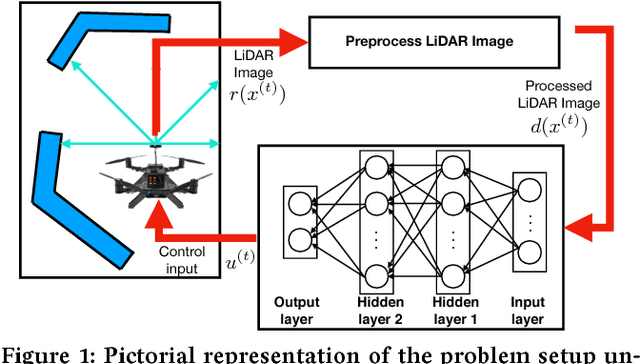

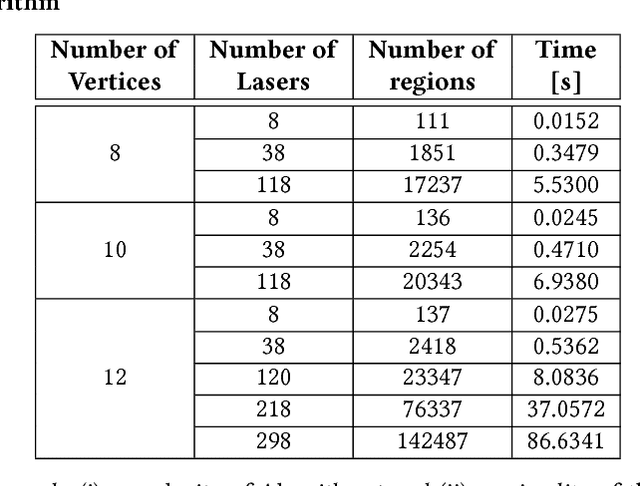

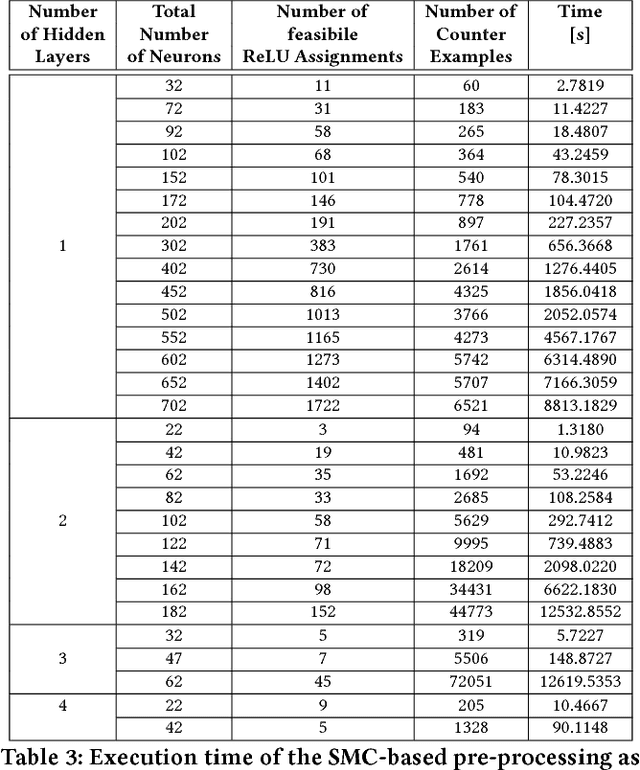

Abstract: In this paper, we consider the problem of formally verifying the safety of an autonomous robot equipped with a Neural Network (NN) controller that processes LiDAR images to produce control actions. Given a workspace that is characterized by a set of polytopic obstacles, our objective is to compute the set of safe initial conditions such that a robot trajectory starting from these initial conditions is guaranteed to avoid the obstacles. Our approach is to construct a finite state abstraction of the system and use standard reachability analysis over the finite state abstraction to compute the set of safe initial states. The first technical problem in computing the finite state abstraction is to mathematically model the imaging function that maps the robot position to the LiDAR image. To that end, we introduce the notion of imaging-adapted sets as partitions of the workspace in which the imaging function is guaranteed to be affine. We develop a polynomial-time algorithm to partition the workspace into imaging-adapted sets along with computing the corresponding affine imaging functions. Given this workspace partitioning, discrete-time linear dynamics of the robot, and a pre-trained NN controller with Rectified Linear Unit (ReLU) nonlinearity, the second technical challenge is to analyze the behavior of the neural network. To that end, we utilize a Satisfiability Modulo Convex (SMC) encoding to enumerate all the possible segments of different ReLUs. SMC solvers then use a Boolean satisfiability solver and a convex programming solver to decompose the problem into smaller subproblems. To accelerate this process, we develop a pre-processing algorithm that rapidly prunes the space of feasible ReLU segments. Finally, we demonstrate the efficiency of the proposed algorithms using numerical simulations with increasing complexity of the neural network controller.
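The ReLU "segments" enumerated by the SMC encoding correspond to each hidden unit being either active or inactive. Once that activation pattern is fixed, the network is an affine function of its input on the corresponding region. The Python sketch below, for a hypothetical single-hidden-layer network with made-up weights, computes the pattern at a point and the affine map y = A x + c valid on that pattern's region; it does not implement the SMC encoding or the pruning algorithm.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def matvec(W: Matrix, x: Sequence[float]) -> List[float]:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def forward(W1: Matrix, b1: List[float], W2: Matrix, b2: List[float],
            x: Sequence[float]) -> Tuple[List[float], List[int]]:
    """Network output and the ReLU activation pattern (1 = active) at input x."""
    pre = [p + b for p, b in zip(matvec(W1, x), b1)]
    hidden = [max(p, 0.0) for p in pre]
    out = [o + b for o, b in zip(matvec(W2, hidden), b2)]
    return out, [1 if p > 0 else 0 for p in pre]


def local_affine(W1: Matrix, b1: List[float], W2: Matrix, b2: List[float],
                 pattern: List[int]) -> Tuple[Matrix, List[float]]:
    """A and c with y = A x + c on the region where the hidden ReLUs follow `pattern`."""
    # Inactive units contribute nothing: A = W2 diag(pattern) W1, c = W2 diag(pattern) b1 + b2.
    n_in, n_hidden = len(W1[0]), len(W1)
    A = [[sum(W2[o][j] * pattern[j] * W1[j][i] for j in range(n_hidden)) for i in range(n_in)]
         for o in range(len(W2))]
    c = [sum(W2[o][j] * pattern[j] * b1[j] for j in range(n_hidden)) + b2[o]
         for o in range(len(W2))]
    return A, c


W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
b1 = [0.0, -1.0, 0.5]
W2 = [[1.0, -2.0, 0.5]]
b2 = [0.25]
x = [2.0, 0.5]
y, pattern = forward(W1, b1, W2, b2, x)
A, c = local_affine(W1, b1, W2, b2, pattern)
print(pattern, y, A, c)  # [1, 0, 1] [3.75] [[1.5, 0.5]] [0.5]
```

A network with h hidden ReLUs has up to 2^h such patterns, which is why pruning infeasible segments before solving pays off.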
