Vinay Kyatham

Target-Independent Domain Adaptation for WBC Classification using Generative Latent Search

May 11, 2020

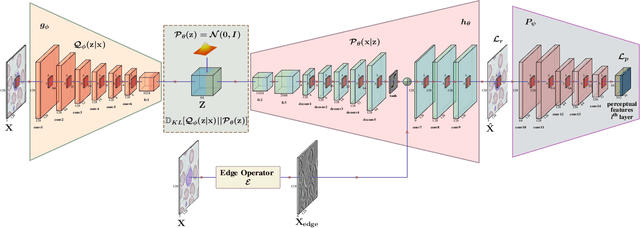

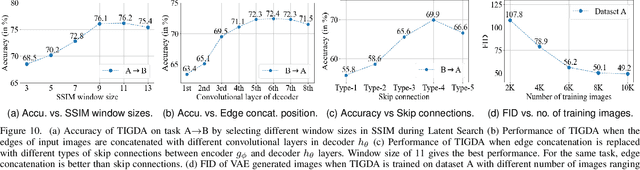

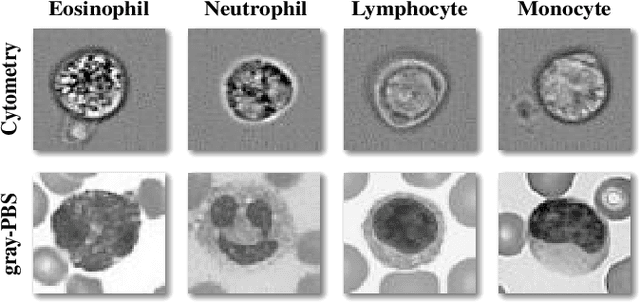

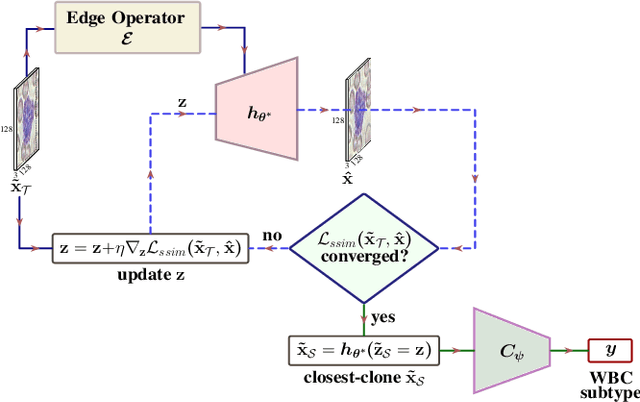

Abstract:Automating the classification of camera-obtained microscopic images of White Blood Cells (WBCs) and related cell subtypes has assumed importance since it aids the laborious manual process of review and diagnosis. Several State-Of-The-Art (SOTA) methods developed using Deep Convolutional Neural Networks suffer from the problem of domain shift - severe performance degradation when they are tested on data (target) obtained in a setting different from that of the training (source). The change in the target data might be caused by factors such as differences in camera/microscope types, lenses, lighting-conditions etc. This problem can potentially be solved using Unsupervised Domain Adaptation (UDA) techniques albeit standard algorithms presuppose the existence of a sufficient amount of unlabelled target data which is not always the case with medical images. In this paper, we propose a method for UDA that is devoid of the need for target data. Given a test image from the target data, we obtain its 'closest-clone' from the source data that is used as a proxy in the classifier. We prove the existence of such a clone given that infinite number of data points can be sampled from the source distribution. We propose a method in which a latent-variable generative model based on variational inference is used to simultaneously sample and find the 'closest-clone' from the source distribution through an optimization procedure in the latent space. We demonstrate the efficacy of the proposed method over several SOTA UDA methods for WBC classification on datasets captured using different imaging modalities under multiple settings.

Variational Inference with Latent Space Quantization for Adversarial Resilience

Mar 24, 2019

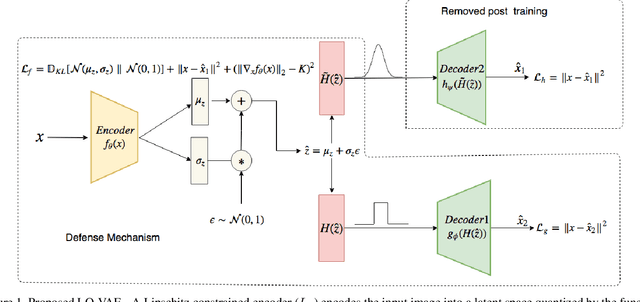

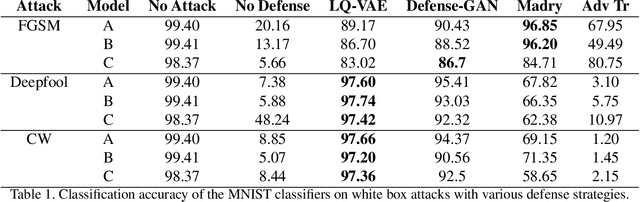

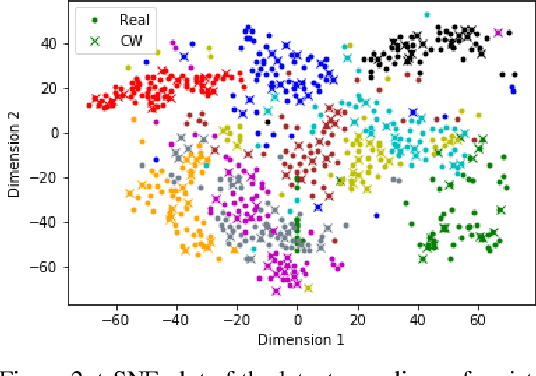

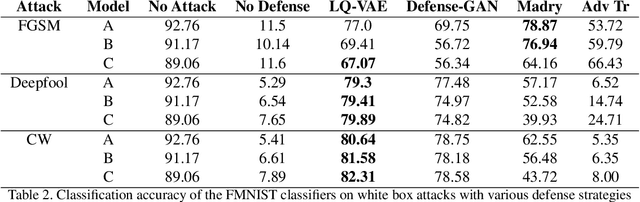

Abstract:Despite their tremendous success in modelling high-dimensional data manifolds, deep neural networks suffer from the threat of adversarial attacks - Existence of perceptually valid input-like samples obtained through careful perturbations that leads to degradation in the performance of underlying model. Major concerns with existing defense mechanisms include non-generalizability across different attacks, models and large inference time. In this paper, we propose a generalized defense mechanism capitalizing on the expressive power of regularized latent space based generative models. We design an adversarial filter, devoid of access to classifier and adversaries, which makes it usable in tandem with any classifier. The basic idea is to learn a Lipschitz constrained mapping from the data manifold, incorporating adversarial perturbations, to a quantized latent space and re-map it to the true data manifold. Specifically, we simultaneously auto-encode the data manifold and its perturbations implicitly through the perturbations of the regularized and quantized generative latent space, realized using variational inference. We demonstrate the efficacy of the proposed formulation in providing the resilience against multiple attack types (Black and white box) and methods, while being almost real-time. Our experiments show that the proposed method surpasses the state-of-the-art techniques in several cases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge