Sonja Adebahr

Measuring breathing induced oesophageal motion and its dosimetric impact

Oct 19, 2020

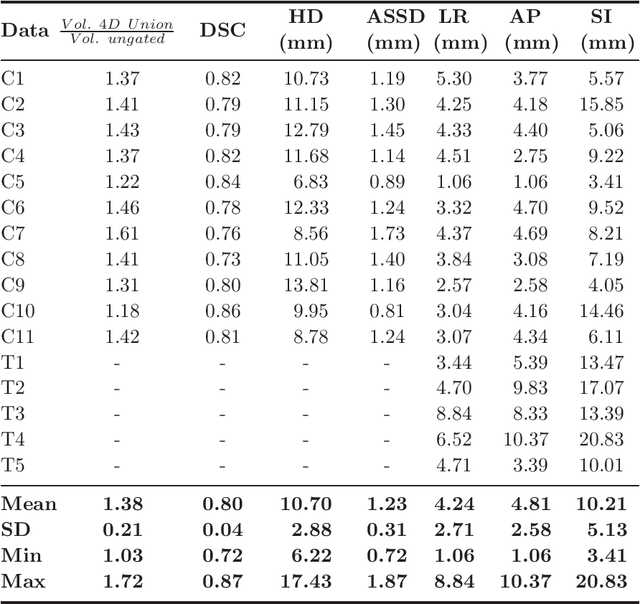

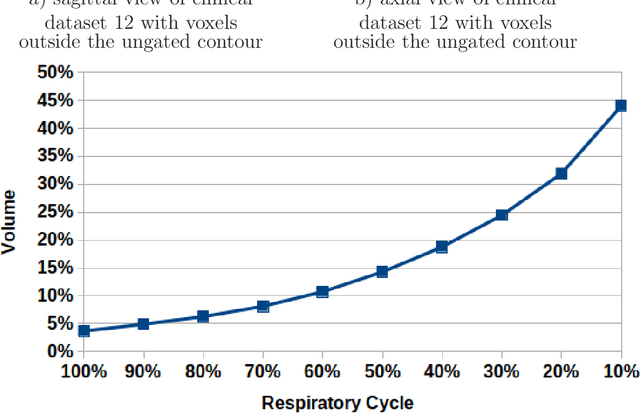

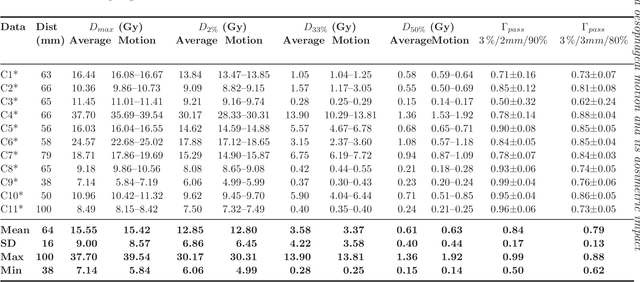

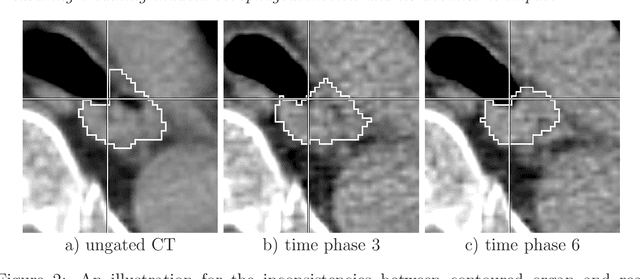

Abstract: Stereotactic body radiation therapy allows for precise and accurate dose delivery. Organ motion during treatment bears the risk of undetected high-dose exposure of healthy tissue. One organ that is very susceptible to high dose is the oesophagus. Its low contrast on CT and its oblong shape render motion estimation difficult. We tackle this issue with modern algorithms that measure oesophageal motion voxel-wise and estimate the motion-related dosimetric impact. Oesophageal motion was measured using deformable image registration and 4DCT of 11 internal and 5 public datasets. The current clinical practice of contouring the organ on 3DCT was compared to time-resolved 4DCT contours. The dosimetric impact of the motion was estimated by analysing the trajectory of each voxel in the 4D dose distribution. Finally, an organ motion model was built, allowing for easier patient-wise comparisons. Motion analysis showed mean absolute maximal motion amplitudes of 4.24 +/- 2.71 mm left-right, 4.81 +/- 2.58 mm anterior-posterior and 10.21 +/- 5.13 mm superior-inferior. Motion differed significantly between the cohorts. In around 50 % of the cases the dosimetric passing criteria were violated. Contours created on 3DCT did not cover 14 % of the organ for 50 % of the respiratory cycle, and the 3D contour was around 38 % smaller than the union of all 4D contours. The motion model revealed that the maximal motion is not limited to the lower part of the organ. Our results showed motion amplitudes higher than most values reported in the literature and that motion is very heterogeneous across patients. Therefore, individual motion information should be considered in contouring and planning.
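To illustrate how a voxel-wise motion amplitude of the kind reported above could be derived from registration output, here is a minimal sketch. It assumes the deformable registration yields, for each respiratory phase, a displacement in mm of every organ voxel relative to a reference phase, and it takes "maximal amplitude" to mean the peak-to-peak range per axis. The abstract does not spell out either detail, so the function names, the array layout and this definition are assumptions, not the authors' implementation.

```python
import numpy as np


def max_motion_amplitude(displacements_mm):
    """Peak-to-peak motion amplitude per voxel and axis.

    displacements_mm: array of shape (n_phases, n_voxels, 3) holding the
    displacement of each voxel relative to a reference phase, in mm.
    The axis order (left-right, anterior-posterior, superior-inferior)
    is an assumption made for this sketch.
    Returns an array of shape (n_voxels, 3).
    """
    d = np.asarray(displacements_mm, dtype=float)
    return d.max(axis=0) - d.min(axis=0)


def summarize_amplitudes(amplitudes_mm):
    """Mean and standard deviation of the amplitudes along each axis."""
    a = np.asarray(amplitudes_mm, dtype=float)
    return a.mean(axis=0), a.std(axis=0)


if __name__ == "__main__":
    # Toy data: 3 respiratory phases, 2 voxels, 3 axes (values in mm).
    toy = np.array([
        [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
        [[1.0, -2.0, 5.0], [0.0, 1.0, 10.0]],
        [[-1.0, 2.0, -5.0], [0.0, 0.0, 0.0]],
    ])
    amplitudes = max_motion_amplitude(toy)
    mean, std = summarize_amplitudes(amplitudes)
    print("per-voxel amplitudes (mm):", amplitudes)
    print("mean per axis (mm):", mean)
```

A peak-to-peak range is only one possible choice; the maximal excursion from a reference phase would be another, and it would give different numbers for asymmetric motion.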

A 3D fully convolutional neural network and a random walker to segment the esophagus in CT

Apr 21, 2017

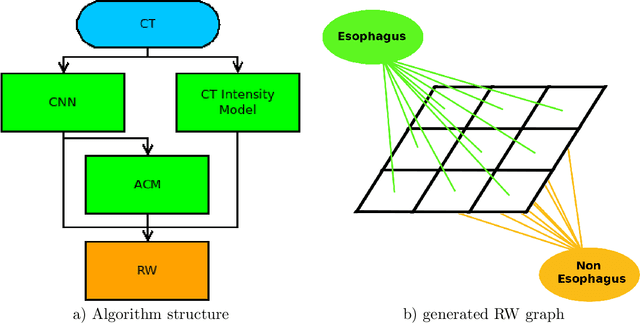

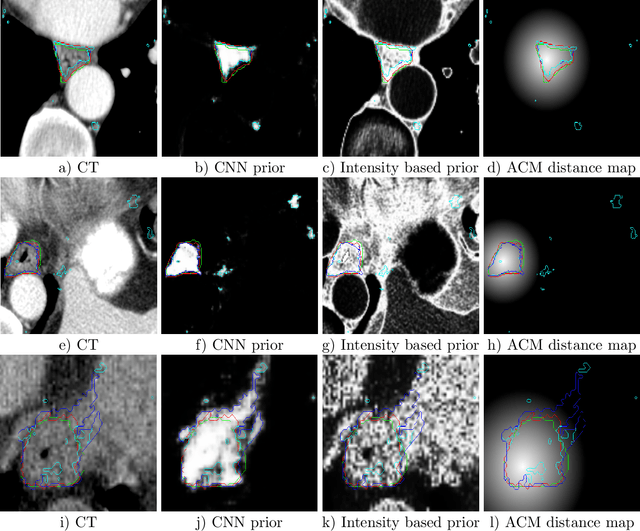

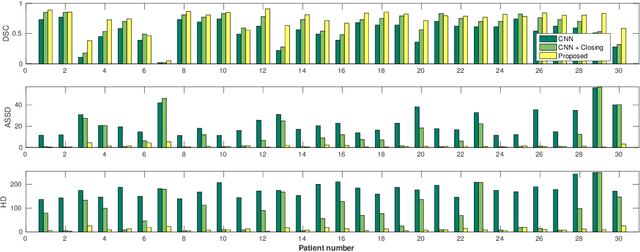

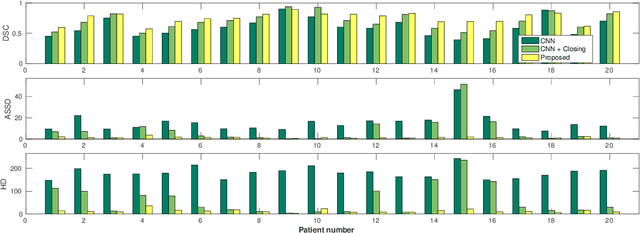

Abstract: Precise delineation of organs at risk (OAR) is a crucial task in radiotherapy treatment planning, which aims at delivering a high dose to the tumour while sparing healthy tissues. In recent years, algorithms have shown high performance and the possibility of automating this task for many OAR. However, for some OAR precise delineation remains challenging. The esophagus, with its versatile shape and poor contrast, is among these structures. To tackle these issues we propose a 3D fully convolutional neural network (CNN) driven random walk (RW) approach to automatically segment the esophagus on CT. First, a soft probability map is generated by the CNN. Then an active contour model (ACM) is fitted to the probability map to get a first estimate of the center line. The outputs of the CNN and ACM are then used in addition to CT Hounsfield values to drive the RW. Evaluation and training were done on 50 CTs with peer-reviewed esophagus contours. Results were assessed regarding spatial overlap and shape similarities. The generated contours showed a mean Dice coefficient of 0.76, an average symmetric square distance of 1.36 mm and an average Hausdorff distance of 11.68 compared to the reference. These figures translate into a very good agreement with the reference contours and an increase in accuracy compared to other methods. We show that by employing a CNN, accurate estimates of the esophagus location can be obtained and then refined by a post-processing RW step. One of the main advantages compared to previous methods is that our network performs convolutions in a 3D manner, fully exploiting the 3D spatial context and performing an efficient and precise volume-wise prediction. The whole segmentation process is fully automatic and yields esophagus delineations in very good agreement with the gold standard used, showing that it can compete with previously published methods.
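For readers unfamiliar with the spatial overlap measure quoted above, the Dice coefficient between two binary masks A and B is 2|A ∩ B| / (|A| + |B|). The following is a minimal sketch of that standard formula; the function name and the convention of returning 1.0 for two empty masks are assumptions made here, not details taken from the paper.

```python
import numpy as np


def dice_coefficient(mask_a, mask_b):
    """Dice overlap between two binary masks of identical shape.

    Returns a value in [0, 1]; 1 means perfect overlap.
    Two empty masks are treated as a perfect match (an assumption).
    """
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    if a.shape != b.shape:
        raise ValueError("masks must have the same shape")
    denom = a.sum() + b.sum()
    if denom == 0:
        return 1.0
    return 2.0 * np.logical_and(a, b).sum() / denom


if __name__ == "__main__":
    # Toy 1D example: the masks share one of their two foreground voxels each.
    predicted = np.array([1, 1, 0, 0])
    reference = np.array([1, 0, 1, 0])
    print(dice_coefficient(predicted, reference))
```

The same function works unchanged on 3D volumes, since it only counts foreground voxels and their intersection.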
