Simone Antonelli

Predicting Channel Closures in the Lightning Network with Machine Learning

May 12, 2026

Abstract: The Lightning Network (LN) is a second-layer protocol for Bitcoin designed to enable fast and cost-efficient off-chain transactions. Channels in the LN can be closed either by mutual agreement or unilaterally through a forced closure, which locks the involved capital for an extended period and degrades network reliability. In this paper, we study the problem of predicting channel closure types from publicly available gossip data, framing it as a temporal link classification task over the evolving channel graph. We construct a dataset spanning over two years of LN activity and benchmark a range of machine learning approaches, from MLPs to temporal graph neural networks and spectral encodings. Our experiments reveal that the dominant predictive signals are temporal and behavioural, namely how recently each endpoint was active and the per-node history of past closures, while the surrounding network topology provides no additional benefit. We find that a simple MLP operating on edge-level features, node-level event counts, and temporal patterns outperforms all graph-based approaches, and discuss how the inherent privacy of the LN, where critical information such as channel balances and payment flows remains hidden, fundamentally limits the predictability of closures from gossip data alone. We publicly release the dataset and code at https://github.com/AmbossTech/ln-channel-closure-prediction to encourage further research on this practically relevant task.

Metric Based Few-Shot Graph Classification

Jun 08, 2022

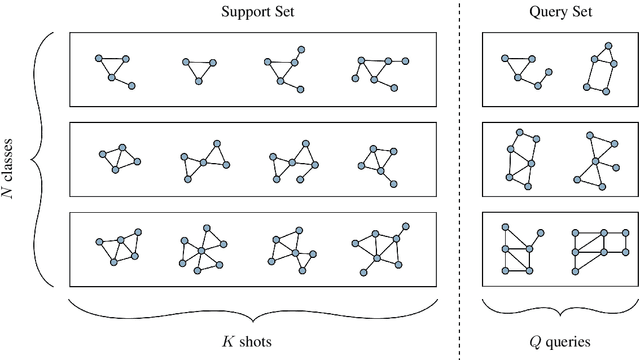

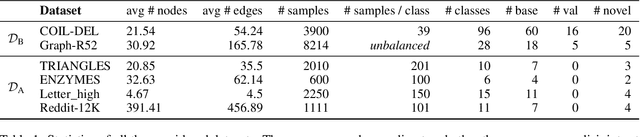

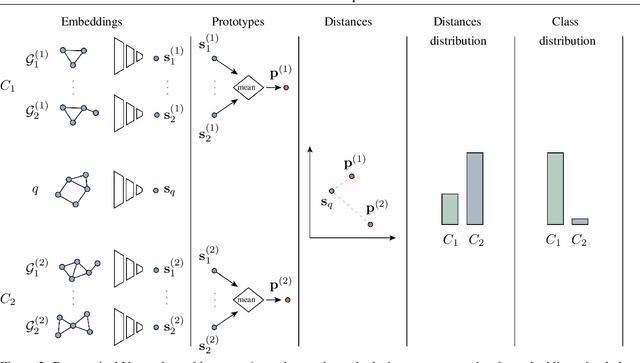

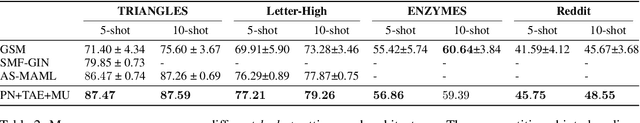

Abstract: Many modern deep-learning techniques do not work without enormous datasets. At the same time, several fields demand methods that work when data is scarce. This problem is even more complex when the samples have varying structures, as in the case of graphs. Graph representation learning techniques have recently proven successful in a variety of domains. Nevertheless, the employed architectures perform poorly when faced with data scarcity. On the other hand, few-shot learning allows modern deep learning models to be employed in scarce data regimes without sacrificing their effectiveness. In this work, we tackle the problem of few-shot graph classification, showing that equipping a simple distance metric learning baseline with a state-of-the-art graph embedder yields competitive results on the task. While the simplicity of the architecture is enough to outperform more complex ones, it also allows straightforward additions. To this end, we show that additional improvements may be obtained by encouraging a task-conditioned embedding space. Finally, we propose a MixUp-based online data augmentation technique acting in the latent space and show its effectiveness on the task.
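To make the two ideas named in the abstract more concrete, the following is a minimal sketch and not the paper's implementation. It assumes that graph embeddings have already been computed by some embedder, that the distance-metric baseline averages support embeddings into per-class prototypes and assigns a query to the nearest one (a prototypical-network-style choice that the abstract does not confirm), and that latent MixUp is a plain convex combination of two embeddings. The function names `prototypes`, `classify`, and `latent_mixup` are hypothetical.

```python
import math


def prototypes(embeddings, labels):
    """Average the support embeddings of each class into one prototype.

    Assumption: class prototypes are simple means of the support embeddings.
    """
    sums, counts = {}, {}
    for vec, lab in zip(embeddings, labels):
        acc = sums.setdefault(lab, [0.0] * len(vec))
        for i, v in enumerate(vec):
            acc[i] += v
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}


def classify(query, protos):
    """Assign the query embedding to the class with the nearest prototype
    under Euclidean distance (the metric is an assumption)."""
    return min(protos, key=lambda lab: math.dist(query, protos[lab]))


def latent_mixup(z_a, z_b, lam):
    """Convex combination of two latent vectors, with lam in [0, 1]."""
    return [lam * a + (1.0 - lam) * b for a, b in zip(z_a, z_b)]


# Toy 2-D "graph embeddings" for a 2-way, 2-shot episode (made-up values).
support = [[0.0, 0.0], [0.2, 0.0], [1.0, 1.0], [0.8, 1.0]]
support_labels = ["a", "a", "b", "b"]
protos = prototypes(support, support_labels)

predicted = classify([0.1, 0.1], protos)
mixed = latent_mixup([0.0, 0.0], [1.0, 1.0], lam=0.25)
```

In a training loop, the mixing weight would typically be sampled per pair (for example from a Beta distribution) and the labels mixed with the same weight; those details are left out here because the abstract does not specify them.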
