Rushit Dave

Enhancing Smart Farming Through Federated Learning: A Secure, Scalable, and Efficient Approach for AI-Driven Agriculture

Sep 15, 2025

Abstract:The agricultural sector is undergoing a transformation with the integration of advanced technologies, particularly in data-driven decision-making. This work proposes a federated learning framework for smart farming, aiming to develop a scalable, efficient, and secure solution for crop disease detection tailored to the environmental and operational conditions of Minnesota farms. By keeping sensitive farm data local and enabling collaborative model updates, the proposed framework seeks to achieve high accuracy in crop disease classification without compromising data privacy. We outline a methodology involving data collection from Minnesota farms, application of local deep learning algorithms, transfer learning, and a central aggregation server for model refinement. In future implementations, the aim is to achieve improved accuracy in disease detection, good generalization across agricultural scenarios, lower communication and training costs, and earlier identification of and intervention against diseases. These anticipated outcomes set the stage for empirical validation in subsequent studies. This work comes at a time when growing demand for data-driven insight in agriculture must be weighed against the privacy concerns of farms that are hesitant to share their operational data. A secure and efficient disease detection method is therefore important, as it could transform smart farming systems and address local agricultural problems while preserving data confidentiality. In doing so, this paper bridges the gap between advanced machine learning techniques and the practical, privacy-sensitive needs of farmers in Minnesota and beyond, leveraging the benefits of federated learning.
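The abstract above describes farms training locally and a central server aggregating their model updates, but it does not name the aggregation rule. As a minimal sketch, assuming the server uses sample-weighted averaging in the style of FedAvg (an assumption, not something the abstract states), the aggregation step could look like this. The function name `federated_average` and the flat list-of-floats representation of model weights are hypothetical simplifications.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Combine per-farm parameter vectors into one global vector.

    Assumption: FedAvg-style weighting, where each farm's update counts
    in proportion to the number of local training samples it used.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client update")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all client updates must have the same length")
    # Weighted mean, computed coordinate by coordinate.
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


if __name__ == "__main__":
    # Two hypothetical farms: the second trained on three times as much data.
    farm_updates = [[1.0, 2.0], [3.0, 4.0]]
    farm_sizes = [1, 3]
    print(federated_average(farm_updates, farm_sizes))  # [2.5, 3.5]
```

Only the parameter vectors and sample counts reach the server in this sketch; the raw farm images never leave the client, which is the privacy property the abstract emphasizes.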

From Clicks to Security: Investigating Continuous Authentication via Mouse Dynamics

Mar 06, 2024

Abstract:In the realm of computer security, the importance of efficient and reliable user authentication methods has become increasingly critical. This paper examines the potential of mouse movement dynamics as a consistent metric for continuous authentication. By analyzing user mouse movement patterns in two contrasting gaming scenarios, "Team Fortress" and "Poly Bridge", we investigate the distinctive behavioral patterns inherent in high-intensity and low-intensity UI interactions. The study extends beyond conventional methodologies by employing a range of machine learning models. These models are carefully selected to assess their effectiveness in capturing and interpreting the subtleties of user behavior as reflected in their mouse movements. This multifaceted approach allows for a more nuanced and comprehensive understanding of user interaction patterns. Our findings reveal that mouse movement dynamics can serve as a reliable indicator for continuous user authentication. The diverse machine learning models employed in this study demonstrate competent performance in user verification, marking an improvement over previous methods used in this field. This research contributes to the ongoing efforts to enhance computer security and highlights the potential of leveraging user behavior, specifically mouse dynamics, in developing robust authentication systems.

Your device may know you better than you know yourself -- continuous authentication on novel dataset using machine learning

Mar 06, 2024

Abstract:This research aims to further understanding in the field of continuous authentication using behavioral biometrics. We contribute a novel dataset that encompasses the gesture data of 15 users playing Minecraft on a Samsung tablet, each for a duration of 15 minutes. Using this dataset, we employed machine learning (ML) binary classifiers, namely Random Forest (RF), K-Nearest Neighbors (KNN), and Support Vector Classifier (SVC), to determine the authenticity of specific user actions. Our most robust model was SVC, which achieved an average accuracy of approximately 90%, demonstrating that touch dynamics can effectively distinguish users. However, further studies are needed to make it a viable option for authentication systems.
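To illustrate what a binary classifier means in this setting, the sketch below relabels gestures as genuine (1) or impostor (0) for one target user and applies a small K-Nearest Neighbors vote. KNN is chosen only because it is the simplest of the three classifiers named above to write out in full. The two-dimensional feature vectors, the user identifiers, and the helper names `binary_labels` and `knn_predict` are hypothetical and are not taken from the dataset or the paper.

```python
from collections import Counter
from math import dist
from typing import List, Sequence, Tuple

Feature = Tuple[float, ...]


def binary_labels(user_ids: Sequence[str], target_user: str) -> List[int]:
    """Label each sample 1 if it belongs to the target user, else 0."""
    return [1 if u == target_user else 0 for u in user_ids]


def knn_predict(train_x: Sequence[Feature], train_y: Sequence[int],
                x: Feature, k: int = 3) -> int:
    """Majority vote among the k training samples closest to x."""
    nearest = sorted(zip(train_x, train_y), key=lambda p: dist(p[0], x))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


if __name__ == "__main__":
    # Hypothetical gesture features, e.g. (mean swipe speed, mean pressure).
    features = [(0.1, 0.2), (0.0, 0.1), (0.2, 0.0),
                (5.0, 5.1), (5.2, 4.9), (4.8, 5.0)]
    users = ["a", "a", "a", "b", "b", "b"]
    labels = binary_labels(users, target_user="a")
    print(knn_predict(features, labels, (0.1, 0.1)))  # 1 (accepted as user "a")
    print(knn_predict(features, labels, (5.0, 5.0)))  # 0 (rejected)
```

In a per-user scheme like this, one such classifier would be trained for each enrolled user, and accuracy would then be averaged across users.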

Hybrid Deepfake Detection Utilizing MLP and LSTM

Apr 21, 2023

Abstract:Society's reliance on social media for authentic information has only increased over the past years, which in turn has raised the potential consequences of the spread of misinformation. One increasingly popular method of deceiving users is the deepfake. A deepfake is an invention of the latest technological advancements that enables nefarious online users to replace their face with a computer-generated, synthetic face of any of numerous powerful members of society. Deepfake images and videos now provide the means to mimic important political and cultural figures in order to spread massive amounts of false information. Models that can detect these deepfakes and prevent the spread of misinformation are now of tremendous necessity. In this paper, we propose a new deepfake detection schema utilizing two deep learning algorithms: long short-term memory and multilayer perceptron. We evaluate our model on a publicly available dataset named 140k Real and Fake Faces to detect images altered by a deepfake, achieving accuracies as high as 74.7%.

Leveraging Deep Learning Approaches for Deepfake Detection: A Review

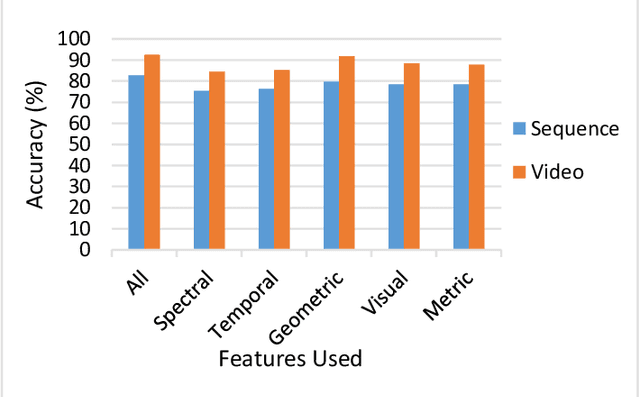

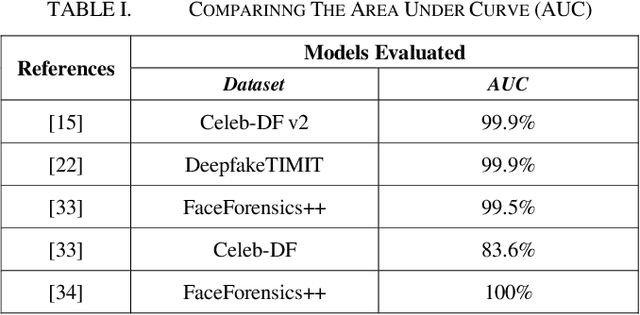

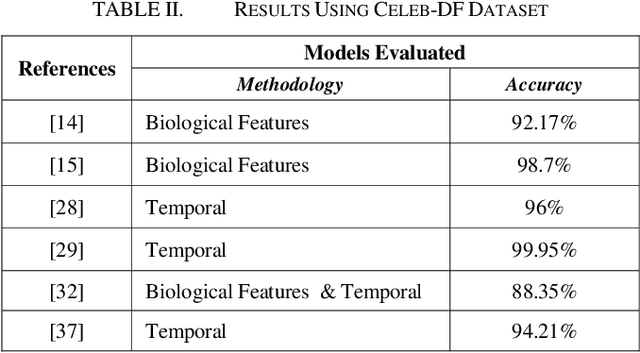

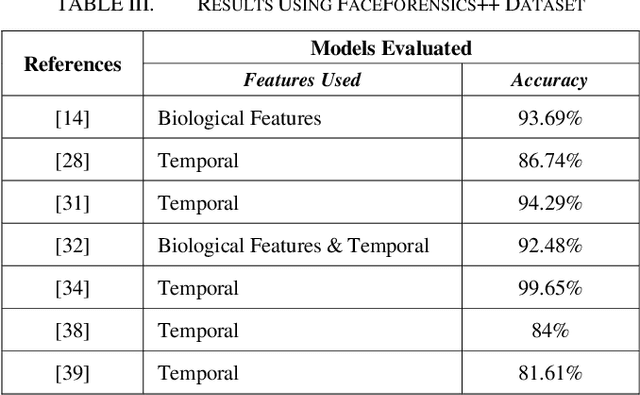

Apr 04, 2023

Abstract:Conspicuous progress in the fields of machine learning and deep learning has led to a surge of highly realistic fake media, often referred to as deepfakes. Deepfakes are fabricated media generated by sophisticated AI that are at times very difficult to tell apart from real media. Because such media can be uploaded to various social media platforms, spreading it to the world has become easy, which calls for an efficacious countermeasure. One promising countermeasure is deepfake detection. To address this threat, researchers have created models that detect deepfakes using ML/DL techniques such as Convolutional Neural Networks. This paper explores different methodologies with the intention of achieving a cost-effective model with higher accuracy across different types of datasets, in order to address generalizability across datasets.

Machine Learning Based Approach to Recommend MITRE ATT&CK Framework for Software Requirements and Design Specifications

Feb 10, 2023

Abstract:Engineering more secure software has become a critical challenge in the cyber world. It is very important to develop methodologies, techniques, and tools for developing secure software. To develop secure software, software developers need to think like an attacker, which can be supported by mining software repositories. Such mining aims to analyze and understand the data repositories related to software development. The main goal is to use these software repositories to support the decision-making process of software development. There are different vulnerability databases, such as Common Weakness Enumeration (CWE), Common Vulnerabilities and Exposures (CVE), and CAPEC. We utilized a database called MITRE. MITRE ATT&CK tactics and techniques have been used in various ways and methods, but tools for utilizing these tactics and techniques in the early stages of the software development life cycle (SDLC) are lacking. In this paper, we use machine learning algorithms to map requirements to the MITRE ATT&CK database and determine the accuracy of each mapping depending on the data split.

Using Deep Learning to Detecting Deepfakes

Jul 27, 2022

Abstract:In recent years, social media has grown to become a major source of information for many online users. This has given rise to the spread of misinformation through deepfakes. Deepfakes are videos or images that replace one person's face with another, computer-generated face, often that of a more recognizable person in society. With recent advances in technology, a person with little technological experience can generate these videos. This enables them to mimic a powerful figure in society, such as a president or celebrity, creating the potential danger of spreading misinformation and other nefarious uses of deepfakes. To combat this online threat, researchers have developed models that are designed to detect deepfakes. This study looks at various deepfake detection models that use deep learning algorithms to combat this looming threat. This survey focuses on providing a comprehensive overview of the current state of deepfake detection models and the unique approaches many researchers take to solving this problem. The benefits, limitations, and suggestions for future work are discussed throughout this paper.

Mitigating Presentation Attack using DCGAN and Deep CNN

Jun 22, 2022

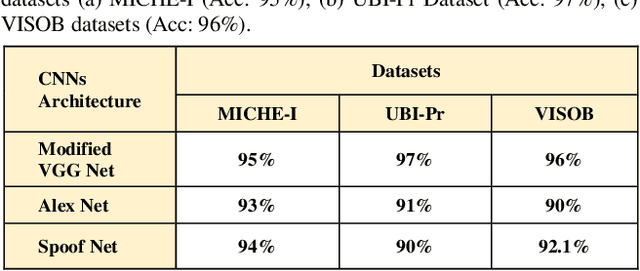

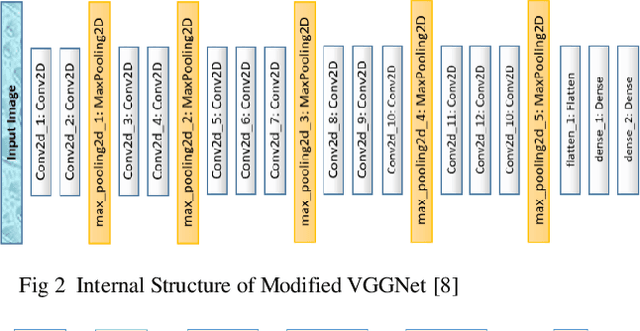

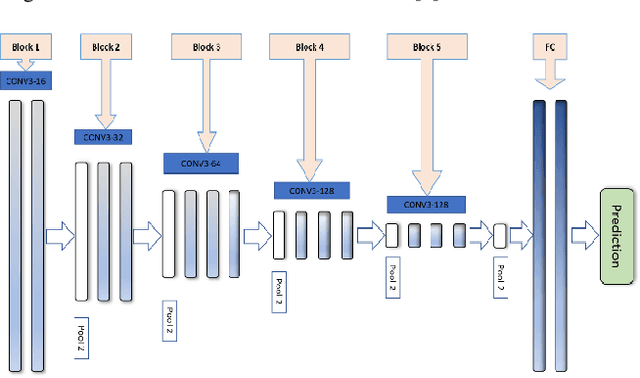

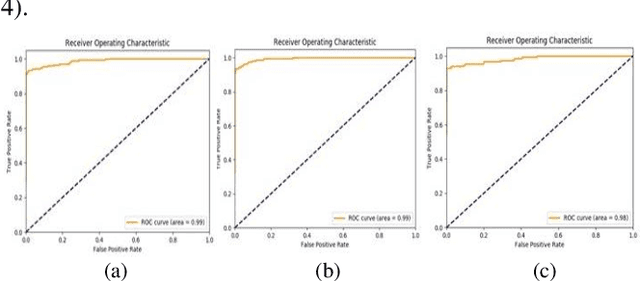

Abstract:Biometric-based authentication currently plays an essential role compared with conventional authentication systems; however, the risk of presentation attacks is rising as a result. Our research aims to identify the areas where presentation attacks can be prevented even when adequate biometric image samples of users are limited. Our work focuses on generating photorealistic synthetic images from real image sets by implementing a Deep Convolutional Generative Adversarial Network (DCGAN). We implemented temporal and spatial augmentation during fake image generation. Our work detects presentation attacks on facial and iris images using our deep CNN, inspired by VGGNet [1]. We applied the deep neural network techniques to three different biometric image datasets, namely MICHE-I [2], VISOB [3], and UBIPr [4]. The datasets used in this research contain images captured in both controlled and uncontrolled environments, with different resolutions and sizes. We obtained the best test accuracy of 97% on the UBIPr [4] iris dataset. For the MICHE-I [2] and VISOB [3] datasets, we achieved test accuracies of 95% and 96%, respectively.

Machine and Deep Learning Applications to Mouse Dynamics for Continuous User Authentication

May 26, 2022

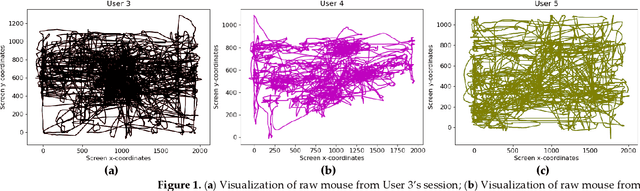

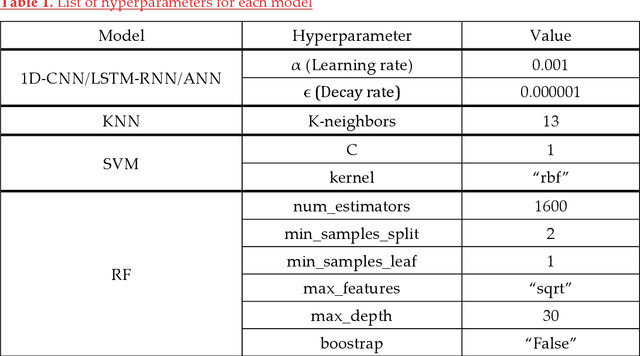

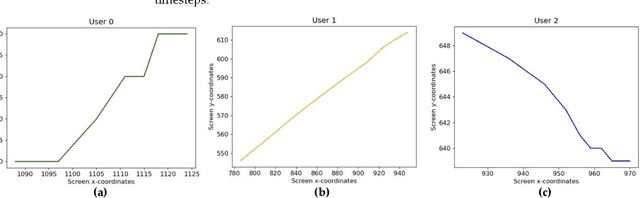

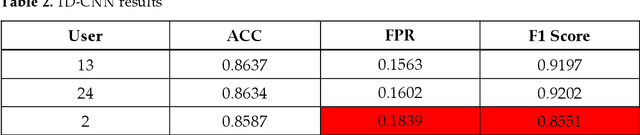

Abstract:Static authentication methods, like passwords, grow increasingly weak with advancements in technology and attack strategies. Continuous authentication has been proposed as a solution, in which users who have gained access to an account are still monitored in order to continuously verify that the user is not an imposter who has obtained the user's credentials. Mouse dynamics is the behavior of a user's mouse movements and is a biometric that has shown great promise for continuous authentication schemes. This article builds upon our previously published work by evaluating our dataset of 40 users using three machine learning and deep learning algorithms. Two evaluation scenarios are considered. Binary classifiers are used for user authentication, with the top performer being a 1-dimensional convolutional neural network with a peak average test accuracy of 85.73% across the top 10 users. Multi-class classification is also examined using an artificial neural network, which reaches an astounding peak accuracy of 92.48%, the highest accuracy we have seen for any classifier on this dataset.

Evaluation of a User Authentication Schema Using Behavioral Biometrics and Machine Learning

May 07, 2022

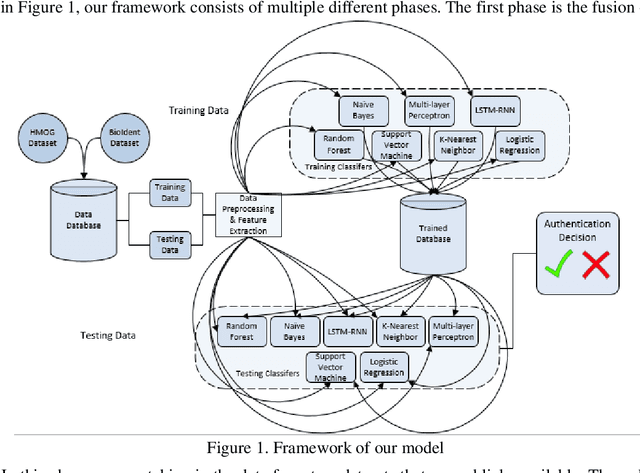

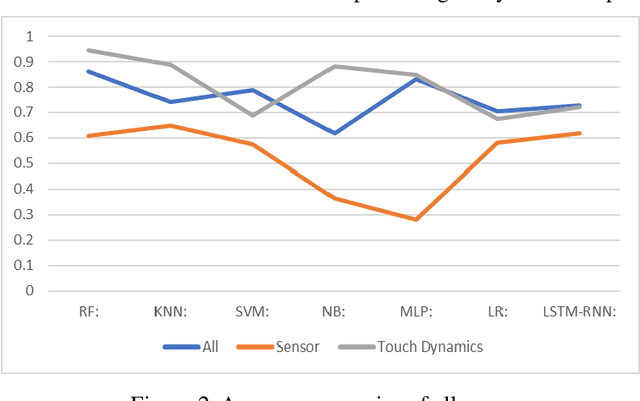

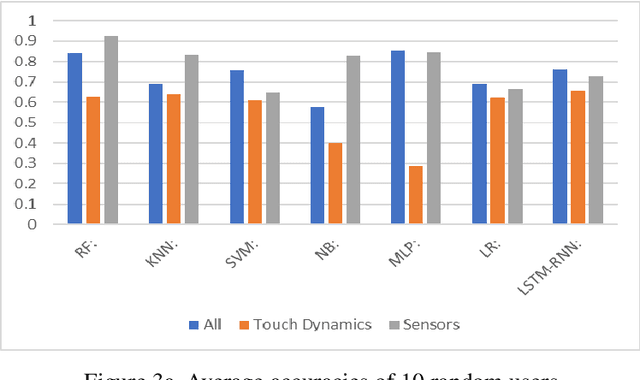

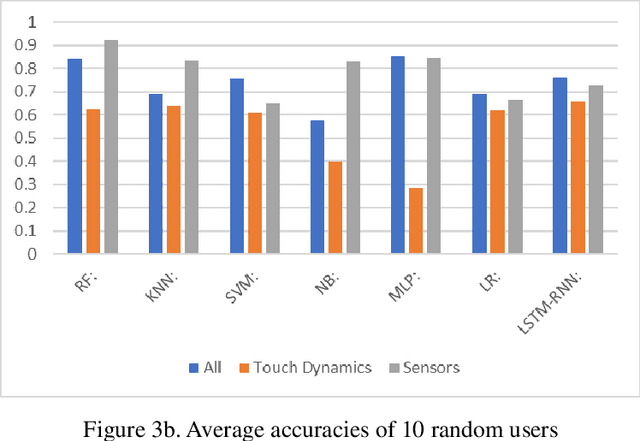

Abstract:The amount of secure data being stored on mobile devices has grown immensely in recent years. However, the security measures protecting this data have stayed static, with few improvements made to address the vulnerabilities of current authentication methods such as physiological biometrics or passwords. Behavioral biometrics has recently been researched as an alternative to these vulnerable authentication methods. In this study, we aim to contribute to the research on behavioral biometrics by creating and evaluating a user authentication scheme based on them. The behavioral biometrics used in this study include touch dynamics and phone movement, and we evaluate the performance of different single-modal and multi-modal combinations of the two biometrics. Using two publicly available datasets, BioIdent and Hand Movement Orientation and Grasp (H-MOG), this study evaluates the performance of seven common machine learning algorithms. The algorithms used in the evaluation include Random Forest, Support Vector Machine, K-Nearest Neighbor, Naive Bayes, Logistic Regression, Multilayer Perceptron, and Long Short-Term Memory Recurrent Neural Networks, with accuracy rates reaching as high as 86%.
