Ruiping Yin

AISA: Awakening Intrinsic Safety Awareness in Large Language Models against Jailbreak Attacks

Feb 14, 2026

Abstract: Large language models (LLMs) remain vulnerable to jailbreak prompts that elicit harmful or policy-violating outputs, while many existing defenses rely on expensive fine-tuning, intrusive prompt rewriting, or external guardrails that add latency and can degrade helpfulness. We present AISA, a lightweight, single-pass defense that activates safety behaviors already latent inside the model rather than treating safety as an add-on. AISA first localizes intrinsic safety awareness via spatiotemporal analysis and shows that intent-discriminative signals are broadly encoded, with especially strong separability appearing in the scaled dot-product outputs of specific attention heads near the final structural tokens before generation. Using a compact set of automatically selected heads, AISA extracts an interpretable prompt-risk score with minimal overhead, achieving detector-level performance competitive with strong proprietary baselines on small (7B) models. AISA then performs logits-level steering: it modulates the decoding distribution in proportion to the inferred risk, ranging from normal generation for benign prompts to calibrated refusal for high-risk requests -- without changing model parameters, adding auxiliary modules, or requiring multi-pass inference. Extensive experiments spanning 13 datasets, 12 LLMs, and 14 baselines demonstrate that AISA improves robustness and transfer while preserving utility and reducing false refusals, enabling safer deployment even for weakly aligned or intentionally risky model variants.

Advances and Challenges of Multi-task Learning Method in Recommender System: A Survey

May 23, 2023

Abstract: Multi-task learning has been widely applied in computer vision, natural language processing, and other fields, where it has achieved good performance. In recent years, a large body of work on multi-task learning for recommender systems has appeared, but no previous literature summarizes it. To bridge this gap, we provide a systematic literature survey of multi-task recommender systems, aiming to help researchers and practitioners quickly understand the current progress in this direction. In this survey, we first introduce the background and motivation of multi-task learning-based recommender systems. We then provide a taxonomy of multi-task learning-based recommendation methods according to the different stages of multi-task learning techniques, which include task relationship discovery, model architecture, and optimization strategy. Finally, we discuss applications and promising future directions in this area.

Improving Embedded Knowledge Graph Multi-hop Question Answering by introducing Relational Chain Reasoning

Oct 25, 2021

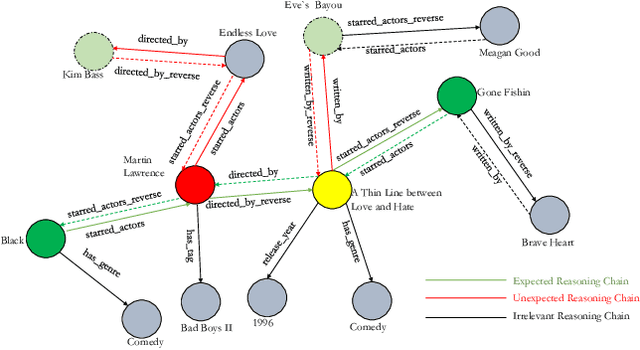

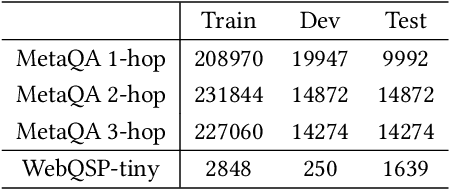

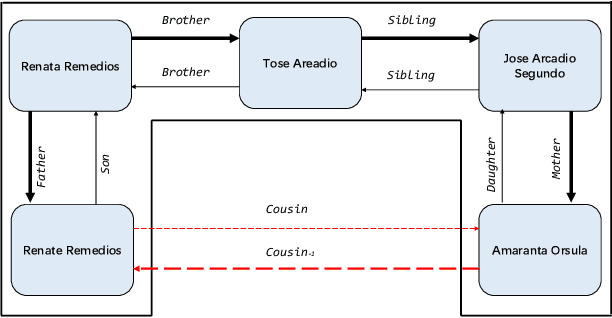

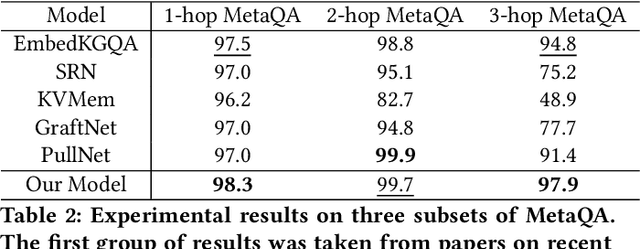

Abstract: Knowledge Base Question Answering (KBQA) aims to answer user questions from a knowledge base (KB) by identifying the reasoning relations between the topic entity and the answer. As a complex branch task of KBQA, multi-hop KGQA requires reasoning over multi-hop relational chains preserved in the KG to arrive at the right answer. Despite the successes made in recent years, existing works on answering multi-hop complex questions face the following challenges: i) suffering from poor performance due to the neglect of explicit relational chain order and its relational types reflected in user questions; ii) failing to consider implicit relations between the topic entity and the answer implied in the structured KG because of limited neighborhood size constraints in subgraph retrieval-based algorithms. To address these issues in multi-hop KGQA, we propose a novel model in this paper, namely Relational Chain-based Embedded KGQA (Rce-KGQA), which simultaneously utilizes the explicit relational chain described in natural language questions and the implicit relational chain stored in the structured KG. Our extensive empirical study on two open-domain benchmarks proves that our method significantly outperforms state-of-the-art counterparts such as GraftNet, PullNet, and EmbedKGQA. Comprehensive ablation experiments also verify the effectiveness of our method for multi-hop KGQA tasks. We have made our model's source code available on GitHub: https://github.com/albert-jin/Rce-KGQA.
