Rosalind Picard

Multi-task multiple kernel machines for personalized pain recognition from functional near-infrared spectroscopy brain signals

Aug 21, 2018

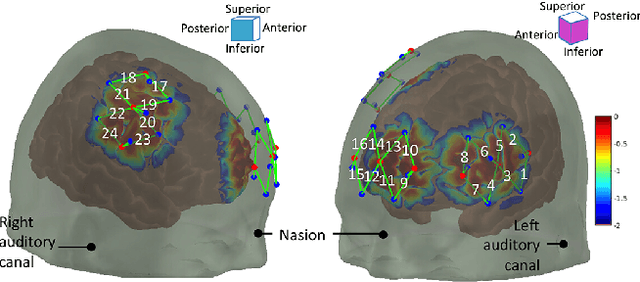

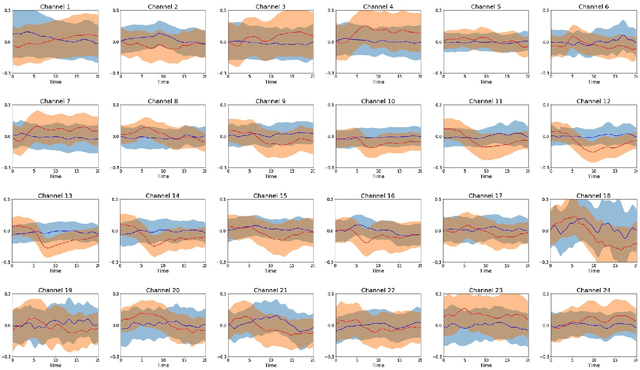

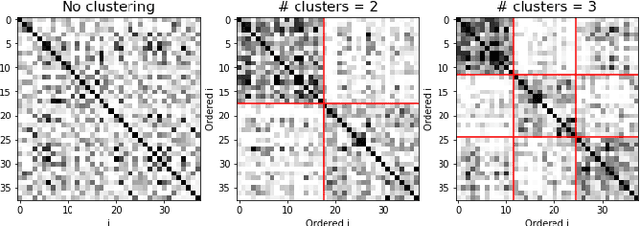

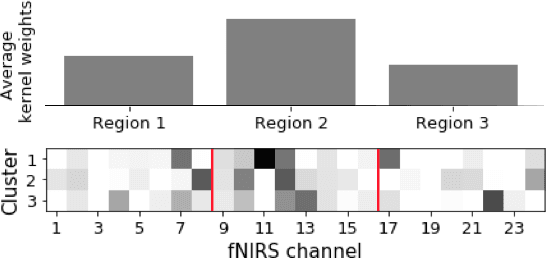

Abstract: Currently there is no validated objective measure of pain. Recent neuroimaging studies have explored the feasibility of using functional near-infrared spectroscopy (fNIRS) to measure alterations in brain function in evoked and ongoing pain. In this study, we applied multi-task machine learning methods to derive a practical algorithm for pain detection from fNIRS signals in healthy volunteers exposed to a painful stimulus. Specifically, we employed multi-task multiple kernel learning to account for the inter-subject variability in pain response. Our results support the use of fNIRS and machine learning techniques in developing objective pain detection, and also highlight the importance of adopting personalized analysis in the process.

Personalized Machine Learning for Robot Perception of Affect and Engagement in Autism Therapy

Jun 19, 2018

Abstract: Robots have great potential to facilitate future therapies for children on the autism spectrum. However, existing robots lack the ability to automatically perceive and respond to human affect, which is necessary for establishing and maintaining engaging interactions. Moreover, their inference challenge is made harder by the fact that many individuals with autism have atypical and unusually diverse styles of expressing their affective-cognitive states. To tackle the heterogeneity in behavioral cues of children with autism, we use the latest advances in deep learning to formulate a personalized machine learning (ML) framework for automatic perception of the children's affective states and engagement during robot-assisted autism therapy. The key to our approach is a novel shift from the traditional ML paradigm: instead of using 'one-size-fits-all' ML models, our personalized ML framework is optimized for each child by leveraging relevant contextual information (demographics and behavioral assessment scores) and individual characteristics of each child. We designed and evaluated this framework using a dataset of multi-modal audio, video and autonomic physiology data of 35 children with autism (ages 3-13) from 2 cultures (Asia and Europe), participating in a 25-minute child-robot interaction (~500k datapoints). Our experiments confirm the feasibility of the robot perception of affect and engagement, showing clear improvements due to the model personalization. The proposed approach has potential to improve existing therapies for autism by offering more efficient monitoring and summarization of the therapy progress.

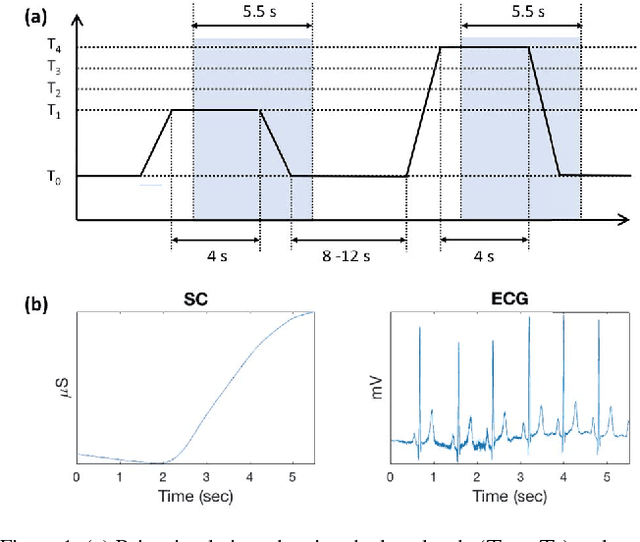

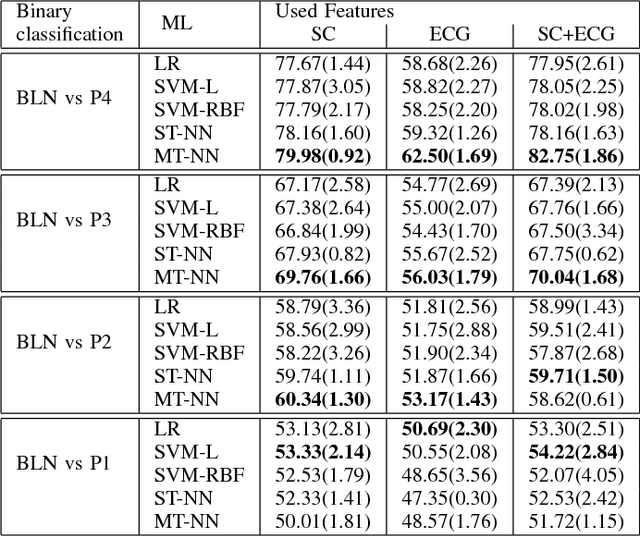

Physiological and behavioral profiling for nociceptive pain estimation using personalized multitask learning

Nov 10, 2017

Abstract: Pain is a subjective experience commonly measured through patient self-report. While there exist numerous situations in which automatic pain estimation methods may be preferred, inter-subject variability in physiological and behavioral pain responses has hindered the development of such methods. In this work, we address this problem by introducing a novel personalized multitask machine learning method for pain estimation based on individual physiological and behavioral pain response profiles, and show its advantages in a dataset containing multimodal responses to nociceptive heat pain.

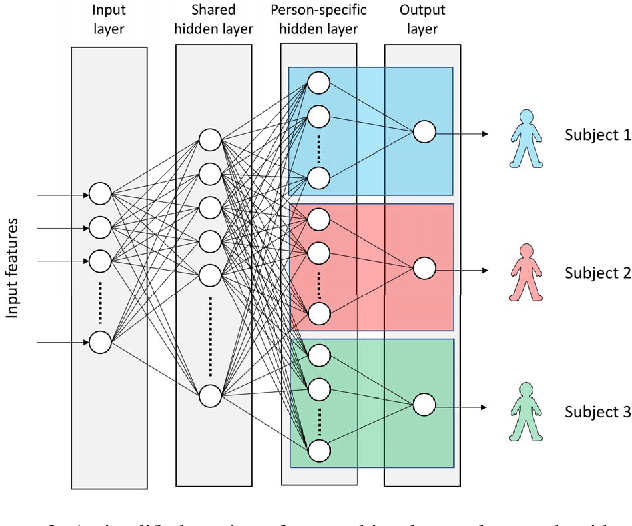

Multi-task Neural Networks for Personalized Pain Recognition from Physiological Signals

Sep 04, 2017

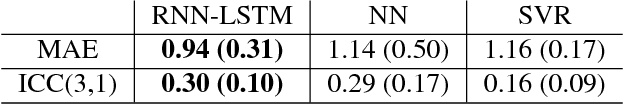

Abstract: Pain is a complex and subjective experience that poses a number of measurement challenges. While self-report by the patient is viewed as the gold standard of pain assessment, this approach fails when patients cannot verbally communicate pain intensity or lack normal mental abilities. Here, we present a pain intensity measurement method based on physiological signals. Specifically, we implement a multi-task learning approach based on neural networks that accounts for individual differences in pain responses while still leveraging data from across the population. We test our method in a dataset containing multi-modal physiological responses to nociceptive pain.

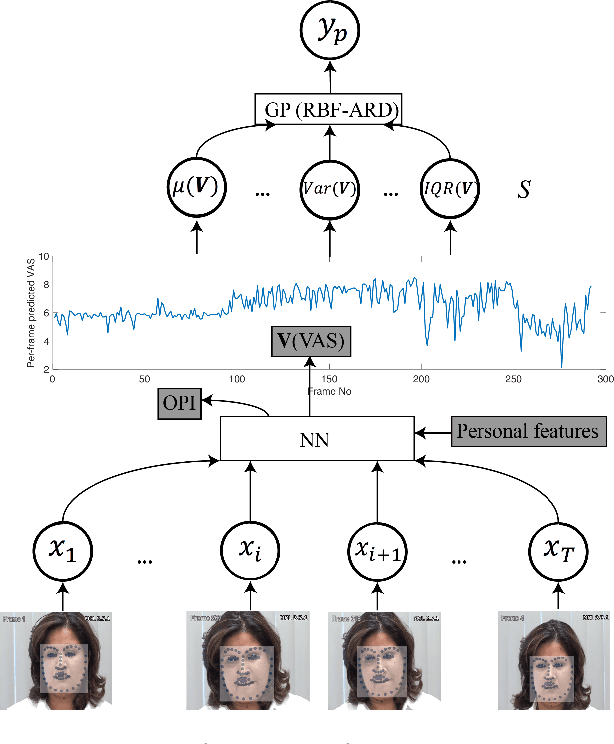

DeepFaceLIFT: Interpretable Personalized Models for Automatic Estimation of Self-Reported Pain

Aug 09, 2017

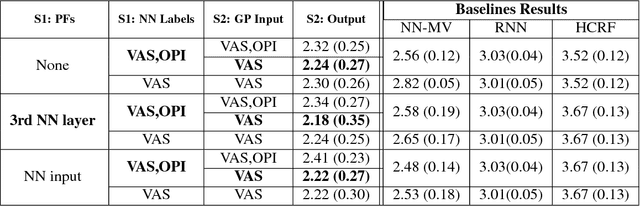

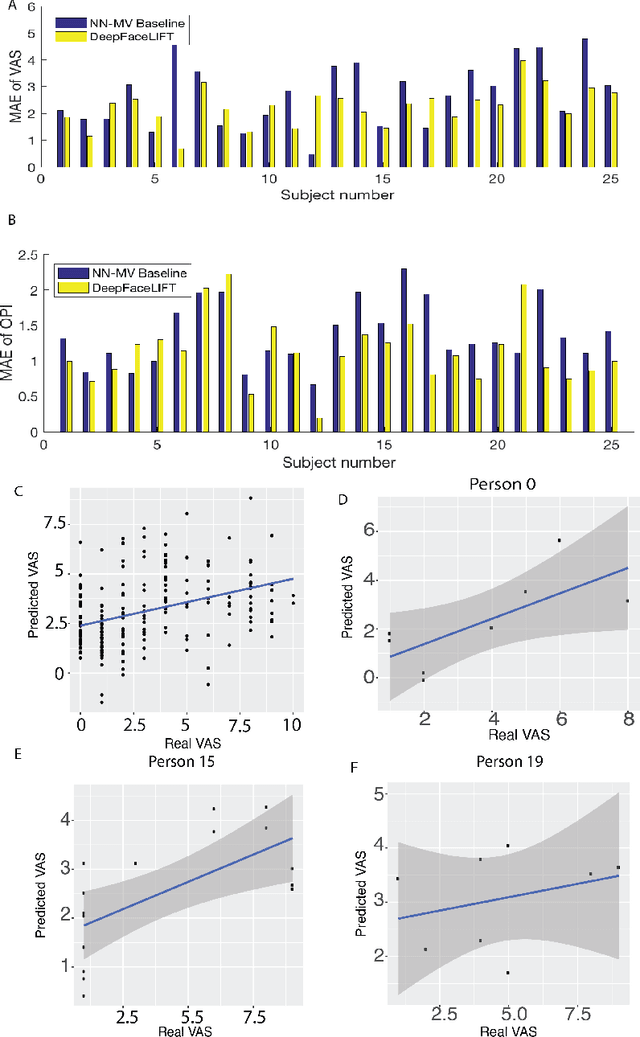

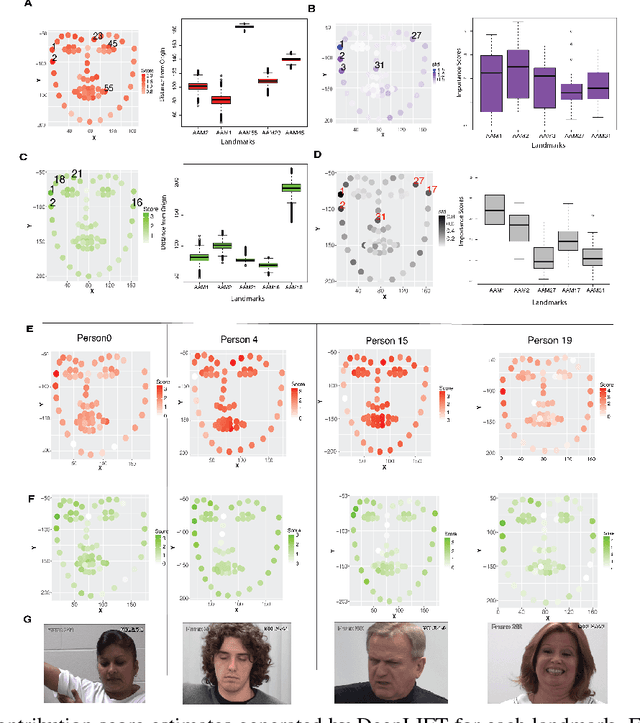

Abstract: Previous research on automatic pain estimation from facial expressions has focused primarily on "one-size-fits-all" metrics (such as PSPI). In this work, we focus on directly estimating each individual's self-reported visual-analog scale (VAS) pain metric, as this is considered the gold standard for pain measurement. The VAS pain score is highly subjective and context-dependent, and its range can vary significantly among different persons. To tackle these issues, we propose a novel two-stage personalized model, named DeepFaceLIFT, for automatic estimation of VAS. This model is based on (1) neural network and (2) Gaussian process regression models, and is used to personalize the estimation of self-reported pain via a set of hand-crafted personal features and multi-task learning. We show on the benchmark dataset for pain analysis (The UNBC-McMaster Shoulder Pain Expression Archive) that the proposed personalized model largely outperforms the traditional, unpersonalized models: the intra-class correlation improves from a baseline performance of 19% to a personalized performance of 35%, while also providing confidence in the model's estimates, in contrast to existing models for the target task. Additionally, DeepFaceLIFT automatically discovers the pain-relevant facial regions for each person, allowing for an easy interpretation of the pain-related facial cues.

Personalized Automatic Estimation of Self-reported Pain Intensity from Facial Expressions

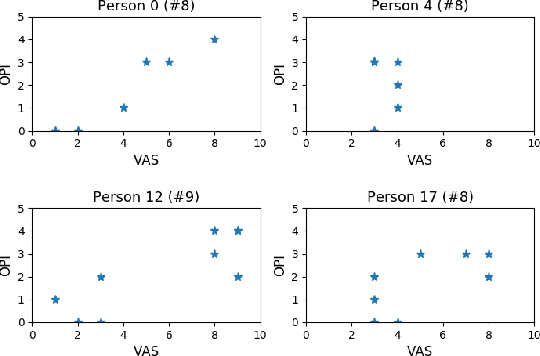

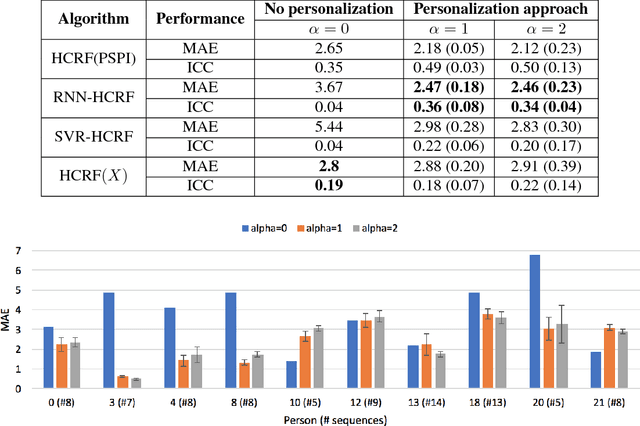

Jun 24, 2017

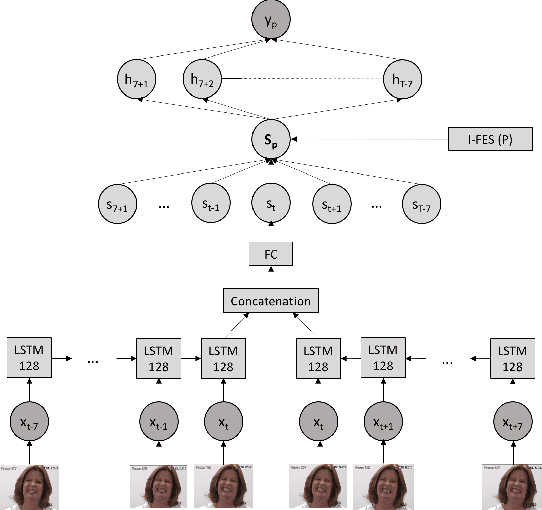

Abstract: Pain is a personal, subjective experience that is commonly evaluated through visual analog scales (VAS). While this is often convenient and useful, automatic pain detection systems can reduce pain score acquisition efforts in large-scale studies by estimating the score directly from the participants' facial expressions. In this paper, we propose a novel two-stage learning approach for VAS estimation: first, our algorithm employs Recurrent Neural Networks (RNNs) to automatically estimate Prkachin and Solomon Pain Intensity (PSPI) levels from face images. The estimated scores are then fed into personalized Hidden Conditional Random Fields (HCRFs), which estimate the VAS score provided by each person. Personalization of the model is performed using a newly introduced facial expressiveness score, unique for each person. To the best of our knowledge, this is the first approach to automatically estimate VAS from face images. We show the benefits of the proposed personalized approach over the traditional non-personalized approach on a benchmark dataset for pain analysis from face images.
