Raffael Kalisch

A statistical perspective on transformers for small longitudinal cohort data

Feb 18, 2026

Abstract:Modeling of longitudinal cohort data typically involves complex temporal dependencies between multiple variables. There, the transformer architecture, which has been highly successful in language and vision applications, allows us to account for the fact that the most recently observed time points in an individual's history may not always be the most important for the immediate future. This is achieved by assigning attention weights to observations of an individual based on a transformation of their values. One reason why these ideas have not yet been fully leveraged for longitudinal cohort data is that typically, large datasets are required. Therefore, we present a simplified transformer architecture that retains the core attention mechanism while reducing the number of parameters to be estimated, to be more suitable for small datasets with few time points. Guided by a statistical perspective on transformers, we use an autoregressive model as a starting point and incorporate attention as a kernel-based operation with temporal decay, where aggregation of multiple transformer heads, i.e. different candidate weighting schemes, is expressed as accumulating evidence on different types of underlying characteristics of individuals. This also enables a permutation-based statistical testing procedure for identifying contextual patterns. In a simulation study, the approach is shown to recover contextual dependencies even with a small number of individuals and time points. In an application to data from a resilience study, we identify temporal patterns in the dynamics of stress and mental health. This indicates that properly adapted transformers can not only achieve competitive predictive performance, but also uncover complex context dependencies in small data settings.
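To make the phrase "attention as a kernel-based operation with temporal decay" more concrete, the following is a minimal sketch and not the authors' implementation. It assumes a Gaussian kernel on the raw observation values (the abstract only mentions "a transformation of their values"), an exponential decay in the time gap, and a causal mask so that each time point attends only to itself and earlier ones. The function name `decayed_kernel_attention` and the parameters `bandwidth` and `decay` are hypothetical.

```python
import numpy as np

def decayed_kernel_attention(x, times, bandwidth=1.0, decay=0.5):
    """Kernel-style attention weights with temporal decay for one individual.

    x:     (T, p) array of observations over T time points.
    times: (T,) array of observation times, in increasing order.
    Returns the (T, T) weight matrix and the (T, p) weighted context.
    """
    x = np.asarray(x, dtype=float)
    times = np.asarray(times, dtype=float)
    # Squared Euclidean distance between every pair of observations
    # (assumption: identity transformation of the values).
    sq_dist = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1)
    # Time gap from each past time point (column) to the current one (row).
    gap = times[:, None] - times[None, :]
    # Log-weights: Gaussian kernel on values minus a linear penalty in the gap.
    log_w = -sq_dist / (2.0 * bandwidth**2) - decay * gap
    # Causal mask: future observations get zero weight.
    log_w = np.where(gap >= 0, log_w, -np.inf)
    # Normalise each row to sum to one (numerically stable softmax).
    log_w = log_w - log_w.max(axis=1, keepdims=True)
    w = np.exp(log_w)
    w = w / w.sum(axis=1, keepdims=True)
    return w, w @ x

# Toy example: 4 time points, 2 variables (values are made up).
x_demo = np.array([[0.0, 1.0], [0.2, 0.9], [2.0, -1.0], [0.1, 1.1]])
t_demo = np.array([0.0, 1.0, 2.0, 3.0])
weights, context = decayed_kernel_attention(x_demo, t_demo)
```

In this toy setting the last time point resembles the first two more than the third, so similarity in values can outweigh recency, which is the behaviour the abstract motivates. Several heads would correspond to several choices of `bandwidth` and `decay` (or of the value transformation), whose weighted contexts are then aggregated.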

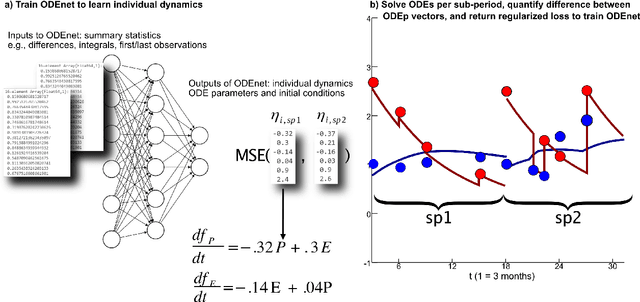

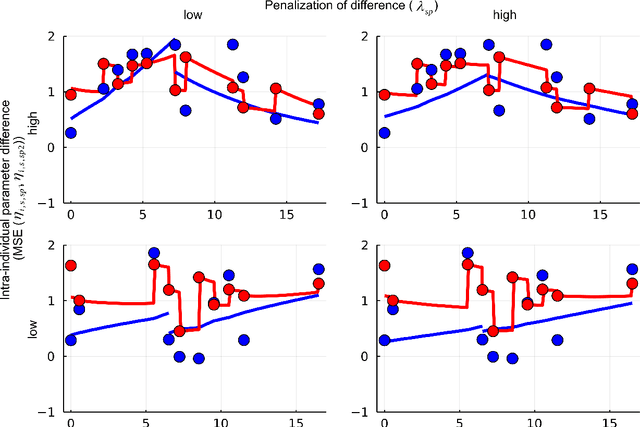

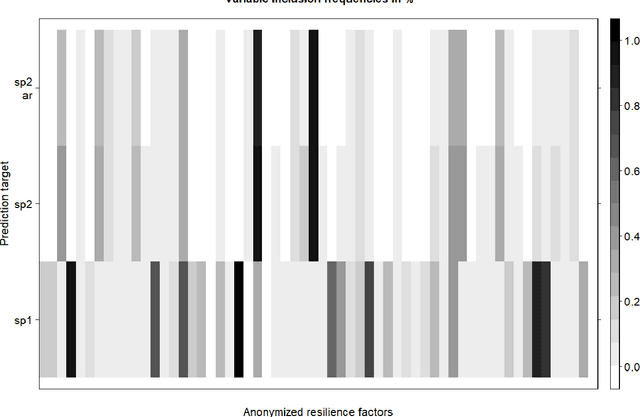

Deep learning and differential equations for modeling changes in individual-level latent dynamics between observation periods

Feb 15, 2022

Abstract:When modeling longitudinal biomedical data, dimensionality reduction and dynamic modeling in the resulting latent representation are often both needed. This can be achieved by artificial neural networks for dimension reduction, and differential equations for dynamic modeling of individual-level trajectories. However, such approaches so far assume that parameters of individual-level dynamics are constant throughout the observation period. Motivated by an application from psychological resilience research, we propose an extension where different sets of differential equation parameters are allowed for observation sub-periods. Estimation for the intra-individual sub-periods is nevertheless coupled, so that the model can be fitted even with a relatively small dataset. We subsequently derive prediction targets from individual dynamic models of resilience in the application. These serve as interpretable resilience-related outcomes, which are predicted from characteristics of individuals measured at baseline and at a follow-up time point, while selecting a small set of important predictors. Our approach successfully identifies individual-level parameters of dynamic models that allow us to stably select predictors, i.e., resilience factors. Furthermore, we can identify those characteristics of individuals that are the most promising for updates at follow-up, which might inform future study design. This underlines the usefulness of our proposed deep dynamic modeling approach with changes in parameters between observation sub-periods.
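The idea of allowing different differential equation parameters in different observation sub-periods can be illustrated with a small simulation. This is only a sketch under stated assumptions: it uses a linear system dz/dt = A z + c with a single switch time and plain Euler integration, and it omits the neural network for dimension reduction and the coupled estimation described in the abstract. The function name `simulate_two_period_ode` and all parameter values are hypothetical.

```python
import numpy as np

def simulate_two_period_ode(z0, t_end, t_switch, params_1, params_2, dt=0.001):
    """Euler integration of dz/dt = A z + c with parameters switching at t_switch.

    z0:        (d,) initial latent state.
    params_1:  tuple (A, c) used for t < t_switch.
    params_2:  tuple (A, c) used for t >= t_switch.
    Returns the time grid and the (n_steps + 1, d) trajectory.
    """
    z = np.asarray(z0, dtype=float).copy()
    n_steps = int(round(t_end / dt))
    traj = np.empty((n_steps + 1, z.size))
    traj[0] = z
    for k in range(n_steps):
        t = k * dt
        # Pick the parameter set of the sub-period that contains time t.
        A, c = params_1 if t < t_switch else params_2
        z = z + dt * (A @ z + c)
        traj[k + 1] = z
    return np.linspace(0.0, t_end, n_steps + 1), traj

# Toy example: exponential decay in the first sub-period, no change in the second.
decay_params = (np.array([[-1.0]]), np.array([0.0]))
flat_params = (np.array([[0.0]]), np.array([0.0]))
grid, traj = simulate_two_period_ode([1.0], t_end=2.0, t_switch=1.0,
                                     params_1=decay_params, params_2=flat_params)
```

In the toy example the state decays until the switch and then stays constant, so the same individual shows two qualitatively different dynamics within one observation period.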