Quanzhi Fu

AgentCgroup: Understanding and Controlling OS Resources of AI Agents

Feb 10, 2026

Abstract: AI agents are increasingly deployed in multi-tenant cloud environments, where they execute diverse tool calls within sandboxed containers, each call with distinct resource demands and rapid fluctuations. We present a systematic characterization of OS-level resource dynamics in sandboxed AI coding agents, analyzing 144 software engineering tasks from the SWE-rebench benchmark across two LLMs. Our measurements reveal that (1) OS-level execution (tool calls, container and agent initialization) accounts for 56-74% of end-to-end task latency; (2) memory, not CPU, is the concurrency bottleneck; (3) memory spikes are tool-call-driven, with a peak-to-average ratio of up to 15.4x; and (4) resource demands are highly unpredictable across tasks, runs, and models. Comparing these characteristics against serverless, microservice, and batch workloads, we identify three mismatches in existing resource controls: a granularity mismatch (container-level policies vs. tool-call-level dynamics), a responsiveness mismatch (user-space reaction vs. sub-second unpredictable bursts), and an adaptability mismatch (history-based prediction vs. non-deterministic stateful execution). We propose AgentCgroup, an eBPF-based resource controller that addresses these mismatches through hierarchical cgroup structures aligned with tool-call boundaries, in-kernel enforcement via sched_ext and memcg_bpf_ops, and runtime-adaptive policies driven by in-kernel monitoring. Preliminary evaluation demonstrates improved multi-tenant isolation and reduced resource waste.
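The peak-to-average ratio cited in finding (3) can be illustrated with a minimal sketch. It assumes the ratio is the maximum memory sample divided by the mean over a task's trace; the abstract does not state the exact definition, so that is an assumption. The function name `peak_to_average` and the trace values are hypothetical and are not taken from the paper's data.

```python
from statistics import mean


def peak_to_average(samples_mib):
    """Return max(samples) / mean(samples) for a non-empty memory trace.

    Assumed definition: the paper's abstract does not spell out how its
    peak-to-average ratio is computed.
    """
    if not samples_mib:
        raise ValueError("trace must contain at least one sample")
    avg = mean(samples_mib)
    if avg <= 0:
        raise ValueError("average memory usage must be positive")
    return max(samples_mib) / avg


# Hypothetical trace (MiB): a steady baseline with one spike,
# standing in for a single memory-heavy tool call.
trace = [100, 100, 100, 100, 100, 100, 100, 100, 100, 1100]
ratio = peak_to_average(trace)
print(f"peak-to-average ratio: {ratio:.1f}x")
```

A high ratio on such a trace suggests why a static container-level memory limit either wastes memory most of the time (if sized for the peak) or risks failures during the spike (if sized for the average).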

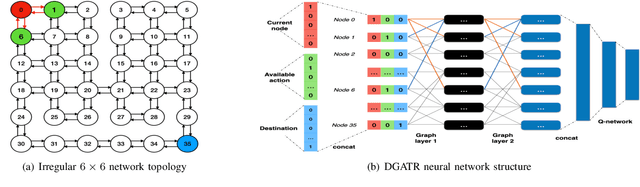

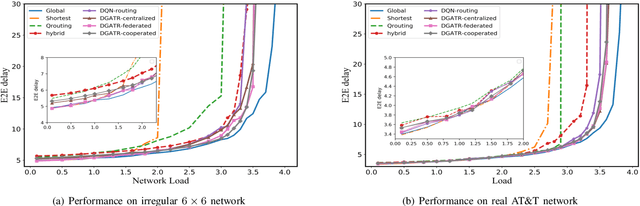

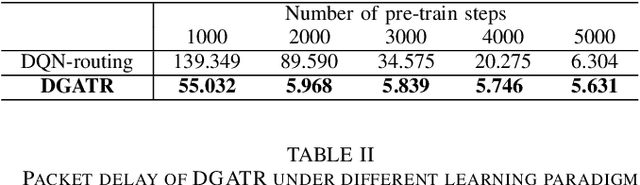

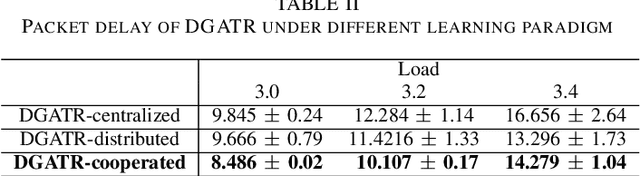

Packet Routing with Graph Attention Multi-agent Reinforcement Learning

Jul 28, 2021

Abstract: Packet routing is a fundamental problem in communication networks: it decides how packets are directed from their source nodes to their destination nodes through intermediate nodes. With the increasing complexity of network topology and highly dynamic traffic demand, conventional model-based and rule-based routing schemes show significant limitations, owing to simplified and unrealistic model assumptions and a lack of flexibility and adaptation. Adding intelligence to network control is becoming a trend and the key to achieving high-efficiency network operation. In this paper, we develop a model-free and data-driven routing strategy by leveraging reinforcement learning (RL), where routers interact with the network and learn from experience to make good routing configurations for the future. Considering the graph nature of the network topology, we design a multi-agent RL framework combined with a Graph Neural Network (GNN), tailored to the routing problem. Three deployment paradigms (centralized, federated, and cooperated learning) are explored. Simulation results demonstrate that our algorithm outperforms some existing benchmark algorithms in terms of packet transmission delay and affordable load.
