Qinan Fan

Toward Cross-Lingual Definition Generation for Language Learners

Oct 12, 2020

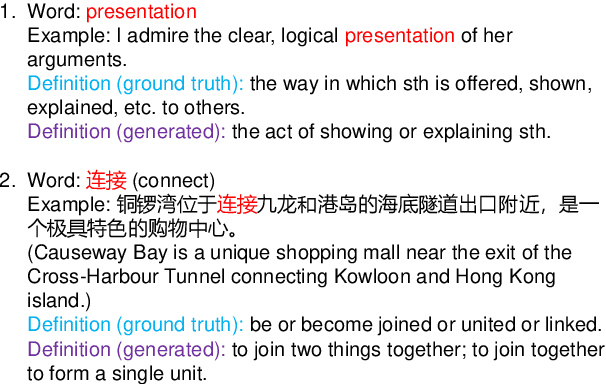

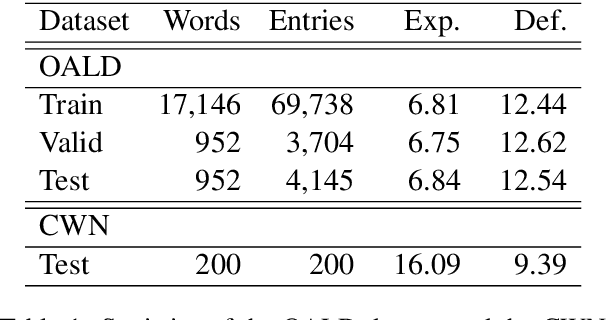

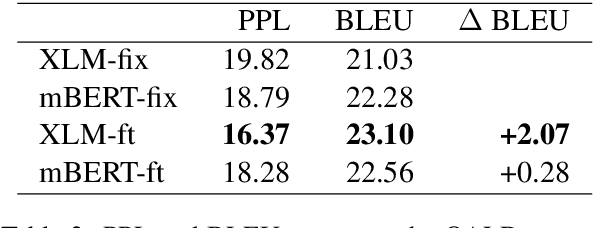

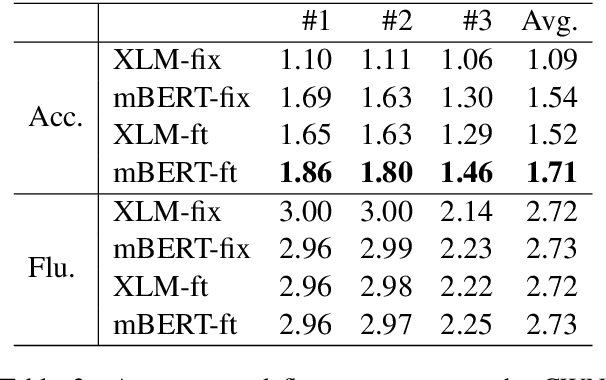

Abstract: Generating dictionary definitions automatically can prove useful for language learners. However, cross-lingual definition generation remains a challenging task. In this work, we propose to generate definitions in English for words in various languages. To achieve this, we present a simple yet effective approach based on publicly available pretrained language models. In this approach, models can be applied directly to other languages after being trained on the English dataset. We demonstrate the effectiveness of this approach on zero-shot definition generation. Experiments and manual analyses on newly constructed datasets show that our models have a strong cross-lingual transfer ability and can generate fluent English definitions for Chinese words. We further measure the lexical complexity of generated and reference definitions. The results show that the generated definitions are much simpler, which makes them more suitable for language learners.

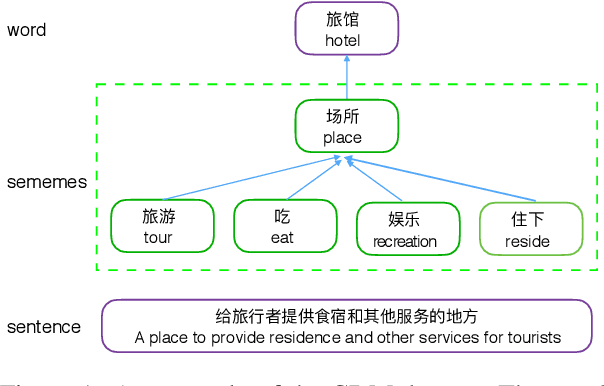

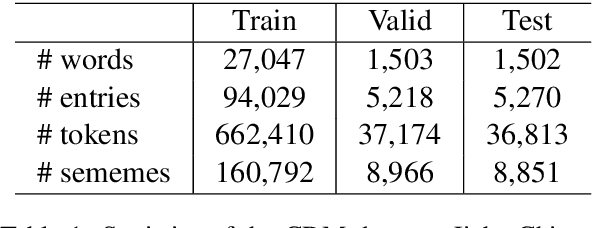

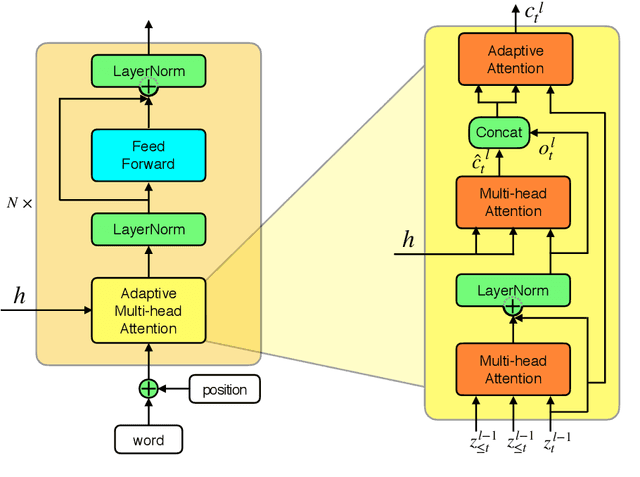

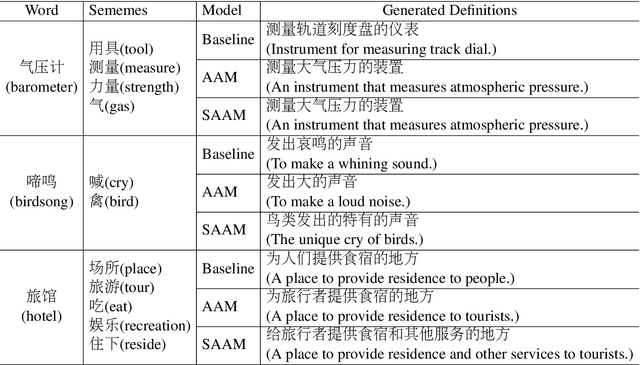

Incorporating Sememes into Chinese Definition Modeling

May 16, 2019

Abstract: Chinese definition modeling is the challenging task of generating a Chinese dictionary definition for a given Chinese word. To accomplish this task, we construct the Chinese Definition Modeling Corpus (CDM), which contains triples of a word, its sememes, and the corresponding definition. We present two novel models to improve Chinese definition modeling: the Adaptive-Attention Model (AAM) and the Self- and Adaptive-Attention Model (SAAM). AAM incorporates sememes into definition generation through an adaptive attention mechanism, which lets it decide which sememes to focus on and when to attend to them. SAAM further replaces the recurrent connections in AAM with self-attention and relies entirely on the attention mechanism, reducing the path length between word, sememes, and definition. Experiments on CDM demonstrate that by incorporating sememes, our best proposed model outperforms the state-of-the-art method by 6.0 BLEU.
