Qifan Xue

CILF:Causality Inspired Learning Framework for Out-of-Distribution Vehicle Trajectory Prediction

Jul 11, 2023

Abstract:Trajectory prediction is critical for autonomous driving vehicles. Most existing methods tend to model the correlation between history trajectory (input) and future trajectory (output). Since correlation is just a superficial description of reality, these methods rely heavily on the i.i.d. assumption and evince a heightened susceptibility to out-of-distribution data. To address this problem, we propose an Out-of- Distribution Causal Graph (OOD-CG), which explicitly defines the underlying causal structure of the data with three entangled latent features: 1) domain-invariant causal feature (IC), 2) domain-variant causal feature (VC), and 3) domain-variant non-causal feature (VN ). While these features are confounded by confounder (C) and domain selector (D). To leverage causal features for prediction, we propose a Causal Inspired Learning Framework (CILF), which includes three steps: 1) extracting domain-invariant causal feature by means of an invariance loss, 2) extracting domain variant feature by domain contrastive learning, and 3) separating domain-variant causal and non-causal feature by encouraging causal sufficiency. We evaluate the performance of CILF in different vehicle trajectory prediction models on the mainstream datasets NGSIM and INTERACTION. Experiments show promising improvements in CILF on domain generalization.

Contrastive Embedding Distribution Refinement and Entropy-Aware Attention for 3D Point Cloud Classification

Jan 27, 2022

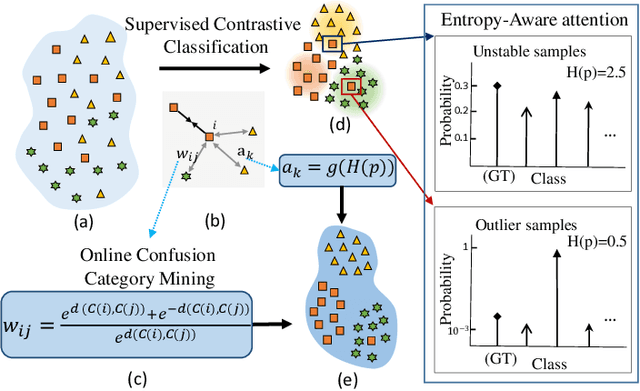

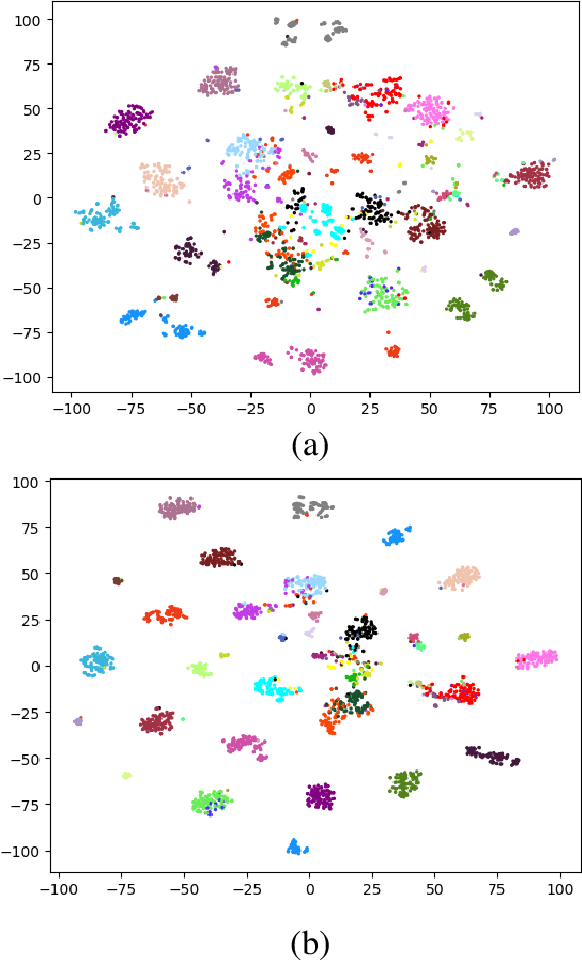

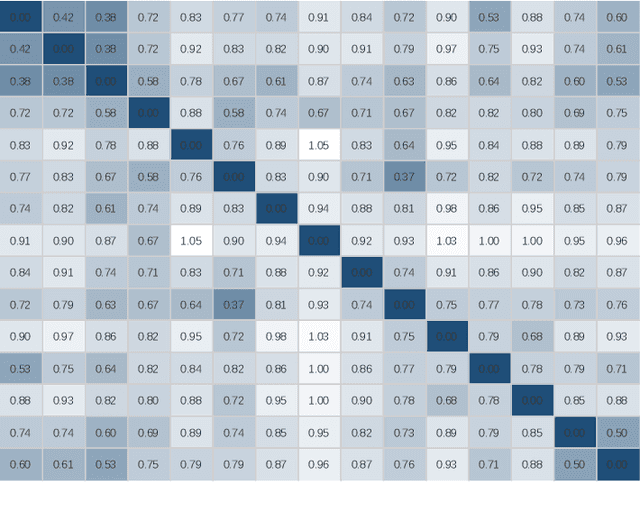

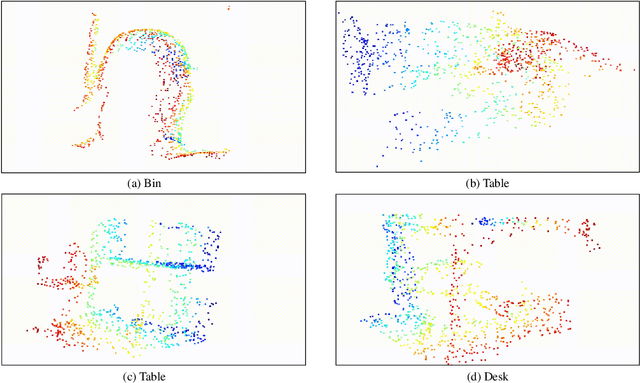

Abstract:Learning a powerful representation from point clouds is a fundamental and challenging problem in the field of computer vision. Different from images where RGB pixels are stored in the regular grid, for point clouds, the underlying semantic and structural information of point clouds is the spatial layout of the points. Moreover, the properties of challenging in-context and background noise pose more challenges to point cloud analysis. One assumption is that the poor performance of the classification model can be attributed to the indistinguishable embedding feature that impedes the search for the optimal classifier. This work offers a new strategy for learning powerful representations via a contrastive learning approach that can be embedded into any point cloud classification network. First, we propose a supervised contrastive classification method to implement embedding feature distribution refinement by improving the intra-class compactness and inter-class separability. Second, to solve the confusion problem caused by small inter-class compactness and inter-class separability. Second, to solve the confusion problem caused by small inter-class variations between some similar-looking categories, we propose a confusion-prone class mining strategy to alleviate the confusion effect. Finally, considering that outliers of the sample clusters in the embedding space may cause performance degradation, we design an entropy-aware attention module with information entropy theory to identify the outlier cases and the unstable samples by measuring the uncertainty of predicted probability. The results of extensive experiments demonstrate that our method outperforms the state-of-the-art approaches by achieving 82.9% accuracy on the real-world ScanObjectNN dataset and substantial performance gains up to 2.9% in DCGNN, 3.1% in PointNet++, and 2.4% in GBNet.

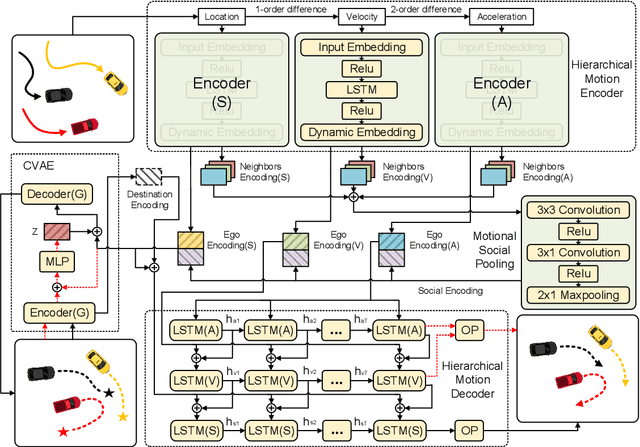

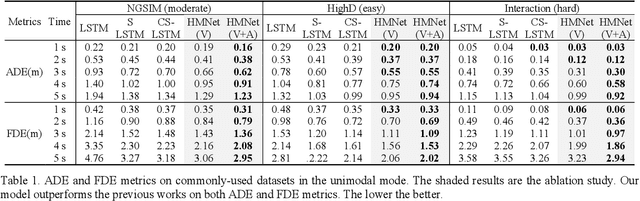

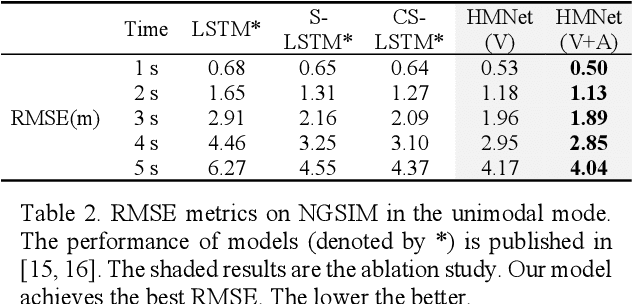

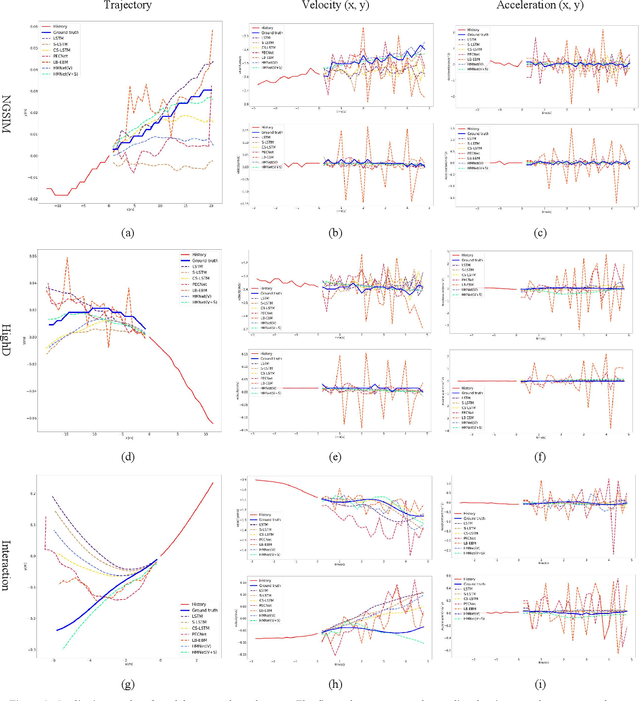

Hierarchical Motion Encoder-Decoder Network for Trajectory Forecasting

Nov 26, 2021

Abstract:Trajectory forecasting plays a pivotal role in the field of intelligent vehicles or social robots. Recent works focus on modeling spatial social impacts or temporal motion attentions, but neglect inherent properties of motions, i.e. moving trends and driving intentions. This paper proposes a context-free Hierarchical Motion Encoder-Decoder Network (HMNet) for vehicle trajectory prediction. HMNet first infers the hierarchical difference on motions to encode physically compliant patterns with high expressivity of moving trends and driving intentions. Then, a goal (endpoint)-embedded decoder hierarchically constructs multimodal predictions depending on the location-velocity-acceleration-related patterns. Besides, we present a modified social pooling module which considers certain motion properties to represent social interactions. HMNet enables to make the accurate, unimodal/multimodal and physically-socially-compliant prediction. Experiments on three public trajectory prediction datasets, i.e. NGSIM, HighD and Interaction show that our model achieves the state-of-the-art performance both quantitatively and qualitatively. We will release our code here: https://github.com/xuedashuai/HMNet.

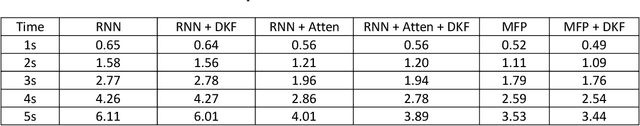

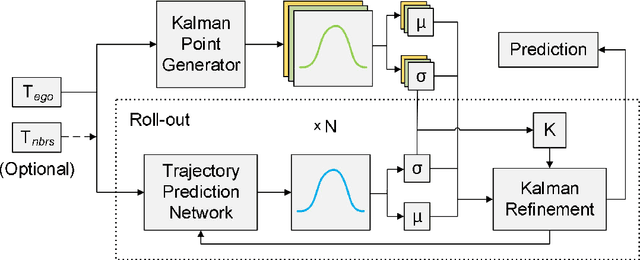

Deep Kalman Filter: A Refinement Module for the Rollout Trajectory Prediction Methods

Feb 22, 2021

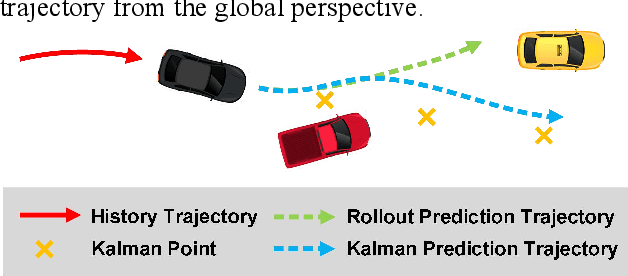

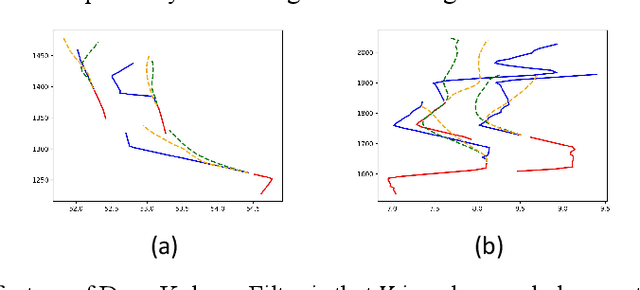

Abstract:Trajectory prediction plays a pivotal role in the field of intelligent vehicles. It currently suffers from several challenges, e.g., accumulative error in rollout process and weak adaptability in various scenarios. This paper proposes a parametric-learning Kalman filter based on deep neural network for trajectory prediction. We design a flexible plug-in module which can be readily implanted into most rollout approaches. Kalman points are proposed to capture the long-term prediction stability from the global perspective. We carried experiments out on the NGSIM dataset. The promising results indicate that our method could improve rollout trajectory prediction methods effectively.

Spatial-Channel Transformer Network for Trajectory Prediction on the Traffic Scenes

Feb 05, 2021

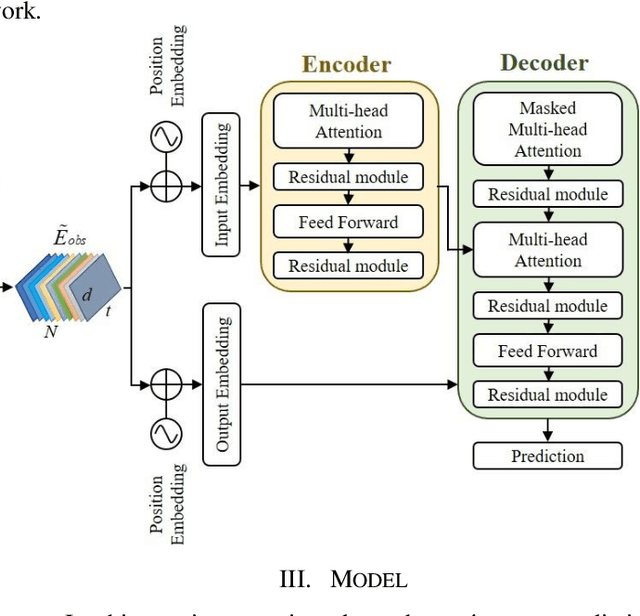

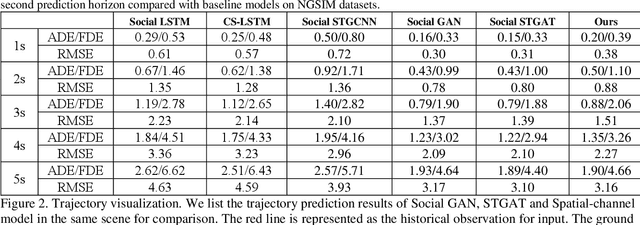

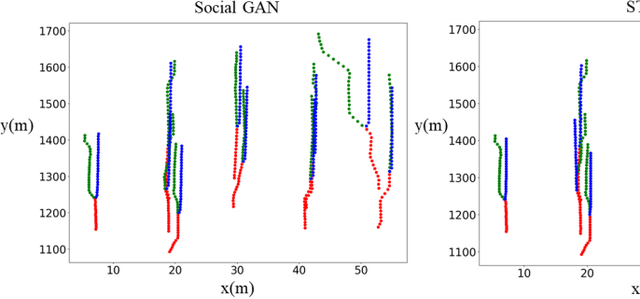

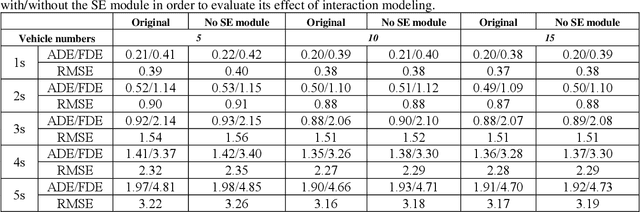

Abstract:Predicting motion of surrounding agents is critical to real-world applications of tactical path planning for autonomous driving. Due to the complex temporal dependencies and social interactions of agents, on-line trajectory prediction is a challenging task. With the development of attention mechanism in recent years, transformer model has been applied in natural language sequence processing first and then image processing. In this paper, we present a Spatial-Channel Transformer Network for trajectory prediction with attention functions. Instead of RNN models, we employ transformer model to capture the spatial-temporal features of agents. A channel-wise module is inserted to measure the social interaction between agents. We find that the Spatial-Channel Transformer Network achieves promising results on real-world trajectory prediction datasets on the traffic scenes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge