Peter Duerr

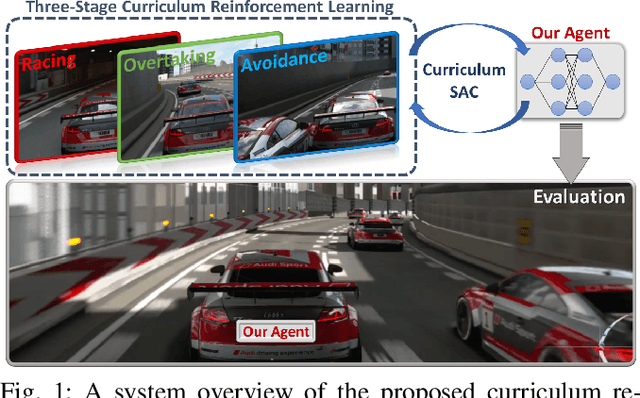

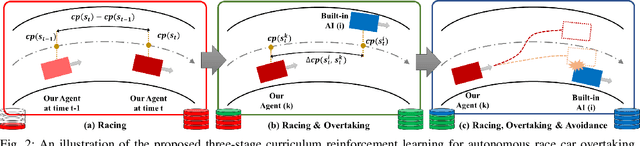

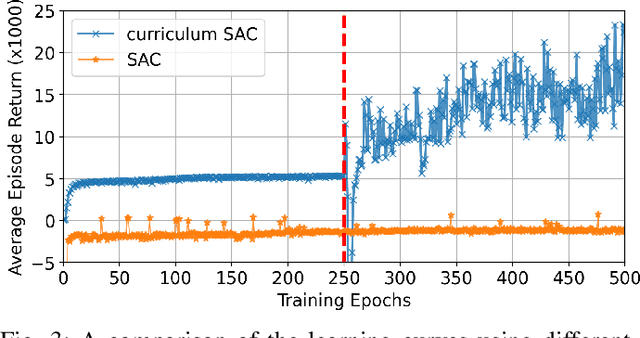

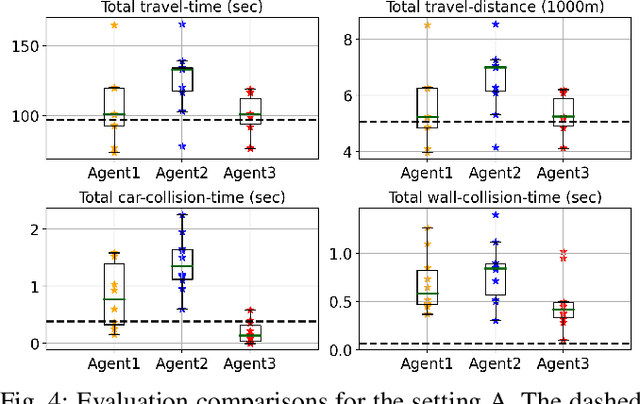

Autonomous Overtaking in Gran Turismo Sport Using Curriculum Reinforcement Learning

Mar 26, 2021

Abstract: Professional race car drivers can execute extreme overtaking maneuvers. However, conventional systems for autonomous overtaking rely either on simplified assumptions about the vehicle dynamics or on solving expensive trajectory optimization problems online. When the vehicle approaches its physical limits, existing model-based controllers struggle to handle the highly nonlinear dynamics and cannot leverage the large volume of data generated by simulation or real-world driving. To circumvent these limitations, this work proposes a new learning-based method to tackle the autonomous overtaking problem. We evaluate our approach using Gran Turismo Sport, a world-leading car racing simulator known for its detailed dynamic modeling of various cars and tracks. By leveraging curriculum learning, our approach converges faster and performs better than vanilla reinforcement learning. As a result, the trained controller outperforms the built-in model-based game AI and achieves overtaking performance comparable to that of an experienced human driver.
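Curriculum learning orders training from easier tasks to harder ones and carries the learned policy forward from one stage to the next. The abstract does not specify the curriculum used in this work, so the following is only a minimal, generic sketch under stated assumptions: the stage names, the `train_step` and `evaluate` callables, and the advancement threshold are hypothetical placeholders, not the authors' setup and not any Gran Turismo Sport API.

```python
from typing import Callable, List, Tuple, TypeVar

Policy = TypeVar("Policy")


def run_curriculum(
    policy: Policy,
    stages: List[str],
    train_step: Callable[[Policy, str], Policy],
    evaluate: Callable[[Policy, str], float],
    threshold: float,
    max_steps_per_stage: int = 1000,
) -> Tuple[Policy, List[int]]:
    """Generic curriculum loop (hypothetical sketch, not the paper's method).

    Stages are visited in order, easiest first. The same policy object is
    carried into each new stage. A stage ends once the evaluation score
    reaches `threshold` or after `max_steps_per_stage` updates.
    Returns the final policy and the number of updates spent per stage.
    """
    steps_used: List[int] = []
    for stage in stages:
        steps = 0
        # Keep training on this stage until it is "solved" or the budget runs out.
        while steps < max_steps_per_stage and evaluate(policy, stage) < threshold:
            policy = train_step(policy, stage)
            steps += 1
        steps_used.append(steps)
    return policy, steps_used


# Toy usage: the "policy" is an integer skill level and each hypothetical
# stage has a difficulty; one training step raises skill by 1.
difficulty = {"solo_lap": 0, "one_opponent": 5, "many_opponents": 10}
final, used = run_curriculum(
    0,
    list(difficulty),
    train_step=lambda p, s: p + 1,
    evaluate=lambda p, s: p - difficulty[s],
    threshold=2,
)
# final == 12, used == [2, 5, 5]
```

In a real setup the integer skill would be a neural-network policy, `train_step` a batch of RL updates, and `evaluate` a set of evaluation races; advancing on a score threshold with a per-stage step budget is one common design choice among several.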

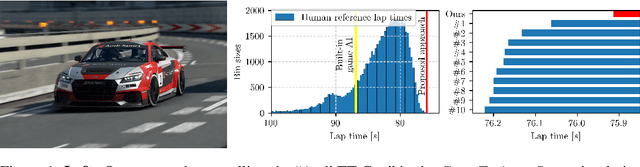

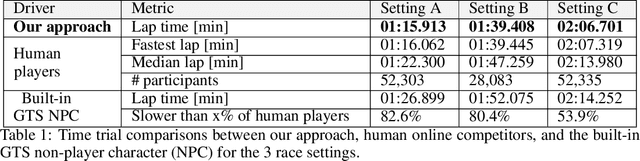

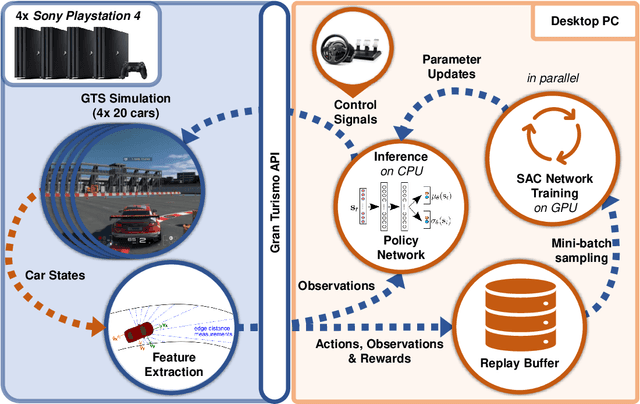

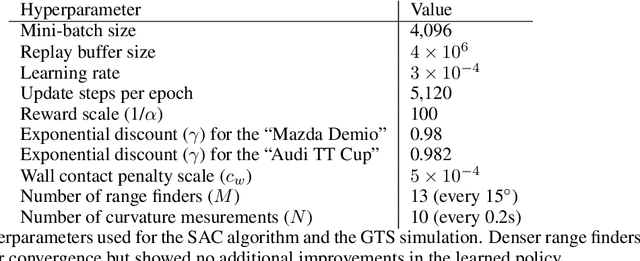

Super-Human Performance in Gran Turismo Sport Using Deep Reinforcement Learning

Aug 18, 2020

Abstract: Autonomous car racing raises fundamental robotics challenges such as planning minimum-time trajectories under uncertain dynamics and controlling the car at its friction limits. In this project, we consider the task of autonomous car racing in the top-selling car racing game Gran Turismo Sport. Gran Turismo Sport is known for its detailed physics simulation of various cars and tracks. Our approach makes use of maximum-entropy deep reinforcement learning and a new reward design to train a sensorimotor policy to complete a given race track as fast as possible. We evaluate our approach in three different time trial settings with different cars and tracks. Our results show that the obtained controllers not only beat the built-in non-player character of Gran Turismo Sport, but also outperform the fastest known times in a dataset of personal best lap times of over 50,000 human drivers.
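For reference, the standard maximum-entropy reinforcement learning objective, as used for example by soft actor-critic, augments the expected return with a policy-entropy bonus weighted by a temperature $\alpha$. The abstract does not state the exact algorithm, temperature, or reward, so $r$ below is a generic placeholder rather than the paper's reward design:

$$
J(\pi) = \sum_{t} \mathbb{E}_{(s_t, a_t) \sim \rho_\pi}\Big[\, r(s_t, a_t) + \alpha\, \mathcal{H}\big(\pi(\cdot \mid s_t)\big) \Big],
\qquad
\mathcal{H}\big(\pi(\cdot \mid s_t)\big) = -\,\mathbb{E}_{a \sim \pi(\cdot \mid s_t)}\big[\log \pi(a \mid s_t)\big]
$$

Here $\rho_\pi$ is the state-action distribution induced by the policy $\pi$. Setting $\alpha = 0$ recovers the conventional expected-return objective, while larger $\alpha$ rewards more stochastic, exploratory policies.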