Peihan Zhang

Dexterous Control of an 11-DOF Redundant Robot for CT-Guided Needle Insertion With Task-Oriented Weighted Policies

Mar 18, 2025

Abstract: Computed tomography (CT)-guided needle biopsies are critical for diagnosing a range of conditions, including lung cancer, but present challenges such as limited in-bore space, prolonged procedure times, and radiation exposure. Robotic assistance offers a promising solution by improving needle trajectory accuracy, reducing radiation exposure, and enabling real-time adjustments. In our previous work, we introduced a redundant robotic platform designed for dexterous needle insertion within the confined CT bore. However, its limited base mobility restricts flexible deployment in clinical settings. In this study, we present an improved 11-degree-of-freedom (DOF) robotic system that integrates a 6-DOF robotic base with a 5-DOF cable-driven end-effector, significantly enhancing workspace flexibility and precision. With the hyper-redundant degrees of freedom, we introduce a weighted inverse kinematics controller with a two-stage priority scheme for large-scale movement and fine in-bore adjustments, along with a null-space control strategy to optimize dexterity. We validate our system through both simulation and real-world experiments, demonstrating superior tracking accuracy and enhanced manipulability in CT-guided procedures. The study provides a strong case for hyper-redundancy and null-space control formulations for robot-assisted needle biopsy scenarios.
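The abstract does not give the controller equations, so the following is only a minimal sketch of the textbook form of a weighted inverse kinematics step with a null-space term, not the authors' implementation. The function name `weighted_ik_step`, the damping value, and the idea of switching the weight matrix `W` between the two stages (penalizing end-effector joints during large-scale movement, penalizing base joints during in-bore adjustment) are assumptions made for illustration.

```python
import numpy as np

def weighted_ik_step(J, dx, W, dq_null, damping=1e-6):
    """One velocity-level IK step (illustrative sketch, hypothetical helper).

    J        : (m, n) task Jacobian, e.g. 6 x 11 for an 11-DOF arm
    dx       : (m,) desired task-space velocity
    W        : (n, n) symmetric positive-definite joint weight matrix;
               a larger weight makes the corresponding joint move less
    dq_null  : (n,) secondary joint velocity, e.g. a manipulability gradient
    damping  : small regularizer that keeps the inverse well conditioned
    """
    m, n = J.shape
    W_inv = np.linalg.inv(W)
    # Weighted damped pseudoinverse: J# = W^-1 J^T (J W^-1 J^T + lambda I)^-1
    A = J @ W_inv @ J.T + damping * np.eye(m)
    J_pinv = W_inv @ J.T @ np.linalg.inv(A)
    # Null-space projector: motion in this subspace leaves the task
    # velocity (almost) unchanged, up to the damping term.
    N = np.eye(n) - J_pinv @ J
    return J_pinv @ dx + N @ dq_null
```

Under these assumptions, a "fine adjustment" stage could pass `W = np.diag([100.0] * 6 + [1.0] * 5)` so that the five end-effector joints absorb most of the motion, while `dq_null` carries a secondary objective such as improving dexterity.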

Risk-aware Trajectory Sampling for Quadrotor Obstacle Avoidance in Dynamic Environments

Jan 17, 2022

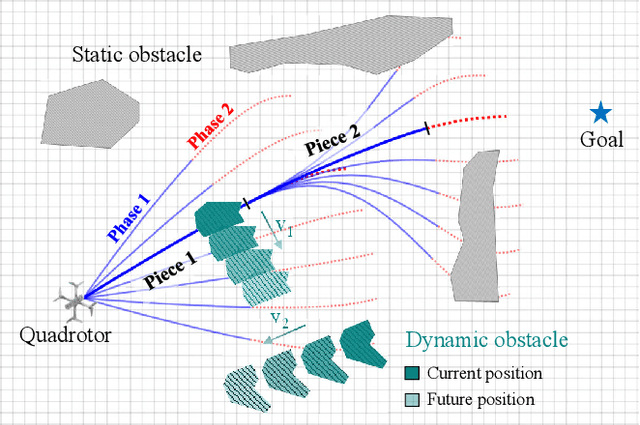

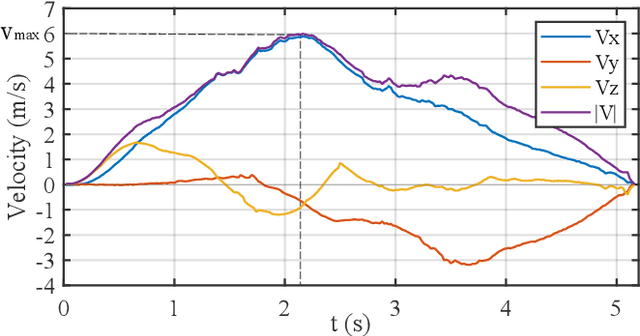

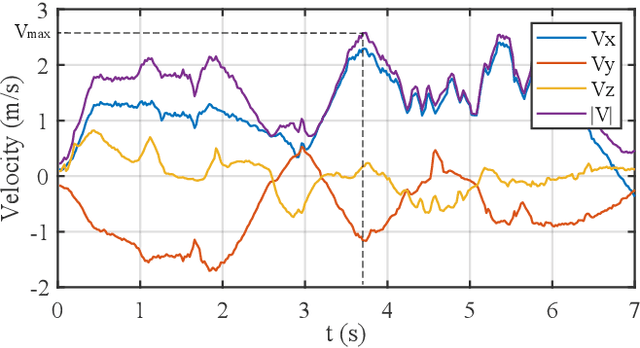

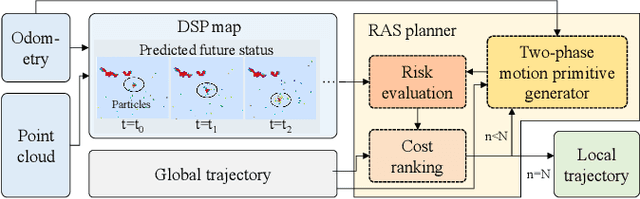

Abstract: Obstacle avoidance for quadrotors in dynamic environments, with both static and dynamic obstacles, remains an open problem. Current works commonly use traditional static maps to represent static obstacles and the detection and tracking of moving objects (DATMO) method to model dynamic obstacles separately. The dynamic obstacles must be pre-trained in the detector and can only be modeled with certain shapes, such as cylinders or ellipsoids. This work uses our dual-structure particle-based (DSP) dynamic occupancy map to represent arbitrarily shaped static and dynamic obstacles simultaneously, and proposes an efficient risk-aware sampling-based local trajectory planner to realize safe flights in this map. The trajectory is planned by sampling motion primitives generated in the state space. Each motion primitive is divided into two phases: short-term planning with a strict risk limitation and relatively long-term planning designed to avoid high-risk regions. The risk is evaluated with the predicted future occupancy status, represented by particles, considering the time dimension. With an approach to split from and merge to global trajectories, the planner can also be used with an arbitrary preplanned global trajectory. Comparison experiments show that the obstacle avoidance system composed of the DSP map and our planner performs the best in dynamic environments. In real-world tests, our quadrotor reaches a speed of 6 m/s with the motion capture system and 2 m/s with everything computed on a low-cost single-board computer.
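The two-phase evaluation of motion primitives described in the abstract can be illustrated with a heavily simplified sketch. It is not the DSP map or the authors' planner: the constant-velocity particle prediction, the spherical risk radius, and every name and threshold (`predicted_risk`, `pick_primitive`, `t_short`, `risk_limit`) are assumptions chosen only to show the idea of a strict short-term limit followed by a long-term risk cost.

```python
import numpy as np

def predicted_risk(point, t, p_pos, p_vel, p_w, radius):
    """Sum the weights of particles predicted to lie within `radius` of
    `point` at time `t`, assuming constant particle velocity."""
    future = p_pos + t * p_vel
    near = np.linalg.norm(future - point, axis=1) < radius
    return float(p_w[near].sum())

def pick_primitive(primitives, times, p_pos, p_vel, p_w,
                   radius=0.5, t_short=1.0, risk_limit=0.1):
    """Return the index of the chosen primitive, or None if all are rejected.

    primitives : list of (k, 3) arrays, positions sampled at `times`
    times      : (k,) array of sample times in seconds
    """
    best, best_cost = None, np.inf
    short = times <= t_short
    for idx, traj in enumerate(primitives):
        risks = np.array([predicted_risk(x, t, p_pos, p_vel, p_w, radius)
                          for x, t in zip(traj, times)])
        # Phase 1: strict risk limit on the short-term part.
        if np.max(risks[short], initial=0.0) > risk_limit:
            continue
        # Phase 2: prefer primitives whose long-term part avoids risky regions.
        cost = risks[~short].sum()
        if cost < best_cost:
            best, best_cost = idx, cost
    return best
```

In this sketch a primitive that passes near a predicted particle position within the first second is discarded outright, while risk later along the primitive only raises its cost, which mirrors the strict short-term and softer long-term treatment the abstract describes.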

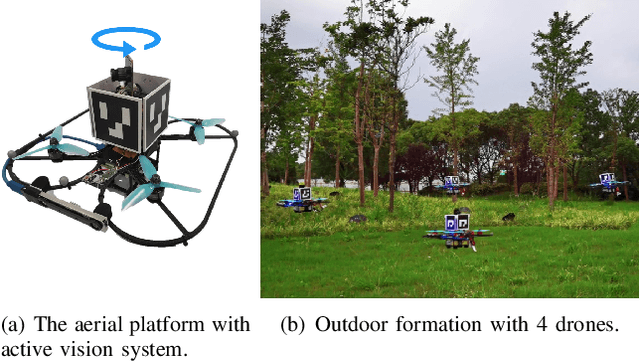

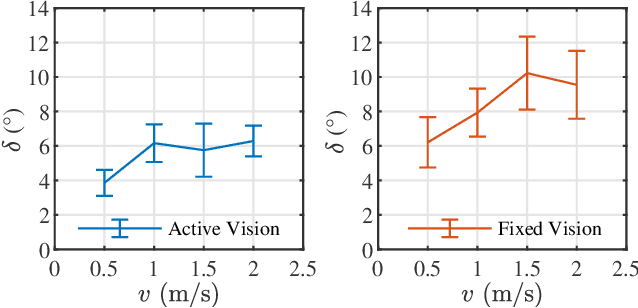

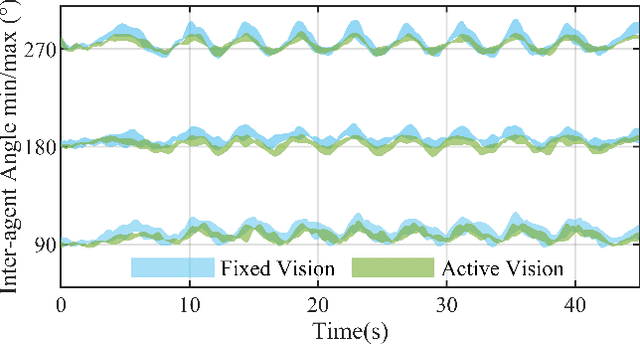

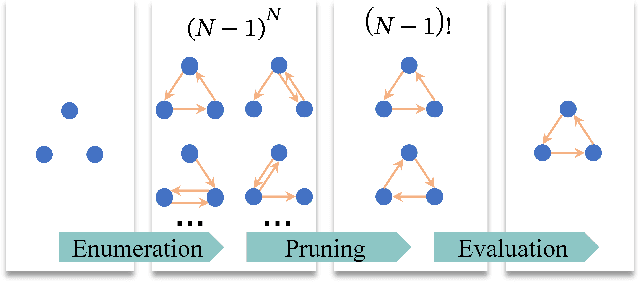

Agile Formation Control of Drone Flocking Enhanced with Active Vision-based Relative Localization

Aug 12, 2021

Abstract: Relative localization is a prerequisite for the cooperation of aerial swarms. The vision-based approach has been investigated owing to its scalability and independence from communication. However, the limited field of view (FOV) inherently restricts the performance of vision-based relative localization. Inspired by bird flocks in nature, this letter proposes a novel distributed active vision-based relative localization framework for formation control in aerial swarms. To improve observation quality and formation accuracy, we devise graph-based attention planning (GAP) to determine the active observation scheme in the swarm. The active detection results are then fused with onboard measurements from UWB and VIO to obtain real-time relative positions, which further improve the formation control performance. Real-world experiments show that the proposed active vision system enables the swarm agents to achieve agile flocking movements with an acceleration of $4\,\mathrm{m/s^2}$ in circular formation tasks. Formation accuracy improves by 45.3% compared with the fixed vision system.
