Pablo Gil

Automatics, Robotics and Artificial Vision Research Group, Dept. of Physics, System Engineering and Signal Theory, University of Alicante, Spain, Computer Science Research Institute, University of Alicante, Spain

VBT-MPC: Vision-Based Tactile MPC for Contour Following

May 19, 2026

Abstract: Tactile sensing plays a key role in robotic manipulation, particularly in tasks like surface inspection. Successful execution requires maintaining contact while accurately tracking object contours. In this work, we propose a Vision-Based Tactile Model Predictive Control (VBT-MPC) framework for robotic contour following using a Vision-Based Tactile Sensor (VBTS) mounted in an eye-in-hand configuration. The proposed controller operates directly in contour features space, thereby avoiding the need for separate pose-estimation modules or complex force-control architectures. We further compare our VBT-MPC with visual-servoing strategies adapted to tactile features, and evaluate contour tracking on objects with diverse geometries and materials in both simulation and real-world experiments.

Grasping Force Estimation for Markerless Visuotactile Sensors

Oct 30, 2024

Abstract: Tactile sensors have long been used for force estimation, and Vision-Based Tactile Sensors (VBTS) have recently become a new trend due to their high spatial resolution and low cost. In this work, we have designed and implemented several approaches to estimate the normal grasping force using different types of markerless visuotactile representations obtained from VBTS. Our main goal is to determine the most appropriate visuotactile representation, based on a performance analysis during robotic grasping tasks. Our proposal has been tested on a dataset generated with our DIGIT sensors and on another obtained with GelSight Mini sensors in another state-of-the-art work. We have also tested the generalization capabilities of our best approach, called RGBmod. The results led to two main conclusions. First, the RGB visuotactile representation is a better input option than the depth image or a combination of the two for estimating normal grasping forces. Second, RGBmod performed well when tested on 10 unseen everyday objects in real-world scenarios, achieving an average relative error of 0.125 ± 0.153. Furthermore, we show that our proposal outperforms other works in the literature that use RGB and depth information for the same task.
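The abstract reports the error as a mean and a spread of relative errors. The sketch below shows one common way such a figure can be computed. It assumes the relative error is |predicted - measured| / |measured| and that the spread is a standard deviation; the abstract does not state either, and the function name and sample values are hypothetical.

```python
import numpy as np


def relative_error_stats(predicted, measured):
    """Return (mean, std) of the per-sample relative error.

    Assumption: relative error = |predicted - measured| / |measured|.
    The measured forces must be non-zero.
    """
    predicted = np.asarray(predicted, dtype=float)
    measured = np.asarray(measured, dtype=float)
    rel = np.abs(predicted - measured) / np.abs(measured)
    return float(rel.mean()), float(rel.std())


# Hypothetical force readings in newtons, for illustration only.
mean_err, std_err = relative_error_stats([1.1, 1.8], [1.0, 2.0])
print(f"{mean_err:.3f} +- {std_err:.3f}")
```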

QDGset: A Large Scale Grasping Dataset Generated with Quality-Diversity

Oct 03, 2024

Abstract: Recent advances in AI have led to significant results in robotic learning, but skills like grasping remain only partially solved. Many recent works exploit synthetic grasping datasets to learn to grasp unknown objects. However, those datasets were generated using simple grasp sampling methods that rely on priors. Recently, Quality-Diversity (QD) algorithms have been proven to make grasp sampling significantly more efficient. In this work, we extend QDG-6DoF, a QD framework for generating object-centric grasps, to scale up the production of synthetic grasping datasets. We propose a data augmentation method that combines the transformation of object meshes with transfer learning from previous grasping repertoires. The conducted experiments show that this approach reduces the number of required evaluations per discovered robust grasp by up to 20%. We used this approach to generate QDGset, a dataset of 6DoF grasp poses that contains about 3.5 and 4.5 times more grasps and objects, respectively, than the previous state-of-the-art. Our method allows anyone to easily generate data, eventually contributing to a large-scale collaborative dataset of synthetic grasps.

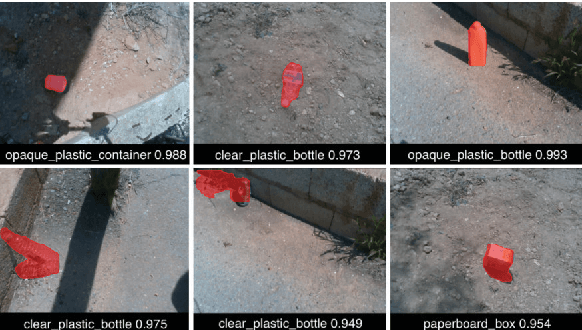

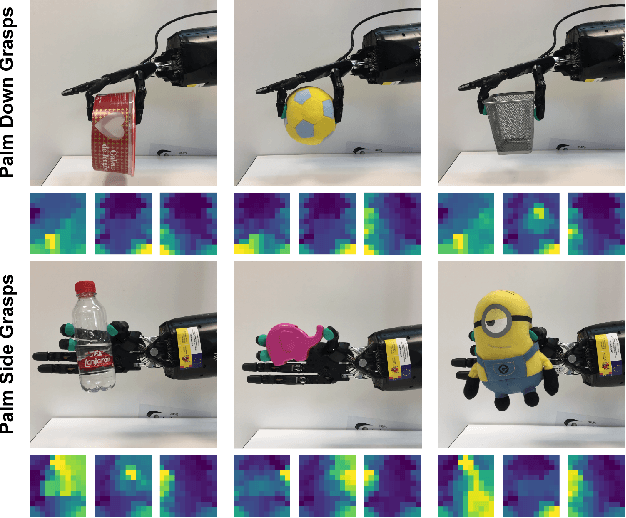

Visual-tactile manipulation to collect household waste in outdoor

Jul 15, 2024

Abstract: This work presents a perception system for robotic manipulation that can assist in navigation and in the classification and collection of household waste in outdoor environments. The system is made up of optical tactile sensors, RGBD cameras and a LiDAR. These sensors are integrated on a mobile platform with a robot manipulator and a robotic gripper. Our system is divided into three software modules: two are vision-based and the third is tactile-based. The vision-based modules use CNNs to localize and recognize solid household waste and to estimate grasping points. The tactile-based module, which also uses CNNs and image processing, adjusts the gripper opening to control the grasp from touch data. Our proposal achieves localization errors of around 6%, a recognition accuracy of 98%, and ensures grasp stability in 91% of the attempts. The sum of the runtimes of the three modules is less than 750 ms.

* in Spanish language

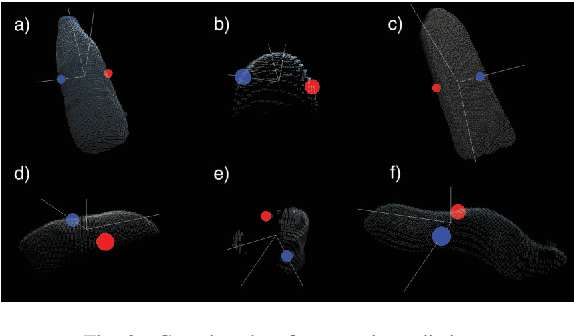

Vision and Tactile Robotic System to Grasp Litter in Outdoor Environments

Jul 11, 2024

Abstract: The accumulation of litter is increasing in many places and is consequently becoming a problem that must be dealt with. In this paper, we present a manipulator robotic system to collect litter in outdoor environments. This system has three functionalities. Firstly, it uses colour images to detect and recognise litter comprising different materials. Secondly, depth data are combined with pixels of waste objects to compute a 3D location and segment three-dimensional point clouds of the litter items in the scene. The grasp in 3 Degrees of Freedom (DoFs) is then estimated for a robot arm with a gripper for the segmented cloud of each instance of waste. Finally, two tactile-based algorithms are implemented and then employed in order to provide the gripper with a sense of touch. This work uses two low-cost visual-based tactile sensors at the fingertips. One of the algorithms addresses the detection of contact (which is obtained from tactile images) between the gripper and solid waste, while the other has been designed to detect slippage in order to prevent the objects grasped from falling. Our proposal was successfully tested by carrying out extensive experimentation with different objects varying in size, texture, geometry and materials in different outdoor environments (a tiled pavement, a surface of stone/soil, and grass). Our system achieved an average score of 94% for the detection and Collection Success Rate (CSR) as regards its overall performance, and of 80% for the collection of items of litter at the first attempt.
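Combining depth data with object pixels to obtain a 3D location is commonly done by back-projecting each pixel through a pinhole camera model. The sketch below illustrates that standard step only. The pinhole model, the function name, and the intrinsic parameters (`fx`, `fy`, `cx`, `cy`) are assumptions for illustration; the abstract does not specify the camera model or calibration used.

```python
import numpy as np


def backproject(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) with a depth value into camera coordinates.

    Assumes an ideal pinhole camera without lens distortion:
    fx, fy are focal lengths in pixels, (cx, cy) is the principal point.
    """
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return np.array([x, y, depth], dtype=float)


# Hypothetical intrinsics and a pixel 300 px right of the image centre, 2 m away.
point = backproject(620, 240, 2.0, fx=600.0, fy=600.0, cx=320.0, cy=240.0)
print(point)
```

Applying the same function to every pixel inside a detected object mask yields the segmented point cloud of that object.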

Measuring Object Rotation via Visuo-Tactile Segmentation

Jan 18, 2024

Abstract: When carrying out robotic manipulation tasks, objects occasionally fall as a result of the rotation caused by slippage. This can be prevented by obtaining tactile information that provides better knowledge of the physical properties of the grasp. In this paper, we estimate the rotation angle of a grasped object when slippage occurs. We implement a system made up of a neural network with which to segment the contact region and an algorithm with which to estimate the rotated angle of that region. This method is applied to DIGIT tactile sensors. Our system has additionally been trained and tested with our publicly available dataset which is, to the best of our knowledge, the first dataset related to tactile segmentation from non-synthetic images to appear in the literature, and with which we have attained results of 95% and 90% as regards Dice and IoU metrics in the worst scenario. Moreover, we have obtained a maximum error of 3 degrees when testing with objects not previously seen by our system in 45 different lifts. This, therefore, proved that our approach is able to detect the slippage movement, thus providing a possible reaction that will prevent the object from falling.
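As a rough illustration of the quantities mentioned in this abstract, the sketch below computes the Dice and IoU scores of two binary masks and estimates how much a segmented contact region has rotated between two frames. The angle estimate uses the principal axis of the mask pixels (PCA), which is a stand-in chosen for brevity and is not claimed to be the authors' algorithm. All function names are hypothetical.

```python
import numpy as np


def dice_and_iou(pred, target):
    """Dice and IoU of two binary masks (both 1.0 when both masks are empty)."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    inter = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    total = pred.sum() + target.sum()
    dice = 2.0 * inter / total if total else 1.0
    iou = inter / union if union else 1.0
    return float(dice), float(iou)


def principal_axis_angle(mask):
    """Angle in degrees, in [0, 180), of the main axis of a non-empty mask."""
    ys, xs = np.nonzero(mask)
    coords = np.stack([xs, ys]).astype(float)
    coords -= coords.mean(axis=1, keepdims=True)
    cov = coords @ coords.T / coords.shape[1]
    _, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    vx, vy = eigvecs[:, -1]           # direction of largest variance
    return float(np.degrees(np.arctan2(vy, vx)) % 180.0)


def rotation_between(mask_before, mask_after):
    """Signed rotation in degrees, wrapped to [-90, 90)."""
    diff = principal_axis_angle(mask_after) - principal_axis_angle(mask_before)
    return (diff + 90.0) % 180.0 - 90.0


# Synthetic example: a horizontal bar and a diagonal line in image coordinates.
bar = np.zeros((20, 20), dtype=bool)
bar[9:11, 2:18] = True
diagonal = np.eye(20, dtype=bool)
print(rotation_between(bar, diagonal))
print(dice_and_iou(bar, diagonal))
```

Because an axis has no direction, the angle is only defined modulo 180 degrees, which is why the difference is wrapped to [-90, 90).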

Rotational Slippage Prediction from Segmentation of Tactile Images

May 08, 2023

Abstract: Adding tactile sensors to a robotic system is becoming a common practice to achieve more complex manipulation skills than those of robotic systems that only use external cameras to manipulate objects. The key advantage of tactile sensors is that they provide extra information about the physical properties of the grasp. In this paper, we implemented a system to predict and quantify the rotational slippage of objects in hand using the vision-based tactile sensor known as DIGIT. Our system comprises a neural network that obtains the segmented contact region (object-sensor), from which the slippage rotation angle is then calculated using a thinning algorithm. In addition, we created our own tactile segmentation dataset, which is the first one in the literature as far as we know, to train and evaluate our neural network, obtaining results of 95% and 91% in Dice and IoU metrics. In real-scenario experiments, our system is able to predict rotational slippage with a maximum mean rotational error of 3 degrees with previously unseen objects. Thus, our system can be used to prevent an object from falling due to its slippage.

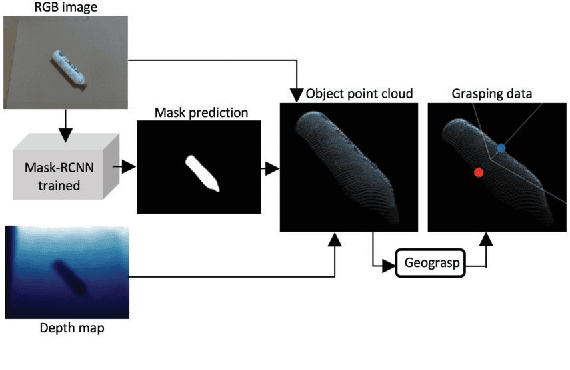

Domestic waste detection and grasping points for robotic picking up

May 14, 2021

Abstract: This paper presents an AI system applied to location and robotic grasping. The experimental setup is based on a parameter study to train a deep-learning network based on Mask-RCNN to perform waste location in indoor and outdoor environments, using five different classes and generating a new waste dataset. Initially, the AI system obtains the RGBD data of the environment, followed by the detection of objects using the neural network. Later, the 3D object shape is computed using the network result and the depth channel. Finally, the shape is used to compute grasping points for a robot arm with a two-finger gripper. The objective is to classify the waste in groups to improve a recycling strategy.

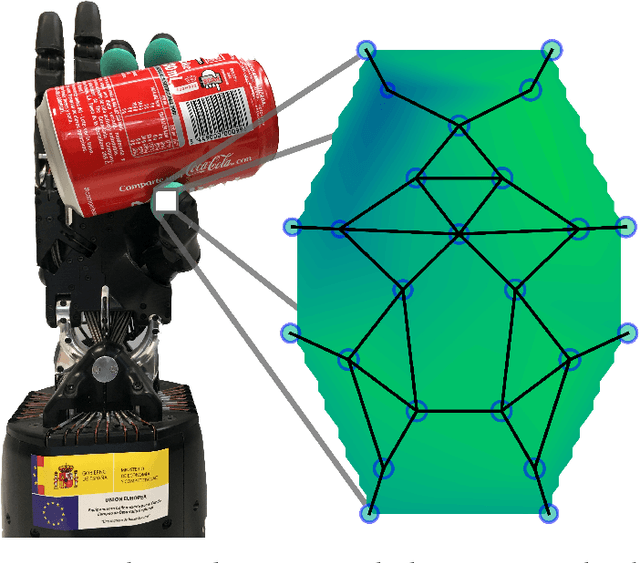

TactileGCN: A Graph Convolutional Network for Predicting Grasp Stability with Tactile Sensors

Jan 18, 2019

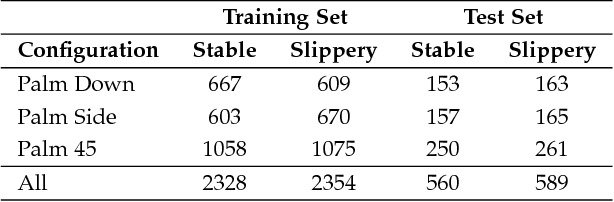

Abstract: Tactile sensors provide useful contact data during the interaction with an object which can be used to accurately learn to determine the stability of a grasp. Most of the works in the literature represented tactile readings as plain feature vectors or matrix-like tactile images, using them to train machine learning models. In this work, we explore an alternative way of exploiting tactile information to predict grasp stability by leveraging graph-like representations of tactile data, which preserve the actual spatial arrangement of the sensor's taxels and their locality. In experimentation, we trained a Graph Neural Network to classify grasps as either stable or slippery. To train such a network and prove its predictive capabilities for the problem at hand, we captured a novel dataset of approximately 5000 three-fingered grasps across 41 objects for training and 1000 grasps with 10 unknown objects for testing. Our experiments prove that this novel approach can be effectively used to predict grasp stability.
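A graph-like representation of tactile data needs an edge list that links neighbouring taxels. The sketch below builds one by connecting each taxel to its k nearest neighbours. The k-nearest-neighbour rule, the function name, and the taxel coordinates are assumptions for illustration; the abstract does not say how the graph connectivity was defined.

```python
import numpy as np


def knn_edges(positions, k):
    """Undirected edges linking each taxel to its k nearest neighbours.

    positions: (n, d) array of taxel coordinates (hypothetical layout).
    Returns a sorted list of (i, j) index pairs with i < j.
    """
    positions = np.asarray(positions, dtype=float)
    diff = positions[:, None, :] - positions[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, np.inf)  # a taxel is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    edges = set()
    for i, row in enumerate(neighbours):
        for j in row:
            edges.add((min(i, int(j)), max(i, int(j))))
    return sorted(edges)


# Three taxels on a line at x = 0, 1 and 3 (made-up coordinates).
print(knn_edges([[0.0, 0.0], [1.0, 0.0], [3.0, 0.0]], k=1))
```

The per-taxel pressure readings would then serve as node features, with this edge list fixed across all samples from the same sensor.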

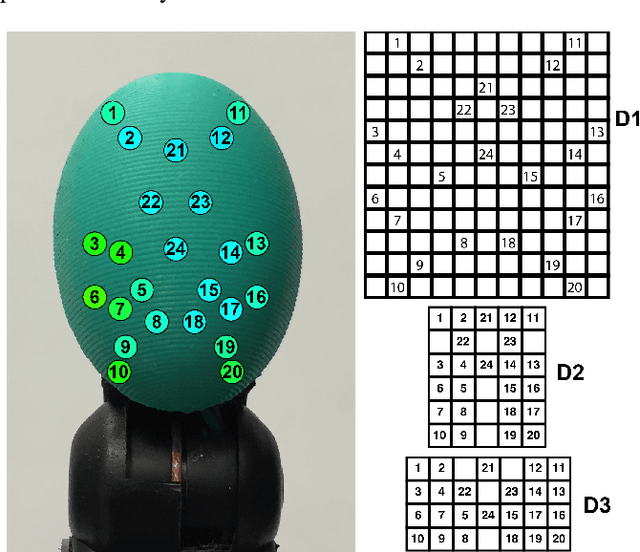

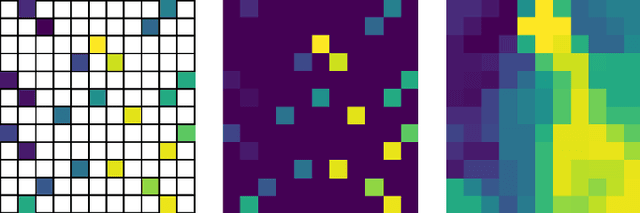

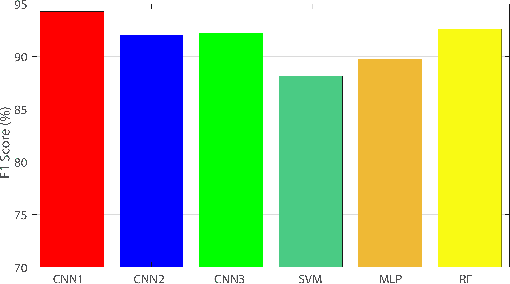

Non-Matrix Tactile Sensors: How Can Be Exploited Their Local Connectivity For Predicting Grasp Stability?

Sep 14, 2018

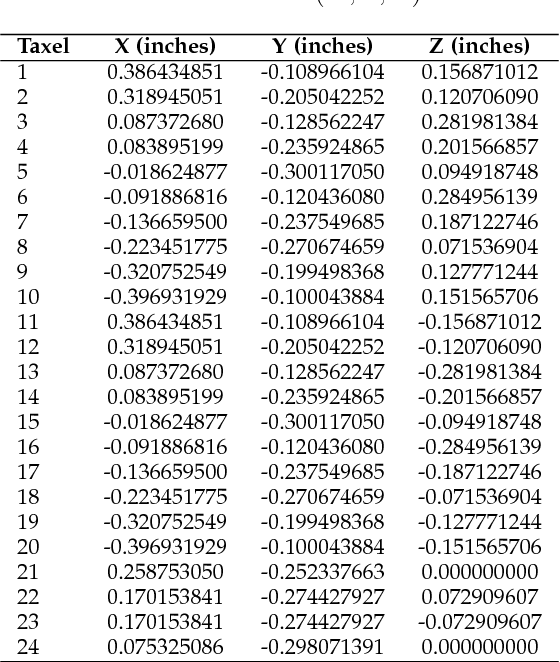

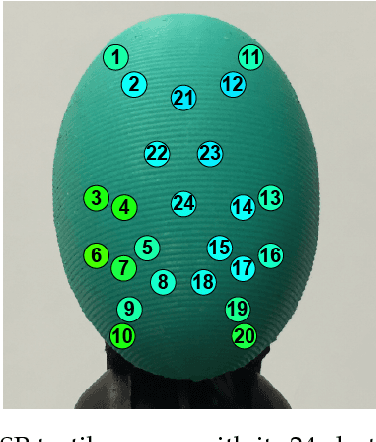

Abstract: Tactile sensors supply useful information during the interaction with an object that can be used for assessing the stability of a grasp. Most of the previous works on this topic processed tactile readings as signals by calculating hand-picked features. Some of them have processed these readings as images, calculating characteristics on matrix-like sensors. In this work, we explore how non-matrix sensors (sensors with taxels not arranged exactly in a matrix) can be processed as tactile images as well. In addition, we prove that they can be used for predicting grasp stability by training a Convolutional Neural Network (CNN) with them. We captured over 2500 real three-fingered grasps on 41 everyday objects to train a CNN that exploited the local connectivity inherent in the non-matrix tactile sensors, achieving a 94.2% F1-score on predicting stability.
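One simple way to treat a non-matrix sensor as a tactile image is to assign each taxel to a cell of a small grid and leave unused cells at zero. The sketch below shows that idea only. The lookup-table mapping, the function name, and the example layout are hypothetical; the abstract does not describe the mapping actually used.

```python
import numpy as np


def taxels_to_image(readings, cells, shape):
    """Place taxel readings into a 2D image using a fixed lookup table.

    readings: one value per taxel.
    cells: one (row, col) grid cell per taxel (hypothetical layout).
    shape: (rows, cols) of the output image; unassigned cells stay 0.
    """
    image = np.zeros(shape, dtype=float)
    for value, (row, col) in zip(readings, cells):
        image[row, col] = value
    return image


# Three taxels mapped onto a 2x3 grid (made-up values and layout).
image = taxels_to_image([0.5, 1.0, 0.2], [(0, 1), (1, 0), (1, 2)], (2, 3))
print(image)
```

Keeping physically adjacent taxels in adjacent cells is what lets a CNN's convolution kernels exploit local connectivity.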
