Santiago T. Puente

Transferability of labels between multilens cameras

Jan 20, 2025

Abstract: In this work, a new method for automatically extending Bounding Box (BB) and mask labels across different channels on multilens cameras is presented. For that purpose, the proposed method combines the well-known phase correlation method with a refinement process. In the first step, images are aligned by locating the intensity peak obtained in the spatial domain after performing cross-correlation in the frequency domain. The second step obtains the best possible transformation through an iterative process that maximises the IoU (Intersection over Union) metric. Results show that, with this method, labels can be transferred across the different lenses of a camera with an accuracy above 90% in most cases, with the whole process taking just 65 ms. Once the transformations are obtained, artificial RGB images are generated and labeled so that this information can be transferred to each of the other lenses. This work will allow users to apply this type of camera in fields beyond satellite or medical imagery, making it possible to label even objects that are invisible in the visible spectrum.
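The refinement step described in this abstract can be illustrated with a small sketch. It is not the authors' implementation: it assumes axis-aligned boxes given as `(x1, y1, x2, y2)`, a translation-only transformation, a coarse shift already available (for example from phase correlation), and an exhaustive local search in place of whatever iterative scheme the paper uses. The names `iou`, `shift_box` and `refine_shift` are hypothetical.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def shift_box(box, dx, dy):
    """Translate a box by (dx, dy) pixels."""
    return (box[0] + dx, box[1] + dy, box[2] + dx, box[3] + dy)


def refine_shift(src_box, ref_box, init=(0, 0), radius=3):
    """Search integer shifts within `radius` of `init`, keeping the best IoU.

    Assumption: `init` is a coarse estimate and `ref_box` is a reference
    label in the target channel; both are illustrative inputs.
    """
    best = init
    best_score = iou(shift_box(src_box, *init), ref_box)
    for dx in range(init[0] - radius, init[0] + radius + 1):
        for dy in range(init[1] - radius, init[1] + radius + 1):
            score = iou(shift_box(src_box, dx, dy), ref_box)
            if score > best_score:
                best, best_score = (dx, dy), score
    return best, best_score
```

For instance, `refine_shift((10, 10, 50, 40), (14, 8, 54, 38), init=(3, -1))` starts from a shift that is one pixel off in each axis and recovers the shift that makes the two boxes coincide.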

QDGset: A Large Scale Grasping Dataset Generated with Quality-Diversity

Oct 03, 2024

Abstract: Recent advances in AI have led to significant results in robotic learning, but skills like grasping remain partially solved. Many recent works exploit synthetic grasping datasets to learn to grasp unknown objects. However, those datasets were generated using simple grasp sampling methods using priors. Recently, Quality-Diversity (QD) algorithms have been proven to make grasp sampling significantly more efficient. In this work, we extend QDG-6DoF, a QD framework for generating object-centric grasps, to scale up the production of synthetic grasping datasets. We propose a data augmentation method that combines the transformation of object meshes with transfer learning from previous grasping repertoires. The conducted experiments show that this approach reduces the number of required evaluations per discovered robust grasp by up to 20%. We used this approach to generate QDGset, a dataset of 6DoF grasp poses that contains about 3.5 and 4.5 times more grasps and objects, respectively, than the previous state-of-the-art. Our method allows anyone to easily generate data, eventually contributing to a large-scale collaborative dataset of synthetic grasps.

Vision and Tactile Robotic System to Grasp Litter in Outdoor Environments

Jul 11, 2024

Abstract: The accumulation of litter is increasing in many places and is consequently becoming a problem that must be dealt with. In this paper, we present a robotic manipulator system to collect litter in outdoor environments. This system has three functionalities. Firstly, it uses colour images to detect and recognise litter comprising different materials. Secondly, depth data are combined with the pixels of waste objects to compute a 3D location and segment three-dimensional point clouds of the litter items in the scene. A grasp in 3 Degrees of Freedom (DoFs) is then estimated for a robot arm with a gripper from the segmented cloud of each instance of waste. Finally, two tactile-based algorithms are implemented and employed to provide the gripper with a sense of touch. This work uses two low-cost visual-based tactile sensors at the fingertips. One of the algorithms detects contact (obtained from tactile images) between the gripper and solid waste, while the other detects slippage in order to prevent grasped objects from falling. Our proposal was successfully tested through extensive experimentation with objects varying in size, texture, geometry and material in different outdoor environments (a tiled pavement, a surface of stone/soil, and grass). Our system achieved an average score of 94% for the detection and Collection Success Rate (CSR) as regards its overall performance, and of 80% for the collection of items of litter at the first attempt.
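Combining depth data with the pixels of a detected object to obtain a 3D location is commonly done with the pinhole camera model. The sketch below is only an illustration under that assumption; the abstract does not state the camera model, and the intrinsics `fx`, `fy`, `cx`, `cy` and the function names are hypothetical.

```python
def backproject(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) with metric depth into camera coordinates.

    Assumes an ideal pinhole camera with focal lengths (fx, fy) and
    principal point (cx, cy), all in pixels, and no lens distortion.
    """
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def object_cloud(pixels_with_depth, fx, fy, cx, cy):
    """Turn the (u, v, depth) samples of one detected object into 3D points."""
    return [backproject(u, v, d, fx, fy, cx, cy)
            for u, v, d in pixels_with_depth if d > 0]  # skip invalid depth


def centroid(points):
    """Mean 3D position of a non-empty point list."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))
```

The centroid of the resulting cloud gives one possible 3D location for the item; a real system would typically also filter depth outliers before averaging.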

Visual Servoing NMPC Applied to UAVs for Photovoltaic Array Inspection

Nov 14, 2023

Abstract: The photovoltaic (PV) industry is seeing a significant shift toward large-scale solar plants, where traditional inspection methods have proven to be time-consuming and costly. Currently, the predominant approach to PV inspection using unmanned aerial vehicles (UAVs) is based on photogrammetry. However, the photogrammetry approach presents limitations, such as an increased amount of useless data during flights, potential issues related to image resolution, and the detection process during high-altitude flights. In this work, we develop a visual servoing control system applied to a UAV with dynamic compensation using nonlinear model predictive control (NMPC), capable of accurately tracking the middle of the underlying PV array at different frontal velocities and height constraints, ensuring the acquisition of detailed images during low-altitude flights. The visual servoing controller is based on the extraction of features from RGB-D images and on a Kalman filter to estimate the edges of the PV arrays. Furthermore, this work demonstrates the proposal in both simulated and real-world environments using a commercial aerial vehicle (DJI Matrice 100), with the purpose of showcasing the results of the architecture. Our approach is available to the scientific community at: https://github.com/EPVelasco/VisualServoing_NMPC

LiLO: Lightweight and low-bias LiDAR Odometry method based on spherical range image filtering

Nov 13, 2023

Abstract: In unstructured outdoor environments, robotics requires accurate and efficient odometry with low computational time. Existing low-bias LiDAR odometry methods are often computationally expensive. To address this problem, we present a lightweight LiDAR odometry method that converts unorganized point cloud data into a spherical range image (SRI) and filters out surface, edge, and ground features in the image plane. This substantially reduces computation time and the required features for odometry estimation in LOAM-based algorithms. Our odometry estimation method does not rely on global maps or loop closure algorithms, which further reduces computational costs. Experimental results show a translation and rotation error of 0.86% and 0.0036°/m on the KITTI dataset with an average runtime of 78 ms. In addition, we tested the method with our own data, obtaining an average closed-loop error of 0.8 m and a runtime of 27 ms over eight loops covering 3.5 km.
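Converting an unorganized point cloud into a spherical range image can be sketched as follows. This is a generic projection, not the LiLO code: the image size, the vertical field of view and the rule of keeping the nearest return per pixel are assumptions chosen for illustration, and `project_to_sri` is a hypothetical name.

```python
import math


def project_to_sri(points, rows=16, cols=360, fov_up_deg=15.0, fov_down_deg=-15.0):
    """Project (x, y, z) points into a rows x cols spherical range image.

    Each pixel stores the smallest range that falls into it, or None.
    Rows index elevation (top row = fov_up), columns index azimuth.
    """
    fov_up = math.radians(fov_up_deg)
    fov_down = math.radians(fov_down_deg)
    fov = fov_up - fov_down
    image = [[None] * cols for _ in range(rows)]
    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue  # the sensor origin has no direction
        azimuth = math.atan2(y, x)    # in [-pi, pi]
        elevation = math.asin(z / r)  # in [-pi/2, pi/2]
        if not (fov_down <= elevation <= fov_up):
            continue  # outside the assumed vertical field of view
        col = int((azimuth + math.pi) / (2 * math.pi) * cols) % cols
        row = min(rows - 1, int((fov_up - elevation) / fov * rows))
        if image[row][col] is None or r < image[row][col]:
            image[row][col] = r
    return image
```

Once the cloud is organized this way, neighbouring pixels correspond to neighbouring beams, so feature filtering can work on a 2D grid instead of searching the raw cloud.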

ViKi-HyCo: A Hybrid-Control approach for complex car-like maneuvers

Nov 13, 2023

Abstract: This paper presents ViKi-HyCo (Visual servoing and Kinematic Hybrid-Controller), an approach that generates the maneuvers needed for the complex positioning of a non-holonomic mobile robot in outdoor environments, towards a target point obtained from object detection, by combining image-based visual servoing (IBVS) with a kinematic controller. The method avoids the problems that arise when the visual servoing controller loses the visual object detection features by switching to the kinematic controller. We also present object localization for outdoor environments that fuses LiDAR and RGB-D cameras to estimate the spatial location of a target point for the kinematic controller, and that also allows the dynamic calculation of a desired bounding box of the detected object for computing velocities in the visual servoing controller. The presented approach does not require an object tracking algorithm and is applicable to any visually tracking robotic task where the kinematic model is known. The Hybrid-Control presents an error of 0.0428 ± 0.0467 m in the X-axis and 0.0515 ± 0.0323 m in the Y-axis at the end of a complete positioning task.

Detection and depth estimation for domestic waste in outdoor environments by sensors fusion

Nov 08, 2022

Abstract: In this work, we estimate the depth at which domestic waste is located in space from a mobile robot in outdoor scenarios. As we perform this calculation over a broad range (0.3 - 6.0 m), we use RGB-D camera and LiDAR fusion. With this aim and range, we compare several methods, such as average, nearest, median and center point, applied to the points that fall inside a reduced or non-reduced Bounding Box (BB). These BBs are obtained from segmentation and detection methods that are representative of these techniques, such as Yolact, SOLO, You Only Look Once (YOLO)v5, YOLOv6 and YOLOv7. Results show that applying a detection method with the average technique and a BB reduction of 40% returns the same output as segmenting the object and applying the average method. Moreover, the detection method is faster and lighter than the segmentation one. The median error in the conducted experiments was 0.0298 ± 0.0544 m.
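The idea of estimating depth from the points inside a reduced bounding box can be sketched as below. This is an illustration, not the paper's code: it assumes the 40% reduction is applied to the box width and height about its center (the abstract does not say whether it refers to sides or area), and that depth samples arrive as `(u, v, depth_m)` tuples. `shrink_box` and `box_depth` are hypothetical names.

```python
from statistics import mean, median


def shrink_box(box, reduction):
    """Shrink (x1, y1, x2, y2) about its center.

    Assumption: reduction=0.4 removes 40% of the width and of the height.
    """
    x1, y1, x2, y2 = box
    dx = (x2 - x1) * reduction / 2
    dy = (y2 - y1) * reduction / 2
    return (x1 + dx, y1 + dy, x2 - dx, y2 - dy)


def box_depth(samples, box, reduction=0.4, method="average"):
    """Estimate object depth from the samples inside the (reduced) box."""
    x1, y1, x2, y2 = shrink_box(box, reduction)
    depths = [d for u, v, d in samples if x1 <= u <= x2 and y1 <= v <= y2]
    if not depths:
        return None  # no measurement landed inside the box
    if method == "average":
        return mean(depths)
    if method == "median":
        return median(depths)
    if method == "nearest":
        return min(depths)
    raise ValueError(f"unknown method: {method}")
```

Shrinking the box discards samples near its border, which are more likely to belong to the background than to the object, so a plain average becomes less sensitive to them.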

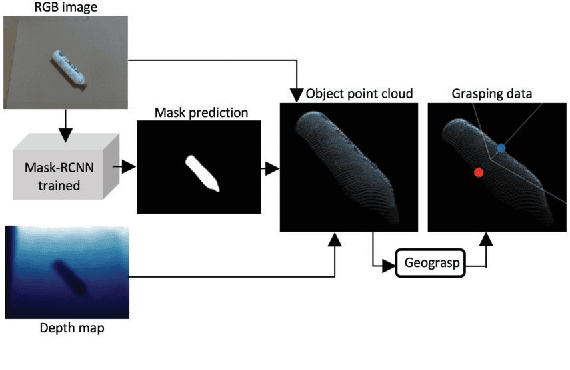

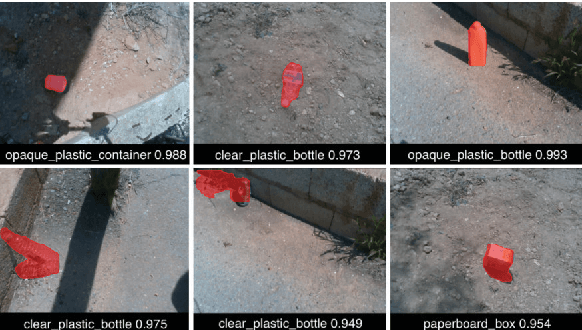

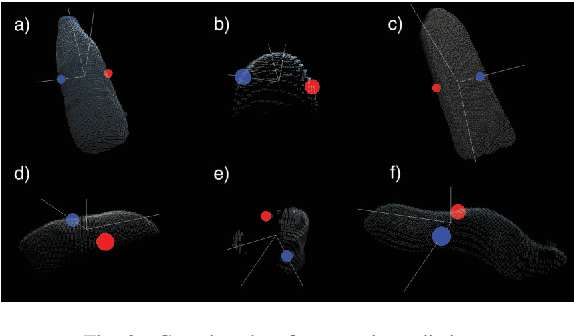

Domestic waste detection and grasping points for robotic picking up

May 14, 2021

Abstract: This paper presents an AI system applied to waste location and robotic grasping. The experimental setup is based on a parameter study to train a deep-learning network based on Mask-RCNN to locate waste in indoor and outdoor environments, using five different classes and generating a new waste dataset. Initially, the AI system obtains the RGBD data of the environment and then detects objects using the neural network. Next, the 3D object shape is computed from the network result and the depth channel. Finally, the shape is used to compute grasping points for a robot arm with a two-finger gripper. The objective is to classify the waste into groups to improve a recycling strategy.
