Nicholas Mattei

Social Mechanism Design: A Low-Level Introduction

Nov 15, 2022

Abstract:How do we deal with the fact that agents have preferences over both decision outcomes and the rules or procedures used to make decisions? If we create rules for aggregating preferences over rules, it would appear that we run into infinite regress with preferences and rules at successively higher "levels." The starting point of our analysis is the claim that infinite regress should not be a problem in practice, as any such preferences will necessarily be bounded in complexity and structured coherently in accordance with some (possibly latent) normative principles. Our core contributions are (1) the identification of simple, intuitive preference structures at low levels that can be generalized to form the building blocks of preferences at higher levels, and (2) the development of algorithms for maximizing the number of agents with such low-level preferences who will "accept" a decision. We analyze algorithms for acceptance maximization in two different domains: asymmetric dichotomous choice and constitutional amendment. In both settings we study the worst-case performance of the appropriate algorithms, and reveal circumstances under which universal acceptance is possible. In particular, we show that constitutional amendment procedures proposed recently by Abramowitz, Shapiro, and Talmon (2021) can achieve universal acceptance.

Who Pays? Personalization, Bossiness and the Cost of Fairness

Sep 08, 2022

Abstract:Fairness-aware recommender systems that have a provider-side fairness concern seek to ensure that protected group(s) of providers have a fair opportunity to promote their items or products. There is a "cost of fairness" borne by the consumer side of the interaction when such a solution is implemented. This consumer-side cost raises its own questions of fairness, particularly when personalization is used to control the impact of the fairness constraint. In adopting a personalized approach to the fairness objective, researchers may be opening their systems up to strategic behavior on the part of users. This type of incentive has been studied in the computational social choice literature under the terminology of "bossiness". The concern is that a bossy user may be able to shift the cost of fairness to others, improving their own outcomes and worsening those for others. This position paper introduces the concept of bossiness, shows its application in fairness-aware recommendation and discusses strategies for reducing this strategic incentive.

Towards Group Learning: Distributed Weighting of Experts

Jun 03, 2022

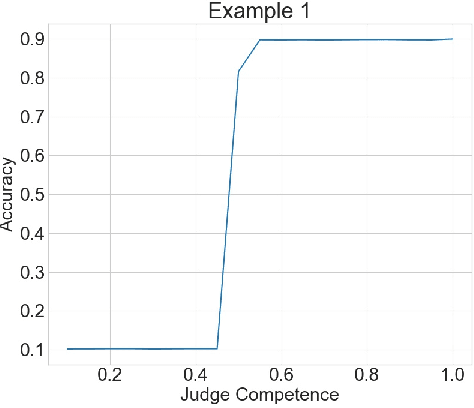

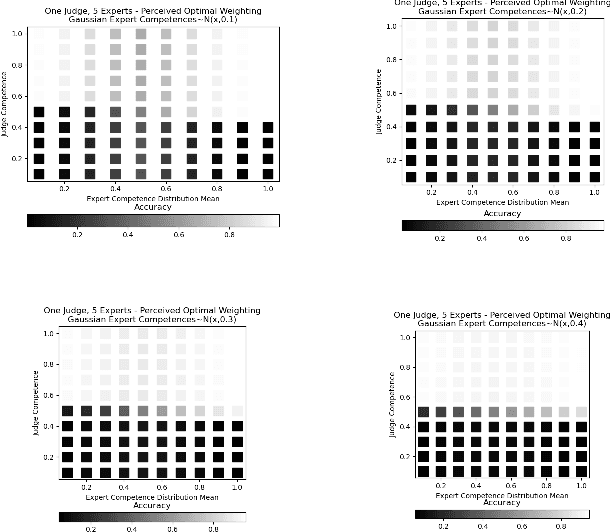

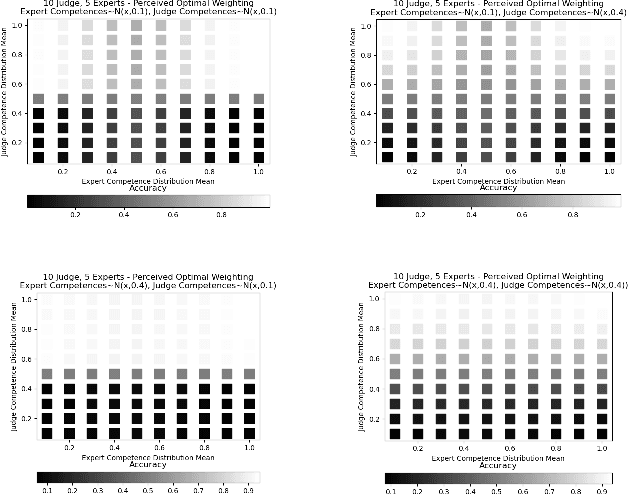

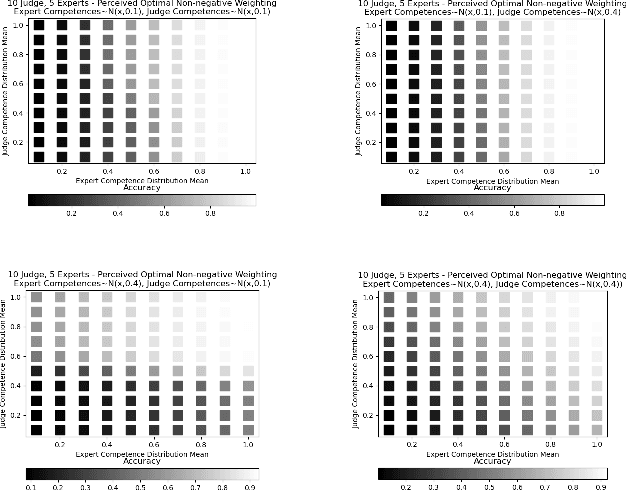

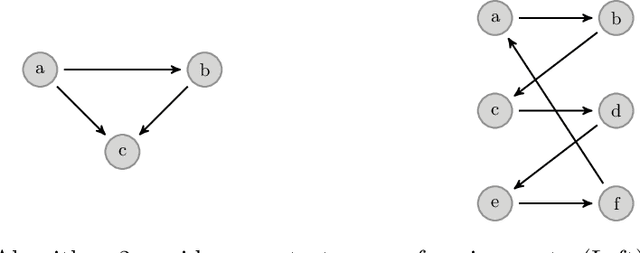

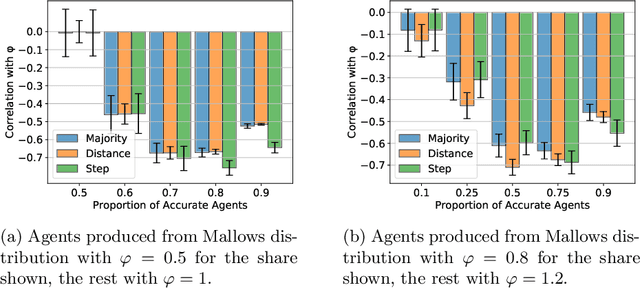

Abstract:Aggregating signals from a collection of noisy sources is a fundamental problem in many domains including crowd-sourcing, multi-agent planning, sensor networks, signal processing, voting, ensemble learning, and federated learning. The core question is how to aggregate signals from multiple sources (e.g. experts) in order to reveal an underlying ground truth. While a full answer depends on the type of signal, correlation of signals, and desired output, a problem common to all of these applications is that of differentiating sources based on their quality and weighting them accordingly. It is often assumed that this differentiation and aggregation is done by a single, accurate central mechanism or agent (e.g. judge). We complicate this model in two ways. First, we investigate both the setting with a single judge and the setting with multiple judges. Second, given this multi-agent interaction of judges, we investigate various constraints on the judges' reporting space. We build on known results for the optimal weighting of experts and prove that an ensemble of sub-optimal mechanisms can perform optimally under certain conditions. We then show empirically that the ensemble approximates the performance of the optimal mechanism under a broader range of conditions.
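
To make the idea of "weighting sources by their quality" concrete, here is a minimal sketch of a weighted majority vote. It assumes binary signals, independent experts, and known accuracies, and it uses the classical log-odds weight log(p / (1 - p)). The abstract does not say which weighting result the paper builds on, so this choice and the names `log_odds_weight` and `weighted_majority` are illustrative assumptions only.

```python
import math


def log_odds_weight(accuracy):
    """Weight for an expert who is correct with probability `accuracy`.

    Assumes 0 < accuracy < 1. The log-odds form is the classical choice for
    independent binary experts; it is an assumption of this sketch.
    """
    return math.log(accuracy / (1.0 - accuracy))


def weighted_majority(votes, accuracies):
    """Aggregate +1/-1 votes using log-odds weights.

    Returns +1 if the weighted sum is non-negative, otherwise -1.
    """
    score = sum(log_odds_weight(p) * v for v, p in zip(votes, accuracies))
    return 1 if score >= 0 else -1


# One strong expert disagrees with two weak ones.
votes = [1, -1, -1]
decision = weighted_majority(votes, [0.9, 0.6, 0.6])
```

In this example the single expert with accuracy 0.9 outweighs the two experts with accuracy 0.6, so the weighted decision differs from what an unweighted majority vote would return.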

Learning Behavioral Soft Constraints from Demonstrations

Feb 21, 2022

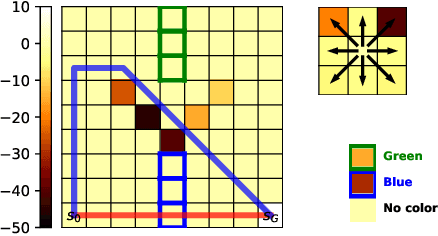

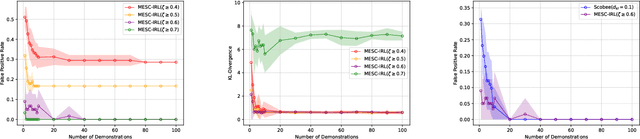

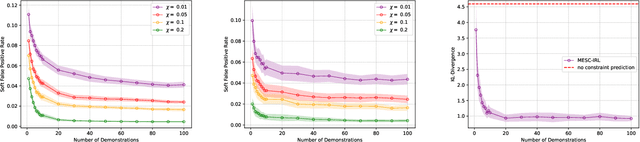

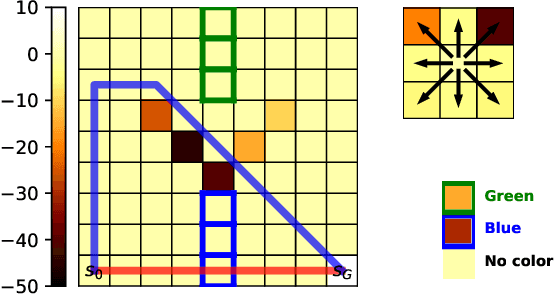

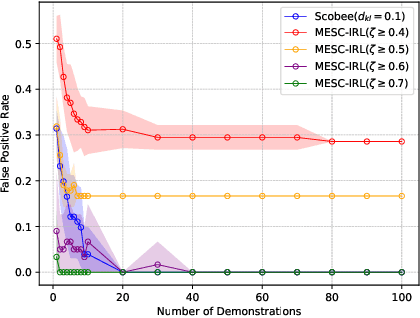

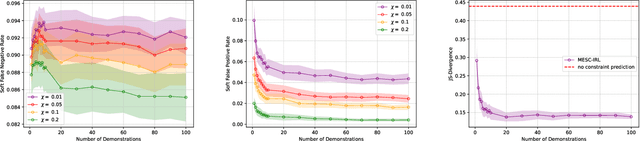

Abstract:Many real-life scenarios require humans to make difficult trade-offs: do we always follow all the traffic rules or do we violate the speed limit in an emergency? These scenarios force us to evaluate the trade-off between collective rules and norms and our own personal objectives and desires. To create effective AI-human teams, we must equip AI agents with a model of how humans make these trade-offs in complex environments when there are implicit and explicit rules and constraints. Agents equipped with these models will be able to mirror human behavior and/or to draw human attention to situations where decision making could be improved. To this end, we propose a novel inverse reinforcement learning (IRL) method: Max Entropy Inverse Soft Constraint IRL (MESC-IRL), for learning implicit hard and soft constraints over states, actions, and state features from demonstrations in deterministic and non-deterministic environments modeled as Markov Decision Processes (MDPs). Our method enables agents to implicitly learn human constraints and desires without the need for explicit modeling by the agent designer and to transfer these constraints between environments. Our novel method generalizes prior work which only considered deterministic hard constraints and achieves state-of-the-art performance.

When Is It Acceptable to Break the Rules? Knowledge Representation of Moral Judgement Based on Empirical Data

Jan 19, 2022

Abstract:One of the most remarkable things about the human moral mind is its flexibility. We can make moral judgments about cases we have never seen before. We can decide that pre-established rules should be broken. We can invent novel rules on the fly. Capturing this flexibility is one of the central challenges in developing AI systems that can interpret and produce human-like moral judgment. This paper details the results of a study of real-world decision makers who judge whether it is acceptable to break a well-established norm: "no cutting in line." We gather data on how human participants judge the acceptability of line-cutting in a range of scenarios. Then, in order to effectively embed these reasoning capabilities into a machine, we propose a method for modeling them using a preference-based structure, which captures a novel modification to standard "dual process" theories of moral judgment.

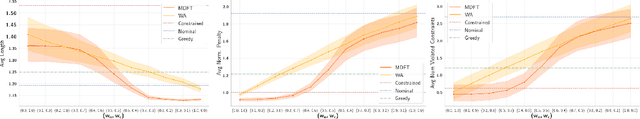

Combining Fast and Slow Thinking for Human-like and Efficient Navigation in Constrained Environments

Jan 18, 2022

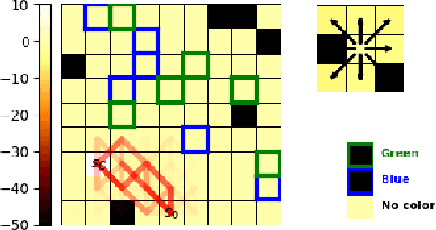

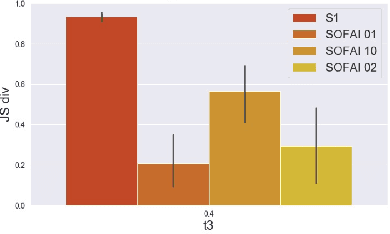

Abstract:Current AI systems lack several important human capabilities, such as adaptability, generalizability, self-control, consistency, common sense, and causal reasoning. We believe that existing cognitive theories of human decision making, such as the thinking fast and slow theory, can provide insights on how to advance AI systems towards some of these capabilities. In this paper, we propose a general architecture that is based on fast/slow solvers and a metacognitive component. We then present experimental results on the behavior of an instance of this architecture, for AI systems that make decisions about navigating in a constrained environment. We show how combining the fast and slow decision modalities allows the system to evolve over time and gradually pass from slow to fast thinking with enough experience, and that this greatly helps in decision quality, resource consumption, and efficiency.

Thinking Fast and Slow in AI: the Role of Metacognition

Oct 05, 2021

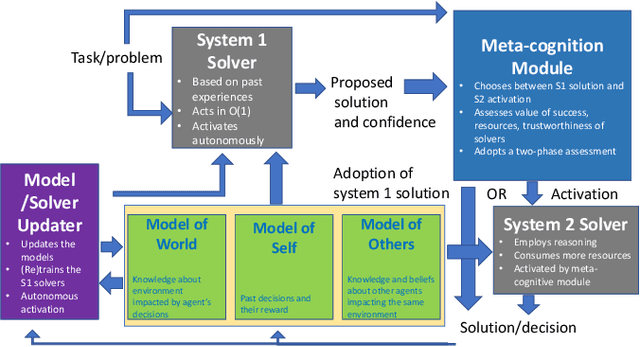

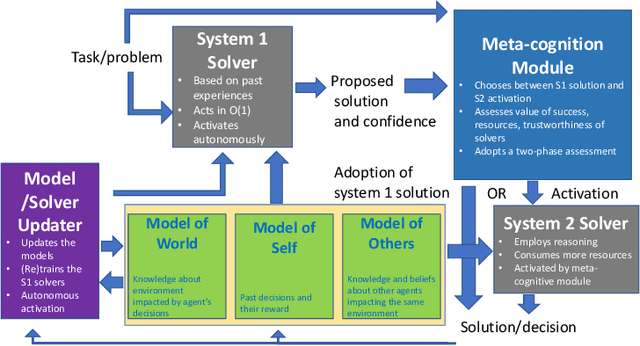

Abstract:AI systems have seen dramatic advancement in recent years, bringing many applications that pervade our everyday life. However, we are still mostly seeing instances of narrow AI: many of these recent developments are typically focused on a very limited set of competencies and goals, e.g., image interpretation, natural language processing, classification, prediction, and many others. Moreover, while these successes can be attributed to improved algorithms and techniques, they are also tightly linked to the availability of huge datasets and computational power. State-of-the-art AI still lacks many capabilities that would naturally be included in a notion of (human) intelligence. We argue that a better study of the mechanisms that allow humans to have these capabilities can help us understand how to imbue AI systems with these competencies. We focus especially on D. Kahneman's theory of thinking fast and slow, and we propose a multi-agent AI architecture where incoming problems are solved by either system 1 (or "fast") agents, which react by exploiting only past experience, or by system 2 (or "slow") agents, which are deliberately activated when there is a need to reason and search for optimal solutions beyond what is expected from the system 1 agent. Both kinds of agents are supported by a model of the world, containing domain knowledge about the environment, and a model of "self", containing information about past actions of the system and solvers' skills.

Making Human-Like Trade-offs in Constrained Environments by Learning from Demonstrations

Sep 22, 2021

Abstract:Many real-life scenarios require humans to make difficult trade-offs: do we always follow all the traffic rules or do we violate the speed limit in an emergency? These scenarios force us to evaluate the trade-off between collective norms and our own personal objectives. To create effective AI-human teams, we must equip AI agents with a model of how humans make trade-offs in complex, constrained environments. These agents will be able to mirror human behavior or to draw human attention to situations where decision making could be improved. To this end, we propose a novel inverse reinforcement learning (IRL) method for learning implicit hard and soft constraints from demonstrations, enabling agents to quickly adapt to new settings. In addition, learning soft constraints over states, actions, and state features allows agents to transfer this knowledge to new domains that share similar aspects. We then use the constraint learning method to implement a novel system architecture that leverages a cognitive model of human decision making, multi-alternative decision field theory (MDFT), to orchestrate competing objectives. We evaluate the resulting agent on trajectory length, number of violated constraints, and total reward, demonstrating that our agent architecture is both general and achieves strong performance. Thus we are able to capture and replicate human-like trade-offs from demonstrations in environments where constraints are not explicit.

Peer Selection with Noisy Assessments

Jul 21, 2021

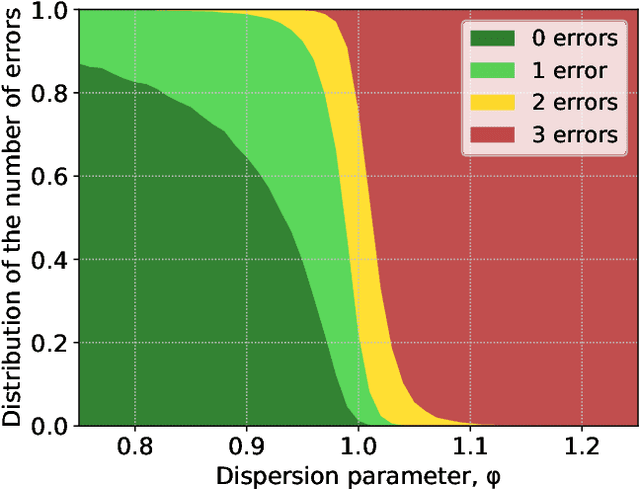

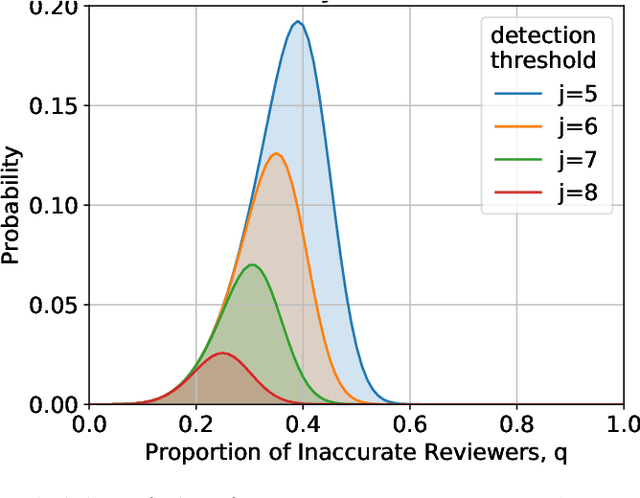

Abstract:In the peer selection problem a group of agents must select a subset of themselves as winners for, e.g., peer-reviewed grants or prizes. Here, we take a Condorcet view of this aggregation problem, i.e., that there is a ground-truth ordering over the agents and we wish to select the best set of agents, subject to the noisy assessments of the peers. Given this model, some agents may be unreliable, while others might be self-interested, attempting to influence the outcome in their favour. In this paper we extend PeerNomination, the most accurate peer reviewing algorithm to date, into WeightedPeerNomination, which is able to handle noisy and inaccurate agents. To do this, we explicitly formulate assessors' reliability weights in a way that does not violate strategyproofness, and use this information to reweight their scores. We show analytically that a weighting scheme can improve the overall accuracy of the selection significantly. Finally, we implement several instances of reweighting methods and show empirically that our methods are robust in the face of noisy assessments.

Modeling Voters in Multi-Winner Approval Voting

Dec 04, 2020

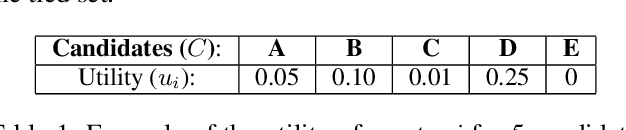

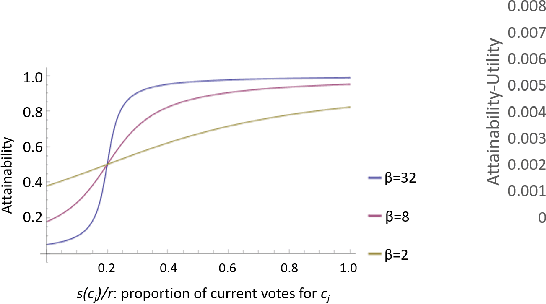

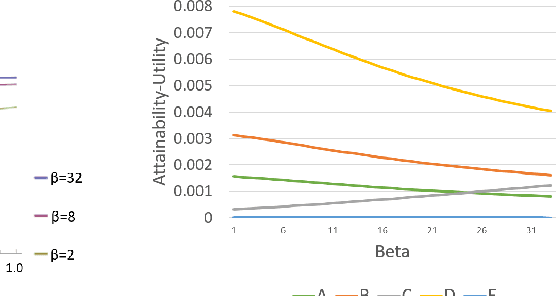

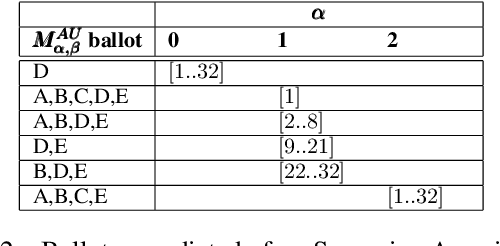

Abstract:In many real-world situations, collective decisions are made using voting and, in scenarios such as committee or board elections, employing voting rules that return multiple winners. In multi-winner approval voting (AV), an agent submits a ballot consisting of approvals for as many candidates as they wish, and winners are chosen by tallying up the votes and choosing the top-$k$ candidates receiving the most approvals. In many scenarios, an agent may manipulate the ballot they submit in order to achieve a better outcome by voting in a way that does not reflect their true preferences. In complex and uncertain situations, agents may use heuristics instead of incurring the additional effort required to compute the manipulation which most favors them. In this paper, we examine voting behavior in single-winner and multi-winner approval voting scenarios with varying degrees of uncertainty using behavioral data obtained from Mechanical Turk. We find that people generally manipulate their vote to obtain a better outcome, but often do not identify the optimal manipulation. There are a number of predictive models of agent behavior in the COMSOC and psychology literature that are based on cognitively plausible heuristic strategies. We show that the existing approaches do not adequately model real-world data. We propose a novel model that takes into account the size of the winning set and human cognitive constraints, and demonstrate that this model is more effective at capturing real-world behaviors in multi-winner approval voting scenarios.
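
The multi-winner approval voting rule described in this abstract (tally approvals, then take the top-$k$ candidates) can be sketched in a few lines. The function name `approval_winners` and the alphabetical tie-breaking are assumptions made for illustration; the abstract does not specify how ties are resolved.

```python
from collections import Counter


def approval_winners(ballots, k):
    """Return the k candidates with the most approvals.

    `ballots` is an iterable of sets of approved candidates. Ties are broken
    alphabetically, which is an assumption of this sketch. Candidates that
    receive no approvals never appear in the result.
    """
    tally = Counter()
    for ballot in ballots:
        tally.update(set(ballot))  # count each candidate once per ballot
    ranked = sorted(tally, key=lambda c: (-tally[c], c))
    return ranked[:k]


# Four voters, four candidates, committee of size 2.
ballots = [{"a", "b"}, {"b", "c"}, {"b"}, {"a", "d"}]
winners = approval_winners(ballots, 2)
```

Here candidate "b" has three approvals and "a" has two, so they form the winning committee. A manipulation in the sense of the abstract would correspond to a voter submitting a ballot that differs from their true approval set in order to change this outcome.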
