Nasir Memon

Fair GANs through model rebalancing with synthetic data

Aug 16, 2023Abstract:Deep generative models require large amounts of training data. This often poses a problem as the collection of datasets can be expensive and difficult, in particular datasets that are representative of the appropriate underlying distribution (e.g. demographic). This introduces biases in datasets which are further propagated in the models. We present an approach to mitigate biases in an existing generative adversarial network by rebalancing the model distribution. We do so by generating balanced data from an existing unbalanced deep generative model using latent space exploration and using this data to train a balanced generative model. Further, we propose a bias mitigation loss function that shows improvements in the fairness metric even when trained with unbalanced datasets. We show results for the Stylegan2 models while training on the FFHQ dataset for racial fairness and see that the proposed approach improves on the fairness metric by almost 5 times, whilst maintaining image quality. We further validate our approach by applying it to an imbalanced Cifar-10 dataset. Lastly, we argue that the traditionally used image quality metrics such as Frechet inception distance (FID) are unsuitable for bias mitigation problems.

Identity-Preserving Aging of Face Images via Latent Diffusion Models

Jul 17, 2023

Abstract:The performance of automated face recognition systems is inevitably impacted by the facial aging process. However, high quality datasets of individuals collected over several years are typically small in scale. In this work, we propose, train, and validate the use of latent text-to-image diffusion models for synthetically aging and de-aging face images. Our models succeed with few-shot training, and have the added benefit of being controllable via intuitive textual prompting. We observe high degrees of visual realism in the generated images while maintaining biometric fidelity measured by commonly used metrics. We evaluate our method on two benchmark datasets (CelebA and AgeDB) and observe significant reduction (~44%) in the False Non-Match Rate compared to existing state-of the-art baselines.

Zero-shot racially balanced dataset generation using an existing biased StyleGAN2

May 12, 2023Abstract:Facial recognition systems have made significant strides thanks to data-heavy deep learning models, but these models rely on large privacy-sensitive datasets. Unfortunately, many of these datasets lack diversity in terms of ethnicity and demographics, which can lead to biased models that can have serious societal and security implications. To address these issues, we propose a methodology that leverages the biased generative model StyleGAN2 to create demographically diverse images of synthetic individuals. The synthetic dataset is created using a novel evolutionary search algorithm that targets specific demographic groups. By training face recognition models with the resulting balanced dataset containing 50,000 identities per race (13.5 million images in total), we can improve their performance and minimize biases that might have been present in a model trained on a real dataset.

A Dataless FaceSwap Detection Approach Using Synthetic Images

Dec 05, 2022Abstract:Face swapping technology used to create "Deepfakes" has advanced significantly over the past few years and now enables us to create realistic facial manipulations. Current deep learning algorithms to detect deepfakes have shown promising results, however, they require large amounts of training data, and as we show they are biased towards a particular ethnicity. We propose a deepfake detection methodology that eliminates the need for any real data by making use of synthetically generated data using StyleGAN3. This not only performs at par with the traditional training methodology of using real data but it shows better generalization capabilities when finetuned with a small amount of real data. Furthermore, this also reduces biases created by facial image datasets that might have sparse data from particular ethnicities.

Gotcha: A Challenge-Response System for Real-Time Deepfake Detection

Oct 12, 2022

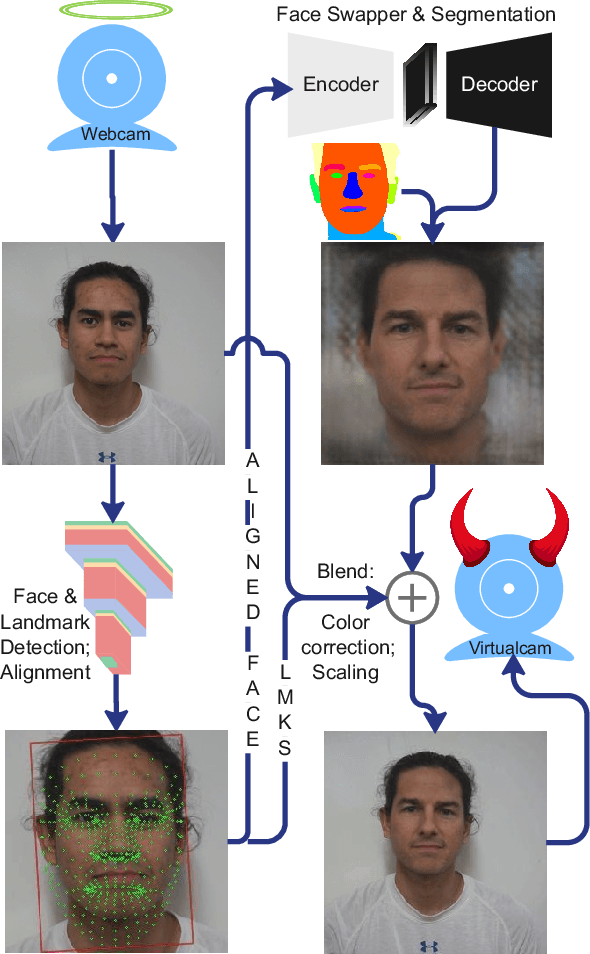

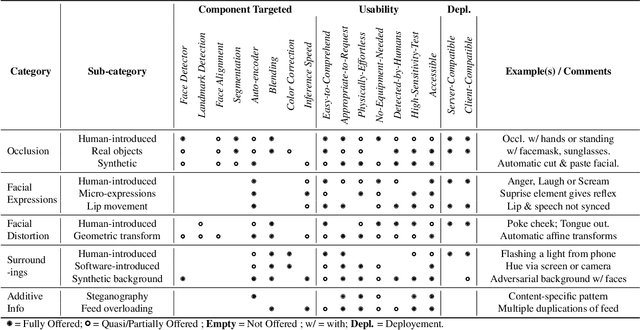

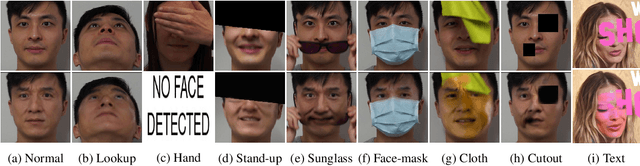

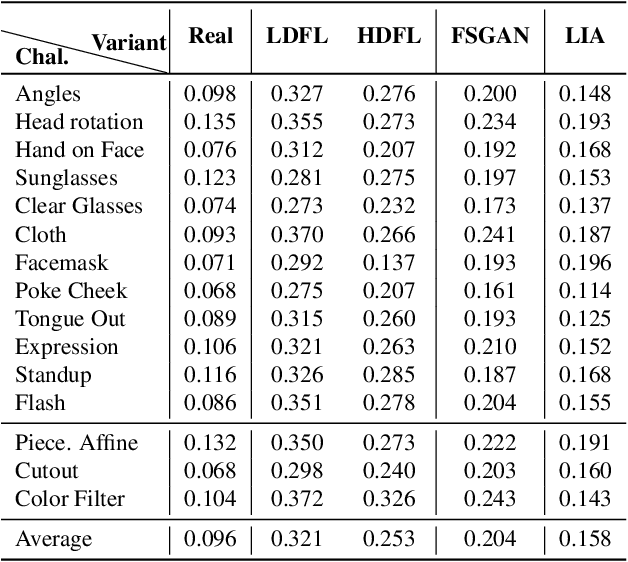

Abstract:The integrity of online video interactions is threatened by the widespread rise of AI-enabled high-quality deepfakes that are now deployable in real-time. This paper presents Gotcha, a real-time deepfake detection system for live video interactions. The core principle underlying Gotcha is the presentation of a specially chosen cascade of both active and passive challenges to video conference participants. Active challenges include inducing changes in face occlusion, face expression, view angle, and ambiance; passive challenges include digital manipulation of the webcam feed. The challenges are designed to target vulnerabilities in the structure of modern deepfake generators and create perceptible artifacts for the human eye while inducing robust signals for ML-based automatic deepfake detectors. We present a comprehensive taxonomy of a large set of challenge tasks, which reveals a natural hierarchy among different challenges. Our system leverages this hierarchy by cascading progressively more demanding challenges to a suspected deepfake. We evaluate our system on a novel dataset of live users emulating deepfakes and show that our system provides consistent, measurable degradation of deepfake quality, showcasing its promise for robust real-time deepfake detection when deployed in the wild.

Diversity and Novelty MasterPrints: Generating Multiple DeepMasterPrints for Increased User Coverage

Sep 11, 2022

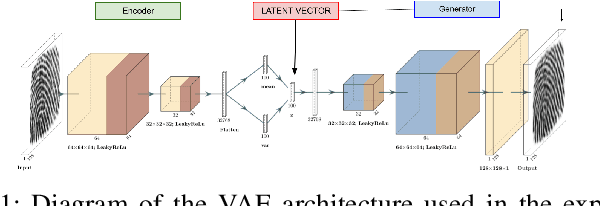

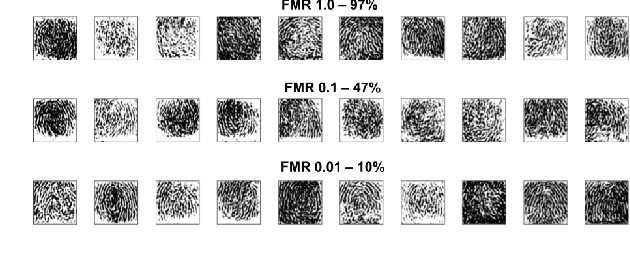

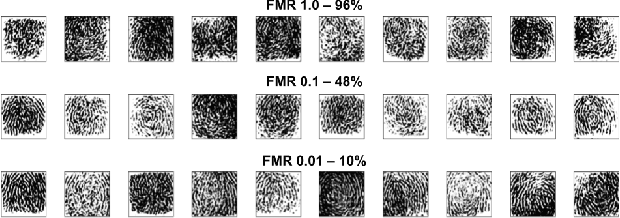

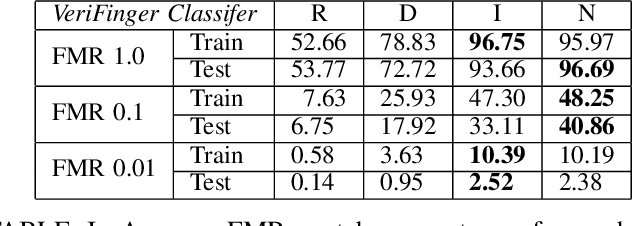

Abstract:This work expands on previous advancements in genetic fingerprint spoofing via the DeepMasterPrints and introduces Diversity and Novelty MasterPrints. This system uses quality diversity evolutionary algorithms to generate dictionaries of artificial prints with a focus on increasing coverage of users from the dataset. The Diversity MasterPrints focus on generating solution prints that match with users not covered by previously found prints, and the Novelty MasterPrints explicitly search for prints with more that are farther in user space than previous prints. Our multi-print search methodologies outperform the singular DeepMasterPrints in both coverage and generalization while maintaining quality of the fingerprint image output.

Dictionary Attacks on Speaker Verification

Apr 24, 2022

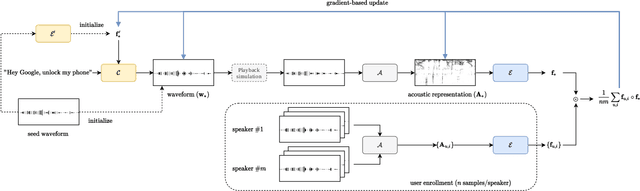

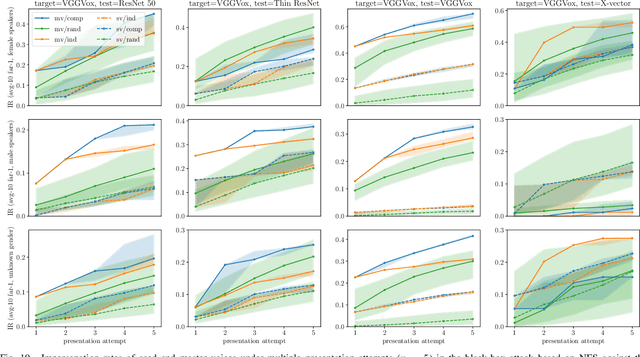

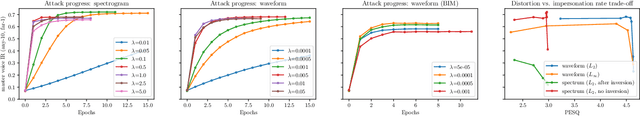

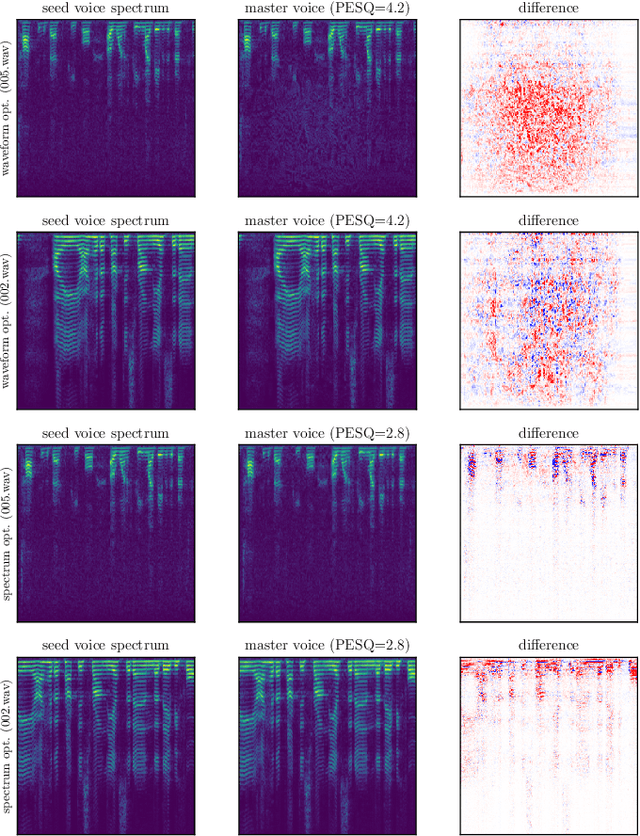

Abstract:In this paper, we propose dictionary attacks against speaker verification - a novel attack vector that aims to match a large fraction of speaker population by chance. We introduce a generic formulation of the attack that can be used with various speech representations and threat models. The attacker uses adversarial optimization to maximize raw similarity of speaker embeddings between a seed speech sample and a proxy population. The resulting master voice successfully matches a non-trivial fraction of people in an unknown population. Adversarial waveforms obtained with our approach can match on average 69% of females and 38% of males enrolled in the target system at a strict decision threshold calibrated to yield false alarm rate of 1%. By using the attack with a black-box voice cloning system, we obtain master voices that are effective in the most challenging conditions and transferable between speaker encoders. We also show that, combined with multiple attempts, this attack opens even more to serious issues on the security of these systems.

Hard-Attention for Scalable Image Classification

Feb 20, 2021

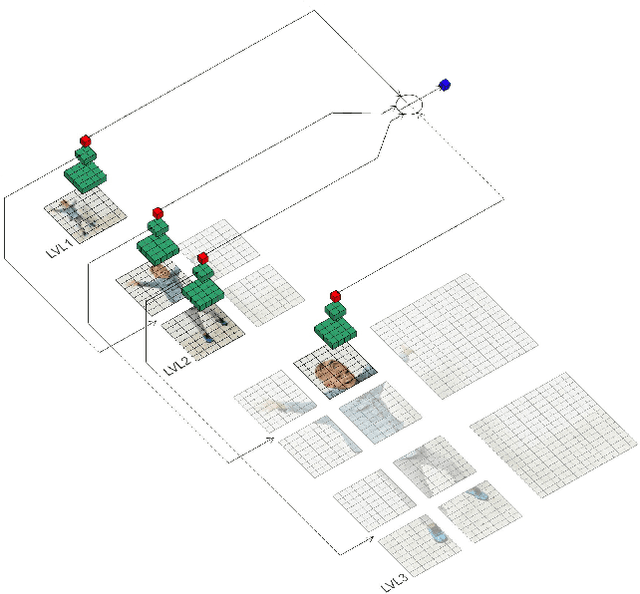

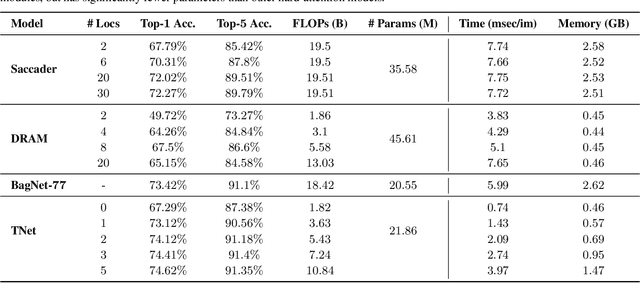

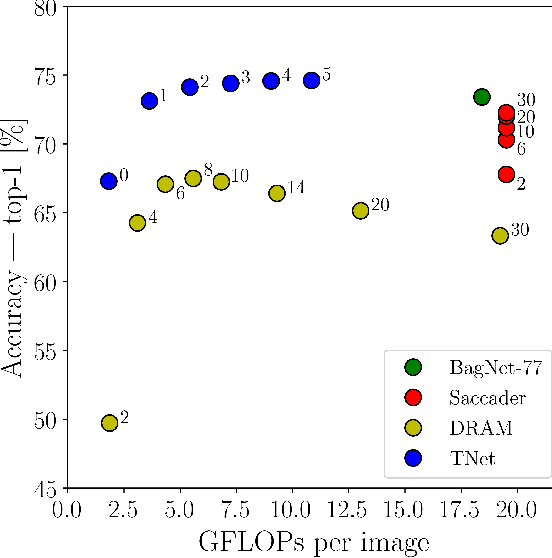

Abstract:Deep neural networks (DNNs) are typically optimized for a specific input resolution (e.g. $224 \times 224$ px) and their adoption to inputs of higher resolution (e.g., satellite or medical images) remains challenging, as it leads to excessive computation and memory overhead, and may require substantial engineering effort (e.g., streaming). We show that multi-scale hard-attention can be an effective solution to this problem. We propose a novel architecture, TNet, which traverses an image pyramid in a top-down fashion, visiting only the most informative regions along the way. We compare our model against strong hard-attention baselines, achieving a better trade-off between resources and accuracy on ImageNet. We further verify the efficacy of our model on satellite images (fMoW dataset) of size up to $896 \times 896$ px. In addition, our hard-attention mechanism guarantees predictions with a degree of interpretability, without extra cost beyond inference. We also show that we can reduce data acquisition and annotation cost, since our model attends only to a fraction of the highest resolution content, while using only image-level labels without bounding boxes.

The Role of the Crowd in Countering Misinformation: A Case Study of the COVID-19 Infodemic

Nov 12, 2020

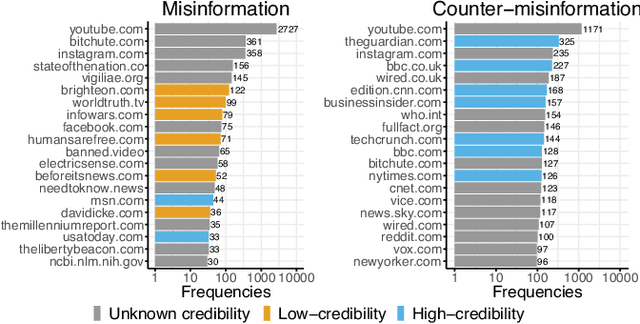

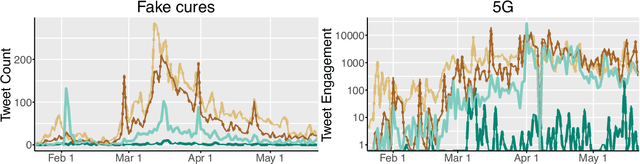

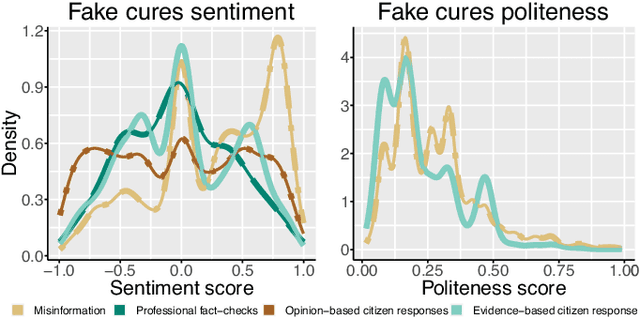

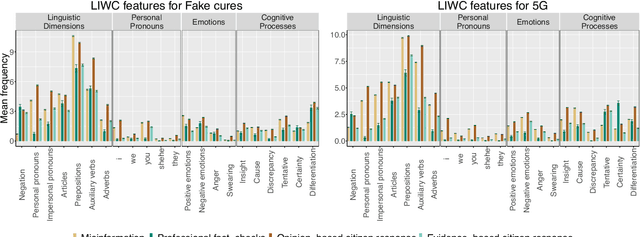

Abstract:Fact checking by professionals is viewed as a vital defense in the fight against misinformation.While fact checking is important and its impact has been significant, fact checks could have limited visibility and may not reach the intended audience, such as those deeply embedded in polarized communities. Concerned citizens (i.e., the crowd), who are users of the platforms where misinformation appears, can play a crucial role in disseminating fact-checking information and in countering the spread of misinformation. To explore if this is the case, we conduct a data-driven study of misinformation on the Twitter platform, focusing on tweets related to the COVID-19 pandemic, analyzing the spread of misinformation, professional fact checks, and the crowd response to popular misleading claims about COVID-19. In this work, we curate a dataset of false claims and statements that seek to challenge or refute them. We train a classifier to create a novel dataset of 155,468 COVID-19-related tweets, containing 33,237 false claims and 33,413 refuting arguments.Our findings show that professional fact-checking tweets have limited volume and reach. In contrast, we observe that the surge in misinformation tweets results in a quick response and a corresponding increase in tweets that refute such misinformation. More importantly, we find contrasting differences in the way the crowd refutes tweets, some tweets appear to be opinions, while others contain concrete evidence, such as a link to a reputed source. Our work provides insights into how misinformation is organically countered in social platforms by some of their users and the role they play in amplifying professional fact checks.These insights could lead to development of tools and mechanisms that can empower concerned citizens in combating misinformation. The code and data can be found in http://claws.cc.gatech.edu/covid_counter_misinformation.html.

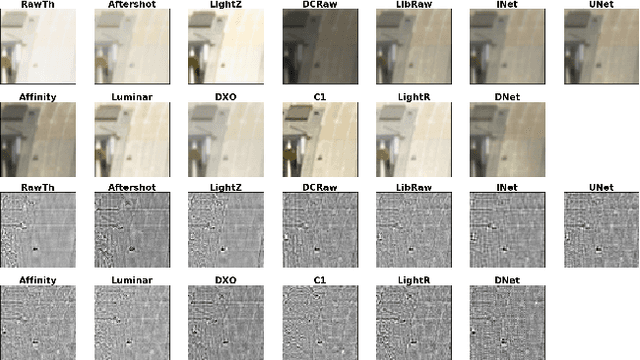

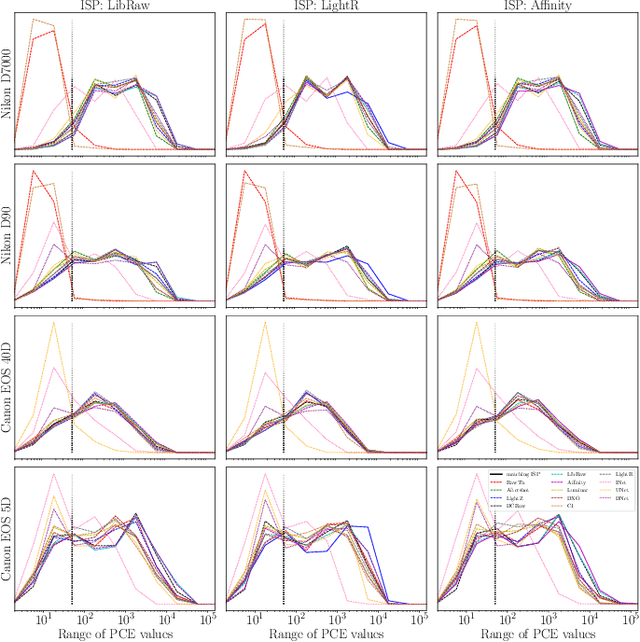

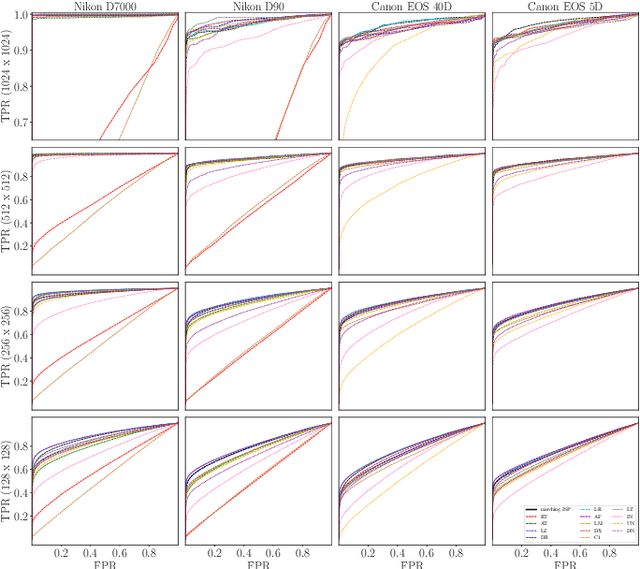

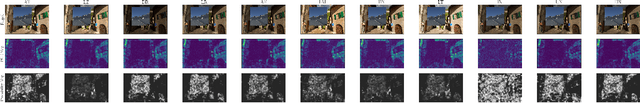

Empirical Evaluation of PRNU Fingerprint Variation for Mismatched Imaging Pipelines

Apr 04, 2020

Abstract:We assess the variability of PRNU-based camera fingerprints with mismatched imaging pipelines (e.g., different camera ISP or digital darkroom software). We show that camera fingerprints exhibit non-negligible variations in this setup, which may lead to unexpected degradation of detection statistics in real-world use-cases. We tested 13 different pipelines, including standard digital darkroom software and recent neural-networks. We observed that correlation between fingerprints from mismatched pipelines drops on average to 0.38 and the PCE detection statistic drops by over 40%. The degradation in error rates is the strongest for small patches commonly used in photo manipulation detection, and when neural networks are used for photo development. At a fixed 0.5% FPR setting, the TPR drops by 17 ppt (percentage points) for 128 px and 256 px patches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge