Murtaza Hazara

Few-shot model-based adaptation in noisy conditions

Oct 16, 2020

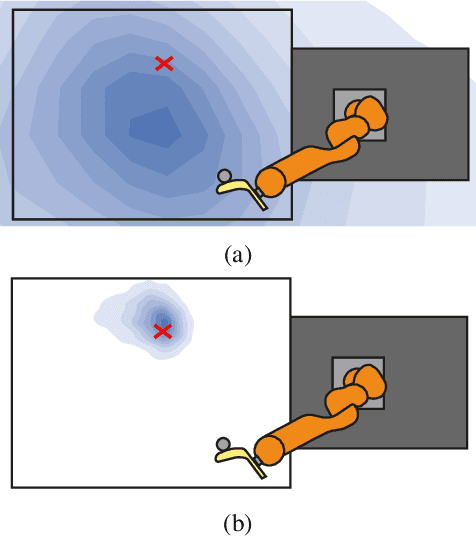

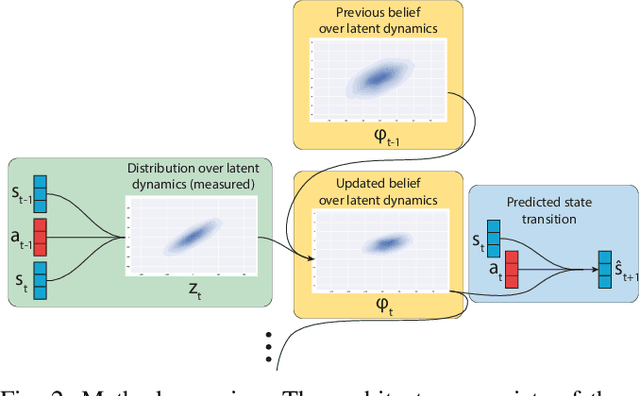

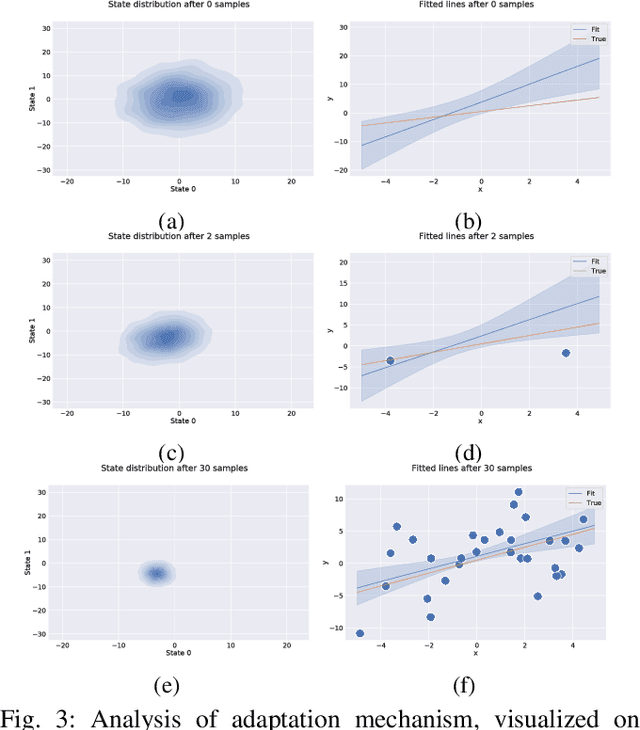

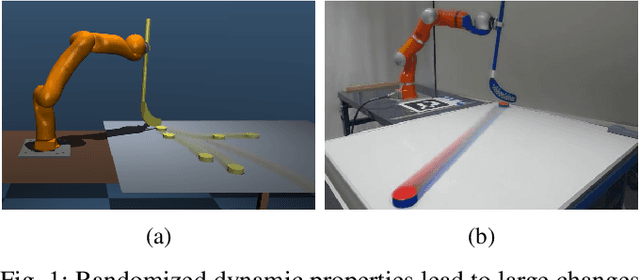

Abstract:Few-shot adaptation is a challenging problem in the context of simulation-to-real transfer in robotics, requiring safe and informative data collection. In physical systems, additional challenge may be posed by domain noise, which is present in virtually all real-world applications. In this paper, we propose to perform few-shot adaptation of dynamics models in noisy conditions using an uncertainty-aware Kalman filter-based neural network architecture. We show that the proposed method, which explicitly addresses domain noise, improves few-shot adaptation error over a blackbox adaptation LSTM baseline, and over a model-free on-policy reinforcement learning approach, which tries to learn an adaptable and informative policy at the same time. The proposed method also allows for system analysis by analyzing hidden states of the model during and after adaptation.

Meta Reinforcement Learning for Sim-to-real Domain Adaptation

Sep 16, 2019

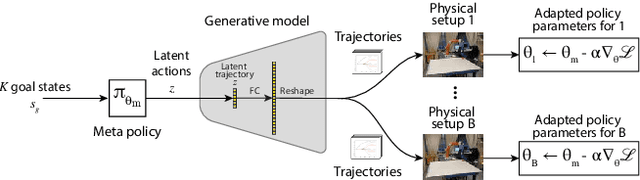

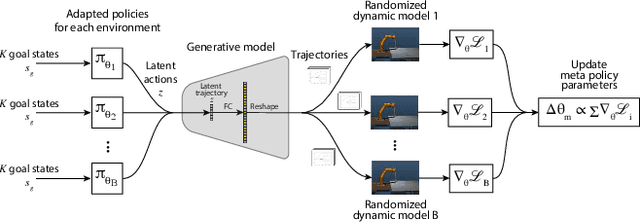

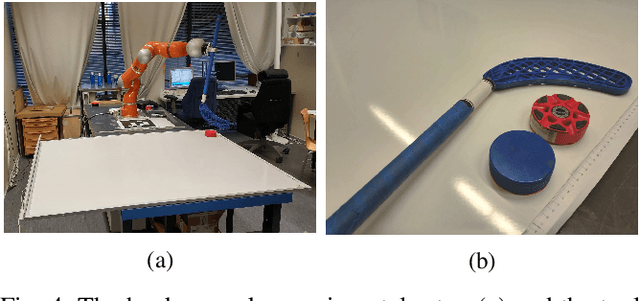

Abstract:Modern reinforcement learning methods suffer from low sample efficiency and unsafe exploration, making it infeasible to train robotic policies entirely on real hardware. In this work, we propose to address the problem of sim-to-real domain transfer by using meta learning to train a policy that can adapt to a variety of dynamic conditions, and using a task-specific trajectory generation model to provide an action space that facilitates quick exploration. We evaluate the method by performing domain adaptation in simulation and analyzing the structure of the latent space during adaptation. We then deploy this policy on a KUKA LBR 4+ robot and evaluate its performance on a task of hitting a hockey puck to a target. Our method shows more consistent and stable domain adaptation than the baseline, resulting in better overall performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge