Mian Zou

Color Matters: Demosaicing-Guided Color Correlation Training for Generalizable AI-Generated Image Detection

Jan 30, 2026Abstract:As realistic AI-generated images threaten digital authenticity, we address the generalization failure of generative artifact-based detectors by exploiting the intrinsic properties of the camera imaging pipeline. Concretely, we investigate color correlations induced by the color filter array (CFA) and demosaicing, and propose a Demosaicing-guided Color Correlation Training (DCCT) framework for AI-generated image detection. By simulating the CFA sampling pattern, we decompose each color image into a single-channel input (as the condition) and the remaining two channels as the ground-truth targets (for prediction). A self-supervised U-Net is trained to model the conditional distribution of the missing channels from the given one, parameterized via a mixture of logistic functions. Our theoretical analysis reveals that DCCT targets a provable distributional difference in color-correlation features between photographic and AI-generated images. By leveraging these distinct features to construct a binary classifier, DCCT achieves state-of-the-art generalization and robustness, significantly outperforming prior methods across over 20 unseen generators.

Bi-Level Optimization for Self-Supervised AI-Generated Face Detection

Jul 30, 2025

Abstract:AI-generated face detectors trained via supervised learning typically rely on synthesized images from specific generators, limiting their generalization to emerging generative techniques. To overcome this limitation, we introduce a self-supervised method based on bi-level optimization. In the inner loop, we pretrain a vision encoder only on photographic face images using a set of linearly weighted pretext tasks: classification of categorical exchangeable image file format (EXIF) tags, ranking of ordinal EXIF tags, and detection of artificial face manipulations. The outer loop then optimizes the relative weights of these pretext tasks to enhance the coarse-grained detection of manipulated faces, serving as a proxy task for identifying AI-generated faces. In doing so, it aligns self-supervised learning more closely with the ultimate goal of AI-generated face detection. Once pretrained, the encoder remains fixed, and AI-generated faces are detected either as anomalies under a Gaussian mixture model fitted to photographic face features or by a lightweight two-layer perceptron serving as a binary classifier. Extensive experiments demonstrate that our detectors significantly outperform existing approaches in both one-class and binary classification settings, exhibiting strong generalization to unseen generators.

Self-Supervised Learning for Detecting AI-Generated Faces as Anomalies

Jan 04, 2025

Abstract:The detection of AI-generated faces is commonly approached as a binary classification task. Nevertheless, the resulting detectors frequently struggle to adapt to novel AI face generators, which evolve rapidly. In this paper, we describe an anomaly detection method for AI-generated faces by leveraging self-supervised learning of camera-intrinsic and face-specific features purely from photographic face images. The success of our method lies in designing a pretext task that trains a feature extractor to rank four ordinal exchangeable image file format (EXIF) tags and classify artificially manipulated face images. Subsequently, we model the learned feature distribution of photographic face images using a Gaussian mixture model. Faces with low likelihoods are flagged as AI-generated. Both quantitative and qualitative experiments validate the effectiveness of our method. Our code is available at \url{https://github.com/MZMMSEC/AIGFD_EXIF.git}.

Semantics-Oriented Multitask Learning for DeepFake Detection: A Joint Embedding Approach

Aug 29, 2024

Abstract:In recent years, the multimedia forensics and security community has seen remarkable progress in multitask learning for DeepFake (i.e., face forgery) detection. The prevailing strategy has been to frame DeepFake detection as a binary classification problem augmented by manipulation-oriented auxiliary tasks. This strategy focuses on learning features specific to face manipulations, which exhibit limited generalizability. In this paper, we delve deeper into semantics-oriented multitask learning for DeepFake detection, leveraging the relationships among face semantics via joint embedding. We first propose an automatic dataset expansion technique that broadens current face forgery datasets to support semantics-oriented DeepFake detection tasks at both the global face attribute and local face region levels. Furthermore, we resort to joint embedding of face images and their corresponding labels (depicted by textual descriptions) for prediction. This approach eliminates the need for manually setting task-agnostic and task-specific parameters typically required when predicting labels directly from images. In addition, we employ a bi-level optimization strategy to dynamically balance the fidelity loss weightings of various tasks, making the training process fully automated. Extensive experiments on six DeepFake datasets show that our method improves the generalizability of DeepFake detection and, meanwhile, renders some degree of model interpretation by providing human-understandable explanations.

Semantic Contextualization of Face Forgery: A New Definition, Dataset, and Detection Method

May 14, 2024

Abstract:In recent years, deep learning has greatly streamlined the process of generating realistic fake face images. Aware of the dangers, researchers have developed various tools to spot these counterfeits. Yet none asked the fundamental question: What digital manipulations make a real photographic face image fake, while others do not? In this paper, we put face forgery in a semantic context and define that computational methods that alter semantic face attributes to exceed human discrimination thresholds are sources of face forgery. Guided by our new definition, we construct a large face forgery image dataset, where each image is associated with a set of labels organized in a hierarchical graph. Our dataset enables two new testing protocols to probe the generalization of face forgery detectors. Moreover, we propose a semantics-oriented face forgery detection method that captures label relations and prioritizes the primary task (\ie, real or fake face detection). We show that the proposed dataset successfully exposes the weaknesses of current detectors as the test set and consistently improves their generalizability as the training set. Additionally, we demonstrate the superiority of our semantics-oriented method over traditional binary and multi-class classification-based detectors.

Statistical Analysis of Signal-Dependent Noise: Application in Blind Localization of Image Splicing Forgery

Nov 02, 2020

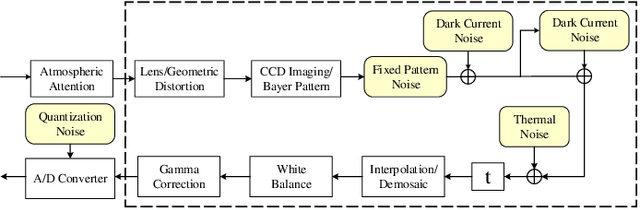

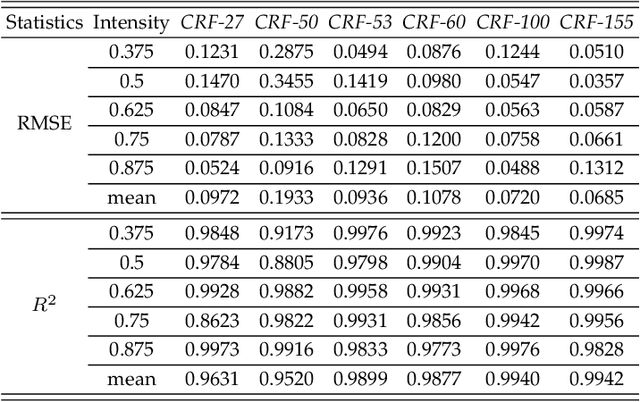

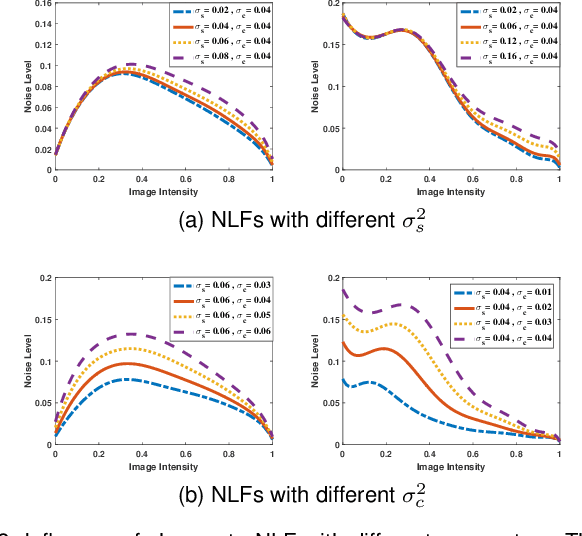

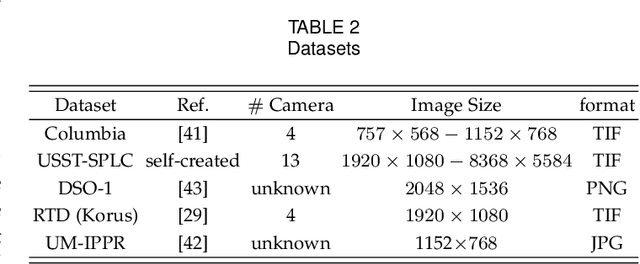

Abstract:Visual noise is often regarded as a disturbance in image quality, whereas it can also provide a crucial clue for image-based forensic tasks. Conventionally, noise is assumed to comprise an additive Gaussian model to be estimated and then used to reveal anomalies. However, for real sensor noise, it should be modeled as signal-dependent noise (SDN). In this work, we apply SDN to splicing forgery localization tasks. Through statistical analysis of the SDN model, we assume that noise can be modeled as a Gaussian approximation for a certain brightness and propose a likelihood model for a noise level function. By building a maximum a posterior Markov random field (MAP-MRF) framework, we exploit the likelihood of noise to reveal the alien region of spliced objects, with a probability combination refinement strategy. To ensure a completely blind detection, an iterative alternating method is adopted to estimate the MRF parameters. Experimental results demonstrate that our method is effective and provides a comparative localization performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge