Marco Visca

Deep Learning Traversability Estimator for Mobile Robots in Unstructured Environments

May 23, 2021

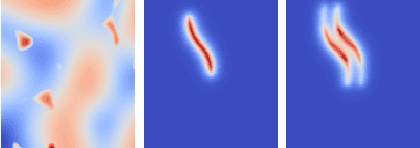

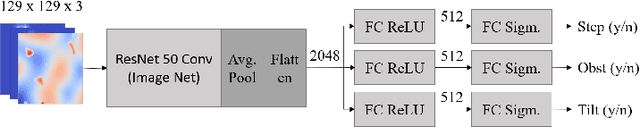

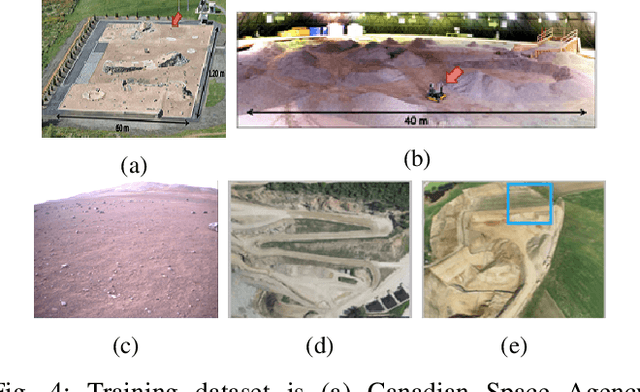

Abstract: Terrain traversability analysis plays a major role in ensuring safe robotic navigation in unstructured environments. However, real-time constraints frequently limit the accuracy of online tests, especially in scenarios where realistic robot-terrain interactions are complex to model. In this context, we propose a deep learning framework, trained end-to-end from elevation maps and trajectories, to estimate the occurrence of failure events. The network is first trained and tested in simulation over synthetic maps generated by the OpenSimplex algorithm. The deep learning framework retains over 94% recall of the original simulator at 30% of the computational time. Finally, the network is transferred and tested on real elevation maps collected by the SEEKER consortium during the Martian rover test trial in the Atacama desert in Chile. We show that transferring and fine-tuning an application-independent pre-trained model yields better performance than training solely on scarcely available real data.
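As a reading aid for the recall figure quoted above, the following is a minimal sketch of how recall over failure events could be computed, assuming the simulator's outcomes serve as the reference labels. The function name `recall` and the sample label lists are illustrative and do not come from the paper.

```python
def recall(reference, predicted):
    """Fraction of reference failure events that the predictor also flags.

    `reference` and `predicted` are equal-length sequences of 0/1 labels,
    where 1 marks a failure event (hypothetical encoding).
    """
    if len(reference) != len(predicted):
        raise ValueError("label sequences must have the same length")
    true_pos = sum(1 for r, p in zip(reference, predicted) if r and p)
    false_neg = sum(1 for r, p in zip(reference, predicted) if r and not p)
    if true_pos + false_neg == 0:
        raise ValueError("recall is undefined without reference failures")
    return true_pos / (true_pos + false_neg)


# Made-up labels: failures reported by a simulator vs. a learned estimator.
simulator_failures = [1, 1, 0, 1, 0, 1]
network_failures = [1, 1, 0, 0, 1, 1]
score = recall(simulator_failures, network_failures)
```

Recall ignores false alarms by construction, which suits a safety check where a missed failure is the costly error.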

Conv1D Energy-Aware Path Planner for Mobile Robots in Unstructured Environments

Apr 04, 2021

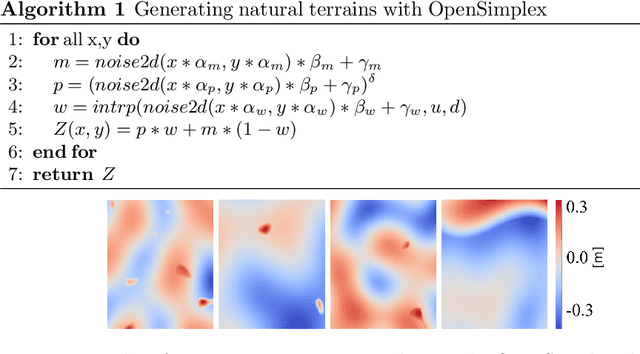

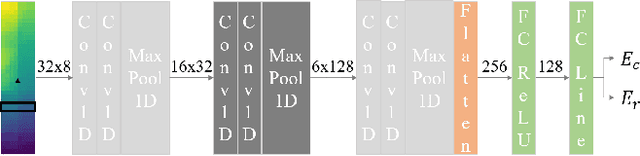

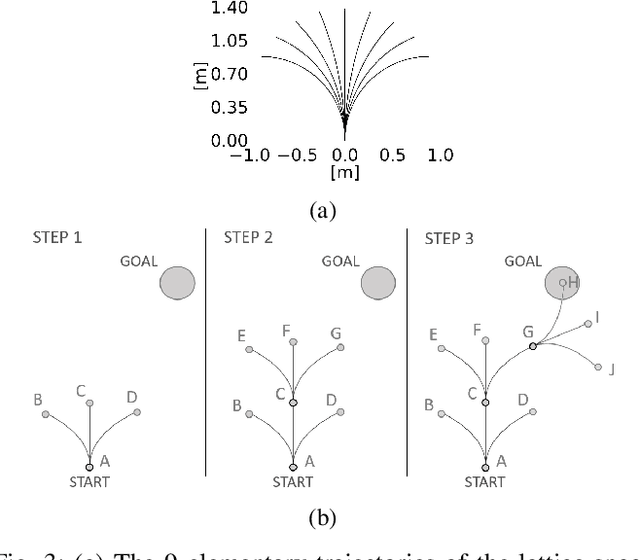

Abstract: Driving energy consumption plays a major role in the navigation of mobile robots in challenging environments, especially if they are left to operate unattended under limited on-board power. This paper reports the first results of an energy-aware path planner that can estimate the driving energy consumption and energy recovery of a robot traversing complex uneven terrain. Energy is estimated over trajectories using a self-supervised learning approach, in which the robot autonomously learns to correlate perceived terrain point clouds with energy consumption and recovery. A novel feature of the method is the use of a 1D convolutional neural network to analyse the terrain sequentially, in the same temporal order in which the robot would experience it when moving. The performance of the proposed approach is assessed in simulation over several digital terrain models collected from real natural scenarios, and is compared with a heuristic inclination-based energy model. We show that our method increases the overall prediction R² score by 66.8% and reduces the driving energy consumption over planned paths by 5.5%.
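To illustrate what analysing terrain "sequentially" with a 1D convolution means, here is a minimal NumPy sketch. It is not the paper's network: a fixed finite-difference kernel stands in for a learned filter, and the elevation samples, `conv1d_valid`, and the climb/descent totals are all hypothetical.

```python
import numpy as np


def conv1d_valid(sequence, kernel):
    """Slide `kernel` along `sequence` without padding (cross-correlation)."""
    sequence = np.asarray(sequence, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    n_out = len(sequence) - len(kernel) + 1
    return np.array(
        [np.dot(sequence[i:i + len(kernel)], kernel) for i in range(n_out)]
    )


# Hypothetical elevation samples (metres), in the order the robot meets them.
elevation = [0.0, 0.1, 0.3, 0.6, 0.6, 0.4, 0.1]

# Stand-in for a learned filter: responds to the local height change per step.
slope_kernel = [-1.0, 1.0]
slope = conv1d_valid(elevation, slope_kernel)

# Uphill steps loosely relate to consumption, downhill steps to recovery.
climb = np.maximum(slope, 0.0).sum()
descent = np.maximum(-slope, 0.0).sum()
```

Because the kernel moves along the path in travel order, each output depends only on a short window of consecutive terrain samples, which is the property the abstract highlights.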

Self-adaptive Torque Vectoring Controller Using Reinforcement Learning

Mar 27, 2021

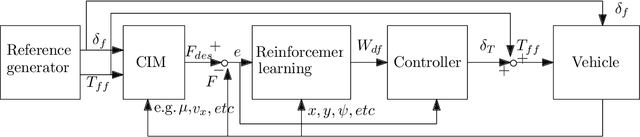

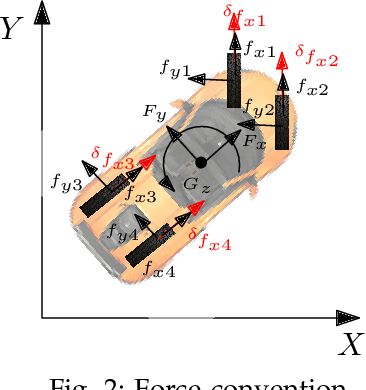

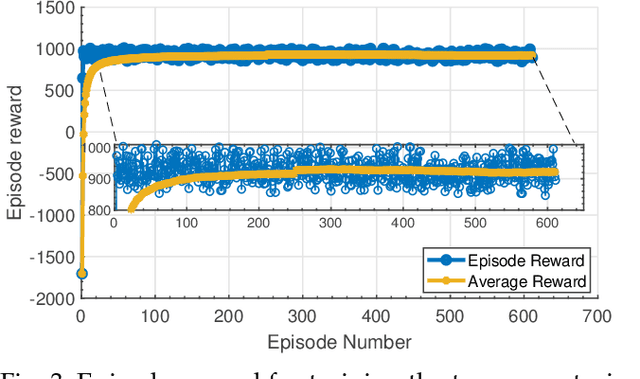

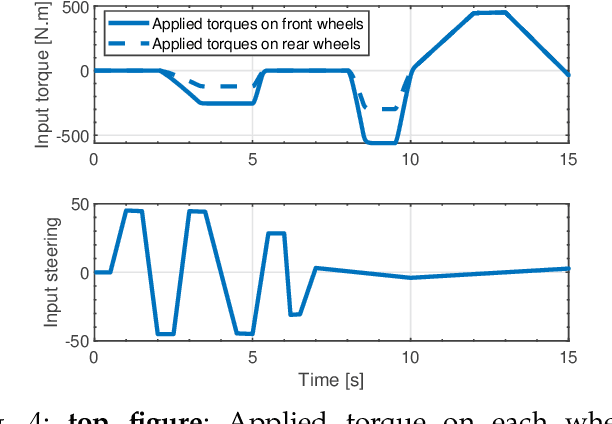

Abstract: Continuous direct yaw moment control systems, such as torque-vectoring controllers, are an essential part of vehicle stabilization. This type of controller has been extensively researched with the central objective of maintaining vehicle stability by providing a consistent, stable cornering response. Careful tuning of the parameters of a torque-vectoring controller can significantly enhance a vehicle's performance and stability. However, without re-tuning of the parameters, especially in extreme driving conditions such as low-friction surfaces or high velocity, the vehicle fails to maintain stability. In this paper, the utility of Reinforcement Learning (RL) based on Deep Deterministic Policy Gradient (DDPG) as a parameter tuning algorithm for a torque-vectoring controller is presented. It is shown that a torque-vectoring controller with parameter tuning via reinforcement learning performs well across a range of driving environments, e.g., a wide range of friction conditions and different velocities, which highlights the advantages of reinforcement learning as an adaptive algorithm for parameter tuning. Moreover, the robustness of the DDPG algorithm is validated under scenarios that are beyond the training environment of the reinforcement learning algorithm. The simulation has been carried out using a four-wheel vehicle model with nonlinear tire characteristics. We compare our DDPG-based parameter tuning against a genetic algorithm and conventional trial-and-error tuning of the torque-vectoring controller, and the results demonstrate that the reinforcement-learning-based parameter tuning significantly improves the stability of the vehicle.
