Marco Baroni

CIMeC - Center for Mind/Brain Sciences, University of Trento

Emergent Language Generalization and Acquisition Speed are not tied to Compositionality

Apr 25, 2020

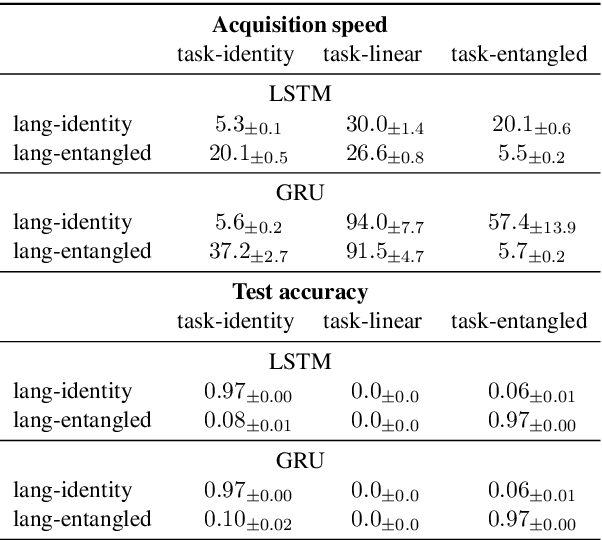

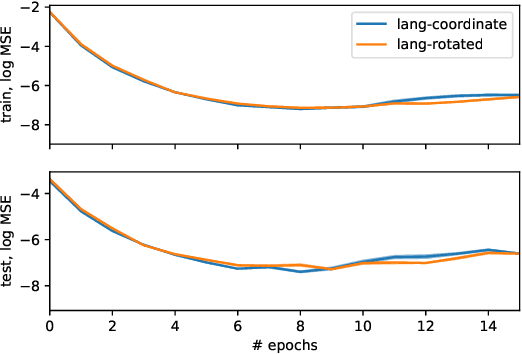

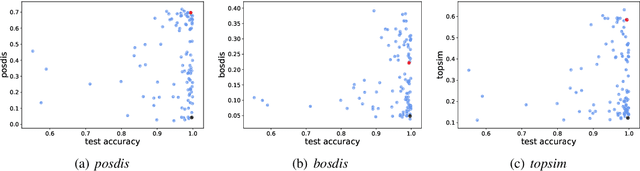

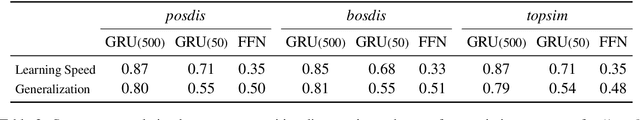

Abstract:Studies of discrete languages emerging when neural agents communicate to solve a joint task often look for evidence of compositional structure. This stems for the expectation that such a structure would allow languages to be acquired faster by the agents and enable them to generalize better. We argue that these beneficial properties are only loosely connected to compositionality. In two experiments, we demonstrate that, depending on the task, non-compositional languages might show equal, or better, generalization performance and acquisition speed than compositional ones. Further research in the area should be clearer about what benefits are expected from compositionality, and how the latter would lead to them.

Syntactic Structure from Deep Learning

Apr 22, 2020Abstract:Modern deep neural networks achieve impressive performance in engineering applications that require extensive linguistic skills, such as machine translation. This success has sparked interest in probing whether these models are inducing human-like grammatical knowledge from the raw data they are exposed to, and, consequently, whether they can shed new light on long-standing debates concerning the innate structure necessary for language acquisition. In this article, we survey representative studies of the syntactic abilities of deep networks, and discuss the broader implications that this work has for theoretical linguistics.

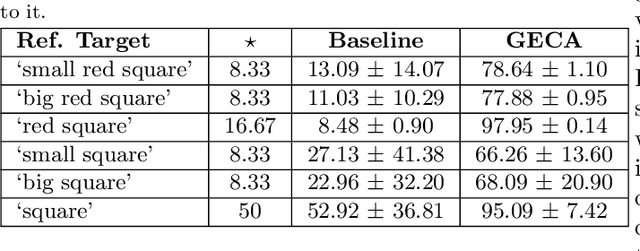

Compositionality and Generalization in Emergent Languages

Apr 20, 2020

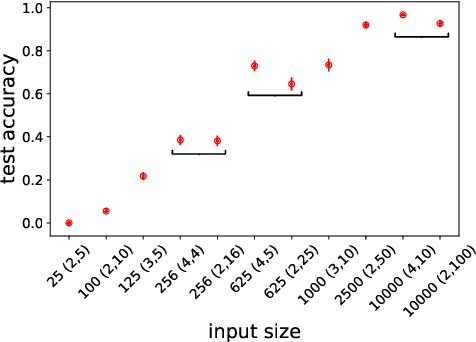

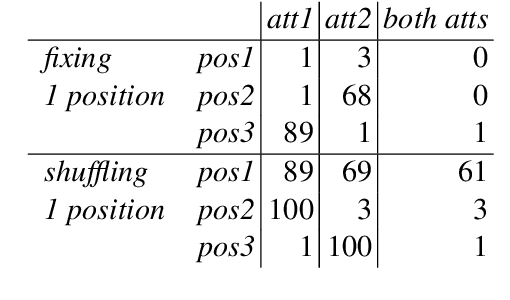

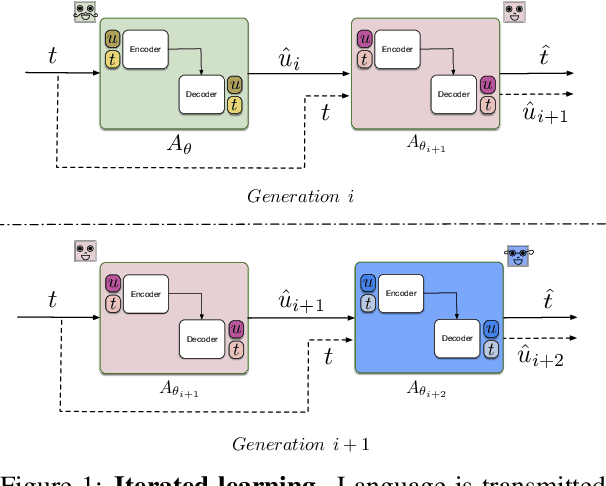

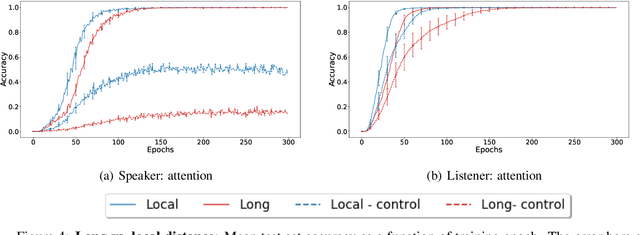

Abstract:Natural language allows us to refer to novel composite concepts by combining expressions denoting their parts according to systematic rules, a property known as \emph{compositionality}. In this paper, we study whether the language emerging in deep multi-agent simulations possesses a similar ability to refer to novel primitive combinations, and whether it accomplishes this feat by strategies akin to human-language compositionality. Equipped with new ways to measure compositionality in emergent languages inspired by disentanglement in representation learning, we establish three main results. First, given sufficiently large input spaces, the emergent language will naturally develop the ability to refer to novel composite concepts. Second, there is no correlation between the degree of compositionality of an emergent language and its ability to generalize. Third, while compositionality is not necessary for generalization, it provides an advantage in terms of language transmission: The more compositional a language is, the more easily it will be picked up by new learners, even when the latter differ in architecture from the original agents. We conclude that compositionality does not arise from simple generalization pressure, but if an emergent language does chance upon it, it will be more likely to survive and thrive.

Rat big, cat eaten! Ideas for a useful deep-agent protolanguage

Mar 17, 2020Abstract:Deep-agent communities developing their own language-like communication protocol are a hot (or at least warm) topic in AI. Such agents could be very useful in machine-machine and human-machine interaction scenarios long before they have evolved a protocol as complex as human language. Here, I propose a small set of priorities we should focus on, if we want to get as fast as possible to a stage where deep agents speak a useful protolanguage.

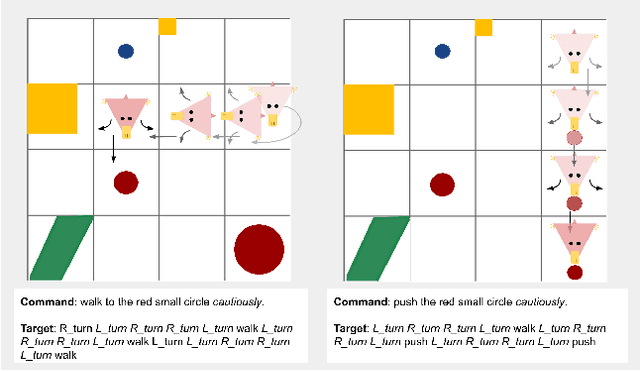

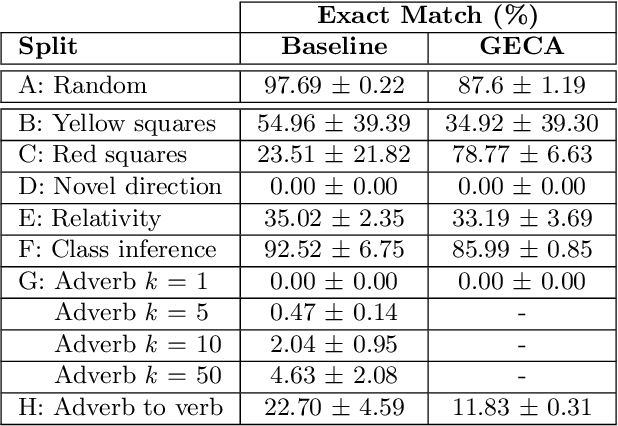

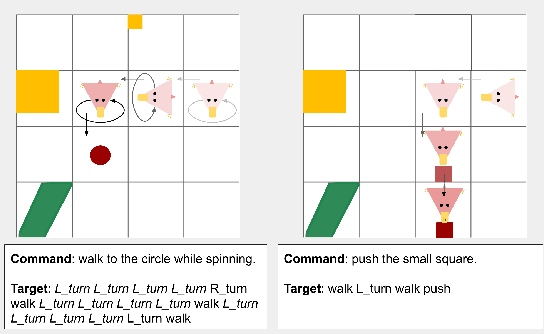

A Benchmark for Systematic Generalization in Grounded Language Understanding

Mar 11, 2020

Abstract:Human language users easily interpret expressions that describe unfamiliar situations composed from familiar parts ("greet the pink brontosaurus by the ferris wheel"). Modern neural networks, by contrast, struggle to interpret compositions unseen in training. In this paper, we introduce a new benchmark, gSCAN, for evaluating compositional generalization in models of situated language understanding. We take inspiration from standard models of meaning composition in formal linguistics. Going beyond an earlier related benchmark that focused on syntactic aspects of generalization, gSCAN defines a language grounded in the states of a grid world. This allows us to build novel generalization tasks that probe the acquisition of linguistically motivated rules. For example, agents must understand how adjectives such as 'small' are interpreted relative to the current world state or how adverbs such as 'cautiously' combine with new verbs. We test a strong multi-modal baseline model and a state-of-the-art compositional method finding that, in most cases, they fail dramatically when generalization requires systematic compositional rules.

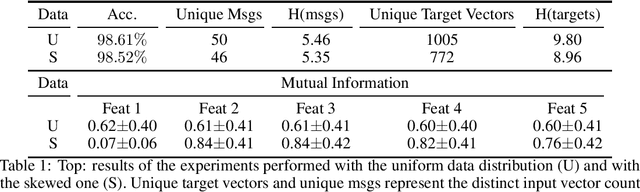

Focus on What's Informative and Ignore What's not: Communication Strategies in a Referential Game

Nov 05, 2019

Abstract:Research in multi-agent cooperation has shown that artificial agents are able to learn to play a simple referential game while developing a shared lexicon. This lexicon is not easy to analyze, as it does not show many properties of a natural language. In a simple referential game with two neural network-based agents, we analyze the object-symbol mapping trying to understand what kind of strategy was used to develop the emergent language. We see that, when the environment is uniformly distributed, the agents rely on a random subset of features to describe the objects. When we modify the objects making one feature non-uniformly distributed,the agents realize it is less informative and start to ignore it, and, surprisingly, they make a better use of the remaining features. This interesting result suggests that more natural, less uniformly distributed environments might aid in spurring the emergence of better-behaved languages.

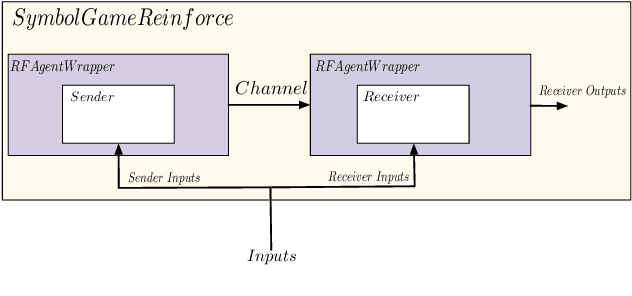

EGG: a toolkit for research on Emergence of lanGuage in Games

Jul 01, 2019

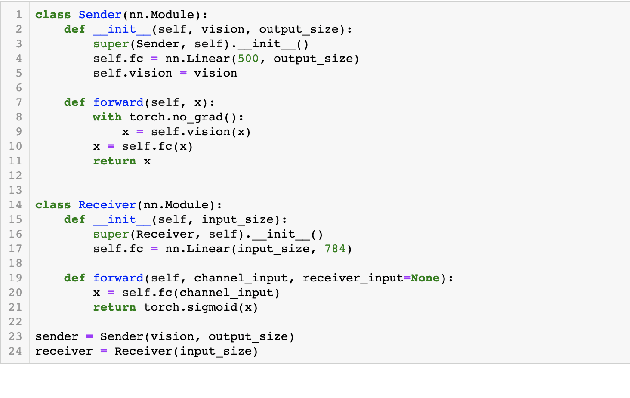

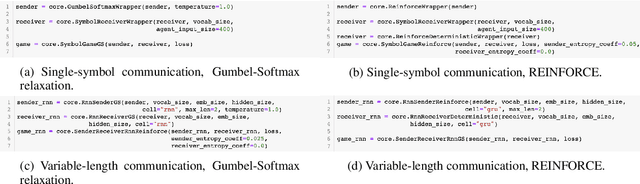

Abstract:There is renewed interest in simulating language emergence among deep neural agents that communicate to jointly solve a task, spurred by the practical aim to develop language-enabled interactive AIs, as well as by theoretical questions about the evolution of human language. However, optimizing deep architectures connected by a discrete communication channel (such as that in which language emerges) is technically challenging. We introduce EGG, a toolkit that greatly simplifies the implementation of emergent-language communication games. EGG's modular design provides a set of building blocks that the user can combine to create new games, easily navigating the optimization and architecture space. We hope that the tool will lower the technical barrier, and encourage researchers from various backgrounds to do original work in this exciting area.

Tabula nearly rasa: Probing the Linguistic Knowledge of Character-Level Neural Language Models Trained on Unsegmented Text

Jun 17, 2019Abstract:Recurrent neural networks (RNNs) have reached striking performance in many natural language processing tasks. This has renewed interest in whether these generic sequence processing devices are inducing genuine linguistic knowledge. Nearly all current analytical studies, however, initialize the RNNs with a vocabulary of known words, and feed them tokenized input during training. We present a multi-lingual study of the linguistic knowledge encoded in RNNs trained as character-level language models, on input data with word boundaries removed. These networks face a tougher and more cognitively realistic task, having to discover any useful linguistic unit from scratch based on input statistics. The results show that our "near tabula rasa" RNNs are mostly able to solve morphological, syntactic and semantic tasks that intuitively presuppose word-level knowledge, and indeed they learned, to some extent, to track word boundaries. Our study opens the door to speculations about the necessity of an explicit, rigid word lexicon in language learning and usage.

Word-order biases in deep-agent emergent communication

Jun 14, 2019

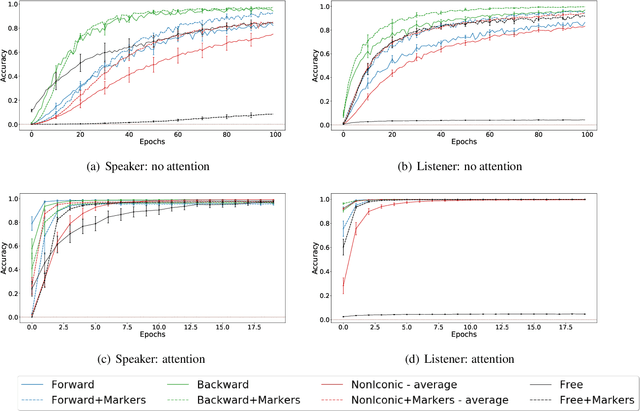

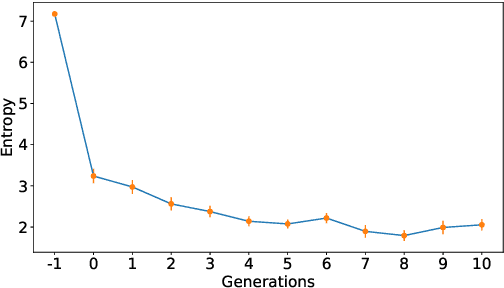

Abstract:Sequence-processing neural networks led to remarkable progress on many NLP tasks. As a consequence, there has been increasing interest in understanding to what extent they process language as humans do. We aim here to uncover which biases such models display with respect to "natural" word-order constraints. We train models to communicate about paths in a simple gridworld, using miniature languages that reflect or violate various natural language trends, such as the tendency to avoid redundancy or to minimize long-distance dependencies. We study how the controlled characteristics of our miniature languages affect individual learning and their stability across multiple network generations. The results draw a mixed picture. On the one hand, neural networks show a strong tendency to avoid long-distance dependencies. On the other hand, there is no clear preference for the efficient, non-redundant encoding of information that is widely attested in natural language. We thus suggest inoculating a notion of "effort" into neural networks, as a possible way to make their linguistic behavior more human-like.

Anti-efficient encoding in emergent communication

Jun 13, 2019

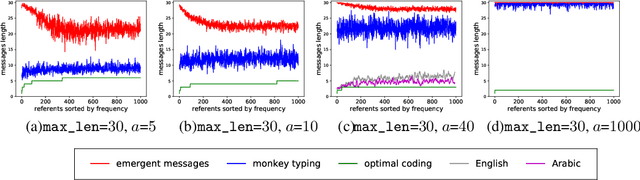

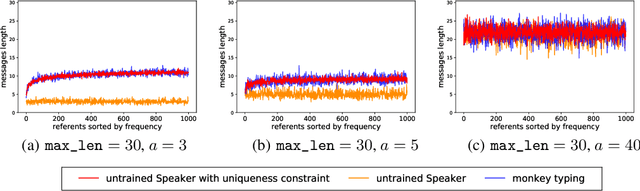

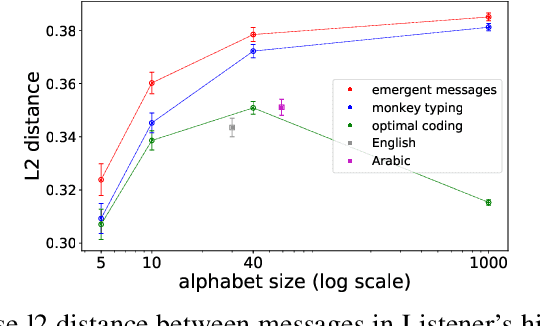

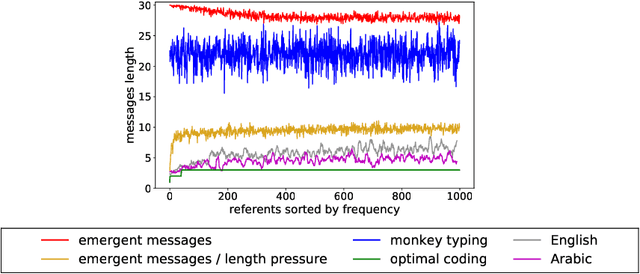

Abstract:Despite renewed interest in emergent language simulations with neural networks, little is known about the basic properties of the induced code, and how they compare to human language. One fundamental characteristic of the latter, known as Zipf's Law of Abbreviation (ZLA), is that more frequent words are efficiently associated to shorter strings. We study whether the same pattern emerges when two neural networks, a "speaker" and a "listener", are trained to play a signaling game. Surprisingly, we find that networks develop an \emph{anti-efficient} encoding scheme, in which the most frequent inputs are associated to the longest messages, and messages in general are skewed towards the maximum length threshold. This anti-efficient code appears easier to discriminate for the listener, and, unlike in human communication, the speaker does not impose a contrasting least-effort pressure towards brevity. Indeed, when the cost function includes a penalty for longer messages, the resulting message distribution starts respecting ZLA. Our analysis stresses the importance of studying the basic features of emergent communication in a highly controlled setup, to ensure the latter will not strand too far from human language. Moreover, we present a concrete illustration of how different functional pressures can lead to successful communication codes that lack basic properties of human language, thus highlighting the role such pressures play in the latter.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge