Marc R. Schlichting

Mechanistic Interpretability for Learning Assurance of a Vision-Based Landing System

May 20, 2026

Abstract:EASA's learning-assurance guidance requires data-driven aviation systems to build and monitor their own situation representation, yet for neural networks the technical means to provide such evidence remain an open problem. We address this gap for a vision-based aircraft landing system: we propose that a minimally assurable model must at least be shown to separate content from style in its own situation representation. Showing that the model's predictions then rely largely on the contentful representation components leads to a concrete assurance path. To demonstrate this assurance path on a concrete model, we train a vision transformer model for runway keypoint regression on the LARDv2 dataset. The model, which acts as the subject for our assurance demonstration, produces per-patch embeddings that we decompose into interpretable atoms via K-SVD sparse dictionary learning. A qualitative visualization confirms that contentful atoms track task-relevant runway structure and stylistic atoms track domain-specific appearance, and the regression head is shown to place almost all of its linear weight on contentful atoms. We further build on the content/style separation and define out-of-model-scope (OOMS) detection, a novel runtime assurance approach directly monitoring the model's situation representation. OOMS monitoring is complementary to operational design domain and output-space out-of-distribution monitoring and addresses concrete requirements of the recent EASA guidance. By directly analyzing a model's situation representation both at test time and runtime, this work delivers the first concrete piece of the representation-level evidence that EASA learning-assurance guidance demands, and points to mechanistic interpretability as a practical building block of future aviation safety cases.

Robust Planning for Autonomous Vehicles with Diffusion-Based Failure Samplers

Jul 16, 2025

Abstract:High-risk traffic zones such as intersections are a major cause of collisions. This study leverages deep generative models to enhance the safety of autonomous vehicles in an intersection context. We train a 1000-step denoising diffusion probabilistic model to generate collision-causing sensor noise sequences for an autonomous vehicle navigating a four-way intersection based on the current relative position and velocity of an intruder. Using the generative adversarial architecture, the 1000-step model is distilled into a single-step denoising diffusion model which demonstrates fast inference speed while maintaining similar sampling quality. We demonstrate one possible application of the single-step model in building a robust planner for the autonomous vehicle. The planner uses the single-step model to efficiently sample potential failure cases based on the currently measured traffic state to inform its decision-making. Through simulation experiments, the robust planner demonstrates significantly lower failure rate and delay rate compared with the baseline Intelligent Driver Model controller.

Diffusion Models for Safety Validation of Autonomous Driving Systems

Jun 10, 2025

Abstract:Safety validation of autonomous driving systems is extremely challenging due to the high risks and costs of real-world testing as well as the rarity and diversity of potential failures. To address these challenges, we train a denoising diffusion model to generate potential failure cases of an autonomous vehicle given any initial traffic state. Experiments on a four-way intersection problem show that in a variety of scenarios, the diffusion model can generate realistic failure samples while capturing a wide variety of potential failures. Our model does not require any external training dataset, can perform training and inference with modest computing resources, and does not assume any prior knowledge of the system under test, with applicability to safety validation for traffic intersections.

Scalable Importance Sampling in High Dimensions with Low-Rank Mixture Proposals

May 19, 2025

Abstract:Importance sampling is a Monte Carlo technique for efficiently estimating the likelihood of rare events by biasing the sampling distribution towards the rare event of interest. By drawing weighted samples from a learned proposal distribution, importance sampling allows for more sample-efficient estimation of rare events or tails of distributions. A common choice of proposal density is a Gaussian mixture model (GMM). However, estimating full-rank GMM covariance matrices in high dimensions is a challenging task due to numerical instabilities. In this work, we propose using mixtures of probabilistic principal component analyzers (MPPCA) as the parametric proposal density for importance sampling methods. MPPCA models are a type of low-rank mixture model that can be fit quickly using expectation-maximization, even in high-dimensional spaces. We validate our method on three simulated systems, demonstrating consistent gains in sample efficiency and quality of failure distribution characterization.
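
As a minimal sketch of the generic importance sampling idea described in this abstract, the following estimates a one-dimensional Gaussian tail probability. It assumes a single fixed Gaussian proposal centered on the rare-event threshold, not the MPPCA proposal the paper proposes; the function names `normal_logpdf` and `importance_sampling_tail` are hypothetical and chosen for illustration only.

```python
import math
import numpy as np


def normal_logpdf(x, mean, std):
    """Log-density of a univariate normal distribution."""
    z = (x - mean) / std
    return -0.5 * z**2 - math.log(std) - 0.5 * math.log(2.0 * math.pi)


def importance_sampling_tail(threshold, n_samples, seed=0):
    """Estimate P(X > threshold) for X ~ N(0, 1).

    Samples are drawn from the proposal N(threshold, 1), which places
    about half of its mass in the rare-event region (an illustrative
    assumption, not a learned proposal).
    """
    rng = np.random.default_rng(seed)
    x = rng.normal(loc=threshold, scale=1.0, size=n_samples)
    # Importance weight = target density / proposal density.
    log_w = normal_logpdf(x, 0.0, 1.0) - normal_logpdf(x, threshold, 1.0)
    # Weighted average of the rare-event indicator.
    return float(np.mean(np.exp(log_w) * (x > threshold)))


estimate = importance_sampling_tail(threshold=4.0, n_samples=100_000)
exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))  # closed-form tail probability
print(f"importance sampling: {estimate:.3e}, exact: {exact:.3e}")
```

With the same sample budget, naive Monte Carlo would be expected to see only about three samples beyond the threshold, which is why shifting the proposal toward the rare event helps.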

Diffusion-Based Failure Sampling for Cyber-Physical Systems

Jun 20, 2024

Abstract:Validating safety-critical autonomous systems in high-dimensional domains such as robotics presents a significant challenge. Existing black-box approaches based on Markov chain Monte Carlo may require an enormous number of samples, while methods based on importance sampling often rely on simple parametric families that may struggle to represent the distribution over failures. We propose to sample the distribution over failures using a conditional denoising diffusion model, which has shown success in complex high-dimensional problems such as robotic task planning. We iteratively train a diffusion model to produce state trajectories closer to failure. We demonstrate the effectiveness of our approach on high-dimensional robotic validation tasks, improving sample efficiency and mode coverage compared to existing black-box techniques.

SAVME: Efficient Safety Validation for Autonomous Systems Using Meta-Learning

Sep 30, 2023

Abstract:Discovering potential failures of an autonomous system is important prior to deployment. Falsification-based methods are often used to assess the safety of such systems, but the cost of running many accurate simulations can be high. The validation can be accelerated by identifying critical failure scenarios for the system under test and by reducing the simulation runtime. We propose a Bayesian approach that integrates meta-learning strategies with a multi-armed bandit framework. Our method involves learning distributions over scenario parameters that are prone to triggering failures in the system under test, as well as a distribution over fidelity settings that enable fast and accurate simulations. In the spirit of meta-learning, we also assess whether the learned fidelity settings distribution facilitates faster learning of the scenario parameter distributions for new scenarios. We showcase our methodology using a cutting-edge 3D driving simulator, incorporating 16 fidelity settings for an autonomous vehicle stack that includes camera and lidar sensors. We evaluate various scenarios based on an autonomous vehicle pre-crash typology. Our approach achieves a significant speedup, up to 18 times faster than traditional methods that rely solely on a high-fidelity simulator.

Deep Normalizing Flows for State Estimation

Jun 27, 2023

Abstract:Safe and reliable state estimation techniques are a critical component of next-generation robotic systems. Agents in such systems must be able to reason about the intentions and trajectories of other agents for safe and efficient motion planning. However, classical state estimation techniques such as Gaussian filters often lack the expressive power to represent complex underlying distributions, especially if the system dynamics are highly nonlinear or if the interaction outcomes are multi-modal. In this work, we use normalizing flows to learn an expressive representation of the belief over an agent's true state. Furthermore, we improve upon existing architectures for normalizing flows by using more expressive deep neural network architectures to parameterize the flow. We evaluate our method on two robotic state estimation tasks and show that our approach outperforms both classical and modern deep learning-based state estimation baselines.
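
For context, the density a normalizing flow assigns to a state follows from the standard change-of-variables formula. The symbols below are assumptions introduced here for illustration (an invertible map $f_\theta$ from state $x$ to a latent $z$ with a simple base density $p_z$); the abstract does not specify the paper's notation or architecture.

$$
\log p_x(x) = \log p_z\big(f_\theta(x)\big) + \log \left| \det \frac{\partial f_\theta(x)}{\partial x} \right|
$$

A more expressive parameterization of $f_\theta$ lets the resulting belief capture multi-modal or strongly non-Gaussian distributions that a Gaussian filter cannot represent.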

Long Short-Term Memory for Spatial Encoding in Multi-Agent Path Planning

Mar 21, 2022

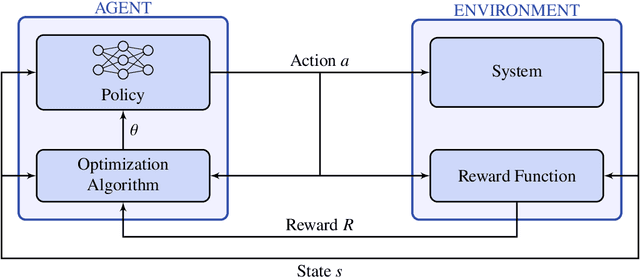

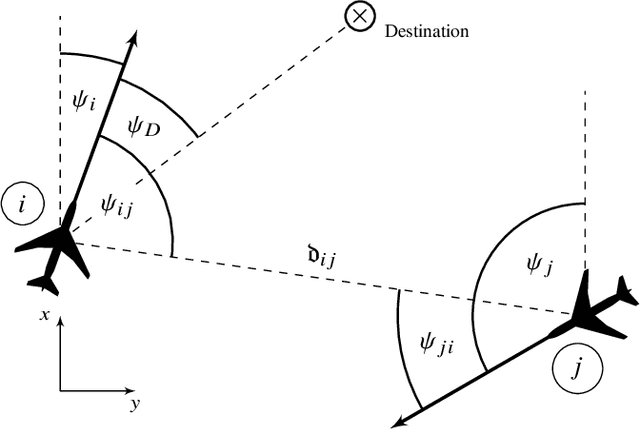

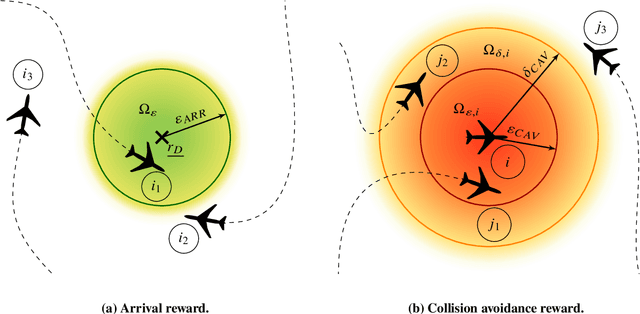

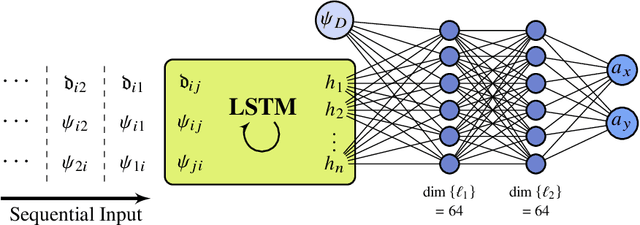

Abstract:Reinforcement learning-based path planning for multi-agent systems of varying size constitutes a research topic with increasing significance as progress in domains such as urban air mobility and autonomous aerial vehicles continues. Reinforcement learning with continuous state and action spaces is used to train a policy network that accommodates desirable path planning behaviors and can be used for time-critical applications. A Long Short-Term Memory module is proposed to encode an unspecified number of states for a varying, indefinite number of agents. The described training strategies and policy architecture lead to a guidance that scales to an infinite number of agents and unlimited physical dimensions, although training takes place at a smaller scale. The guidance is implemented on a low-cost, off-the-shelf onboard computer. The feasibility of the proposed approach is validated by presenting flight test results of up to four drones, autonomously navigating collision-free in a real-world environment.

* For associated source code, see https://github.com/MarcSchlichting/LSTMSpatialEncoding ; for the associated flight test video, see https://schlichting.page.link/lstm_flight_test ; 17 pages, 11 figures