Luca Tonin

Adaptive Gait Generation for Multi-Terrain Exoskeletons via Constrained Kernelized Movement Primitives

May 04, 2026

Abstract: Lower limb exoskeletons (LLEs) present the potential to make motor-impaired individuals walk again. Their application in real-world environments is still limited by the lack of effective adaptive gait planning. Indeed, current exoskeletons are meant to walk only on flat, even terrain. Generating environment-aware, physiologically consistent gait trajectories in real time is an open challenge. To overcome this, we propose a novel Kernelized Movement Primitives (KMP)-based framework for adaptive gait generation (AGG) across multiple indoor terrains. The proposed approach learns a probabilistic representation of human gait in both the joint and task spaces from a limited number of human demonstrations, representing natural gait characteristics and ensuring kinematic feasibility. In addition, the learned trajectories are adapted using environmental information extracted from an onboard RGB-D camera by treating the AGG as a linearly constrained optimization problem with via-points. The proposed method has been thoroughly validated first in simulations for gait generation in different scenarios, such as flat-ground walking, slopes, stairs, and obstacle crossing. Finally, the effectiveness and robustness of the method have been demonstrated with experiments on a commercial LLE in real-world scenarios. The results obtained demonstrate the feasibility of an environment-aware gait planning system for a new generation of intelligent lower limb exoskeletons for assisting people with disabilities in their everyday life.

GEGLU-Transformer for IMU-to-EMG Estimation with Few-Shot Adaptation

Apr 28, 2026

Abstract: Reliable estimation of neuromuscular activation is a key enabler for adaptive and personalized control in wearable robotics. However, surface electromyography (EMG) remains difficult to deploy robustly outside laboratory settings due to electrode sensitivity, signal non-stationarity, and strong subject dependence. In this work, we propose an adaptive IMU-to-EMG learning framework that reconstructs continuous muscle activation envelopes from wearable inertial measurements across heterogeneous movement conditions. The approach combines a Transformer encoder with Gaussian Error Gated Linear Units (GEGLU-Transformer) to enhance cross-subject generalization and enable rapid subject-specific personalization. Under a strict leave-one-subject-out (LOSO) protocol on a multi-condition lower-limb biomechanics dataset, the proposed architecture achieves r = 0.706 +/- 0.139 and R^2 = 0.474 +/- 0.208 without subject-specific adaptation. With only 0.5% adaptation data, performance increases to r = 0.761 +/- 0.030 and R^2 = 0.559 +/- 0.047, demonstrating rapid adaptation and early performance saturation. These results support attention-based architectures combined with lightweight adaptation as a practical and scalable alternative to direct EMG sensing for real-world wearable robotic applications.
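For readers unfamiliar with the gating unit named in this abstract, the following is a minimal sketch of a generic GEGLU layer, GEGLU(x) = GELU(xW + b) ⊙ (xV + c), written in plain Python for a single input vector. It is not taken from the paper: the function names (`gelu`, `linear`, `geglu`), the parameter names, and the toy dimensions are illustrative assumptions, and the authors' Transformer may place or size the unit differently.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # shape (d_in, d_out), row-major


def gelu(x: float) -> float:
    """Exact GELU: x * Phi(x), where Phi is the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(x: Vector, weight: Matrix, bias: Vector) -> Vector:
    """Compute x @ weight + bias for one input vector."""
    return [
        sum(x[i] * weight[i][j] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]


def geglu(
    x: Vector,
    w_gate: Matrix,
    b_gate: Vector,
    w_value: Matrix,
    b_value: Vector,
) -> Vector:
    """Generic GEGLU: GELU(x W + b) multiplied elementwise by (x V + c).

    All parameter names here are hypothetical, chosen for illustration.
    """
    gate = [gelu(g) for g in linear(x, w_gate, b_gate)]
    value = linear(x, w_value, b_value)
    return [g * v for g, v in zip(gate, value)]


if __name__ == "__main__":
    x = [1.0, 2.0]
    identity = [[1.0, 0.0], [0.0, 1.0]]
    zeros = [0.0, 0.0]
    print(geglu(x, identity, zeros, identity, zeros))
```

The GELU branch acts as a smooth, input-dependent gate on the linear branch: where the gate pre-activation is strongly negative the output is suppressed, and where it is large the gate passes roughly its own pre-activation value as a scale factor.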

OpenNav: Efficient Open Vocabulary 3D Object Detection for Smart Wheelchair Navigation

Aug 25, 2024

Abstract: Open vocabulary 3D object detection (OV3D) allows precise and extensible object recognition crucial for adapting to diverse environments encountered in assistive robotics. This paper presents OpenNav, a zero-shot 3D object detection pipeline based on RGB-D images for smart wheelchairs. Our pipeline integrates an open-vocabulary 2D object detector with a mask generator for semantic segmentation, followed by depth isolation and point cloud construction to create 3D bounding boxes. The smart wheelchair exploits these 3D bounding boxes to identify potential targets and navigate safely. We demonstrate OpenNav's performance through experiments on the Replica dataset and we report preliminary results with a real wheelchair. OpenNav significantly improves on the state of the art on the Replica dataset at mAP25 (+9pts) and mAP50 (+5pts), with marginal improvement at mAP. The code is publicly available at this link: https://github.com/EasyWalk-PRIN/OpenNav.
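The last stage described in this abstract (segmentation mask, then depth isolation, then point cloud, then 3D box) can be illustrated with a small sketch. This is not the OpenNav implementation: it assumes a pinhole camera with intrinsics `fx`, `fy`, `cx`, `cy`, it stands in for "depth isolation" with a simple median-depth band filter (the `band` parameter is hypothetical), and it produces an axis-aligned box in the camera frame.

```python
from statistics import median
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def masked_points(
    depth: Sequence[Sequence[float]],
    mask: Sequence[Sequence[bool]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
    band: float = 0.5,
) -> List[Point]:
    """Back-project masked pixels whose depth lies near the mask's median depth.

    Pixels with non-positive depth are treated as invalid. The median band is
    an illustrative stand-in for depth isolation, not the paper's method.
    """
    pixels = [
        (u, v, depth[v][u])
        for v in range(len(depth))
        for u in range(len(depth[v]))
        if mask[v][u] and depth[v][u] > 0.0
    ]
    if not pixels:
        return []
    z_med = median(z for _, _, z in pixels)
    # Pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy.
    return [
        ((u - cx) * z / fx, (v - cy) * z / fy, z)
        for u, v, z in pixels
        if abs(z - z_med) <= band
    ]


def axis_aligned_box(points: Sequence[Point]) -> Tuple[Point, Point]:
    """Return (min_corner, max_corner) of the points in the camera frame."""
    if not points:
        raise ValueError("cannot build a box from an empty point set")
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


if __name__ == "__main__":
    depth = [[2.0, 2.0, 2.0], [2.0, 2.0, 5.0], [2.0, 0.0, 2.0]]
    mask = [[True] * 3 for _ in range(3)]
    pts = masked_points(depth, mask, fx=1.0, fy=1.0, cx=1.0, cy=1.0)
    print(axis_aligned_box(pts))
```

Filtering around the median depth keeps background pixels that leak into a 2D mask from stretching the box along the viewing axis. A real system would likely use a more robust outlier rejection step and transform the box into the wheelchair's navigation frame.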

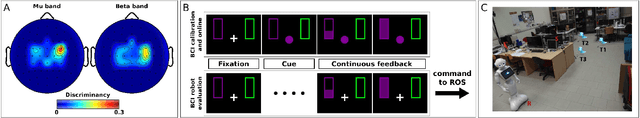

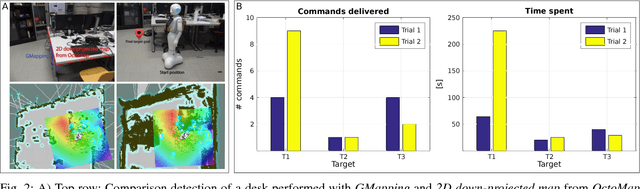

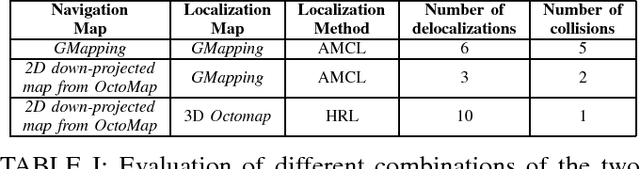

Brain-Computer Interface meets ROS: A robotic approach to mentally drive telepresence robots

Apr 04, 2019

Abstract: This paper shows and evaluates a novel approach to integrate a non-invasive Brain-Computer Interface (BCI) with the Robot Operating System (ROS) to mentally drive a telepresence robot. Controlling a mobile device by using human brain signals might improve the quality of life of people suffering from severe physical disabilities or elderly people who cannot move anymore. Thus, the BCI user is able to actively interact with relatives and friends located in different rooms thanks to a video streaming connection to the robot. To facilitate the control of the robot via BCI, we explore new ROS-based algorithms for navigation and obstacle avoidance, making the system safer and more reliable. In this regard, the robot can exploit two maps of the environment, one for localization and one for navigation, and both can be used also by the BCI user to watch the position of the robot while it is moving. As demonstrated by the experimental results, the user's cognitive workload is reduced, decreasing the number of commands necessary to complete the task and helping him/her to keep attention for longer periods of time.
