Longsheng Jiang

Autonomous Driving using Safe Reinforcement Learning by Incorporating a Regret-based Human Lane-Changing Decision Model

Oct 10, 2019

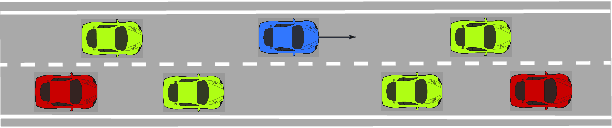

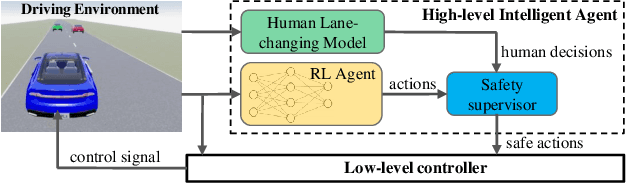

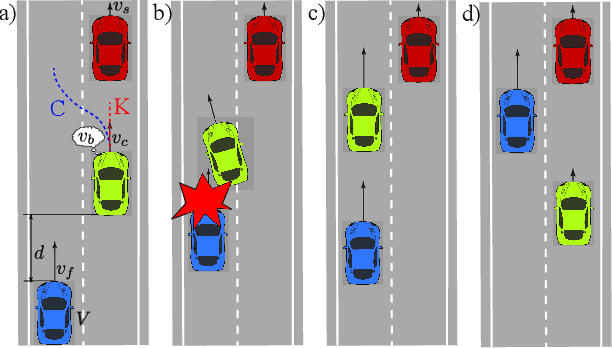

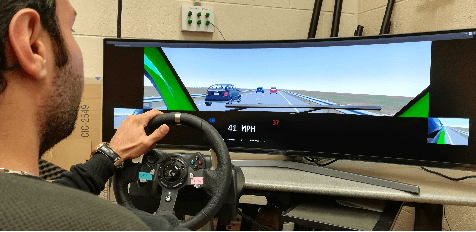

Abstract: Many human drivers are expected to keep driving themselves even once self-driving technologies are ready. Human-driven vehicles and autonomous vehicles (AVs) will therefore coexist in mixed traffic for a long time. To enable AVs to maneuver safely and efficiently in this mixed traffic, it is critical that AVs understand how humans cope with risk and make driving-related decisions. At the same time, the driving environment is highly dynamic, so it is difficult to enumerate all scenarios and hard-code the controllers. To address these challenges, in this work we incorporate a human decision-making model into reinforcement learning to control AVs for safe and efficient operation. Specifically, we adapt regret theory to describe a human driver's lane-changing behavior, and fit personalized models to individual drivers to predict their lane-changing decisions. The predicted decisions are incorporated into the safety constraints for reinforcement learning, both in training and in implementation. We then use an extended version of double deep Q-network (DDQN) to train our AV controller within the safety set. By doing so, the number of collisions in training is reduced to zero, while the training accuracy is not compromised.
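As a rough illustration of what training "within the safety set" could look like, the sketch below restricts greedy action selection to actions flagged as safe and then forms a standard Double DQN target (the online network selects the next action, the target network evaluates it). The abstract does not specify how the authors' extended DDQN works, so the masking scheme, the function names `masked_greedy_action` and `double_dqn_target`, and the three-action layout (left, keep, right) are assumptions made only for this example.

```python
from typing import Sequence


def masked_greedy_action(q_values: Sequence[float], safe_mask: Sequence[bool]) -> int:
    """Return the index of the highest-valued action among those flagged safe.

    Hypothetical helper: the safe_mask would come from a safety check, for
    example one built on predicted lane-changing decisions of nearby drivers.
    """
    safe = [i for i, ok in enumerate(safe_mask) if ok]
    if not safe:
        raise ValueError("safe set is empty")
    return max(safe, key=lambda i: q_values[i])


def double_dqn_target(
    reward: float,
    done: bool,
    q_online_next: Sequence[float],
    q_target_next: Sequence[float],
    safe_mask_next: Sequence[bool],
    gamma: float = 0.99,
) -> float:
    """Double DQN target with the next action restricted to the safe set."""
    if done:
        return float(reward)
    # Online network picks the action, target network scores it.
    a_star = masked_greedy_action(q_online_next, safe_mask_next)
    return float(reward + gamma * q_target_next[a_star])


# Illustrative numbers only: actions are (left, keep, right), and "keep"
# is assumed unsafe in the next state.
q_online_next = [1.0, 3.0, 2.0]
q_target_next = [0.5, 4.0, 1.5]
safe_mask_next = [True, False, True]

action = masked_greedy_action(q_online_next, safe_mask_next)
target = double_dqn_target(1.0, False, q_online_next, q_target_next, safe_mask_next, gamma=0.9)
```

Because unsafe actions are never selected, neither exploration nor the bootstrapped target ever relies on them, which is one plausible way to avoid collisions during training.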

Respect Your Emotion: Human-Multi-Robot Teaming based on Regret Decision Model

Sep 18, 2019

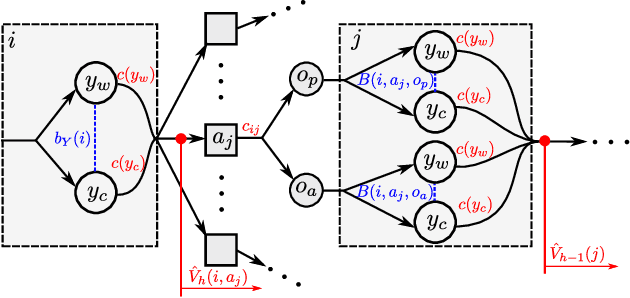

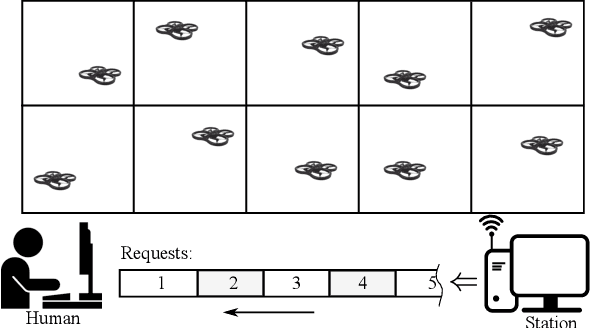

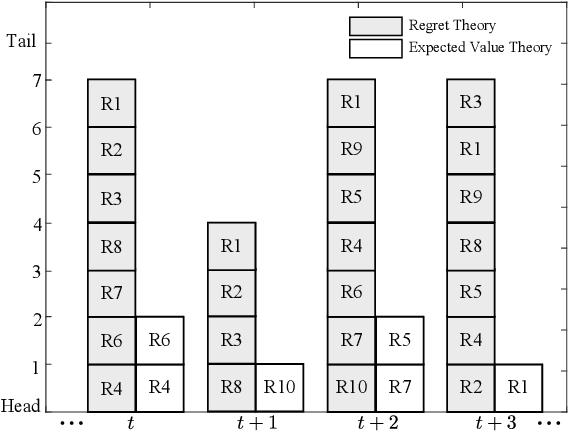

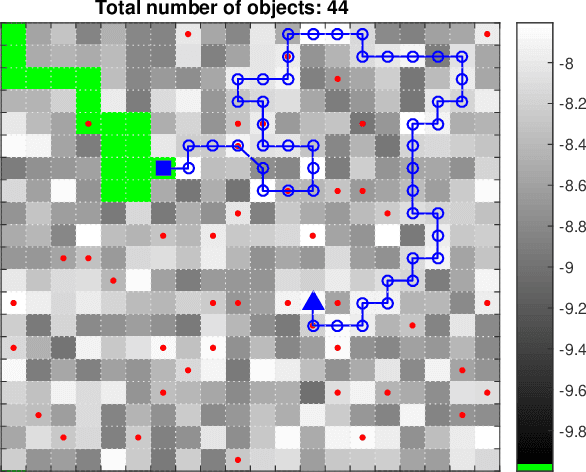

Abstract: When modeling human decision-making behavior in the context of human-robot teaming, the emotional aspect of the human is often ignored. Nevertheless, the influence of emotion is, in some cases, not only undeniable but beneficial. This work studies the human-like characteristics brought by the emotion of regret in one-human-multi-robot teaming for the application of domain search. In such an application, the task management load is outsourced to the robots to reduce the human's workload, freeing the human to do more important work. The regret decision model is first used by each robot to decide whether to request human service, and is then extended to optimally queue the requests from multiple robots. For the movement of the robots in the domain search, we design a path planning algorithm based on dynamic programming for each robot. The simulation shows that the human-like characteristics, namely risk-seeking and risk-aversion, indeed bring some appealing effects for balancing workload and performance in the human-multi-robot team.

* 8 pages, 4 figures, conference
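To make the idea of a regret-based choice concrete, here is a minimal sketch of a generic regret-theory comparison between two options. It is not the model from the paper: the power-form regret function `regret_q`, the exponent `alpha`, and the example payoffs are all assumptions chosen for illustration. The net advantage of option A over option B sums, over states, the probability times a skew-symmetric function of the utility difference; A is preferred when the sum is positive.

```python
import math
from typing import Sequence


def regret_q(diff: float, alpha: float = 2.0) -> float:
    """Skew-symmetric regret function: sign(diff) * |diff| ** alpha.

    With alpha > 1, large utility differences are weighted more than
    proportionally; alpha = 1 reduces to an expected-utility comparison.
    """
    return math.copysign(abs(diff) ** alpha, diff)


def net_advantage(
    probs: Sequence[float],
    utils_a: Sequence[float],
    utils_b: Sequence[float],
    alpha: float = 2.0,
) -> float:
    """Net advantage of option A over option B; positive means choose A."""
    return sum(p * regret_q(a - b, alpha) for p, a, b in zip(probs, utils_a, utils_b))


# Illustrative payoffs: a sure utility of 2 versus a gamble that pays 10
# with probability 0.2 and 0 otherwise (same expected utility of 2).
probs = [0.2, 0.8]
sure_thing = [2.0, 2.0]
gamble = [10.0, 0.0]

with_regret = net_advantage(probs, sure_thing, gamble, alpha=2.0)
without_regret = net_advantage(probs, sure_thing, gamble, alpha=1.0)
```

With `alpha=1.0` the two options come out as equivalent, while with `alpha=2.0` the net advantage of the sure option is negative, so the gamble is preferred. That is a risk-seeking choice driven by the anticipated regret of missing the large payoff.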
