Liyue Zhao

Discriminative adversarial networks for positive-unlabeled learning

Jun 06, 2019

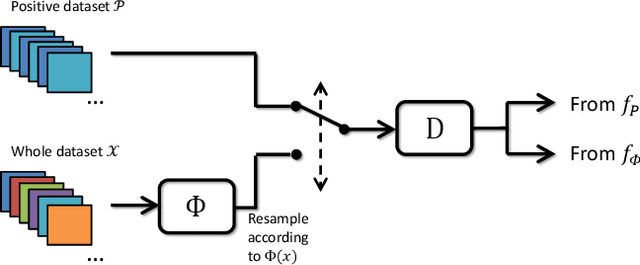

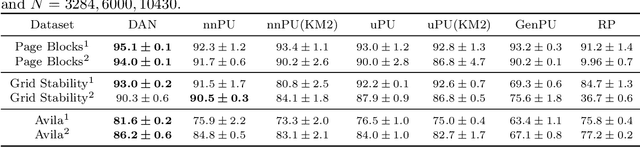

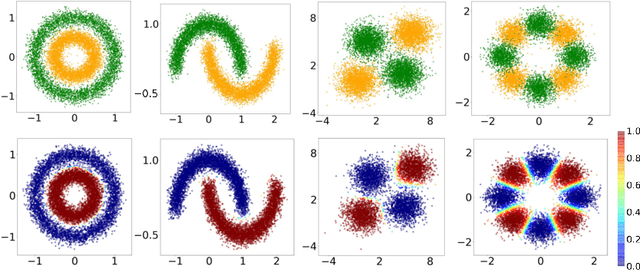

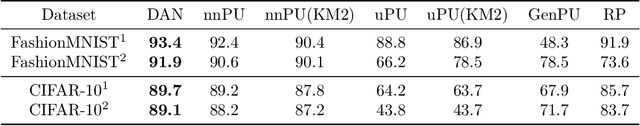

Abstract: As an important semi-supervised learning task, positive-unlabeled (PU) learning aims to learn a binary classifier from only positive and unlabeled data. In this article, we develop a novel PU learning framework, called discriminative adversarial networks (DAN), which consists of two discriminative models represented by deep neural networks. One model, $\Phi$, predicts the conditional probability of the positive label for a given sample and defines a Bayes classifier after training; the other model, $D$, distinguishes labeled positive data from the data that $\Phi$ identifies as positive. The two models are trained simultaneously in an adversarial manner, as in generative adversarial networks, and equilibrium is achieved when the output of $\Phi$ is close to the exact posterior probability of the positive class. In contrast with existing deep PU learning approaches, DAN does not require class-prior estimation, and its consistency can be proved under very general conditions. Numerical experiments demonstrate the effectiveness of the proposed framework.

An Active Learning Approach for Jointly Estimating Worker Performance and Annotation Reliability with Crowdsourced Data

Jan 16, 2014

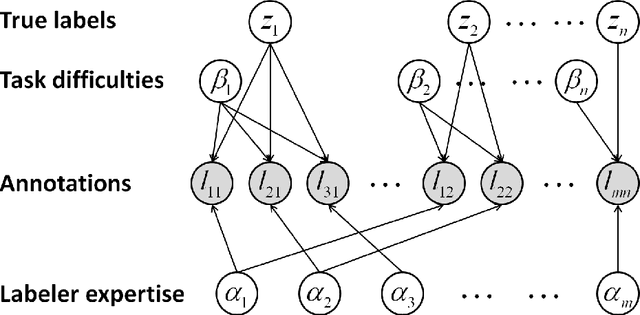

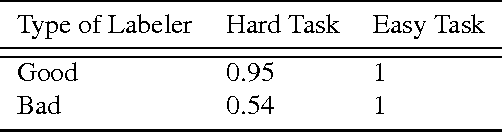

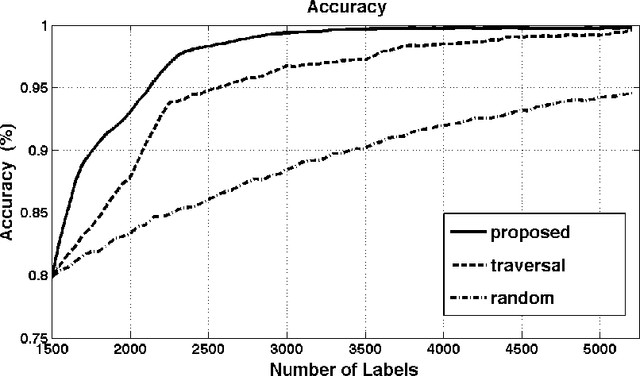

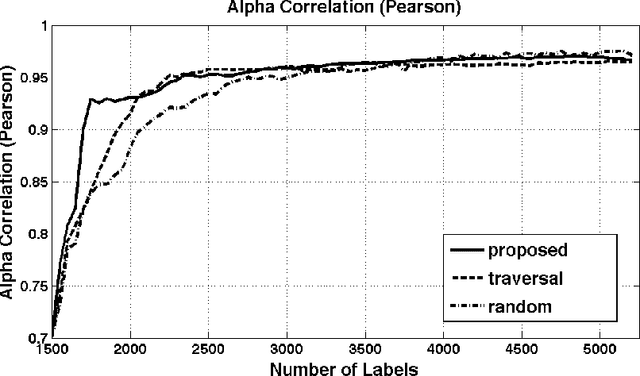

Abstract: Crowdsourcing platforms offer a practical solution to the problem of affordably annotating large datasets for training supervised classifiers. Unfortunately, poor worker performance frequently threatens to compromise annotation reliability, and requesting multiple labels for every instance can lead to large cost increases without guaranteeing good results. Minimizing the required training samples using an active learning selection procedure reduces the labeling requirement but can jeopardize classifier training by focusing on erroneous annotations. This paper presents an active learning approach in which worker performance, task difficulty, and annotation reliability are jointly estimated and used to compute the risk function guiding the sample selection procedure. We demonstrate that the proposed approach, which employs active learning with Bayesian networks, significantly improves training accuracy and correctly ranks the expertise of unknown labelers in the presence of annotation noise.
