Levin Gerdes

High-fidelity 3D reconstruction for planetary exploration

Feb 14, 2026

Abstract: Planetary exploration increasingly relies on autonomous robotic systems capable of perceiving, interpreting, and reconstructing their surroundings in the absence of global positioning or real-time communication with Earth. Rovers operating on planetary surfaces must navigate under severe environmental constraints, limited visual redundancy, and communication delays, making onboard spatial awareness and visual localization key components for mission success. Traditional techniques based on Structure-from-Motion (SfM) and Simultaneous Localization and Mapping (SLAM) provide geometric consistency but struggle to capture radiometric detail or to scale efficiently in unstructured, low-texture terrains typical of extraterrestrial environments. This work explores the integration of radiance field-based methods, specifically Neural Radiance Fields (NeRF) and Gaussian Splatting, into a unified, automated environment reconstruction pipeline for planetary robotics. Our system combines the Nerfstudio and COLMAP frameworks with a ROS2-compatible workflow capable of processing raw rover data directly from rosbag recordings. This approach enables the generation of dense, photorealistic, and metrically consistent 3D representations from minimal visual input, supporting improved perception and planning for autonomous systems operating in planetary-like conditions. The resulting pipeline establishes a foundation for future research in radiance field-based mapping, bridging the gap between geometric and neural representations in planetary exploration.

* 7 pages, 3 figures, conference paper

OmniUnet: A Multimodal Network for Unstructured Terrain Segmentation on Planetary Rovers Using RGB, Depth, and Thermal Imagery

Aug 01, 2025

Abstract: Robot navigation in unstructured environments requires multimodal perception systems that can support safe navigation. Multimodality enables the integration of complementary information collected by different sensors. However, this information must be processed by machine learning algorithms specifically designed to leverage heterogeneous data. Furthermore, it is necessary to identify which sensor modalities are most informative for navigation in the target environment. In Martian exploration, thermal imagery has proven valuable for assessing terrain safety due to differences in thermal behaviour between soil types. This work presents OmniUnet, a transformer-based neural network architecture for semantic segmentation using RGB, depth, and thermal (RGB-D-T) imagery. A custom multimodal sensor housing was developed using 3D printing and mounted on the Martian Rover Testbed for Autonomy (MaRTA) to collect a multimodal dataset in the Bardenas semi-desert in northern Spain. This location serves as a representative environment of the Martian surface, featuring terrain types such as sand, bedrock, and compact soil. A subset of this dataset was manually labeled to support supervised training of the network. The model was evaluated both quantitatively and qualitatively, achieving a pixel accuracy of 80.37% and demonstrating strong performance in segmenting complex unstructured terrain. Inference tests yielded an average prediction time of 673 ms on a resource-constrained computer (Jetson Orin Nano), confirming its suitability for on-robot deployment. The software implementation of the network and the labeled dataset have been made publicly available to support future research in multimodal terrain perception for planetary robotics.
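For readers unfamiliar with the pixel accuracy metric quoted in the abstract, the following is a minimal sketch of how such a score is commonly computed: the fraction of labeled pixels whose predicted class matches the ground truth. It is not taken from the OmniUnet code; the function name `pixel_accuracy`, the `ignore_label` parameter, and the class ids in the example are assumptions made for illustration.

```python
def pixel_accuracy(pred, target, ignore_label=None):
    """Fraction of labeled pixels whose predicted class equals the target class.

    pred and target are 2D lists of integer class ids with the same shape.
    Pixels whose target equals ignore_label (hypothetical "unlabeled" id)
    are skipped.
    """
    correct = 0
    total = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if ignore_label is not None and t == ignore_label:
                continue  # unlabeled pixel, not counted
            total += 1
            correct += int(p == t)
    if total == 0:
        raise ValueError("no labeled pixels to evaluate")
    return correct / total


# Hypothetical class ids: 0 = sand, 1 = bedrock, 2 = compact soil
target = [[0, 0, 1],
          [2, 2, 1]]
pred = [[0, 1, 1],
        [2, 2, 0]]
accuracy = pixel_accuracy(pred, target)  # 4 of 6 pixels match
```

Pixel accuracy weights every pixel equally, so frequent terrain classes dominate the score; per-class metrics such as intersection over union are often reported alongside it for that reason.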

Field Assessment of Force Torque Sensors for Planetary Rover Navigation

Nov 07, 2024

Abstract: Proprioceptive sensors on planetary rovers serve for state estimation and for understanding terrain and locomotion performance. While inertial measurement units (IMUs) are widely used for this purpose, force-torque sensors are less explored for planetary navigation despite their potential to directly measure interaction forces and provide insights into traction performance. This paper presents an evaluation of the performance and use cases of force-torque sensors based on data collected from a six-wheeled rover during tests over varying terrains, speeds, and slopes. We discuss challenges, such as sensor signal reliability and terrain response accuracy, and identify opportunities regarding the use of these sensors. The data is openly accessible and includes force-torque measurements from each of the six wheel assemblies as well as IMU data from within the rover chassis. This paper aims to inform the design of future studies and rover upgrades, particularly in sensor integration and control algorithms, to improve navigation capabilities.

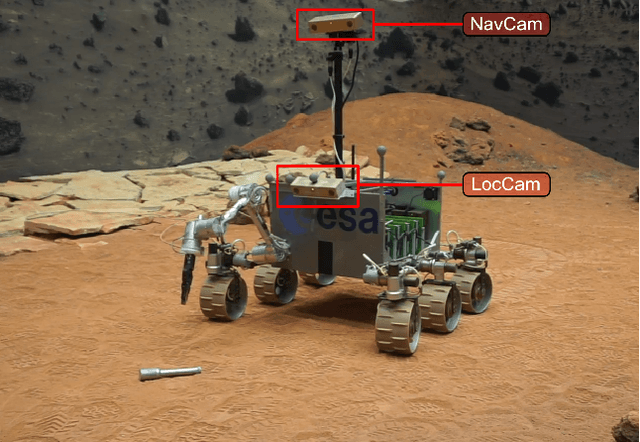

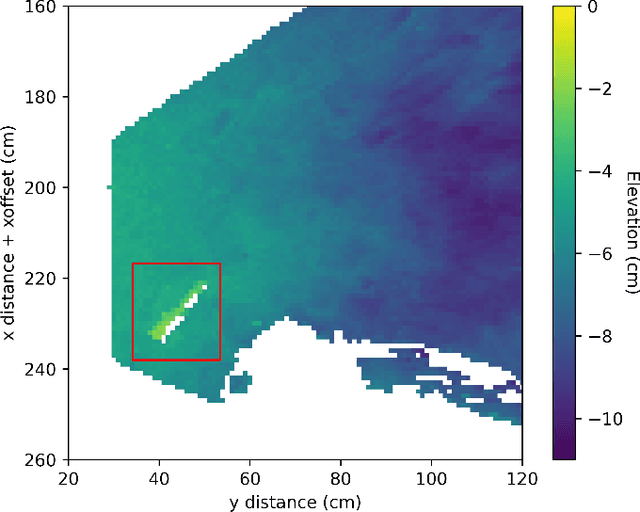

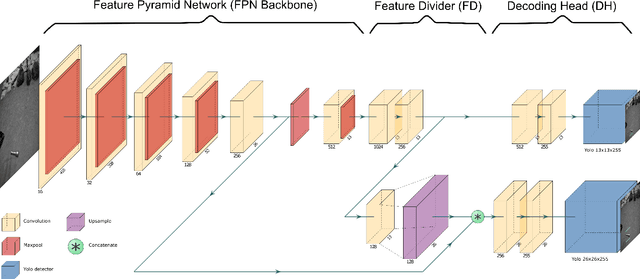

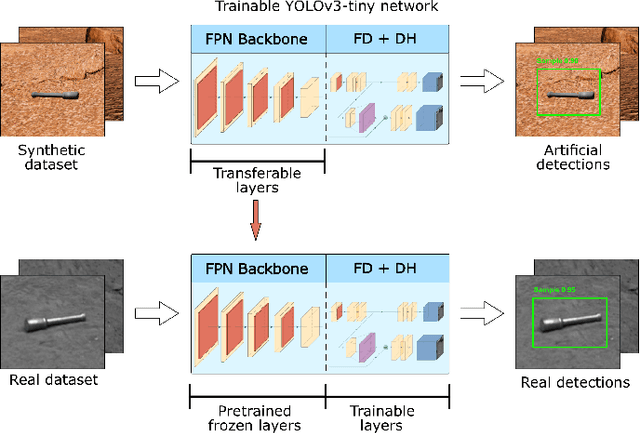

Hardware-accelerated Mars Sample Localization via deep transfer learning from photorealistic simulations

Jun 06, 2022

Abstract: The goal of the Mars Sample Return campaign is to collect soil samples from the surface of Mars and return them to Earth for further study. The samples will be acquired and stored in metal tubes by the Perseverance rover and deposited on the Martian surface. As part of this campaign, it is expected that the Sample Fetch Rover will be in charge of localizing and gathering up to 35 sample tubes over 150 Martian sols. Autonomous capabilities are critical for the success of the overall campaign and for the Sample Fetch Rover in particular. This work proposes a novel approach for the autonomous detection and pose estimation of the sample tubes. For the detection stage, a Deep Neural Network and transfer learning from a synthetic dataset are proposed. The dataset is created from photorealistic 3D simulations of Martian scenarios. Additionally, Computer Vision techniques are used to estimate the poses of the detected sample tubes. Finally, laboratory tests of the Sample Localization procedure are performed using the ExoMars Testing Rover on a Mars-like testbed. These tests validate the proposed approach on different hardware architectures, providing promising results for sample detection and pose estimation.

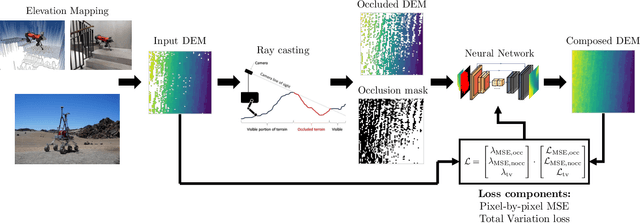

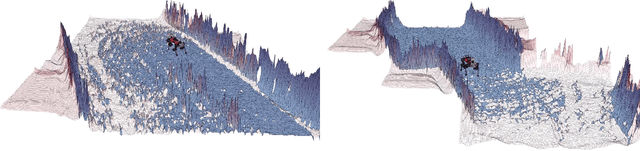

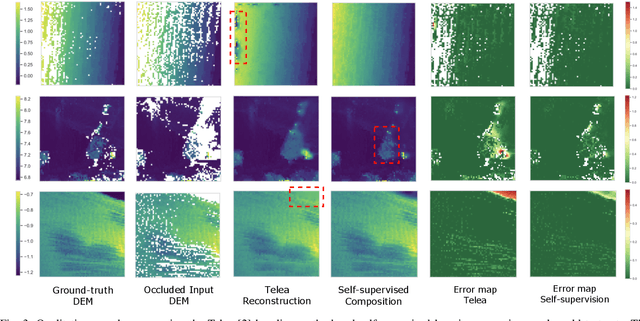

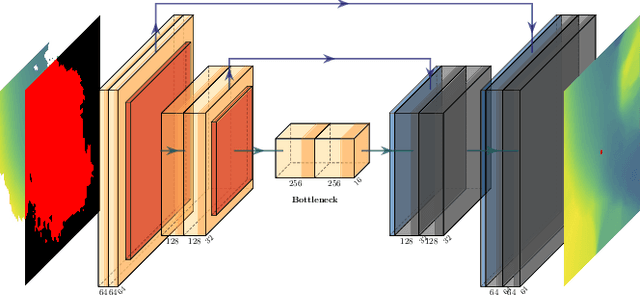

Solving Occlusion in Terrain Mapping with Neural Networks

Sep 15, 2021

Abstract: Accurate and complete terrain maps enhance the awareness of autonomous robots and enable safe and optimal path planning. Rocks and topography often create occlusions and lead to missing elevation information in the Digital Elevation Map (DEM). Currently, autonomous mobile robots mostly use traditional inpainting techniques based on diffusion or patch-matching to fill in incomplete DEMs. These methods cannot leverage the high-level terrain characteristics and the geometric constraints of line of sight that we humans use intuitively to predict occluded areas. We propose to use neural networks to reconstruct the occluded areas in DEMs. We introduce a self-supervised learning approach capable of training on real-world data without a need for ground-truth information. We accomplish this by using ray casting to add artificial occlusion to the incomplete elevation maps constructed on a real robot. We first evaluate a supervised learning approach on synthetic data for which we have the full ground-truth available and subsequently move to several real-world datasets. These real-world datasets were recorded during autonomous exploration of both structured and unstructured terrain with a legged robot, and additionally in a planetary scenario on Lunar analogue terrain. We report a significant improvement compared to the Telea and Navier-Stokes baseline methods both on synthetic terrain and for the real-world datasets. Our neural network is able to run in real-time on both CPU and GPU with suitable sampling rates for autonomous ground robots.
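The self-supervision idea in this abstract, hiding known cells by simulating what a sensor could not see, can be illustrated with a minimal sketch. It is not the authors' implementation: it works on a 1D elevation profile rather than a 2D DEM, assumes unit cell spacing and a complete input profile, and the names `visible_mask`, `add_artificial_occlusion`, and the sensor parameters are hypothetical.

```python
import math


def visible_mask(heights, sensor_index, sensor_height):
    """Line-of-sight test along a 1D elevation profile (unit cell spacing).

    A cell is visible if the slope of the ray from the sensor to that cell
    is at least as steep as the ray to every cell between them.
    """
    n = len(heights)
    eye = heights[sensor_index] + sensor_height  # sensor elevation
    visible = [False] * n
    visible[sensor_index] = True
    for step in (1, -1):  # cast rays to the right, then to the left
        max_slope = -math.inf
        j = sensor_index + step
        while 0 <= j < n:
            slope = (heights[j] - eye) / abs(j - sensor_index)
            if slope >= max_slope:
                visible[j] = True
                max_slope = slope  # this cell now bounds the horizon
            j += step
    return visible


def add_artificial_occlusion(heights, sensor_index, sensor_height):
    """Return (input with occluded cells set to None, visibility mask).

    The hidden original values can then serve as reconstruction targets.
    """
    mask = visible_mask(heights, sensor_index, sensor_height)
    occluded = [h if v else None for h, v in zip(heights, mask)]
    return occluded, mask


# A 1 m rock at index 2 hides the terrain behind it from a sensor at index 0.
profile = [0.0, 0.0, 1.0, 0.0, 0.0, 0.2]
occluded_input, mask = add_artificial_occlusion(profile, 0, 0.5)
```

In this toy setup the network input would be `occluded_input` and the training target the original `profile` values at the cells where `mask` is false, so no external ground truth is needed.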

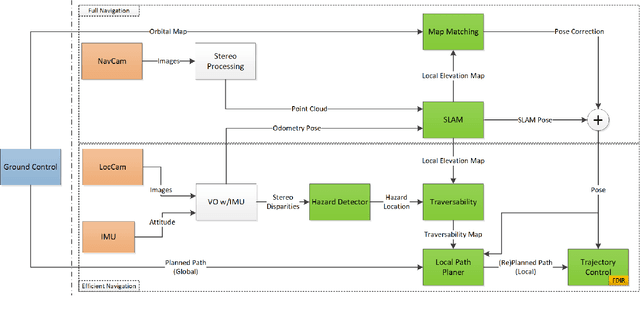

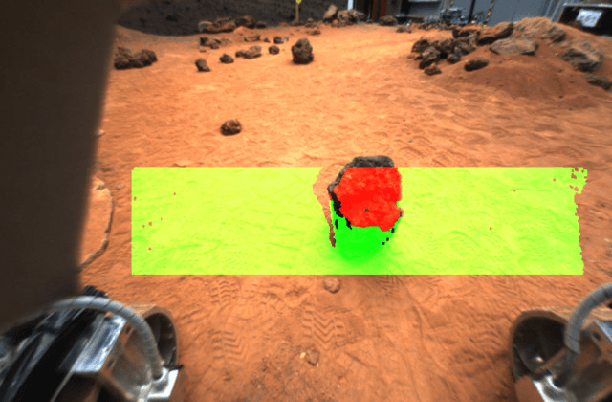

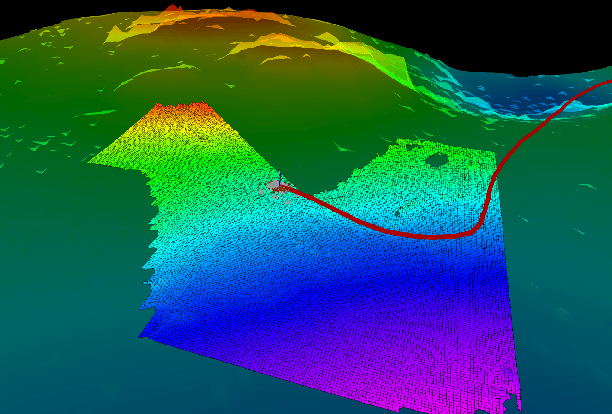

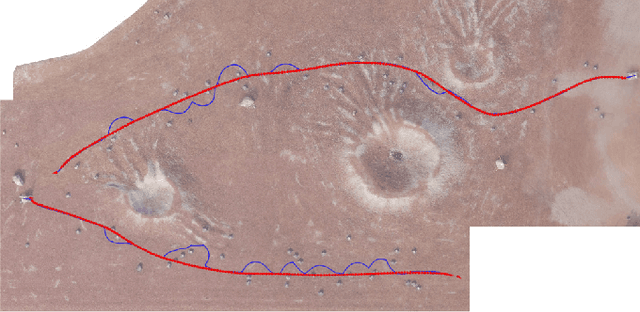

A GNC Architecture for Planetary Rovers with Autonomous Navigation Capabilities

Nov 22, 2019

Abstract: This paper proposes a Guidance, Navigation, and Control (GNC) architecture for planetary rovers targeting the conditions of upcoming Mars exploration missions such as Mars 2020 and the Sample Fetching Rover (SFR). The navigation requirements of these missions demand a control architecture featuring autonomous capabilities to achieve a fast and long traverse. The proposed solution presents a two-level architecture where the efficient navigation (low) level is always active and the full navigation (upper) level is enabled according to the difficulty of the terrain. The first level is an efficient implementation of the basic functionalities for autonomous navigation based on hazard detection, local path replanning, and trajectory control with visual odometry. The second level implements an adaptive SLAM algorithm that improves the relative localization, evaluates the traversability of the terrain ahead for more optimal path planning, and performs global (absolute) localization that corrects the pose drift during longer traverses. The architecture provides a solution for long-range, low-supervision, and fast planetary exploration. Both navigation levels have been validated in planetary analogue field test campaigns.
