Laurent Clavier

IEMN, CERI SN - IMT Nord Europe, IRCICA, IMT Nord Europe

Gaussian Phase Noise Effects on Hybrid Precoding MIMO Systems for Sub-THz Transmission

Mar 25, 2026

Abstract: The sub-THz spectrum offers numerous advantages, including massive multiple-input multiple-output (MIMO) technology with large antenna arrays that enhance spectral efficiency (SE) of future systems. Hybrid precoding (HP) thus emerges as a cost-effective alternative to fully digital precoding regarding complexity and energy consumption. However, sub-THz frequencies introduce hardware challenges, particularly phase noise (PN) from local oscillators (LOs). We analyze PN impact on MIMO systems using HP, leveraging singular value decomposition and common LO architecture. We adopt the Gaussian PN (GPN) model, recognized as accurate for describing PN behavior in sub-THz transmissions. We derive a lower bound on achievable SE and provide closed-form bit error rate expressions for quadrature amplitude modulation (QAM), specifically 4-QAM and 16-QAM, under high-SNR and strong GPN conditions. These analytical results are validated through Monte Carlo simulations. We show that GPN can be effectively counteracted with a single pilot symbol in single-user MIMO systems, unlike single-input single-output systems where mitigation proves infeasible. Simulation results compare conventional QAM against polar-QAM tailored for GPN-impaired systems. Finally, we introduce perspectives for further improvements in performance and energy efficiency.

Exploiting Spatial Modulation for Strong Phase Noise Mitigation in mmWave Massive MIMO

Mar 11, 2026

Abstract: This letter investigates phase noise (PN) mitigation in generalized receiver spatial modulation (GRSM) massive MIMO systems at mmWave under a common local oscillator (CLO). Under CLO, the received energy remains invariant relative to the no-PN scenario, enabling reliable energy-based spatial detection using the no-PN threshold. PN-sensitivity and geometry-based metrics are introduced to design compact, PN-resilient MQAM symbol pools with low detection complexity. PN robustness is further improved through an enhanced PN-aware GRSM-MQAM system that exploits spatial modulation (SM) to recover part of the MQAM bits and strategically maps spatial-pattern Hamming weights to reduce the effective PN impact. In addition, a practical single-stage PN estimation/compensation architecture is proposed, while a benchmark double-stage compensation is adopted to quantify the upper bound achievable via separate Tx/Rx PN mitigation. Results show that under PN, the overall BER is mainly dominated by MQAM symbol detection errors, especially for denser constellations, whereas spatial detection remains robust. The proposed single-stage compensation improves PN resilience, while the benchmark double-stage compensation approaches near PN-free performance.
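
The energy-invariance claim under a common LO can be illustrated with a minimal, noise-free sketch. It assumes (these are illustrative choices, not taken from the letter) a random Rayleigh channel `H`, 4-QAM symbols `x`, and a single Gaussian phase sample `phi` shared by all receive antennas. A common phase rotation changes the received samples but not their per-antenna energy, which is why an energy threshold tuned without PN can still be applied.

```python
import numpy as np

rng = np.random.default_rng(0)
n_rx, n_tx = 8, 4  # hypothetical array sizes

# Hypothetical Rayleigh channel and unit-energy 4-QAM transmit vector.
H = (rng.standard_normal((n_rx, n_tx))
     + 1j * rng.standard_normal((n_rx, n_tx))) / np.sqrt(2)
x = (rng.choice([-1, 1], n_tx) + 1j * rng.choice([-1, 1], n_tx)) / np.sqrt(2)

# One Gaussian phase-noise sample (radians) shared by every antenna,
# which is the assumed effect of a common local oscillator.
phi = rng.normal(0.0, 0.3)

y_clean = H @ x                      # received vector without PN
y_pn = np.exp(1j * phi) * y_clean    # same vector rotated by the common PN

# Per-antenna received energy with and without PN.
energy_clean = np.abs(y_clean) ** 2
energy_pn = np.abs(y_pn) ** 2
```

Here `energy_pn` matches `energy_clean` even though `y_pn` differs from `y_clean`. With independent oscillators per antenna the rotation would no longer factor out of `H @ x` in the same way, so this sketch only covers the common-LO case.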

On the Ambiguity Function of OFDM-based ISAC Signals Under Non-Ideal Power Amplifiers

Dec 10, 2025

Abstract: Integrated Sensing and Communications (ISAC) has garnered significant attention as a promising technology for next-generation wireless and vehicular communications. Among candidate waveforms, Orthogonal Frequency Division Multiplexing (OFDM) has been extensively investigated over the past decade for its robustness against frequency-selective fading and its favorable ranging performance. However, the waveform's sensing and communication (S&C) performance depends strongly on the modulation scheme; while variable-amplitude constellations such as quadrature amplitude modulation (QAM) are more efficient for communication, constant-modulus modulations such as phase shift keying (PSK) are more suitable for sensing. Yet, it remains unclear whether these findings persist under power amplifier (PA) nonlinearity. Because OFDM signals exhibit a high peak-to-average power ratio (PAPR), they require highly linear PAs to avoid distortion, which conflicts with radar requirements, where high transmit power is always beneficial for sensing. In this work, we analyze the effect of PA-induced distortions on the sensing task for PSK and QAM constellations. By introducing the Signal-to-Distortion Ratio (SDR), we examine the extent of the distortion limitation on the ranging task. We complement simulation results with a theoretical characterization of the ambiguity function (AF), thereby explicitly demonstrating how distortion artifacts manifest in the zero-Doppler sidelobes (i.e., ranging sidelobes) and the zero-delay sidelobes. Simulations show that PA distortions impose a palpable performance ceiling for both constellations, reshape the AF, and reduce detection probability, diminishing the theoretical advantage of unimodular signaling and further compromising the OFDM sensing performance with non-uniform envelope signals.

Explainable AI for Enhancing Efficiency of DL-based Channel Estimation

Jul 09, 2024

Abstract: The support of artificial intelligence (AI) based decision-making is a key element in future 6G networks, where the concept of native AI will be introduced. Moreover, AI is widely employed in different critical applications such as autonomous driving and medical diagnosis. In such applications, using AI as black-box models is risky and challenging. Hence, it is crucial to understand and trust the decisions taken by these models. Tackling this issue can be achieved by developing explainable AI (XAI) schemes that aim to explain the logic behind the black-box model behavior, and thus, ensure its efficient and safe deployment. Recently, we proposed a novel perturbation-based XAI-CHEST framework that is oriented toward channel estimation in wireless communications. The core idea of the XAI-CHEST framework is to identify the relevant model inputs by inducing high noise on the irrelevant ones. This manuscript provides the detailed theoretical foundations of the XAI-CHEST framework. In particular, we derive the analytical expressions of the XAI-CHEST loss functions and the noise threshold fine-tuning optimization problem. Hence, the designed XAI-CHEST delivers a smart input feature selection methodology that can further improve the overall performance while optimizing the architecture of the employed model. Simulation results show that the XAI-CHEST framework provides valid interpretations, offering an improved bit error rate performance while reducing the required computational complexity in comparison to the classical DL-based channel estimation.

GLIP: Electromagnetic Field Exposure Map Completion by Deep Generative Networks

May 06, 2024

Abstract: In spectrum cartography (SC), the generation of exposure maps for radio frequency electromagnetic fields (RF-EMF) spans dimensions of frequency, space, and time, which relies on a sparse collection of sensor data, posing a challenging ill-posed inverse problem. Cartography methods based on models integrate designed priors, such as sparsity and low-rank structures, to refine the solution of this inverse problem. In our previous work, EMF exposure map reconstruction was achieved by Generative Adversarial Networks (GANs) where physical laws or structural constraints were employed as a prior, but they require a large amount of labeled data or simulated full maps for training to produce efficient results. In this paper, we present a method to reconstruct EMF exposure maps using only the generator network in GANs which does not require explicit training, thus overcoming the limitations of GANs, such as using reference full exposure maps. This approach uses a prior from sensor data as Local Image Prior (LIP) captured by deep convolutional generative networks independent of learning the network parameters from images in an urban environment. Experimental results show that, even when only sparse sensor data are available, our method can produce accurate estimates.

On exploiting the synaptic interaction properties to obtain frequency-specific neurons

Nov 17, 2023

Abstract: Energy consumption remains one of the main limiting factors in many IoT applications. In particular, micro-controllers consume far too much power. In order to overcome this problem, new circuit designs have been proposed, and the use of spiking neurons and analog computing has emerged because it allows a very significant reduction in consumption. However, working in the analog domain makes it difficult to handle the sequential processing of incoming signals, as is needed in many use cases. In this paper, we use a bio-inspired phenomenon called Interacting Synapses to produce a time filter, without using non-biological techniques such as synaptic delays. We propose a model of neuron and synapses that fire for a specific range of delays between two incoming spikes, but do not react when this Inter-Spike Timing is not in that range. We study the parameters of the model to understand how to choose them and adapt the Inter-Spike Timing. The originality of the paper is to propose a new way, in the analog domain, to deal with temporal sequences.

Towards Explainable AI for Channel Estimation in Wireless Communications

Jul 03, 2023

Abstract: Research into 6G networks has been initiated to support a variety of critical artificial intelligence (AI) assisted applications such as autonomous driving. In such applications, AI-based decisions should be performed in a real-time manner. These decisions include resource allocation, localization, channel estimation, etc. Considering the black-box nature of existing AI-based models, it is highly challenging to understand and trust the decision-making behavior of such models. Therefore, explaining the logic behind those models through explainable AI (XAI) techniques is essential for their employment in critical applications. This manuscript proposes a novel XAI-based channel estimation (XAI-CHEST) scheme that provides detailed reasonable interpretability of the deep learning (DL) models that are employed in doubly-selective channel estimation. The aim of the proposed XAI-CHEST scheme is to identify the relevant model inputs by inducing high noise on the irrelevant ones. As a result, the behavior of the studied DL-based channel estimators can be further analyzed and evaluated based on the generated interpretations. Simulation results show that the proposed XAI-CHEST scheme provides valid interpretations of the DL-based channel estimators for different scenarios.

Gaussian Process-based Spatial Reconstruction of Electromagnetic fields

Mar 03, 2022

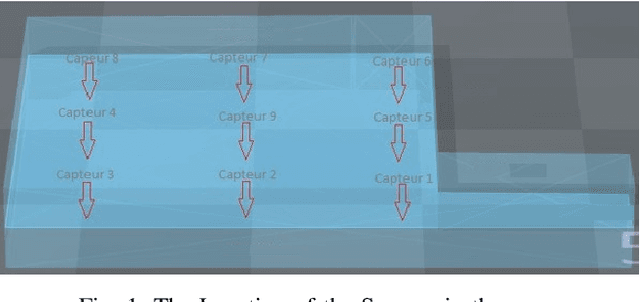

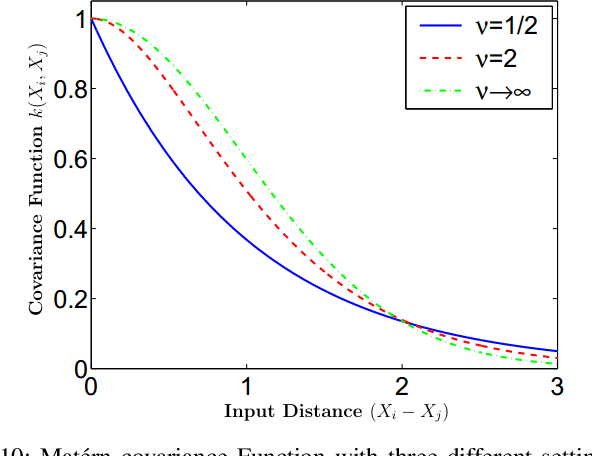

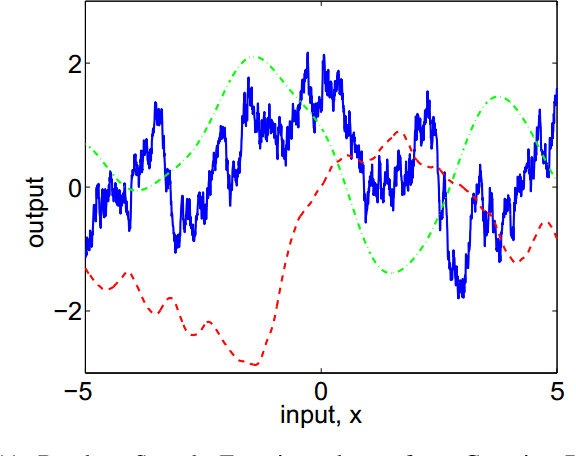

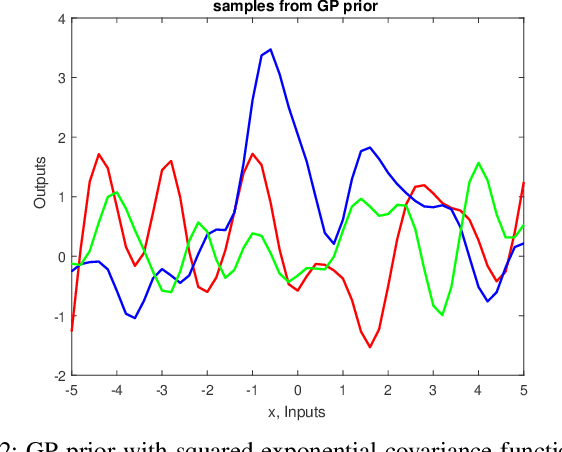

Abstract: These days we live in a world with a permanent electromagnetic field. This raises many questions about our health and the deployment of new equipment. The problem is that these fields remain difficult to visualize, and only some experts can interpret them. To tackle this problem, we propose to spatially estimate the level of the field at all positions of the considered space based on a few observations. This work presents an algorithm for spatial reconstruction of electromagnetic fields using a Gaussian Process. We consider a spatial, physical phenomenon observed by a sensor network. A Gaussian Process regression model with selected mean and covariance functions is implemented to develop a 9-sensor estimation algorithm. A Bayesian inference approach is used to perform the model selection of the covariance function and to learn the hyperparameters from our data set. We present the prediction performance of the proposed model and compare it with the case where the mean is zero. The results show that the proposed Gaussian Process-based prediction model reconstructs the EM fields at all positions using only 9 sensors.
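
As a rough illustration of the idea, Gaussian Process regression from nine sensor readings can be sketched in a few lines of NumPy. Everything specific here is an assumption made for the example and not the paper's configuration: the squared-exponential kernel, its fixed `length_scale`, the constant mean set to the average reading, the 3x3 sensor layout, and the synthetic `field` function. Hyperparameter learning and covariance model selection, which the abstract describes, are not shown.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential covariance between two sets of 2-D points."""
    d2 = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return variance * np.exp(-0.5 * d2 / length_scale ** 2)

def gp_predict(x_train, y_train, x_test, mean=0.0, noise=1e-6, **kern):
    """GP posterior mean and variance at x_test for a constant prior mean."""
    K = rbf_kernel(x_train, x_train, **kern) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_test, x_train, **kern)
    alpha = np.linalg.solve(K, y_train - mean)
    mu = mean + K_s @ alpha
    v = np.linalg.solve(K, K_s.T)
    var = rbf_kernel(x_test, x_test, **kern).diagonal() - np.sum(K_s * v.T, axis=1)
    return mu, var

# Hypothetical layout: 9 sensors on a 3x3 grid over the unit square.
g = np.linspace(0.0, 1.0, 3)
sensors = np.array([(x, y) for x in g for y in g])

# Synthetic stand-in for the measured field level (not real data).
field = lambda p: 1.0 + np.sin(2 * p[:, 0]) * np.cos(2 * p[:, 1])
readings = field(sensors)

# Reconstruct the field on a dense 20x20 grid, and also at the sensors.
dense = np.linspace(0.0, 1.0, 20)
grid = np.array([(x, y) for x in dense for y in dense])
prior_mean = readings.mean()
mu_map, var_map = gp_predict(sensors, readings, grid,
                             mean=prior_mean, length_scale=0.5)
mu_at_sensors, var_at_sensors = gp_predict(sensors, readings, sensors,
                                           mean=prior_mean, length_scale=0.5)
```

Passing `mean=0.0` instead of `prior_mean` gives the zero-mean variant that the abstract uses as a comparison point. The posterior variance `var_map` indicates where the reconstruction is least constrained by the sensors.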

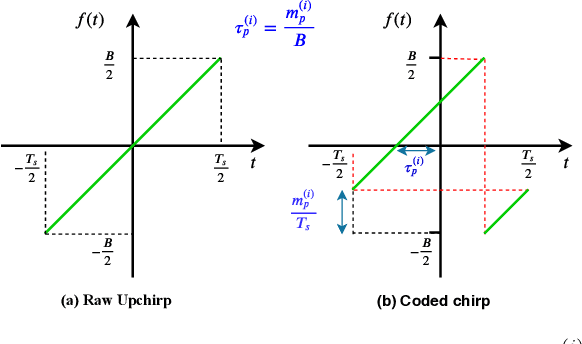

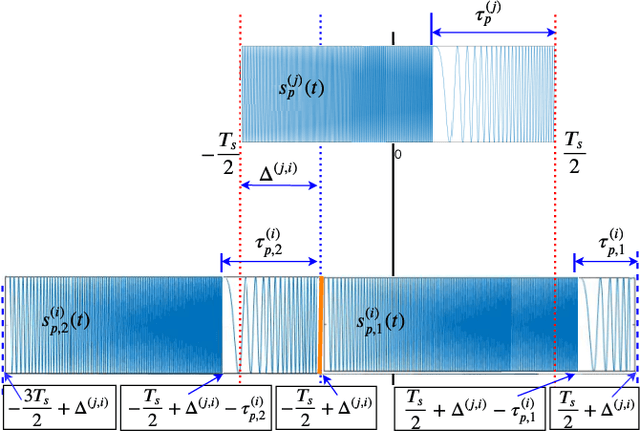

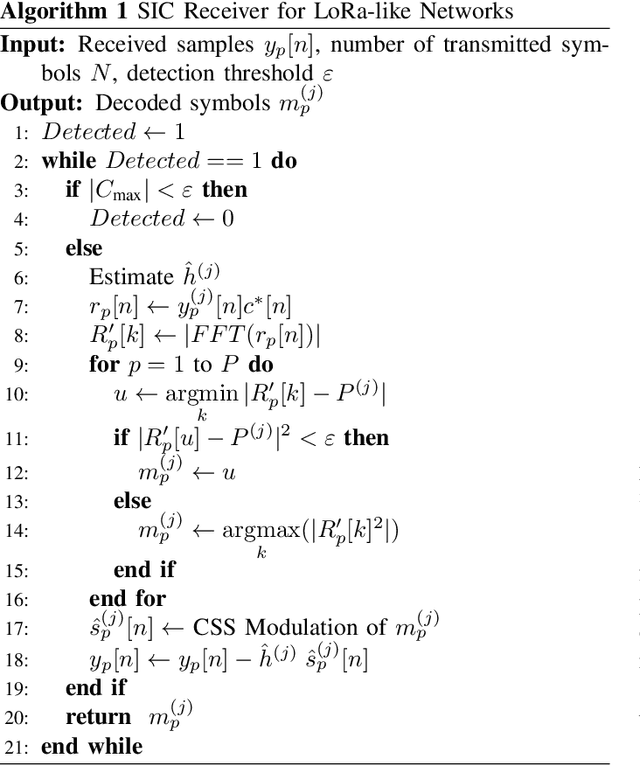

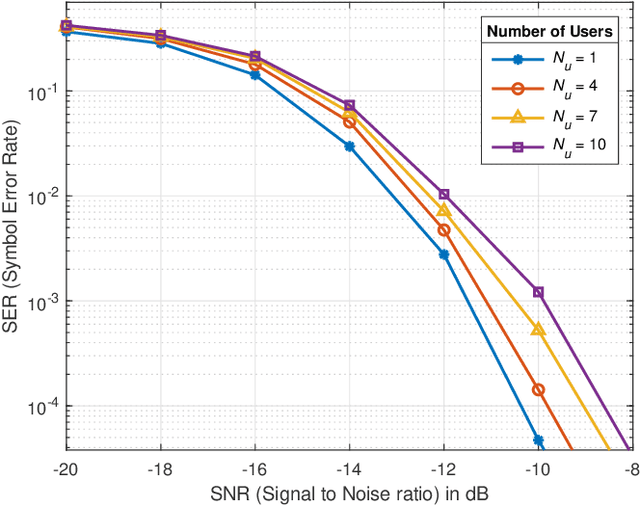

Serial Interference Cancellation for Improving uplink in LoRa-like Networks

Mar 04, 2021

Abstract: In this paper, we present a new receiver design that significantly improves performance in Internet of Things networks such as LoRa, i.e., networks that use chirp spread spectrum modulation. The proposed receiver is able to demodulate multiple users transmitting simultaneously over the same frequency channel with the same spreading factor. From a non-orthogonal multiple access point of view, it is based on the power domain and uses serial interference cancellation. Simulation results show that the receiver allows a significant increase in the number of connected devices in the network.
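
The power-domain principle can be sketched for two users as follows. This is a simplified illustration under assumptions that are not from the paper: both users are perfectly symbol-synchronized, each has a flat complex gain, the chirp is sampled at one sample per chip, and the helper names (`chirp`, `demod`, `sic_two_users`) are hypothetical. The receiver dechirps and takes an FFT to detect the strongest user, rebuilds and subtracts that user's waveform, then detects the weaker user from the residual.

```python
import numpy as np

def chirp(symbol, n):
    """Discrete-time up-chirp carrying `symbol` (0..n-1), n = 2**SF samples."""
    k = np.arange(n)
    return np.exp(2j * np.pi * (k * k / (2 * n) + symbol * k / n))

def demod(signal, n):
    """Dechirp + FFT: return the strongest symbol and its complex gain."""
    spectrum = np.fft.fft(signal * np.conj(chirp(0, n)))
    idx = int(np.argmax(np.abs(spectrum)))
    return idx, spectrum[idx] / n

def sic_two_users(rx, n):
    """Detect the strong user, cancel it, then detect the weak user."""
    s1, g1 = demod(rx, n)
    residual = rx - g1 * chirp(s1, n)   # subtract the reconstructed strong user
    s2, _ = demod(residual, n)
    return s1, s2

# Two users, same spreading factor (SF = 7), different received powers.
n = 2 ** 7
rng = np.random.default_rng(1)
noise = 0.05 * (rng.standard_normal(n) + 1j * rng.standard_normal(n))
rx = 1.0 * chirp(37, n) + 0.4 * np.exp(1j * 0.7) * chirp(90, n) + noise

decoded = sic_two_users(rx, n)
```

In this idealized setting the two dechirped tones fall on distinct FFT bins, so the cancellation is nearly exact. With timing or frequency offsets between users the tones leak across bins, and the gain estimate and subtraction step would need to account for that.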
