Kyung-Joong Kim

Multiverse: Language-Conditioned Multi-Game Level Blending via Shared Representation

Mar 31, 2026

Abstract:Text-to-level generation aims to translate natural language descriptions into structured game levels, enabling intuitive control over procedural content generation. While prior text-to-level generators are typically limited to a single game domain, extending language-conditioned generation to multiple games requires learning representations that capture structural relationships across domains. We propose Multiverse, a language-conditioned multi-game level generator that enables cross-game level blending through textual specifications. The model learns a shared latent space aligning textual instructions and level structures, while a threshold-based multi-positive contrastive supervision links semantically related levels across games. This representation allows language to guide which structural characteristics should be preserved when combining content from different games, enabling controllable blending through latent interpolation and zero-shot generation from compositional textual prompts. Experiments show that the learned representation supports controllable cross-game level blending and significantly improves blending quality within the same game genre, while providing a unified representation for language-conditioned multi-game content generation.

Shared Representation for 3D Pose Estimation, Action Classification, and Progress Prediction from Tactile Signals

Mar 26, 2026

Abstract:Estimating human pose, classifying actions, and predicting movement progress are essential for human-robot interaction. While vision-based methods suffer from occlusion and privacy concerns in realistic environments, tactile sensing avoids these issues. However, prior tactile-based approaches handle each task separately, leading to suboptimal performance. In this study, we propose a Shared COnvolutional Transformer for Tactile Inference (SCOTTI) that learns a shared representation to simultaneously address three separate prediction tasks: 3D human pose estimation, action class categorization, and action completion progress estimation. To the best of our knowledge, this is the first work to explore action progress prediction using foot tactile signals from custom wireless insole sensors. This unified approach leverages the mutual benefits of multi-task learning, enabling the model to achieve improved performance across all three tasks compared to learning them independently. Experimental results demonstrate that SCOTTI outperforms existing approaches across all three tasks. Additionally, we introduce a novel dataset collected from 15 participants performing various activities and exercises, with 7 hours of total duration, across eight different activities.

FIRE: Frobenius-Isometry Reinitialization for Balancing the Stability-Plasticity Tradeoff

Feb 08, 2026

Abstract:Deep neural networks trained on nonstationary data must balance stability (i.e., retaining prior knowledge) and plasticity (i.e., adapting to new tasks). Standard reinitialization methods, which reinitialize weights toward their original values, are widely used but difficult to tune: conservative reinitializations fail to restore plasticity, while aggressive ones erase useful knowledge. We propose FIRE, a principled reinitialization method that explicitly balances the stability-plasticity tradeoff. FIRE quantifies stability through Squared Frobenius Error (SFE), measuring proximity to past weights, and plasticity through Deviation from Isometry (DfI), reflecting weight isotropy. The reinitialization point is obtained by solving a constrained optimization problem, minimizing SFE subject to DfI being zero, which is efficiently approximated by Newton-Schulz iteration. FIRE is evaluated on continual visual learning (CIFAR-10 with ResNet-18), language modeling (OpenWebText with GPT-0.1B), and reinforcement learning (HumanoidBench with SAC and Atari games with DQN). Across all domains, FIRE consistently outperforms both naive training without intervention and standard reinitialization methods, demonstrating effective balancing of the stability-plasticity tradeoff.
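The constrained problem described for FIRE has a well-known solution if the isometry constraint is read as W^T W = I: the closest such matrix in Frobenius norm is the orthogonal polar factor of the old weights, and the cubic Newton-Schulz update converges to it. The sketch below is not the authors' implementation. It assumes that reading of DfI, a full-rank weight matrix, and no extra scaling factor; the function names `newton_schulz_orthogonalize` and `deviation_from_isometry` are hypothetical.

```python
import numpy as np


def newton_schulz_orthogonalize(w, num_iters=30):
    """Approximate the orthogonal polar factor of w (assumed full rank)."""
    # Divide by the spectral norm so every singular value lies in (0, 1],
    # which is inside the convergence region of the cubic iteration.
    x = w / np.linalg.norm(w, ord=2)
    for _ in range(num_iters):
        # Each singular value s is mapped to 1.5*s - 0.5*s**3, which drives
        # it toward 1 while leaving the singular vectors unchanged.
        x = 1.5 * x - 0.5 * x @ x.T @ x
    return x


def deviation_from_isometry(w):
    """Assumed DfI: squared Frobenius distance of w^T w from the identity."""
    k = w.shape[1]
    return np.linalg.norm(w.T @ w - np.eye(k), ord="fro") ** 2


rng = np.random.default_rng(0)
w_old = rng.standard_normal((6, 4))          # stand-in for trained weights
w_new = newton_schulz_orthogonalize(w_old)   # proposed reinitialization point

# Reference answer from an explicit SVD: polar factor U V^T.
u, _, vt = np.linalg.svd(w_old, full_matrices=False)
polar = u @ vt
```

Under these assumptions `w_new` agrees with `polar` up to numerical tolerance, while using only matrix multiplications, which is the usual reason to prefer Newton-Schulz over an explicit SVD on accelerators.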

Prism: Spectral Parameter Sharing for Multi-Agent Reinforcement Learning

Feb 06, 2026

Abstract:Parameter sharing is a key strategy in multi-agent reinforcement learning (MARL) for improving scalability, yet conventional fully shared architectures often collapse into homogeneous behaviors. Recent methods introduce diversity through clustering, pruning, or masking, but typically compromise resource efficiency. We propose Prism, a parameter sharing framework that induces inter-agent diversity by representing shared networks in the spectral domain via singular value decomposition (SVD). All agents share the singular vector directions while learning distinct spectral masks on singular values. This mechanism encourages inter-agent diversity and preserves scalability. Extensive experiments on both homogeneous (LBF, SMACv2) and heterogeneous (MaMuJoCo) benchmarks show that Prism achieves competitive performance with superior resource efficiency.
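The spectral sharing idea can be illustrated with a single weight matrix: the singular vectors are shared, and each agent only rescales the singular values with its own mask. This is a minimal sketch under assumptions, not Prism's actual parameterization. The masks here are fixed by hand rather than learned, and `agent_weight` is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(0)
shared_w = rng.standard_normal((8, 5))       # stand-in for a shared layer

# Shared across all agents: singular vector directions and singular values.
u, s, vt = np.linalg.svd(shared_w, full_matrices=False)


def agent_weight(mask):
    """Build one agent's weight matrix from its mask over singular values."""
    # u * d scales column j of u by d[j], which equals u @ diag(d).
    return (u * (s * mask)) @ vt


# An all-ones mask recovers the shared matrix exactly.
full = agent_weight(np.ones_like(s))

# A hand-picked mask that switches off the two smallest singular values.
masked = agent_weight(np.array([1.0, 1.0, 1.0, 0.0, 0.0]))

# Per-agent parameters in this sketch: one mask entry per singular value.
per_agent_params = s.size
shared_params = shared_w.size
```

In this toy setting each agent adds 5 mask entries on top of the 40 shared weights, which is the kind of saving the abstract refers to as resource efficiency.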

PREFAB: PREFerence-based Affective Modeling for Low-Budget Self-Annotation

Jan 21, 2026

Abstract:Self-annotation is the gold standard for collecting affective state labels in affective computing. Existing methods typically rely on full annotation, requiring users to continuously label affective states across entire sessions. While this process yields fine-grained data, it is time-consuming, cognitively demanding, and prone to fatigue and errors. To address these issues, we present PREFAB, a low-budget retrospective self-annotation method that targets affective inflection regions rather than full annotation. Grounded in the peak-end rule and ordinal representations of emotion, PREFAB employs a preference-learning model to detect relative affective changes, directing annotators to label only selected segments while interpolating the remainder of the stimulus. We further introduce a preview mechanism that provides brief contextual cues to assist annotation. We evaluate PREFAB through a technical performance study and a 25-participant user study. Results show that PREFAB outperforms baselines in modeling affective inflections while mitigating workload (and conditionally mitigating temporal burden). Importantly, PREFAB improves annotator confidence without degrading annotation quality.

Alternating Approach-Putt Models for Multi-Stage Speech Enhancement

Aug 14, 2025

Abstract:Speech enhancement using artificial neural networks aims to remove noise from noisy speech signals while preserving the speech content. However, speech enhancement networks often introduce distortions to the speech signal, referred to as artifacts, which can degrade audio quality. In this work, we propose a post-processing neural network designed to mitigate artifacts introduced by speech enhancement models. Inspired by the analogy of making a 'Putt' after an 'Approach' in golf, we name our model PuttNet. We demonstrate that alternating between a speech enhancement model and the proposed Putt model leads to improved speech quality, as measured by perceptual quality scores (PESQ), objective intelligibility (STOI), and background noise intrusiveness (CBAK) scores. Furthermore, we illustrate with graphical analysis why this alternating Approach outperforms repeated application of either model alone.

IPCGRL: Language-Instructed Reinforcement Learning for Procedural Level Generation

Mar 16, 2025

Abstract:Recent research has highlighted the significance of natural language in enhancing the controllability of generative models. While various efforts have been made to leverage natural language for content generation, research on deep reinforcement learning (DRL) agents utilizing text-based instructions for procedural content generation remains limited. In this paper, we propose IPCGRL, an instruction-based procedural content generation method via reinforcement learning, which incorporates a sentence embedding model. IPCGRL fine-tunes task-specific embedding representations to effectively compress game-level conditions. We evaluate IPCGRL in a two-dimensional level generation task and compare its performance with a general-purpose embedding method. The results indicate that IPCGRL achieves up to a 21.4% improvement in controllability and a 17.2% improvement in generalizability for unseen instructions. Furthermore, the proposed method extends the modality of conditional input, enabling a more flexible and expressive interaction framework for procedural content generation.

Automatic Curriculum Design for Zero-Shot Human-AI Coordination

Mar 10, 2025

Abstract:Zero-shot human-AI coordination is the training of an ego-agent to coordinate with humans without using human data. Most studies on zero-shot human-AI coordination have focused on enhancing the ego-agent's coordination ability in a given environment without considering the issue of generalization to unseen environments. Real-world applications of zero-shot human-AI coordination should consider unpredictable environmental changes and the varying coordination ability of co-players depending on the environment. Previously, the multi-agent UED (Unsupervised Environment Design) approach has investigated these challenges by jointly considering environmental changes and co-player policy in competitive two-player AI-AI scenarios. In this paper, we extend the multi-agent UED approach to zero-shot human-AI coordination. We propose a utility function and co-player sampling for a zero-shot human-AI coordination setting that helps train the ego-agent to coordinate with humans more effectively than the previous multi-agent UED approach. The zero-shot human-AI coordination performance was evaluated in the Overcooked-AI environment, using human proxy agents and real humans. Our method outperforms other baseline models and achieves high human-AI coordination performance in unseen environments.

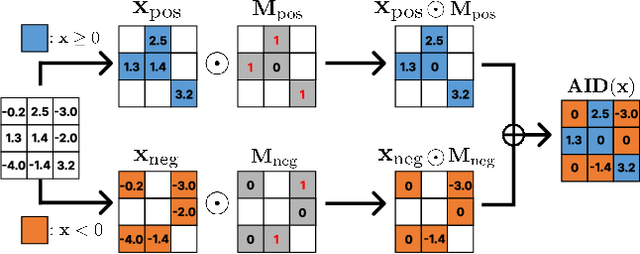

Activation by Interval-wise Dropout: A Simple Way to Prevent Neural Networks from Plasticity Loss

Feb 03, 2025

Abstract:Plasticity loss, a critical challenge in neural network training, limits a model's ability to adapt to new tasks or shifts in data distribution. This paper introduces AID (Activation by Interval-wise Dropout), a novel method inspired by Dropout, designed to address plasticity loss. Unlike Dropout, AID generates subnetworks by applying Dropout with different probabilities on each preactivation interval. Theoretical analysis reveals that AID regularizes the network, promoting behavior analogous to that of deep linear networks, which do not suffer from plasticity loss. We validate the effectiveness of AID in maintaining plasticity across various benchmarks, including continual learning tasks on standard image classification datasets such as CIFAR10, CIFAR100, and TinyImageNet. Furthermore, we show that AID enhances reinforcement learning performance in the Arcade Learning Environment benchmark.
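One way to picture dropout applied per preactivation interval is to split the real line at a few boundaries and give each interval its own keep probability. The sketch below is a hypothetical instantiation, not the AID reference code. The function name `interval_dropout`, the choice of a single boundary at zero, the specific probabilities, and the absence of any rescaling are all assumptions.

```python
import numpy as np


def interval_dropout(x, keep_probs, edges, rng):
    """Keep each preactivation with a probability set by its interval.

    edges are the sorted interior boundaries between intervals, so
    keep_probs must have len(edges) + 1 entries.
    """
    # np.digitize returns, for every entry of x, the index of its interval.
    interval_idx = np.digitize(x, edges)
    p = np.asarray(keep_probs)[interval_idx]
    # Bernoulli mask: an entry survives when its uniform draw falls below p.
    mask = rng.random(x.shape) < p
    return x * mask


rng = np.random.default_rng(0)
x = np.array([-2.0, -0.5, 0.0, 0.5, 2.0])

# Two intervals, (-inf, 0) and [0, inf). Dropping every negative value and
# keeping every non-negative one makes the layer behave exactly like ReLU.
relu_like = interval_dropout(x, keep_probs=[0.0, 1.0], edges=[0.0], rng=rng)

# Keeping everything in both intervals makes the layer linear (identity).
linear_like = interval_dropout(x, keep_probs=[1.0, 1.0], edges=[0.0], rng=rng)

# Intermediate probabilities give a random mix of the two behaviors.
mixed = interval_dropout(x, keep_probs=[0.3, 0.9], edges=[0.0], rng=rng)
```

In expectation the mixed case is piecewise linear, with slope 0.3 for negative inputs and 0.9 for non-negative ones, which gives some intuition for the abstract's link between AID and linear networks.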

ChatPCG: Large Language Model-Driven Reward Design for Procedural Content Generation

Jun 07, 2024

Abstract:Driven by the rapid growth of machine learning, recent advances in game artificial intelligence (AI) have significantly impacted productivity across various gaming genres. Reward design plays a pivotal role in training game AI models, wherein researchers implement concepts of specific reward functions. However, despite the presence of AI, the reward design process predominantly remains in the domain of human experts, as it is heavily reliant on their creativity and engineering skills. Therefore, this paper proposes ChatPCG, a large language model (LLM)-driven reward design framework. It leverages human-level insights, coupled with game expertise, to generate rewards tailored to specific game features automatically. Moreover, ChatPCG is integrated with deep reinforcement learning, demonstrating its potential for multiplayer game content generation tasks. The results suggest that the proposed LLM exhibits the capability to comprehend game mechanics and content generation tasks, enabling tailored content generation for a specified game. This study not only highlights the potential for improving accessibility in content generation but also aims to streamline the game AI development process.
