Kristijonas Cyras

Optimizing Reinforcement Learning Training over Digital Twin Enabled Multi-fidelity Networks

Mar 09, 2026

Abstract:In this paper, we investigate a novel digital network twin (DNT) assisted deep learning (DL) model training framework. In particular, we consider a physical network where a base station (BS) uses several antennas to serve multiple mobile users, and a DNT that is a virtual representation of the physical network. The BS must adjust its antenna tilt angles to optimize the data rates of all users. Due to user mobility, the BS may not be able to accurately track network dynamics such as wireless channels and user mobilities. Hence, a reinforcement learning (RL) approach is used to dynamically adjust the antenna tilt angles. To train the RL, we can use data collected from the physical network and the DNT. The data collected from the physical network is more accurate but incurs more communication overhead compared to the data collected from the DNT. Therefore, it is necessary to determine the ratio of data collected from the physical network and the DNT to improve the training of the RL model. We formulate this problem as an optimization problem whose goal is to jointly optimize the tilt angle adjustment policy and the data collection strategy, aiming to maximize the data rates of all users while constraining the time delay introduced by collecting data from the physical network. To solve this problem, we propose a hierarchical RL framework that integrates robust adversarial loss and proximal policy optimization (PPO). Simulation results show that our proposed method reduces the physical network data collection delay by up to 28.01% and 1x compared to a hierarchical RL that uses vanilla PPO as the first level RL, and the baseline that uses robust-RL at the first level and selects the data collection ratio randomly.

Machine Reasoning Explainability

Sep 01, 2020

Abstract:As a field of AI, Machine Reasoning (MR) uses largely symbolic means to formalize and emulate abstract reasoning. Studies in early MR have notably started inquiries into Explainable AI (XAI) -- arguably one of the biggest concerns today for the AI community. Work on explainable MR as well as on MR approaches to explainability in other areas of AI has continued ever since. It is especially potent in modern MR branches, such as argumentation, constraint and logic programming, and planning. We hereby aim to provide a selective overview of MR explainability techniques and studies in hopes that insights from this long track of research will complement the current XAI landscape well. This document reports our work in progress on MR explainability.

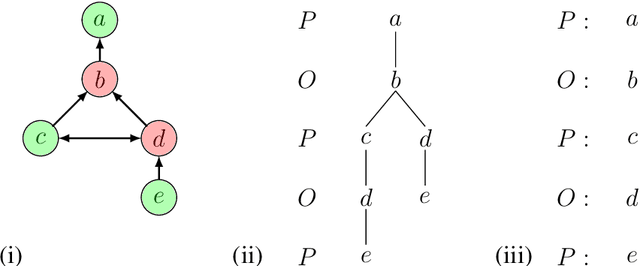

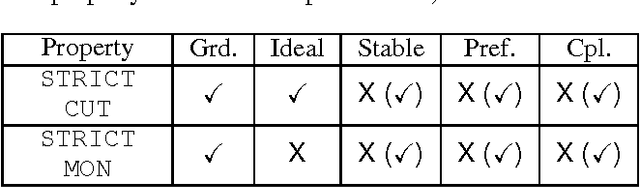

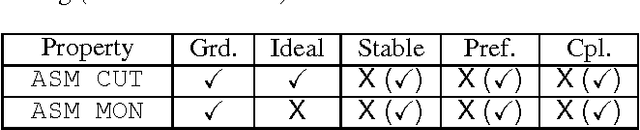

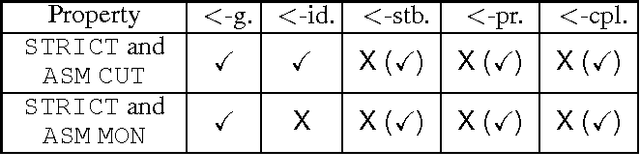

Properties of ABA+ for Non-Monotonic Reasoning

Nov 05, 2017

Abstract:We investigate properties of ABA+, a formalism that extends the well-studied structured argumentation formalism Assumption-Based Argumentation (ABA) with a preference handling mechanism. In particular, we establish desirable properties that ABA+ semantics exhibit. These pave the way to the satisfaction by ABA+ of some (arguably) desirable principles of preference handling in argumentation and non-monotonic reasoning, as well as non-monotonic inference properties of ABA+ under various semantics.
