Khalid Youssef

Improved Robustness for Deep Learning-based Segmentation of Multi-Center Myocardial Perfusion MRI Datasets Using Data Adaptive Uncertainty-guided Space-time Analysis

Aug 09, 2024

Abstract:Background. Fully automatic analysis of myocardial perfusion MRI datasets enables rapid and objective reporting of stress/rest studies in patients with suspected ischemic heart disease. Developing deep learning techniques that can analyze multi-center datasets despite limited training data and variations in software and hardware is an ongoing challenge. Methods. Datasets from 3 medical centers acquired at 3T (n = 150 subjects) were included: an internal dataset (inD; n = 95) and two external datasets (exDs; n = 55) used for evaluating the robustness of the trained deep neural network (DNN) models against differences in pulse sequence (exD-1) and scanner vendor (exD-2). A subset of inD (n = 85) was used for training/validation of a pool of DNNs for segmentation, all using the same spatiotemporal U-Net architecture and hyperparameters but with different parameter initializations. We employed a space-time sliding-patch analysis approach that automatically yields a pixel-wise "uncertainty map" as a byproduct of the segmentation process. In our approach, a given test case is segmented by all members of the DNN pool and the resulting uncertainty maps are leveraged to automatically select the "best" one among the pool of solutions. Results. The proposed DAUGS analysis approach performed similarly to the established approach on the internal dataset (p = n.s.) whereas it significantly outperformed on the external datasets (p < 0.005 for exD-1 and exD-2). Moreover, the number of image series with "failed" segmentation was significantly lower for the proposed vs. the established approach (4.3% vs. 17.1%, p < 0.0005). Conclusions. The proposed DAUGS analysis approach has the potential to improve the robustness of deep learning methods for segmentation of multi-center stress perfusion datasets with variations in the choice of pulse sequence, site location or scanner vendor.

Temporal Uncertainty Localization to Enable Human-in-the-loop Analysis of Dynamic Contrast-enhanced Cardiac MRI Datasets

Aug 25, 2023Abstract:Dynamic contrast-enhanced (DCE) cardiac magnetic resonance imaging (CMRI) is a widely used modality for diagnosing myocardial blood flow (perfusion) abnormalities. During a typical free-breathing DCE-CMRI scan, close to 300 time-resolved images of myocardial perfusion are acquired at various contrast "wash in/out" phases. Manual segmentation of myocardial contours in each time-frame of a DCE image series can be tedious and time-consuming, particularly when non-rigid motion correction has failed or is unavailable. While deep neural networks (DNNs) have shown promise for analyzing DCE-CMRI datasets, a "dynamic quality control" (dQC) technique for reliably detecting failed segmentations is lacking. Here we propose a new space-time uncertainty metric as a dQC tool for DNN-based segmentation of free-breathing DCE-CMRI datasets by validating the proposed metric on an external dataset and establishing a human-in-the-loop framework to improve the segmentation results. In the proposed approach, we referred the top 10% most uncertain segmentations as detected by our dQC tool to the human expert for refinement. This approach resulted in a significant increase in the Dice score (p<0.001) and a notable decrease in the number of images with failed segmentation (16.2% to 11.3%) whereas the alternative approach of randomly selecting the same number of segmentations for human referral did not achieve any significant improvement. Our results suggest that the proposed dQC framework has the potential to accurately identify poor-quality segmentations and may enable efficient DNN-based analysis of DCE-CMRI in a human-in-the-loop pipeline for clinical interpretation and reporting of dynamic CMRI datasets.

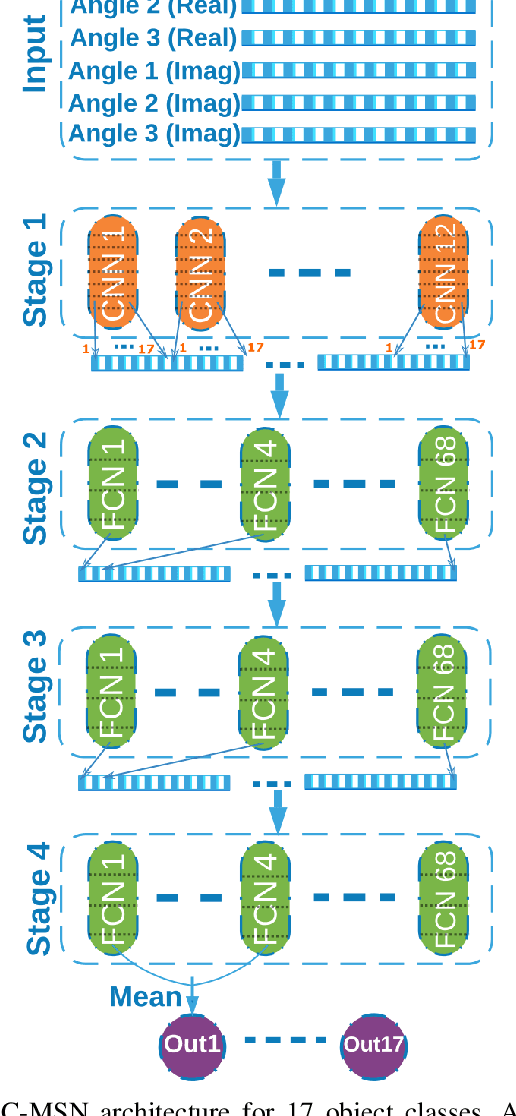

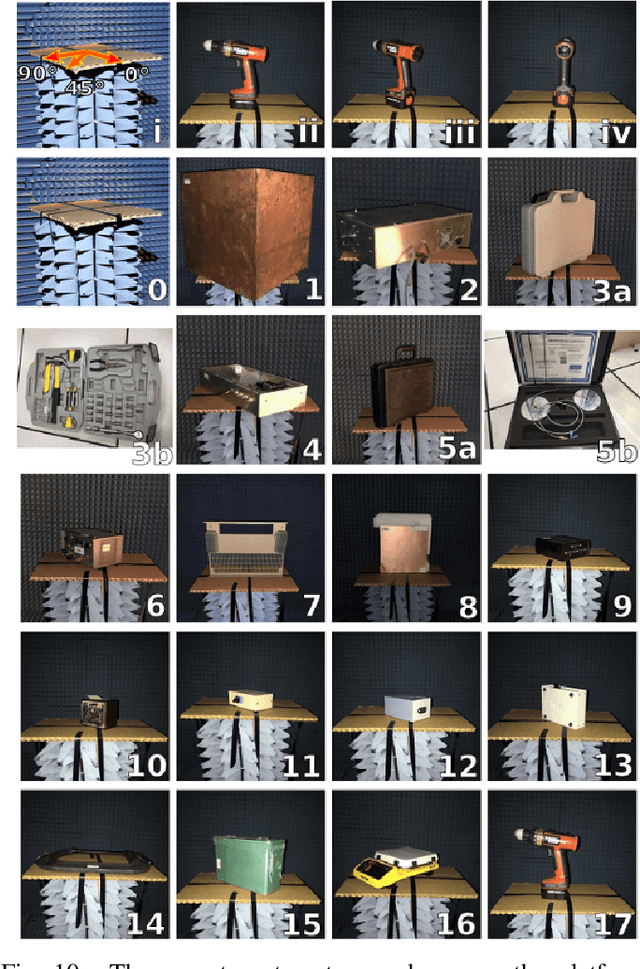

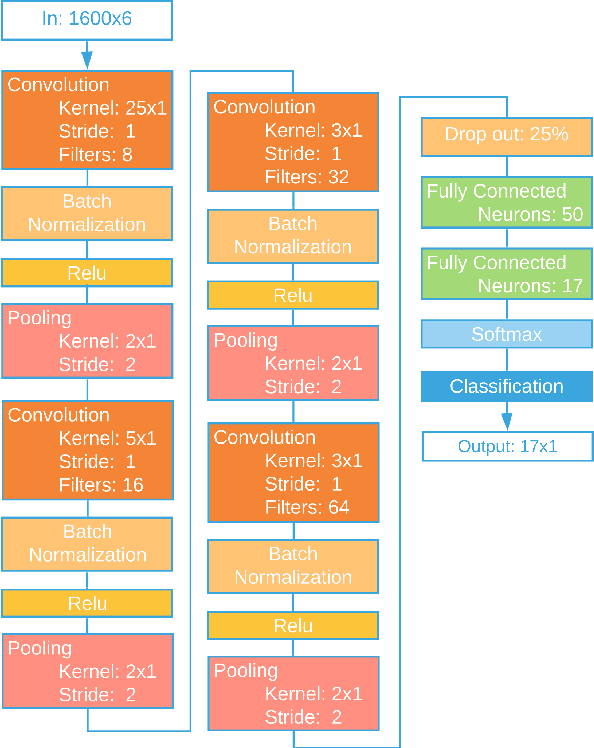

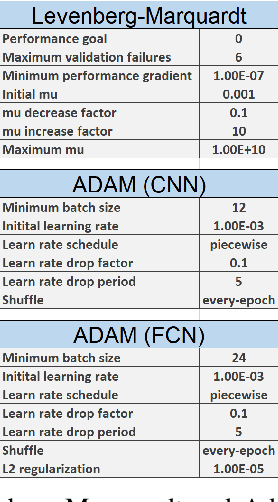

Scalable End-to-End RF Classification: A Case Study on Undersized Dataset Regularization by Convolutional-MST

May 13, 2021

Abstract:Unlike areas such as computer vision and speech recognition where convolutional and recurrent neural networks-based approaches have proven effective to the nature of the respective areas of application, deep learning (DL) still lacks a general approach suitable for the unique nature and challenges of RF systems such as radar, signals intelligence, electronic warfare, and communications. Existing approaches face problems in robustness, consistency, efficiency, repeatability and scalability. One of the main challenges in RF sensing such as radar target identification is the difficulty and cost of obtaining data. Hundreds to thousands of samples per class are typically used when training for classifying signals into 2 to 12 classes with reported accuracy ranging from 87% to 99%, where accuracy generally decreases with more classes added. In this paper, we present a new DL approach based on multistage training and demonstrate it on RF sensing signal classification. We consistently achieve over 99% accuracy for up to 17 diverse classes using only 11 samples per class for training, yielding up to 35% improvement in accuracy over standard DL approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge