Kensuke Harada

Osaka University, AIST

A Closed-Loop Bin Picking System for Entangled Wire Harnesses using Bimanual and Dynamic Manipulation

Jun 26, 2023

Abstract: This paper addresses the challenge of industrial bin picking of entangled wire harnesses. Wire harnesses are essential in manufacturing but pose challenges for automation due to their complex geometries and propensity for entanglement. Our previous work tackled this issue by proposing a quasi-static pulling motion to separate the entangled wire harnesses. However, that approach is still insufficient and does not generalize to various shapes and structures. In this paper, we deploy a dual-arm robot that can grasp, extract, and disentangle wire harnesses from dense clutter using dynamic manipulation. The robot can swing to dynamically discard the entangled objects and regrasp to adjust an undesirable grasp pose. To improve the robustness and accuracy of the system, we leverage a closed-loop framework that uses haptic feedback to detect entanglement in real time and flexibly adjust system parameters. Our bin picking system achieves an overall success rate of 91.2% in real-world experiments using two different types of long wire harnesses, demonstrating its effectiveness in handling various wire harnesses for industrial bin picking.

Probabilistic Slide-support Manipulation Planning in Clutter

Jun 22, 2023

Abstract: To safely and efficiently extract an object from the clutter, this paper presents a bimanual manipulation planner in which one hand of the robot is used to slide the target object out of the clutter while the other hand is used to support the surrounding objects to prevent the clutter from collapsing. Our method uses a neural network to predict the physical phenomena of the clutter when the target object is moved. We generate the most efficient action based on the Monte Carlo tree search. The grasping and sliding actions are planned to minimize the number of motion sequences to pick the target object. In addition, the object to be supported is determined to minimize the position change of surrounding objects. Experiments with a real bimanual robot confirmed that the robot could retrieve the target object, reducing the total number of motion sequences and improving safety.

Implicit Contact-Rich Manipulation Planning for a Manipulator with Insufficient Payload

Feb 26, 2023

Abstract: This paper uses a mobile manipulator with a collaborative robotic arm to manipulate objects beyond the robot's maximum payload. It proposes a single-shot probabilistic roadmap-based method to plan and optimize manipulation motion with environment support. The method uses an expanded object mesh model to examine contact and randomly explores object motion while keeping contact and securing affordable grasping force. It generates robotic motion trajectories after obtaining object motion using an optimization-based algorithm. With the proposed method, we can plan contact-rich manipulation without explicitly analyzing an object's contact modes and their transitions. The planner and optimizer determine them automatically. We conducted experiments and analyses using simulations and real-world executions to examine the method's performance. It can successfully find manipulation motion that meets contact, force, and kinematic constraints, thus allowing a mobile manipulator to move heavy objects while leveraging supporting forces from environmental obstacles. The method does not need to explicitly analyze contact states and build contact transition graphs, thus providing a new view for robotic grasp-less manipulation, non-prehensile manipulation, manipulation with contact, etc.

Learning to Dexterously Pick or Separate Tangled-Prone Objects for Industrial Bin Picking

Feb 16, 2023

Abstract: Industrial bin picking for tangled-prone objects requires the robot to either pick up untangled objects or perform separation manipulation when the bin contains no isolated objects. The robot must be able to flexibly perform appropriate actions based on the current observation. This is challenging due to high occlusion in the clutter, elusive entanglement phenomena, and the need for skilled manipulation planning. In this paper, we propose an autonomous, effective, and general approach for picking up tangled-prone objects for industrial bin picking. First, we learn PickNet, a network that maps the visual observation to pixel-wise possibilities of picking isolated objects or separating tangled objects and infers the corresponding grasp. Then, we propose two effective separation strategies: (1) dropping the entangled objects into a buffer bin to reduce the degree of entanglement; and (2) pulling to separate the entangled objects in the buffer bin, planned by PullNet, a network that predicts position and direction for pulling from visual input. To efficiently collect data for training PickNet and PullNet, we embrace the self-supervised learning paradigm using an algorithmic supervisor in a physics simulator. Real-world experiments show that our policy can dexterously pick up tangled-prone objects with success rates of 90%. We further demonstrate the generalization of our policy by picking a set of unseen objects. Supplementary material, code, and videos can be found at https://xinyiz0931.github.io/tangle.

Automatically Prepare Training Data for YOLO Using Robotic In-Hand Observation and Synthesis

Jan 04, 2023

Abstract: Deep learning methods have recently exhibited impressive performance in object detection. However, such methods need much training data to achieve high recognition accuracy, which is time-consuming to prepare and requires considerable manual work such as labeling images. In this paper, we automatically prepare training data using robots. Considering the low efficiency and high energy consumption of robot motion, we propose combining robotic in-hand observation and data synthesis to enlarge the limited data set collected by the robot. We first used a robot with a depth sensor to collect images of objects held in the robot's hands and segment the object pictures. Then, we used a copy-paste method to synthesize the segmented objects with rack backgrounds. The collected and synthetic images are combined to train a deep detection neural network. We conducted experiments to compare YOLOv5x detectors trained with images collected using the proposed method and several other methods. The results showed that combining observation and synthetic images led to performance comparable to manual data preparation. They also provided a good guide for optimizing data configurations and parameter settings for training detectors. The proposed method required only a single process and was a low-cost way to produce the combined data. Interested readers may find the data sets and trained models in the following GitHub repository: github.com/wrslab/tubedet
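The copy-paste synthesis step mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function name `paste_object`, its parameters, and the choice to emit a normalized `(class_id, cx, cy, w, h)` label are assumptions made here for illustration, using only NumPy.

```python
import numpy as np

def paste_object(background, obj_rgb, obj_mask, top, left, class_id=0):
    """Paste a segmented object crop onto a copy of a background image.

    Hypothetical helper: returns the synthesized image and a label
    (class_id, cx, cy, w, h) with coordinates normalized to [0, 1].
    """
    H, W = background.shape[:2]
    h, w = obj_mask.shape
    if top < 0 or left < 0 or top + h > H or left + w > W:
        raise ValueError("object crop must fit inside the background")
    if not obj_mask.any():
        raise ValueError("object mask is empty")

    out = background.copy()
    region = out[top:top + h, left:left + w]   # view into the output image
    region[obj_mask] = obj_rgb[obj_mask]       # copy only the object pixels

    # Tight bounding box around the pasted mask, in background coordinates.
    ys, xs = np.nonzero(obj_mask)
    y0, y1 = ys.min() + top, ys.max() + top + 1
    x0, x1 = xs.min() + left, xs.max() + left + 1
    label = (class_id,
             (x0 + x1) / 2 / W, (y0 + y1) / 2 / H,
             (x1 - x0) / W, (y1 - y0) / H)
    return out, label
```

Because the label is derived from the mask, each synthetic image comes with its annotation for free, which is the property that makes this kind of synthesis attractive when manual labeling is the bottleneck.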

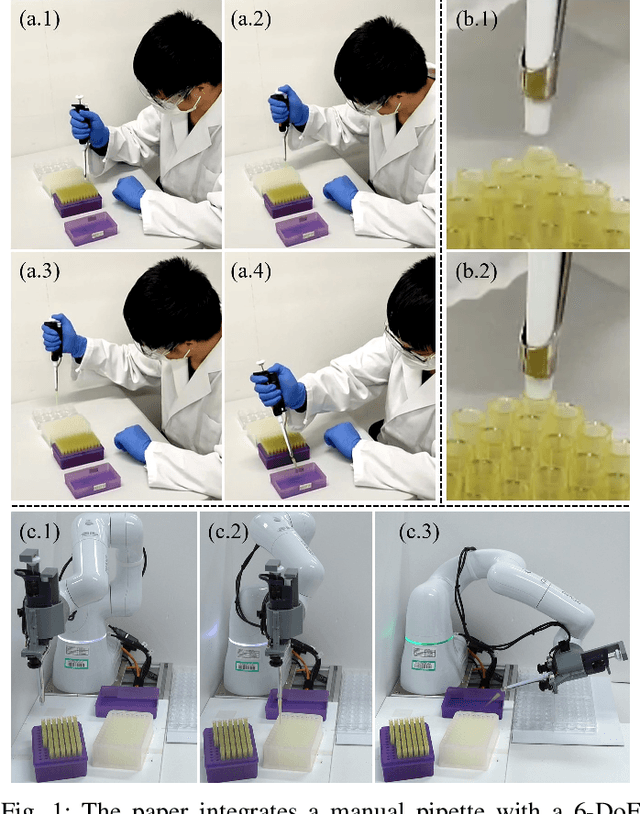

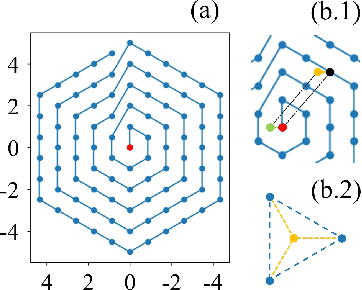

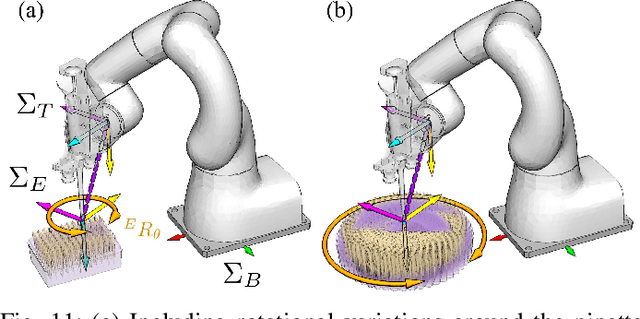

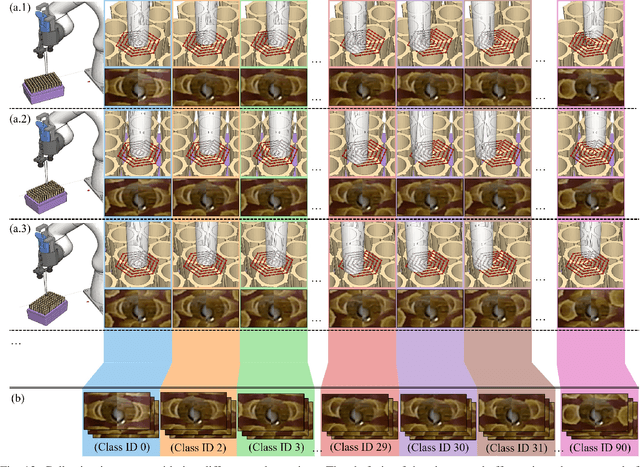

Integrating a Manual Pipette into a Collaborative Robot Manipulator for Flexible Liquid Dispensing

Jul 04, 2022

Abstract: This paper presents a system integration approach for a 6-DoF (Degree of Freedom) collaborative robot to operate a pipette for liquid dispensing. Its technical development is threefold. First, we designed an end-effector for holding and triggering manual pipettes. Second, we took advantage of a collaborative robot to recognize labware poses and planned robotic motion based on the recognized poses. Third, we developed vision-based classifiers to predict and correct positioning errors and thus precisely attached pipettes to disposable tips. Through experiments and analysis, we confirmed that the developed system, especially the planning and visual recognition methods, could help secure high-precision and flexible liquid dispensing. The developed system is suitable for low-frequency, high-repetition biochemical liquid dispensing tasks. We expect it to promote the deployment of collaborative robots for laboratory automation and thus improve the experimental efficiency without significantly customizing a laboratory environment.

Accelerating Robot Learning of Contact-Rich Manipulations: A Curriculum Learning Study

Apr 28, 2022

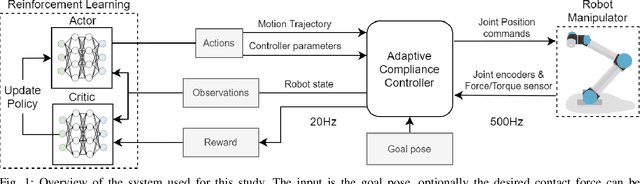

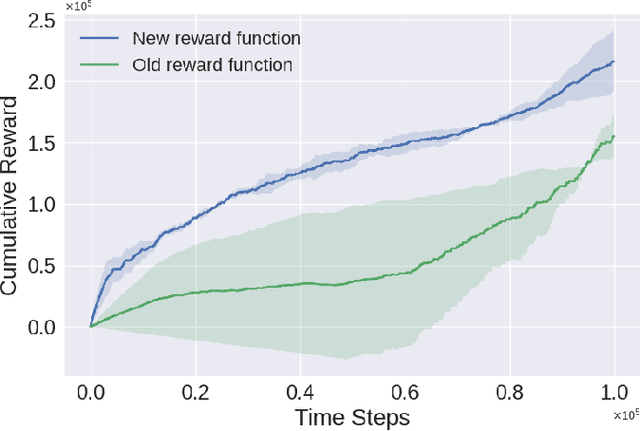

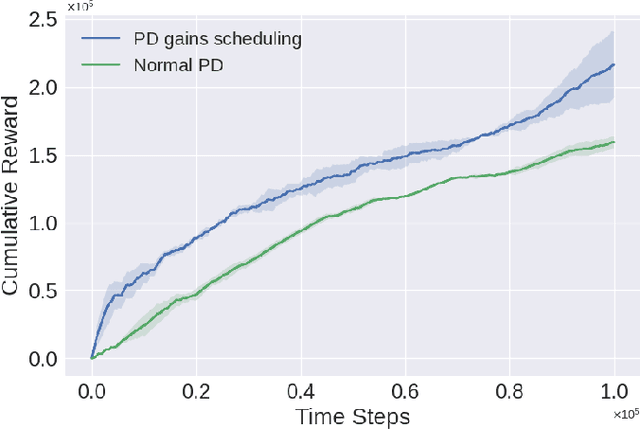

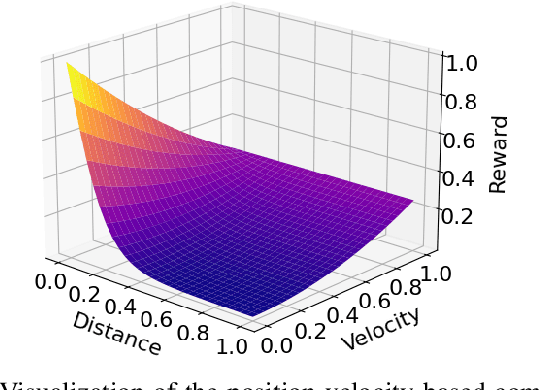

Abstract: The Reinforcement Learning (RL) paradigm has been an essential tool for automating robotic tasks. Despite the advances in RL, it is still not widely adopted in industry because it requires a large and expensive amount of robot interaction with the environment. Curriculum Learning (CL) has been proposed to expedite learning. However, most research works have been evaluated only in simulated environments, from video games to robotic toy tasks. This paper presents a study for accelerating robot learning of contact-rich manipulation tasks based on Curriculum Learning combined with Domain Randomization (DR). We tackle complex industrial assembly tasks with position-controlled robots, such as insertion tasks. We compare different curricula designs and sampling approaches for DR. Based on this study, we propose a method that significantly outperforms previous work, which uses DR only (no CL), with less than a fifth of the training time (samples). Results also show that even when training only in simulation with toy tasks, our method can learn policies that can be transferred to the real-world robot. The learned policies achieved success rates of up to 86% on real-world complex industrial insertion tasks (with tolerances of ±0.01 mm) not seen during training.
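One simple way to combine a curriculum with domain randomization is to let a difficulty level control how wide the randomization range is. The sketch below is only an illustration of that general idea, not the curricula or sampling approaches compared in the paper; the function names, the success threshold, and the step size are all assumptions.

```python
import random

def update_difficulty(difficulty, recent_success_rate, threshold=0.8, step=0.1):
    """Raise the difficulty (in [0, 1]) once the policy succeeds often enough.

    Hypothetical rule: threshold and step are illustrative values.
    """
    if recent_success_rate >= threshold:
        return min(1.0, difficulty + step)
    return difficulty

def sample_randomized_param(nominal, max_deviation, difficulty, rng):
    """Sample an environment parameter uniformly around its nominal value.

    The range widens linearly with difficulty: at 0 the nominal value is
    always returned, at 1 the full +/- max_deviation range is used.
    """
    half_width = max_deviation * difficulty
    return rng.uniform(nominal - half_width, nominal + half_width)
```

In a training loop, `update_difficulty` would be called after each evaluation window and `sample_randomized_param` at every environment reset, so early episodes see near-nominal conditions and later ones see the full randomized range.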

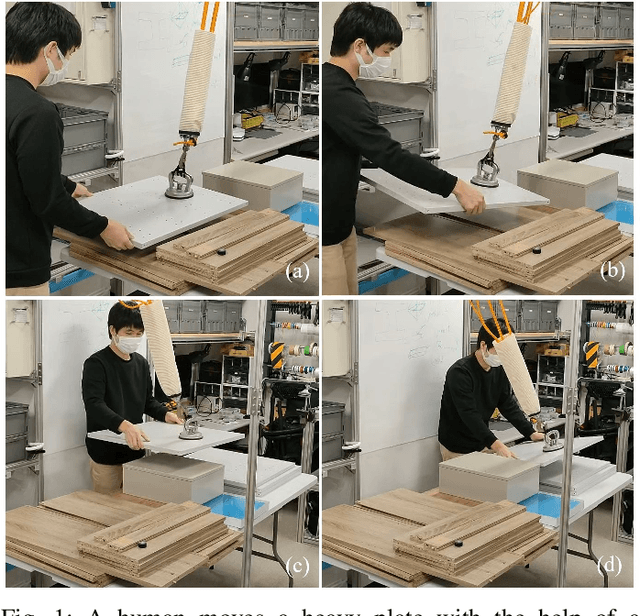

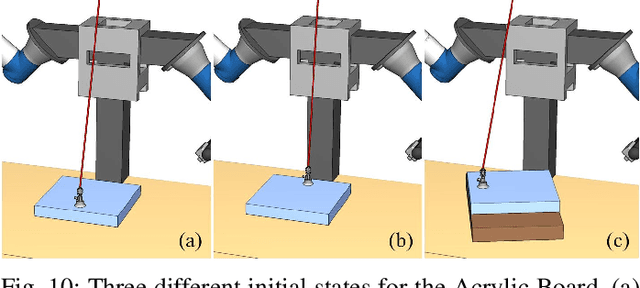

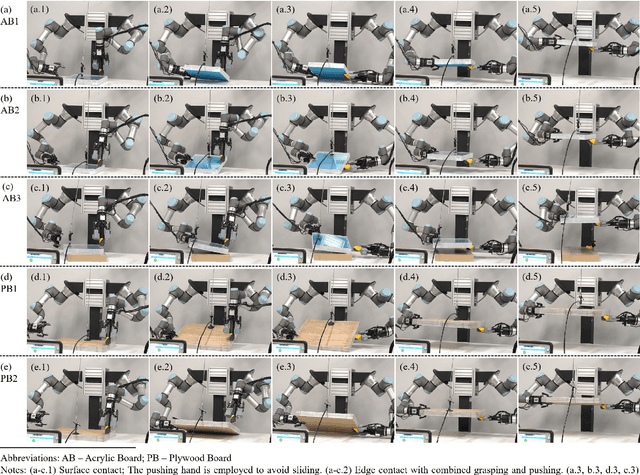

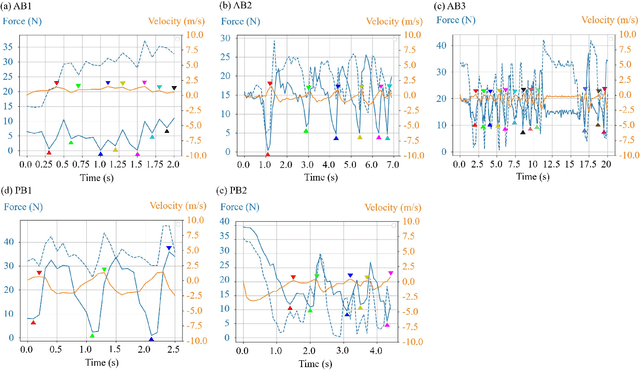

A Dual-Arm Robot that Manipulates Heavy Plates Cooperatively with a Vacuum Lifter

Mar 20, 2022

Abstract: A vacuum lifter is widely used to hold and pick up large, heavy, and flat objects. Conventionally, when using a vacuum lifter, a human worker watches the state of a running vacuum lifter and adjusts the object's pose to maintain balance. In this work, we propose using a dual-arm robot to replace the human worker and develop planning and control methods for a dual-arm robot to raise a heavy plate with the help of a vacuum lifter. The methods help the robot determine its actions by considering the vacuum lifter's suction position and suction force limits. The essence of the methods is two-fold. First, we build a Manipulation State Graph (MSG) to store the weighted logical relations of various plate contact states and robot/vacuum lifter configurations, and search the graph to plan efficient and low-cost robot manipulation sequences. Second, we develop a velocity-based impedance controller to coordinate the robot and the vacuum lifter when lifting an object. With its help, a robot can follow the vacuum lifter's motion and realize compliant robot-vacuum lifter collaboration. The proposed planning and control methods are investigated using real-world experiments. The results show that, with the support of these methods, a robot can effectively and flexibly work together with a vacuum lifter to manipulate large and heavy plate-like objects.

Metal Wire Manipulation Planning for 3D Curving -- How a Low Payload Robot Can Use a Bending Machine to Bend Stiff Metal Wire

Mar 08, 2022

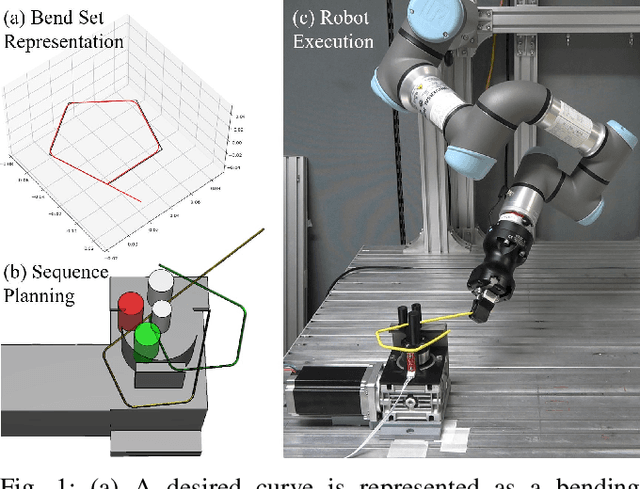

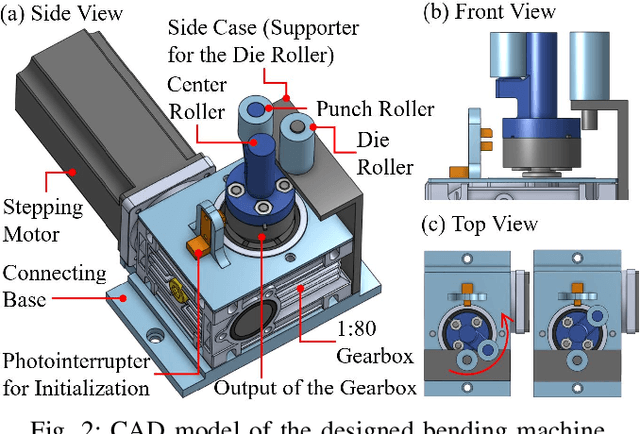

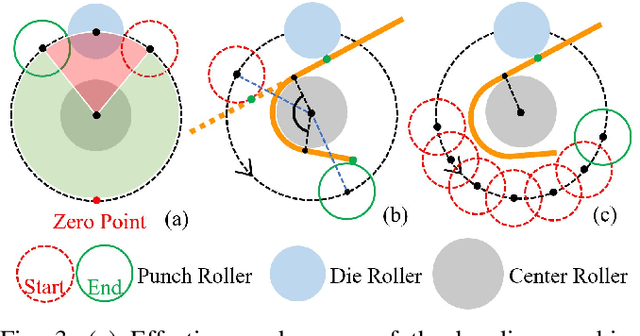

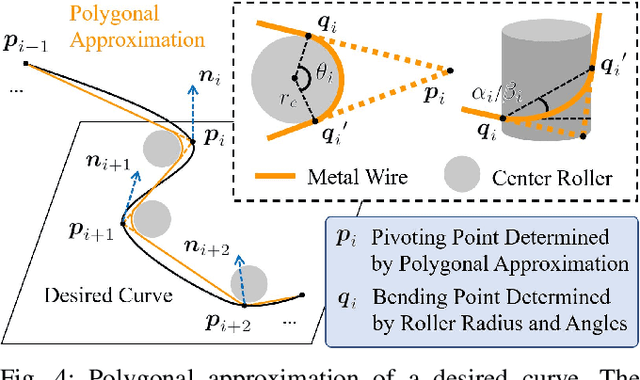

Abstract: This paper presents a combined task and motion planner for a robot arm to carry out 3D metal wire curving tasks by collaborating with a bending machine. We assume a collaborative robot that is safe to work in a human environment but has too low a payload to bend objects with large stiffness, and develop a combined planner for the robot to use a bending machine. Our method converts a 3D curve to a bending set and generates the feasible bending sequence, machine usage, robotic grasp poses, and pick-and-place arm motion considering the combined task and motion level constraints. Compared with previous deformable linear object shaping work that relied on forces provided by robotic arms, the proposed method is suitable for materials with high stiffness. We evaluate the system using different tasks. The results show that the proposed system is flexible and robust in generating robotic motion to cooperate with the designed bending machine.

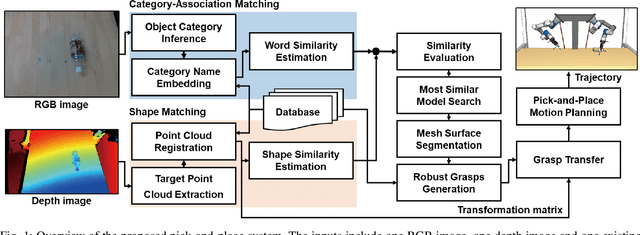

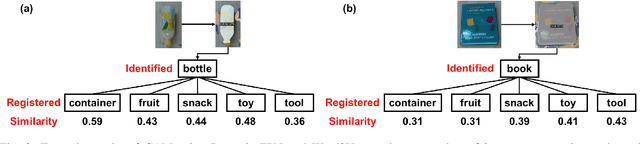

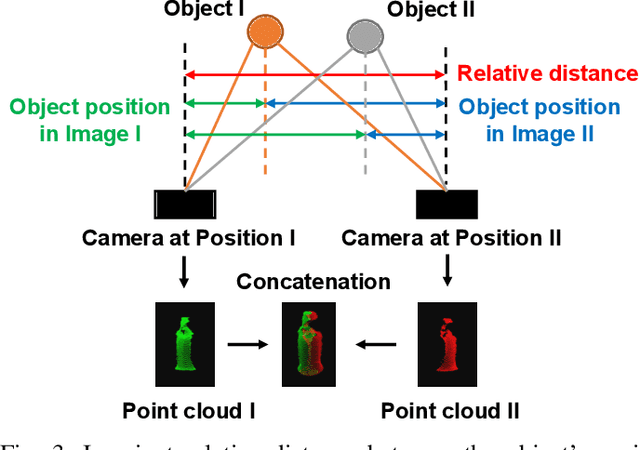

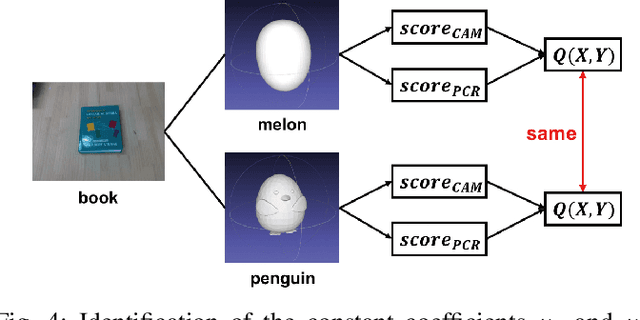

Category-Association Based Similarity Matching for Novel Object Pick-and-Place Task

Jan 27, 2022

Abstract: Robotic pick-and-place has been researched for a long time to cope with the uncertainty of novel objects and changeable environments. Past works mainly focus on learning-based methods to achieve high precision. However, they are difficult to generalize because they are limited to the specific models used in training. To overcome this drawback of learning-based approaches, we introduce a new perspective of similarity matching between novel objects and a known database based on category association to achieve pick-and-place tasks with high accuracy and stability. We calculate the category name similarity using word embedding to quantify the semantic similarity between the categories of known models and the target real-world objects. With a similar model identified by a similarity prediction function, we preplan a series of robust grasps and imitate them to plan new grasps on the real-world target object. We also propose a distance-based method to infer the in-hand posture of objects and adjust small rotations to achieve stable placements under uncertainty. In a real-world robotic pick-and-place experiment with a dozen in-category and out-of-category novel objects, our method achieved average success rates of 90.6% and 75.9%, respectively, validating its capacity to generalize to diverse objects.
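Category name similarity from word embeddings is commonly measured with cosine similarity. The sketch below assumes that metric (the abstract does not state which one is used) and uses made-up 3-dimensional toy vectors in place of real word embeddings; the function names are hypothetical.

```python
import numpy as np

def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def most_similar_category(target_vec, known):
    """Return (name, score) of the known category closest to the target."""
    scores = {name: cosine_similarity(target_vec, vec)
              for name, vec in known.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]

# Toy vectors for illustration only; real embeddings would come from a
# pretrained word-embedding model and have hundreds of dimensions.
known_categories = {
    "mug": [0.9, 0.1, 0.0],
    "bottle": [0.2, 0.9, 0.1],
}
novel_cup = [0.8, 0.2, 0.1]
best_name, best_score = most_similar_category(novel_cup, known_categories)
```

With these toy vectors the novel "cup" is matched to "mug", so grasps preplanned on the known mug model would be the ones transferred to the new object.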
