Kasper Støy

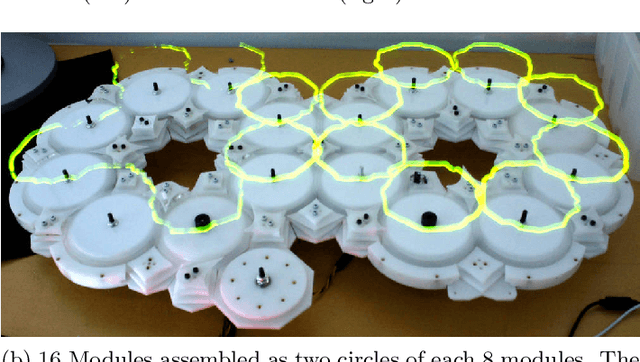

Multi-Modular MANTA-RAY: A Modular Soft Surface Platform for Distributed Multi-Object Manipulation

Jan 29, 2026

Abstract: Manipulation surfaces control objects by actively deforming their shape rather than directly grasping them. While dense actuator arrays can generate complex deformations, they also introduce high degrees of freedom (DOF), increasing system complexity and limiting scalability. The MANTA-RAY (Manipulation with Adaptive Non-rigid Textile Actuation with Reduced Actuation densitY) platform addresses these challenges by leveraging a soft, fabric-based surface with reduced actuator density to manipulate fragile and heterogeneous objects. Previous studies focused on single-module implementations supported by four actuators, whereas the feasibility and benefits of a scalable, multi-module configuration remain unexplored. In this work, we present a distributed, modular, and scalable variant of the MANTA-RAY platform that maintains manipulation performance with a reduced actuator density. The proposed multi-module MANTA-RAY platform and control strategy employ object passing between modules and a geometric-transformation-driven PID controller that directly maps tilt-angle control outputs to actuator commands, eliminating the need for extensive data-driven or black-box training. We evaluate system performance in simulation across surface configurations with varying numbers of modules (3x3 and 4x4) and validate its feasibility through experiments on a physical 2x2 hardware prototype. The system successfully manipulates objects with diverse geometries, masses, and textures, including fragile items such as eggs and apples, and supports parallel manipulation. The results demonstrate that the multi-module MANTA-RAY improves scalability and enables coordinated manipulation of multiple objects across larger areas, highlighting its potential for practical, real-world applications.

Robots can defuse high-intensity conflict situations

Aug 07, 2025

Abstract: This paper investigates the specific scenario of high-intensity confrontations between humans and robots, to understand how robots can defuse the conflict. It focuses on the effectiveness of using five different affective expression modalities as main drivers for defusing the conflict. The aim is to discover any strengths or weaknesses in using each modality to mitigate the hostility that people feel towards a poorly performing robot. The defusing of the situation is accomplished by making the robot better at acknowledging the conflict and by letting it express remorse. To facilitate the tests, we used a custom affective robot in a simulated conflict situation with 105 test participants. The results show that all tested expression modalities can successfully be used to defuse the situation and convey an acknowledgment of the confrontation. The ratings were remarkably similar, but the movement modality was different (ANON p < .05) from the other modalities. The test participants also had similar affective interpretations of how impacted the robot was by the confrontation across all expression modalities. This indicates that defusing a high-intensity interaction may not demand special attention to the expression abilities of the robot, but rather require attention to the abilities of being socially aware of the situation and reacting in accordance with it.

Affecta-Context: The Context-Guided Behavior Adaptation Framework

Aug 07, 2025

Abstract: This paper presents Affecta-context, a general framework to facilitate behavior adaptation for social robots. The framework uses information about the physical context to guide its behaviors in human-robot interactions. It consists of two parts: one that represents encountered contexts and one that learns to prioritize between behaviors through human-robot interactions. As physical contexts are encountered, the framework clusters them by their measured physical properties. In each context, the framework learns to prioritize between behaviors to optimize the physical attributes of the robot's behavior in line with its current environment and the preferences of the users it interacts with. This paper illustrates the abilities of the Affecta-context framework by enabling a robot to autonomously learn the prioritization of discrete behaviors. This was achieved by training across 72 interactions in two different physical contexts with 6 different human test participants. The paper demonstrates the trained Affecta-context framework by verifying the robot's ability to generalize over the input and to match its behaviors to a previously unvisited physical context.

On the causality between affective impact and coordinated human-robot reactions

Aug 06, 2025

Abstract: In an effort to improve how robots function in social contexts, this paper investigates whether a robot that actively shares a reaction to an event with a human alters how the human perceives the robot's affective impact. To verify this, we created two different test setups: one to highlight and isolate the reaction element of affective robot expressions, and one to investigate the effects of applying specific timing delays to a robot reacting to a physical encounter with a human. The first test was conducted with two different groups (n=84) of human observers, a test group and a control group, both interacting with the robot. The second test was performed with 110 participants, using increasingly longer reaction delays for the robot with every ten participants. The results show a statistically significant change (p < .05) in perceived affective impact for the robots when they react to an event shared with a human observer rather than reacting at random. The results also show that, for shared physical interaction, near-human reaction times from the robot are most appropriate for the scenario. The paper concludes that a delay time of around 200 ms may have the biggest impact on human observers for small-sized non-humanoid robots. It further concludes that a slightly shorter reaction time of around 100 ms is most effective when the goal is to make the human observers feel they made the biggest impact on the robot.

Toward Anxiety-Reducing Pocket Robots for Children

Mar 31, 2025

Abstract: A common denominator for most therapy treatments for children who suffer from an anxiety disorder is daily practice routines to learn techniques needed to overcome anxiety. However, applying those techniques while experiencing anxiety can be highly challenging. This paper presents the design, implementation, and pilot study of a tactile hand-held pocket robot, AffectaPocket, designed to work alongside therapy as a focus object to facilitate coping during an anxiety attack. The robot does not require daily practice to be used, has a small form factor, and has been designed for children 7 to 12 years old. The pocket robot works by sensing when it is being held and attempts to shift the child's focus by presenting them with a simple three-note rhythm-matching game. We conducted a pilot study of the pocket robot involving four children aged 7 to 10 years, and then a main study with 18 children aged 6 to 8 years; neither study involved children with anxiety. Both studies aimed to assess the reliability of the robot's sensor configuration, its design, and the effectiveness of the user tutorial. The results indicate that the morphology and sensor setup performed adequately and the tutorial process enabled the children to use the robot with little practice. This work demonstrates that the presented pocket robot could represent a step toward developing low-cost, accessible technologies to help children suffering from anxiety disorders.

* 8 pages

Soft Manipulation Surface With Reduced Actuator Density For Heterogeneous Object Manipulation

Nov 21, 2024

Abstract: Object manipulation in robotics faces challenges due to diverse object shapes, sizes, and fragility. Gripper-based methods offer precision and low degrees of freedom (DOF), but the gripper limits the kinds of objects that can be grasped. On the other hand, surface-based approaches provide flexibility for handling fragile and heterogeneous objects but require numerous actuators, increasing complexity. We propose new manipulation hardware that utilizes equally spaced linear actuators placed vertically and connected by a soft surface. In this setup, object manipulation occurs on the soft surface through coordinated movements of the surrounding actuators. This approach requires fewer actuators to cover a large manipulation area, offering a cost-effective solution with a lower DOF compared to dense actuator arrays. It also effectively handles heterogeneous objects of varying shapes and weights, even when they are significantly smaller than the distance between actuators. This method is particularly suitable for managing highly fragile objects in the food industry.

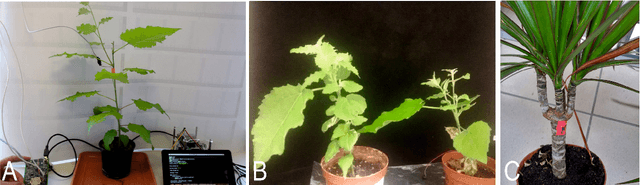

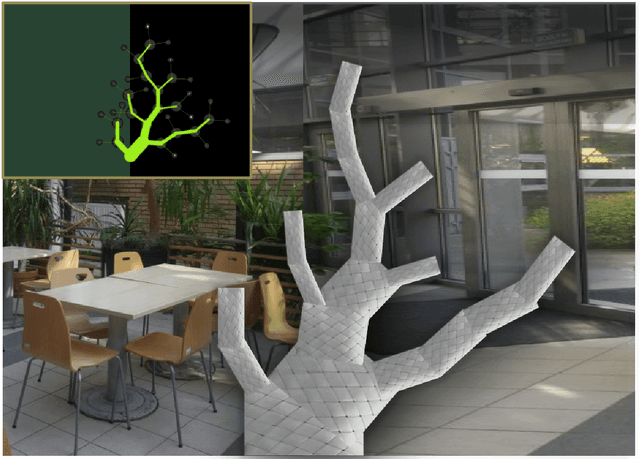

Flora robotica -- An Architectural System Combining Living Natural Plants and Distributed Robots

Sep 13, 2017

Abstract: Key to our project flora robotica is the idea of creating a bio-hybrid system of tightly coupled natural plants and distributed robots to grow architectural artifacts and spaces. Our motivation with this ground research project is to lay a principled foundation towards the design and implementation of living architectural systems that provide functionalities beyond those of orthodox building practice, such as self-repair, material accumulation and self-organization. Plants and robots work together to create a living organism that is inhabited by human beings. User-defined design objectives help to steer the directional growth of the plants, but also the system's interactions with its inhabitants determine locations where growth is prohibited or desired (e.g., partitions, windows, occupiable space). We report our plant species selection process and aspects of living architecture. A leitmotif of our project is the rich concept of braiding: braids are produced by robots from continuous material and serve as both scaffolds and initial architectural artifacts before plants take over and grow the desired architecture. We use light and hormones as attraction stimuli and far-red light as a repelling stimulus to influence the plants. Applied sensors range from simple proximity sensing to detect the presence of plants to sophisticated sensing technology, such as electrophysiology and measurements of sap flow. We conclude by discussing our anticipated final demonstrator that integrates key features of flora robotica, such as the continuous growth process of architectural artifacts and self-repair of living architecture.
