Kai Pan

Agent-First Tool API: A Semantic Interface Paradigm for Enterprise AI Agent Systems

May 11, 2026

Abstract: As AI agents transition from research prototypes to enterprise production systems, the tool interfaces they consume remain rooted in human-oriented CRUD paradigms. This paper identifies five fundamental architectural mismatches between conventional APIs and autonomous agent requirements: exact-identifier dependence, rendering-oriented responses, single-shot interaction assumptions, user-equivalent authorization, and opaque error semantics. We propose the Agent-First Tool API paradigm, comprising three integrated mechanisms: (1) a Six-Verb Semantic Protocol that decomposes tool interactions into search, resolve, preview, execute, verify, and recover phases; (2) a Normalized Tool Contract (NTC) providing structured decision-support metadata including confidence scores, evidence chains, and suggested next actions; and (3) a dual-layer governance pipeline combining static capability policies with dynamic risk escalation. The paradigm is implemented and validated in a production multi-tenant SaaS platform serving 85 registered tools across 6 business domains. Comparative experiments on 50 real operational tasks demonstrate that Agent-First APIs achieve an 88% end-to-end task success rate versus 64% for optimized CRUD baselines (a 37.5% relative improvement), while reducing required human interventions by 72.7% and improving autonomous error recovery by 5.8x. We establish that the paradigm is orthogonal and complementary to transport-layer standards such as MCP, operating as the semantic application layer above existing tool discovery and invocation protocols.
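As a rough illustration of the six-verb protocol and the decision-support metadata described in this abstract, the sketch below models a tool response in Python. Only the six verb names and the three metadata kinds (confidence score, evidence chain, suggested next actions) come from the abstract; the class name `ToolResponse`, its field names, and the `next_verb` helper are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Verb(Enum):
    """The six interaction phases named in the abstract."""
    SEARCH = "search"
    RESOLVE = "resolve"
    PREVIEW = "preview"
    EXECUTE = "execute"
    VERIFY = "verify"
    RECOVER = "recover"


@dataclass
class ToolResponse:
    """Hypothetical response envelope; field names are assumptions."""
    verb: Verb                      # phase that produced this response
    ok: bool                        # whether the call succeeded
    confidence: float               # assumed to lie in [0.0, 1.0]
    evidence: List[str] = field(default_factory=list)         # evidence chain
    suggested_next: List[Verb] = field(default_factory=list)  # next actions


def next_verb(resp: ToolResponse) -> Optional[Verb]:
    """Follow the tool's first suggestion; otherwise recover on failure."""
    if resp.suggested_next:
        return resp.suggested_next[0]
    return None if resp.ok else Verb.RECOVER


resolved = ToolResponse(
    verb=Verb.RESOLVE,
    ok=True,
    confidence=0.9,
    evidence=["matched customer by display name"],
    suggested_next=[Verb.PREVIEW],
)
print(next_verb(resolved))  # the agent previews before executing
```

The point of the sketch is that the response itself tells the agent what to do next, rather than leaving it to infer the next step from a rendering-oriented payload.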

Beyond Autonomy: A Dynamic Tiered AgentRunner Framework for Governable and Resilient Enterprise AI Execution

May 11, 2026

Abstract: Current large language model agent frameworks prioritize autonomy but lack the governability mechanisms required for enterprise deployment. High-risk write operations proceed without independent review, complex tasks lack acceptance verification, and computational resources are allocated uniformly regardless of risk level. We propose the Dynamic Tiered AgentRunner, a controlled execution protocol distilled from a production-grade multi-tenant SaaS platform. The framework introduces three core mechanisms: (1) Risk-Adaptive Tiering that dynamically allocates computational resources and review intensity based on task risk profiles, achieving Pareto-optimal trade-offs between safety and efficiency; (2) Separation of Powers architecture where proposal, review, execution, and verification are performed by independent agents with physically isolated boundaries; and (3) Resilience-by-Design through a Verifier-Recovery closed loop that treats failure as a first-class system state. We formalize the tier selectio

Super-Resolution on Rotationally Scanned Photoacoustic Microscopy Images Incorporating Scanning Prior

Dec 12, 2023

Abstract: Photoacoustic Microscopy (PAM) images, which integrate the advantages of optical contrast and acoustic resolution, have been widely used in brain studies. However, there is a trade-off between scanning speed and image resolution. Compared with traditional raster scanning, rotational scanning offers good opportunities for fast PAM imaging by optimizing the scanning mechanism. Recently, there has been a trend to incorporate deep learning into the scanning process to further increase the scanning speed. Yet most such attempts target raster scanning, while those for rotational scanning are relatively rare. In this study, we propose a novel and well-performing super-resolution framework for rotational scanning-based PAM imaging. To eliminate adjacent rows' displacements due to subject motion or high-frequency scanning distortion, we introduce a registration module across odd and even rows in the preprocessing and incorporate displacement degradation in the training. In addition, gradient-based patch selection is proposed to increase the probability of blood vessel patches being selected for training. A Transformer-based network with a global receptive field is applied for better performance. Experimental results on both synthetic and real datasets demonstrate the effectiveness and generalizability of our proposed framework for super-resolution of rotationally scanned PAM images, both quantitatively and qualitatively. Code is available at https://github.com/11710615/PAMSR.git.
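One way to read the gradient-based patch selection mentioned in this abstract is as weighted sampling: patches with more edge content (such as vessel boundaries) are drawn more often. The pure-Python sketch below assumes sampling probability proportional to the summed absolute intensity differences inside each patch. That weighting rule, the non-overlapping patch grid, and all function names are assumptions for illustration and are not taken from the linked repository.

```python
import random
from typing import List, Tuple

Image = List[List[float]]


def gradient_energy(img: Image, top: int, left: int, size: int) -> float:
    """Sum of absolute forward differences inside one size x size patch."""
    total = 0.0
    for r in range(top, top + size):
        for c in range(left, left + size):
            if c + 1 < left + size:   # horizontal difference
                total += abs(img[r][c + 1] - img[r][c])
            if r + 1 < top + size:    # vertical difference
                total += abs(img[r + 1][c] - img[r][c])
    return total


def patch_weights(img: Image, size: int) -> Tuple[List[Tuple[int, int]], List[float]]:
    """Score every non-overlapping patch by its gradient energy."""
    rows, cols = len(img), len(img[0])
    corners = [(r, c)
               for r in range(0, rows - size + 1, size)
               for c in range(0, cols - size + 1, size)]
    # A small constant keeps flat patches selectable with low probability.
    weights = [gradient_energy(img, r, c, size) + 1e-6 for r, c in corners]
    return corners, weights


def sample_patches(img: Image, size: int, k: int, seed: int = 0) -> List[Tuple[int, int]]:
    """Draw k patch corners with probability proportional to their weight."""
    corners, weights = patch_weights(img, size)
    rng = random.Random(seed)
    return rng.choices(corners, weights=weights, k=k)


# Toy 4x4 image: flat background with one bright pixel in the top-right patch.
toy = [[0.0] * 4 for _ in range(4)]
toy[0][3] = 1.0
picked = sample_patches(toy, size=2, k=20)
```

On the toy image, only the top-right patch has nonzero gradient energy, so it dominates the draws while the flat background patches are almost never selected.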

DS3-Net: Difficulty-perceived Common-to-T1ce Semi-Supervised Multimodal MRI Synthesis Network

Mar 14, 2022

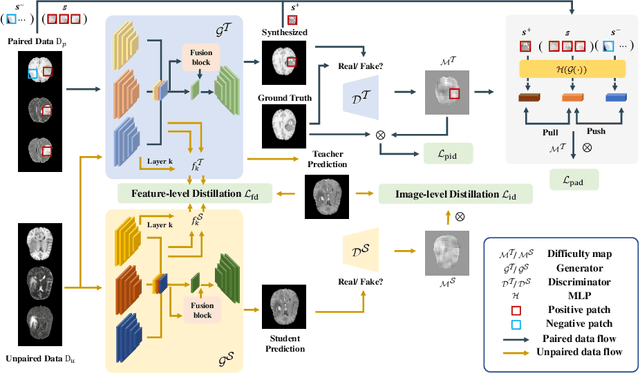

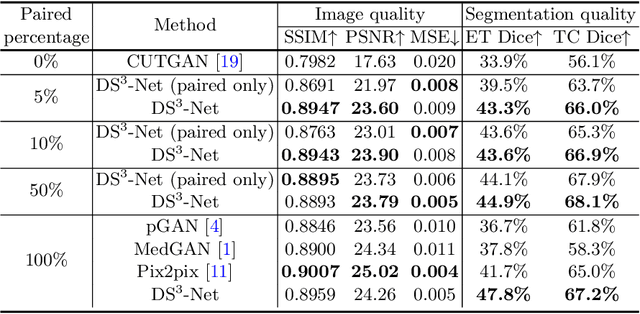

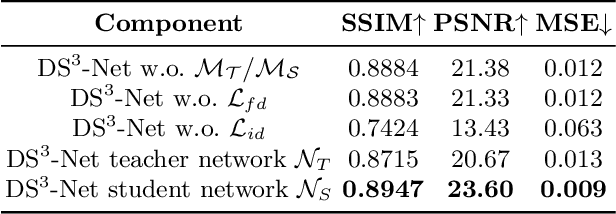

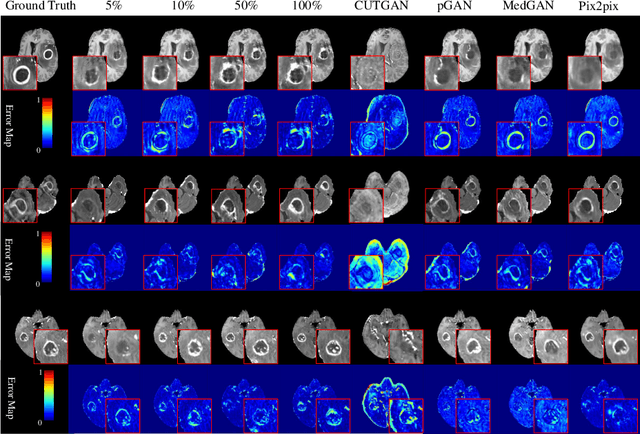

Abstract: Contrast-enhanced T1 (T1ce) is one of the most essential magnetic resonance imaging (MRI) modalities for diagnosing and analyzing brain tumors, especially gliomas. In clinical practice, common MRI modalities such as T1, T2, and fluid attenuation inversion recovery are relatively easy to access, while T1ce is more challenging to obtain given the additional cost and the potential risk of allergies to the contrast agent. Therefore, there is a strong clinical need for a method that synthesizes T1ce from other common modalities. Current paired image translation methods typically require a large amount of paired data and do not focus on specific regions of interest, e.g., the tumor region, in the synthesis process. To address these issues, we propose a Difficulty-perceived common-to-T1ce Semi-Supervised multimodal MRI Synthesis network (DS3-Net), involving both paired and unpaired data together with dual-level knowledge distillation. DS3-Net predicts a difficulty map to progressively promote the synthesis task. Specifically, a pixelwise constraint and a patchwise contrastive constraint are guided by the predicted difficulty map. Through extensive experiments on the publicly available BraTS2020 dataset, DS3-Net outperforms its supervised counterpart in each respect. Furthermore, with only 5% paired data, the proposed DS3-Net achieves competitive performance with state-of-the-art image translation methods utilizing 100% paired data, delivering an average SSIM of 0.8947 and an average PSNR of 23.60.
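For readers unfamiliar with the PSNR figure quoted in this abstract, the standard definition is given below. The symbols are generic notation and are not taken from the paper: $x$ is the reference image, $\hat{x}$ the synthesized image, $N$ the number of pixels, and $\mathrm{MAX}$ the maximum possible pixel value. PSNR is conventionally reported in decibels, and higher is better.

```latex
\mathrm{MSE} = \frac{1}{N}\sum_{i=1}^{N}\left(x_i - \hat{x}_i\right)^2,
\qquad
\mathrm{PSNR} = 10\,\log_{10}\!\left(\frac{\mathrm{MAX}^2}{\mathrm{MSE}}\right)
```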

Improvements in Micro-CT Method for Characterizing X-ray Monocapillary Optics

Jun 28, 2021

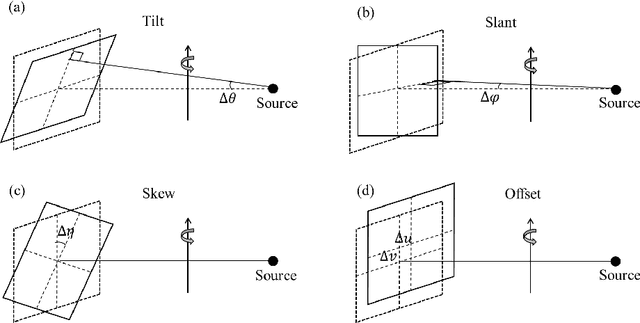

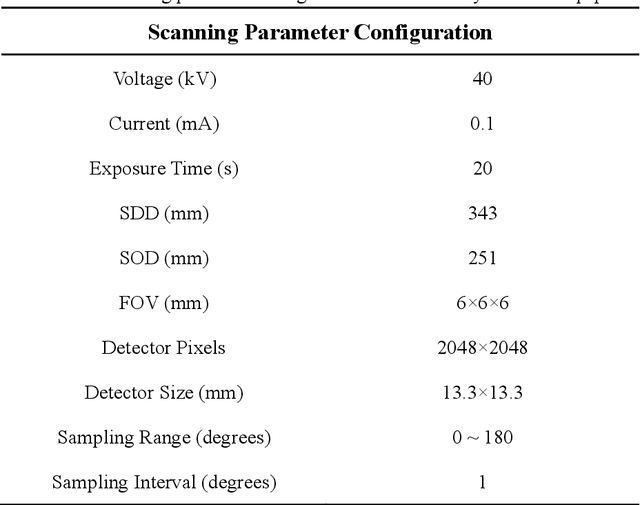

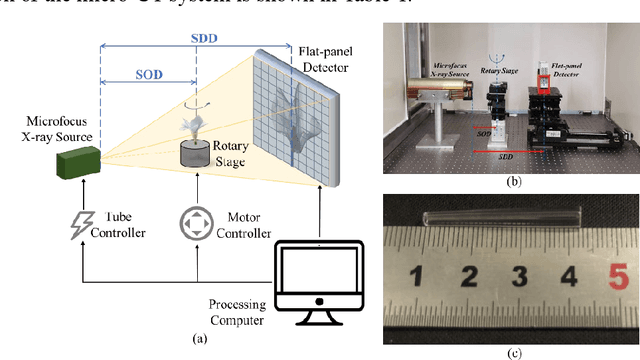

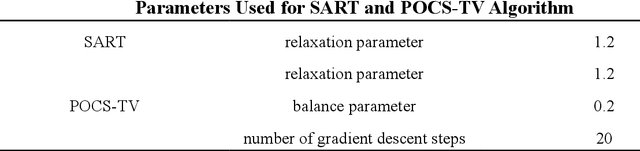

Abstract: Accurate characterization of the inner surface of X-ray monocapillary optics (XMCO) is of great significance in X-ray optics research. Compared with other characterization methods, the micro computed tomography (micro-CT) method has unique advantages but also some disadvantages, such as a long scanning time, a long image reconstruction time, and an inconvenient scanning process. In this paper, sparse sampling is proposed to shorten the scanning time, GPU acceleration is used to speed up image reconstruction, and a simple geometric calibration algorithm is proposed to avoid the need for a calibration phantom and simplify the scanning process. These methodologies will help popularize the micro-CT method for XMCO characterization.
