Jianhua Qin

Multi-scale Dynamic Wake Modeling of Floating Offshore Wind Turbines via Fourier Neural Operators and Physics-Informed Neural Networks

Apr 27, 2026

Abstract:Multi-scale dynamic wake prediction is essential for the real-time control and performance optimization of floating offshore wind turbines (FOWTs). In this study, Fourier neural operators (FNOs) and physics-informed neural networks (PINNs) are utilized for the first time to reconstruct and predict the complex turbulent wakes of an FOWT under coupled surge and pitch motions across a range of Strouhal numbers (St ∈ [0, 0.6]). Results demonstrate that while both models successfully capture dominant dynamic characteristics such as wake meandering, PINN-generated wakes appear relatively smooth and fail to resolve high-frequency coherent structures as well as the intensity of temporal variations in wake center and wake half-width. FNO resolves both large- and small-scale coherent turbulent structures with significantly higher fidelity. Furthermore, FNO trains approximately eight times faster than PINN and converges in far fewer epochs. Power spectral density (PSD) analysis reveals that FNO is more effective at capturing not only the primary wake meandering frequencies (St) but also their higher-order harmonics (e.g., 2St and 3St) and small-scale coherent structures. PINN, in contrast, effectively acts as a spatiotemporal low-pass filter: it resolves only large-scale dynamic features and fails to capture the other spectral signatures induced by coupled surge and pitch motions, thereby significantly underestimating the energy in the high-frequency regime. These findings suggest that FNO is a promising approach for FOWT wake prediction.
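The low-pass-filter behavior described in the abstract can be illustrated on synthetic data. The following is a minimal sketch and not the authors' analysis: the wake-center signal, its amplitudes, the base frequency `f0`, and the moving-average filter standing in for an overly smooth surrogate are all assumptions made for illustration. It compares the PSD at the base frequency and its second and third harmonics before and after smoothing.

```python
import numpy as np

def periodogram(signal, dt):
    """One-sided PSD estimate of a real signal via the FFT (n assumed even)."""
    n = signal.size
    spectrum = np.fft.rfft(signal - signal.mean())
    psd = (np.abs(spectrum) ** 2) * dt / n
    psd[1:-1] *= 2.0  # fold negative frequencies into the one-sided estimate
    freqs = np.fft.rfftfreq(n, d=dt)
    return freqs, psd

# Hypothetical wake-center signal: base frequency plus 2nd and 3rd harmonics.
f0 = 0.3            # assumed dimensionless meandering frequency
dt = 0.05           # assumed sampling interval
t = np.arange(4000) * dt
wake_center = (1.00 * np.sin(2 * np.pi * f0 * t)
               + 0.50 * np.sin(2 * np.pi * 2 * f0 * t)
               + 0.25 * np.sin(2 * np.pi * 3 * f0 * t))

# Stand-in for an overly smooth surrogate: a 21-sample moving average.
window = np.ones(21) / 21
smooth_center = np.convolve(wake_center, window, mode="same")

freqs, psd_full = periodogram(wake_center, dt)
_, psd_smooth = periodogram(smooth_center, dt)

# Fraction of spectral energy retained at each harmonic after smoothing.
retained = []
for k in (1, 2, 3):
    idx = np.argmin(np.abs(freqs - k * f0))
    retained.append(psd_smooth[idx] / psd_full[idx])
    print(f"{k} x f0: retained energy fraction = {retained[-1]:.3f}")
```

In this toy setting, the retained fraction drops with each harmonic, which is the qualitative signature of a low-pass filter: the dominant meandering peak survives while higher-order content is attenuated.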

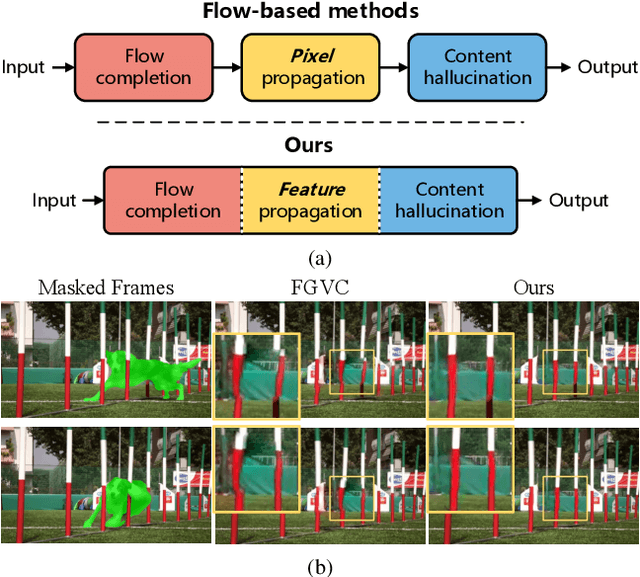

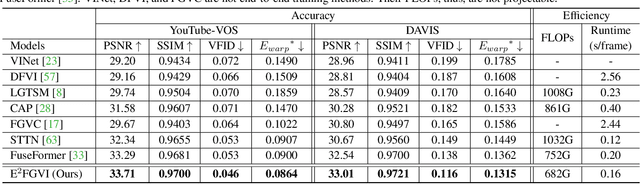

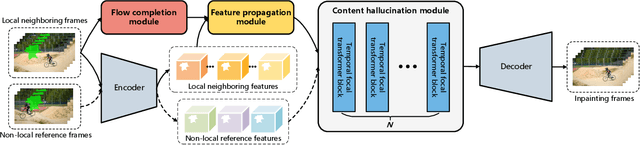

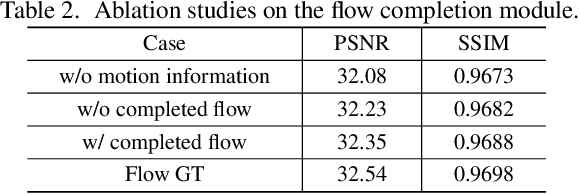

Towards An End-to-End Framework for Flow-Guided Video Inpainting

Apr 07, 2022

Abstract:Optical flow, which captures motion information across frames, is exploited in recent video inpainting methods by propagating pixels along its trajectories. However, the hand-crafted flow-based processes in these methods are applied separately to form the whole inpainting pipeline. As a result, these methods are less efficient and rely heavily on the intermediate results from earlier stages. In this paper, we propose an End-to-End framework for Flow-Guided Video Inpainting (E$^2$FGVI) built from three elaborately designed trainable modules, namely, flow completion, feature propagation, and content hallucination modules. The three modules correspond to the three stages of previous flow-based methods but can be jointly optimized, leading to a more efficient and effective inpainting process. Experimental results demonstrate that the proposed method outperforms state-of-the-art methods both qualitatively and quantitatively and shows promising efficiency. The code is available at https://github.com/MCG-NKU/E2FGVI.
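For readers unfamiliar with the pixel propagation step used by earlier flow-based methods, the sketch below shows the basic idea under simplifying assumptions. It is not the E$^2$FGVI implementation, whose modules are learned and operate on features: the function name `propagate_pixels`, the nearest-neighbor sampling, and the toy frames are hypothetical and chosen only for illustration. Here `flow[y, x] = (dx, dy)` is assumed to map a target pixel to its location in a neighboring frame.

```python
import numpy as np

def propagate_pixels(target, mask, neighbor, flow):
    """Fill masked pixels of `target` by sampling `neighbor` along `flow`.

    Returns the filled frame and a mask of pixels that could not be filled
    because the flow points outside the neighboring frame.
    """
    h, w = mask.shape
    ys, xs = np.mgrid[0:h, 0:w]
    # Nearest-neighbor lookup of the corresponding location in `neighbor`.
    src_x = np.rint(xs + flow[..., 0]).astype(int)
    src_y = np.rint(ys + flow[..., 1]).astype(int)
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    fill = mask & valid
    out = target.copy()
    out[fill] = neighbor[src_y[fill], src_x[fill]]
    return out, mask & ~valid

# Toy example: the scene shifts one pixel to the right in the neighbor frame.
neighbor = np.arange(16, dtype=float).reshape(4, 4)
ground_truth = np.roll(neighbor, -1, axis=1)   # target content before masking
mask = np.zeros((4, 4), dtype=bool)
mask[1:3, 1:3] = True                          # hole to be inpainted
target = ground_truth.copy()
target[mask] = 0.0
flow = np.zeros((4, 4, 2))
flow[..., 0] = 1.0                             # dx = +1, dy = 0 everywhere

filled, remaining = propagate_pixels(target, mask, neighbor, flow)
```

Pixels left in `remaining` are the ones a later stage would have to synthesize, which is the role the abstract assigns to content hallucination.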
