Ivana Collado-Gonzalez

Towards Versatile Opti-Acoustic Sensor Fusion and Volumetric Mapping

Mar 15, 2026

Abstract:Accurate 3D volumetric mapping is critical for autonomous underwater vehicles operating in obstacle-rich environments. Vision-based perception provides high-resolution data but fails in turbid conditions, while sonar is robust to lighting and turbidity but suffers from low resolution and elevation ambiguity. This paper presents a volumetric mapping framework that fuses a stereo sonar pair with a monocular camera to enable safe navigation under varying visibility conditions. Overlapping sonar fields of view resolve elevation ambiguity, producing fully defined 3D point clouds at each time step. The framework identifies regions of interest in camera images, associates them with corresponding sonar returns, and combines sonar range with camera-derived elevation cues to generate additional 3D points. Each 3D point is assigned a confidence value reflecting its reliability. These confidence-weighted points are fused using a Gaussian Process Volumetric Mapping framework that prioritizes the most reliable measurements. Experimental comparisons with other opti-acoustic and sonar-based approaches, along with field tests in a marina environment, demonstrate the method's effectiveness in capturing complex geometries and preserving critical information for robot navigation in both clear and turbid conditions. Our code is open-source to support community adoption.
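The step of combining sonar range with a camera-derived elevation cue can be illustrated with a minimal geometric sketch. It assumes the sonar and camera share an origin and are aligned, so that a camera elevation angle can be used directly in the sonar frame; the function name `fuse_range_elevation` and the axis convention (x forward, y to the side, z up) are hypothetical and are not taken from the paper.

```python
import math

def fuse_range_elevation(range_m, bearing_rad, elevation_rad):
    """Turn a sonar (range, bearing) return plus a camera-derived
    elevation angle into a 3D point.

    Assumption: both sensors are co-located and aligned, which a real
    system would replace with a calibrated extrinsic transform.
    """
    # Project the slant range onto the horizontal plane.
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(bearing_rad)   # forward
    y = horizontal * math.sin(bearing_rad)   # sideways
    z = range_m * math.sin(elevation_rad)    # up
    return (x, y, z)
```

A return at 2 m range, zero bearing, and 30 degrees of elevation would land about 1 m above the sensor plane under these assumptions.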

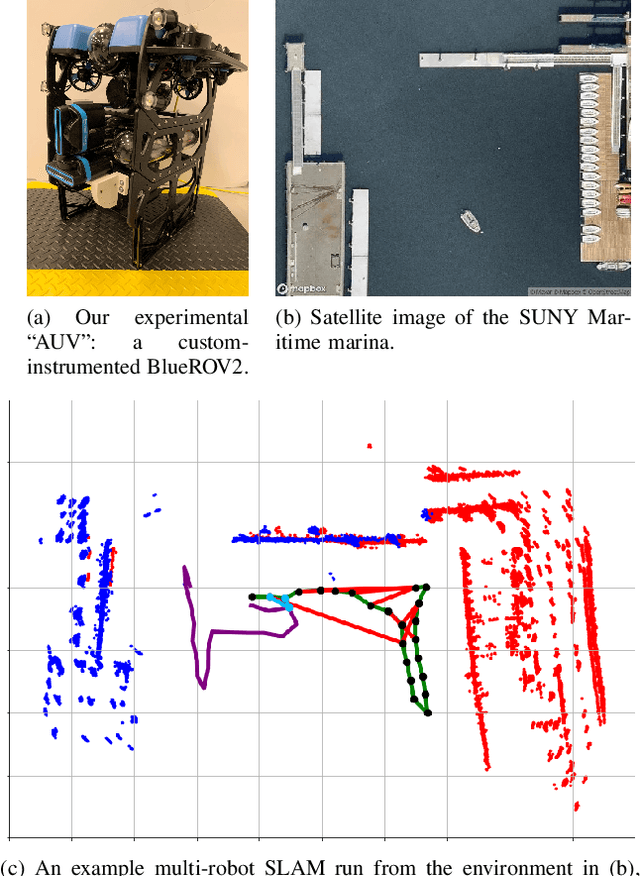

Large-Scale Dense 3D Mapping Using Submaps Derived From Orthogonal Imaging Sonars

Dec 04, 2024

Abstract:3D situational awareness is critical for any autonomous system. However, when operating underwater, environmental conditions often dictate the use of acoustic sensors. These acoustic sensors are plagued by high noise and a lack of 3D information in sonar imagery, motivating the use of an orthogonal pair of imaging sonars to recover 3D perceptual data. Thus far, mapping systems in this area only use a subset of the available data at discrete timesteps and rely on object-level prior information in the environment to develop high-coverage 3D maps. Moreover, simple repeating objects must be present to build high-coverage maps. In this work, we propose a submap-based mapping system integrated with a simultaneous localization and mapping (SLAM) system to produce dense, 3D maps of complex unknown environments with varying densities of simple repeating objects. We compare this submapping approach to our previous works in this area, analyzing simple and highly complex environments, such as submerged aircraft. We analyze the tradeoffs between a submapping-based approach and our previous work leveraging simple repeating objects. We show where each method is well-motivated and where they fall short. Importantly, our proposed use of submapping achieves an advance in underwater situational awareness with wide aperture multi-beam imaging sonar, moving toward generalized large-scale dense 3D mapping capability for fully unknown complex environments.
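The abstract does not spell out how submaps are tied to the SLAM solution. One common arrangement, assumed here purely for illustration, stores each submap's points in the frame of a keyframe and re-projects them into the world frame whenever SLAM updates that keyframe's pose. The function name `submap_to_world` and the planar pose `(x, y, yaw)` are hypothetical simplifications.

```python
import math

def submap_to_world(local_points, pose):
    """Re-project submap points into the world frame.

    local_points: list of (x, y, z) in the submap's keyframe frame.
    pose: (x, y, yaw) of that keyframe in the world frame; a planar
    pose is an assumption made to keep the sketch short.
    """
    tx, ty, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate about the vertical axis, then translate; z is unchanged.
    return [(tx + c * px - s * py, ty + s * px + c * py, pz)
            for px, py, pz in local_points]
```

Because the points stay in local coordinates, a pose correction from SLAM only requires calling the function again with the updated pose rather than rebuilding the submap.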

Real-Time Planning Under Uncertainty for AUVs Using Virtual Maps

Mar 07, 2024

Abstract:Reliable localization is an essential capability for marine robots navigating in GPS-denied environments. SLAM, commonly used to mitigate dead reckoning errors, still fails in feature-sparse environments or with limited-range sensors. Pose estimation can be improved by incorporating the uncertainty prediction of future poses into the planning process and choosing actions that reduce uncertainty. However, performing belief propagation is computationally costly, especially when operating in large-scale environments. This work proposes a computationally efficient planning under uncertainty framework suitable for large-scale, feature-sparse environments. Our strategy leverages SLAM graph and occupancy map data obtained from a prior exploration phase to create a virtual map, describing the uncertainty of each map cell using a multivariate Gaussian. The virtual map is then used as a cost map in the planning phase, and performing belief propagation at each step is avoided. A receding horizon planning strategy is implemented, managing a goal-reaching and uncertainty-reduction tradeoff. Simulation experiments in a realistic underwater environment validate this approach. Experimental comparisons against a full belief propagation approach and a standard shortest-distance approach are conducted.
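The idea of using the virtual map as a cost map can be sketched as a single planning step that trades distance to the goal against stored per-cell uncertainty. This is an illustrative simplification, not the paper's planner: the covariance trace as the uncertainty scalar, the additive cost, and the names `cell_uncertainty`, `pick_next_cell`, and `weight` are all assumptions.

```python
import math

def cell_uncertainty(cov):
    """Scalar uncertainty of a cell from its 2x2 covariance.

    The trace is used here as an assumed summary statistic.
    """
    return cov[0][0] + cov[1][1]

def pick_next_cell(candidates, goal, virtual_map, weight):
    """Choose the candidate cell with the lowest blended cost.

    virtual_map maps a cell (x, y) to its precomputed covariance, so
    no belief propagation is performed at planning time.
    """
    def cost(cell):
        dist = math.hypot(goal[0] - cell[0], goal[1] - cell[1])
        return dist + weight * cell_uncertainty(virtual_map[cell])
    return min(candidates, key=cost)
```

With `weight` set to zero the step reduces to greedy shortest-distance behaviour; larger values push the choice toward cells where the virtual map predicts better localization.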

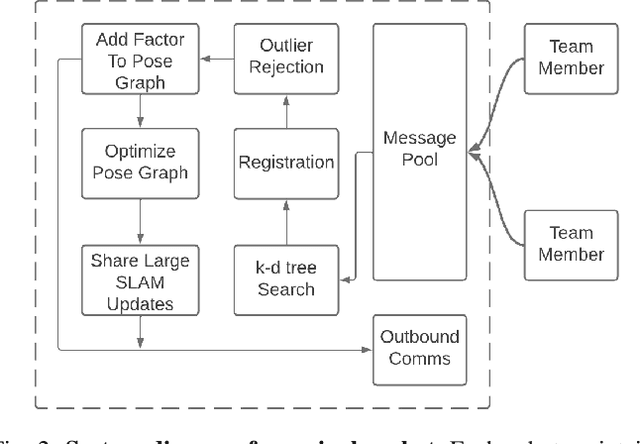

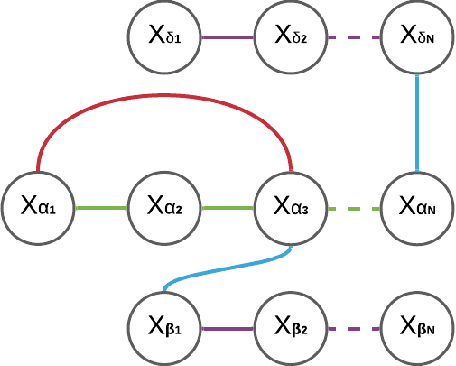

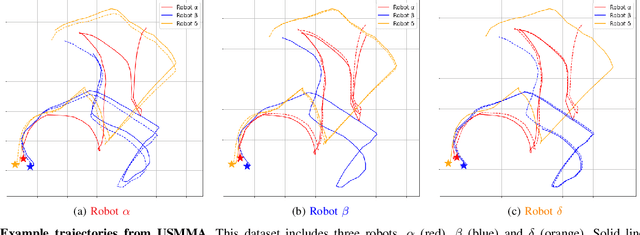

DRACo-SLAM: Distributed Robust Acoustic Communication-efficient SLAM for Imaging Sonar Equipped Underwater Robot Teams

Oct 03, 2022

Abstract:An essential task for a multi-robot system is generating a common understanding of the environment and relative poses between robots. Cooperative tasks can be executed only when a vehicle has knowledge of its own state and the states of the team members. However, this has primarily been achieved with direct rendezvous between underwater robots, via inter-robot ranging. We propose a novel distributed multi-robot simultaneous localization and mapping (SLAM) framework for underwater robots using imaging sonar-based perception. By passing only scene descriptors between robots, we do not need to pass raw sensor data unless there is a likelihood of inter-robot loop closure. We utilize pairwise consistent measurement set maximization (PCM), making our system robust to erroneous loop closures. The functionality of our system is demonstrated using two real-world datasets, one with three robots and another with two robots. We show that our system effectively estimates the trajectories of the multi-robot system and keeps the bandwidth requirements of inter-robot communication low. To our knowledge, this paper describes the first instance of multi-robot SLAM using real imaging sonar data (which we implement offline, using simulated communication). Code link: https://github.com/jake3991/DRACo-SLAM.
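The intuition behind pairwise consistent measurement set maximization can be shown with a toy one-dimensional sketch. Each candidate inter-robot loop closure implies an offset between the two robots' frames; two closures are treated as consistent when their implied offsets agree within a tolerance, and the largest mutually consistent set is kept. The 1D poses, the fixed tolerance `tol`, the brute-force search, and the function names are assumptions for illustration only; a real system would work with full poses and a statistical consistency test, and this is not the DRACo-SLAM implementation.

```python
from itertools import combinations

def implied_offset(closure):
    """Offset of robot B's frame origin expressed in robot A's frame.

    closure = (a_pos, b_pos, measured), where measured is the position
    of B's pose relative to A's pose (1D toy model).
    """
    a_pos, b_pos, measured = closure
    return a_pos + measured - b_pos

def largest_consistent_set(closures, tol):
    """Return indices of the largest set of closures whose implied
    offsets agree pairwise within tol (brute force, small inputs only)."""
    offsets = [implied_offset(c) for c in closures]
    n = len(closures)
    for size in range(n, 0, -1):
        for subset in combinations(range(n), size):
            if all(abs(offsets[i] - offsets[j]) <= tol
                   for i, j in combinations(subset, 2)):
                return list(subset)
    return []
```

Given three closures that agree on an offset near 5 and one outlier implying an offset of 9, the outlier is excluded from the returned set, which is the robustness property the abstract attributes to PCM.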
