Ian Steenstra

Assessing Risks of Large Language Models in Mental Health Support: A Framework for Automated Clinical AI Red Teaming

Feb 23, 2026Abstract:Large Language Models (LLMs) are increasingly utilized for mental health support; however, current safety benchmarks often fail to detect the complex, longitudinal risks inherent in therapeutic dialogue. We introduce an evaluation framework that pairs AI psychotherapists with simulated patient agents equipped with dynamic cognitive-affective models and assesses therapy session simulations against a comprehensive quality of care and risk ontology. We apply this framework to a high-impact test case, Alcohol Use Disorder, evaluating six AI agents (including ChatGPT, Gemini, and Character.AI) against a clinically-validated cohort of 15 patient personas representing diverse clinical phenotypes. Our large-scale simulation (N=369 sessions) reveals critical safety gaps in the use of AI for mental health support. We identify specific iatrogenic risks, including the validation of patient delusions ("AI Psychosis") and failure to de-escalate suicide risk. Finally, we validate an interactive data visualization dashboard with diverse stakeholders, including AI engineers and red teamers, mental health professionals, and policy experts (N=9), demonstrating that this framework effectively enables stakeholders to audit the "black box" of AI psychotherapy. These findings underscore the critical safety risks of AI-provided mental health support and the necessity of simulation-based clinical red teaming before deployment.

A Risk Taxonomy for Evaluating AI-Powered Psychotherapy Agents

May 21, 2025Abstract:The proliferation of Large Language Models (LLMs) and Intelligent Virtual Agents acting as psychotherapists presents significant opportunities for expanding mental healthcare access. However, their deployment has also been linked to serious adverse outcomes, including user harm and suicide, facilitated by a lack of standardized evaluation methodologies capable of capturing the nuanced risks of therapeutic interaction. Current evaluation techniques lack the sensitivity to detect subtle changes in patient cognition and behavior during therapy sessions that may lead to subsequent decompensation. We introduce a novel risk taxonomy specifically designed for the systematic evaluation of conversational AI psychotherapists. Developed through an iterative process including review of the psychotherapy risk literature, qualitative interviews with clinical and legal experts, and alignment with established clinical criteria (e.g., DSM-5) and existing assessment tools (e.g., NEQ, UE-ATR), the taxonomy aims to provide a structured approach to identifying and assessing user/patient harms. We provide a high-level overview of this taxonomy, detailing its grounding, and discuss potential use cases. We discuss two use cases in detail: monitoring cognitive model-based risk factors during a counseling conversation to detect unsafe deviations, in both human-AI counseling sessions and in automated benchmarking of AI psychotherapists with simulated patients. The proposed taxonomy offers a foundational step towards establishing safer and more responsible innovation in the domain of AI-driven mental health support.

Scaffolding Empathy: Training Counselors with Simulated Patients and Utterance-level Performance Visualizations

Feb 25, 2025Abstract:Learning therapeutic counseling involves significant role-play experience with mock patients, with current manual training methods providing only intermittent granular feedback. We seek to accelerate and optimize counselor training by providing frequent, detailed feedback to trainees as they interact with a simulated patient. Our first application domain involves training motivational interviewing skills for counselors. Motivational interviewing is a collaborative counseling style in which patients are guided to talk about changing their behavior, with empathetic counseling an essential ingredient. We developed and evaluated an LLM-powered training system that features a simulated patient and visualizations of turn-by-turn performance feedback tailored to the needs of counselors learning motivational interviewing. We conducted an evaluation study with professional and student counselors, demonstrating high usability and satisfaction with the system. We present design implications for the development of automated systems that train users in counseling skills and their generalizability to other types of social skills training.

Virtual Agents for Alcohol Use Counseling: Exploring LLM-Powered Motivational Interviewing

Jul 10, 2024

Abstract:We introduce a novel application of large language models (LLMs) in developing a virtual counselor capable of conducting motivational interviewing (MI) for alcohol use counseling. Access to effective counseling remains limited, particularly for substance abuse, and virtual agents offer a promising solution by leveraging LLM capabilities to simulate nuanced communication techniques inherent in MI. Our approach combines prompt engineering and integration into a user-friendly virtual platform to facilitate realistic, empathetic interactions. We evaluate the effectiveness of our virtual agent through a series of studies focusing on replicating MI techniques and human counselor dialog. Initial findings suggest that our LLM-powered virtual agent matches human counselors' empathetic and adaptive conversational skills, presenting a significant step forward in virtual health counseling and providing insights into the design and implementation of LLM-based therapeutic interactions.

Empathic Grounding: Explorations using Multimodal Interaction and Large Language Models with Conversational Agents

Jul 01, 2024Abstract:We introduce the concept of "empathic grounding" in conversational agents as an extension of Clark's conceptualization of grounding in conversation in which the grounding criterion includes listener empathy for the speaker's affective state. Empathic grounding is generally required whenever the speaker's emotions are foregrounded and can make the grounding process more efficient and reliable by communicating both propositional and affective understanding. Both speaker expressions of affect and listener empathic grounding can be multimodal, including facial expressions and other nonverbal displays. Thus, models of empathic grounding for embodied agents should be multimodal to facilitate natural and efficient communication. We describe a multimodal model that takes as input user speech and facial expression to generate multimodal grounding moves for a listening agent using a large language model. We also describe a testbed to evaluate approaches to empathic grounding, in which a humanoid robot interviews a user about a past episode of pain and then has the user rate their perception of the robot's empathy. We compare our proposed model to one that only generates non-affective grounding cues in a between-subjects experiment. Findings demonstrate that empathic grounding increases user perceptions of empathy, understanding, emotional intelligence, and trust. Our work highlights the role of emotion awareness and multimodality in generating appropriate grounding moves for conversational agents.

Multimodal Dialogue State Tracking By QA Approach with Data Augmentation

Jul 20, 2020

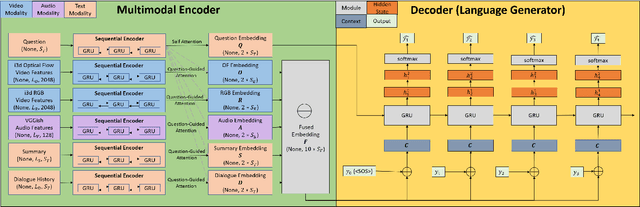

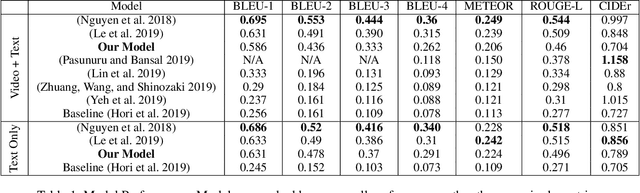

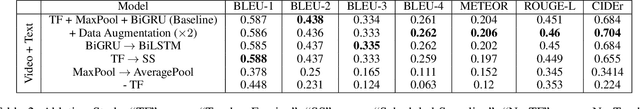

Abstract:Recently, a more challenging state tracking task, Audio-Video Scene-Aware Dialogue (AVSD), is catching an increasing amount of attention among researchers. Different from purely text-based dialogue state tracking, the dialogue in AVSD contains a sequence of question-answer pairs about a video and the final answer to the given question requires additional understanding of the video. This paper interprets the AVSD task from an open-domain Question Answering (QA) point of view and proposes a multimodal open-domain QA system to deal with the problem. The proposed QA system uses common encoder-decoder framework with multimodal fusion and attention. Teacher forcing is applied to train a natural language generator. We also propose a new data augmentation approach specifically under QA assumption. Our experiments show that our model and techniques bring significant improvements over the baseline model on the DSTC7-AVSD dataset and demonstrate the potentials of our data augmentation techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge