Hancock Edwin

From Quantum Graph Computing to Quantum Graph Learning: A Survey

Feb 19, 2022

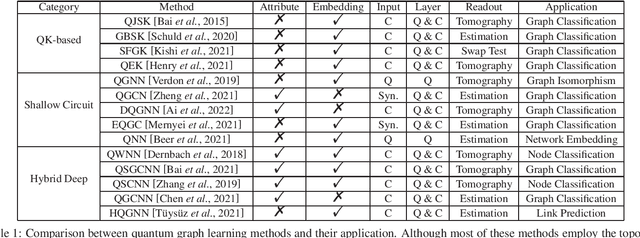

Abstract: Quantum computing (QC) is a new computational paradigm whose foundations relate to quantum physics. Notable progress has been made, driving the birth of a series of quantum-based algorithms that take advantage of quantum computational power. In this paper, we provide a targeted survey of the development of QC for graph-related tasks. We first elaborate on the correlations between quantum mechanics and graph theory to show that, for some graph-related problems, quantum computers can generate useful solutions that classical systems cannot produce efficiently. Given the practicality and wide applicability of graph learning, we give a brief review of typical graph learning techniques designed for various tasks. Inspired by these powerful methods, we note that advanced quantum algorithms have been proposed for characterizing graph structures. We give a snapshot of quantum graph learning, where expectations serve as a catalyst for subsequent research. We further discuss the challenges of using quantum algorithms in graph learning, and future directions towards more flexible and versatile quantum graph learning solvers.

Fast Subspace Clustering Based on the Kronecker Product

Mar 15, 2018

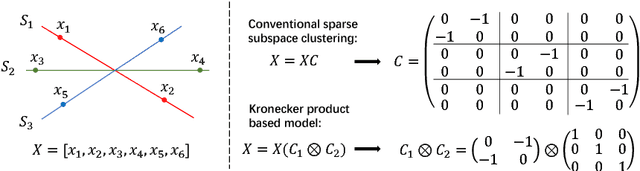

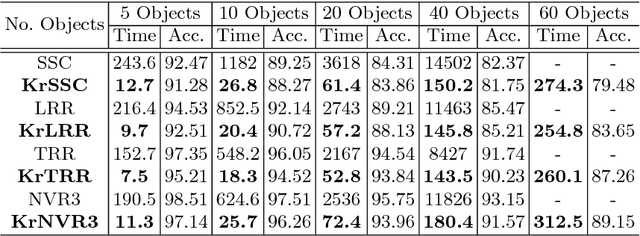

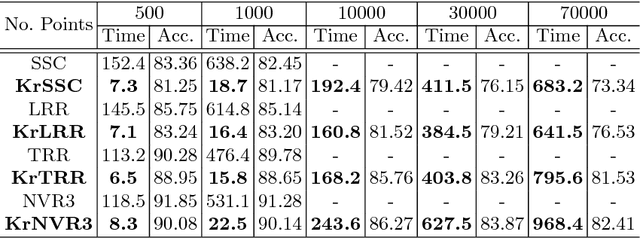

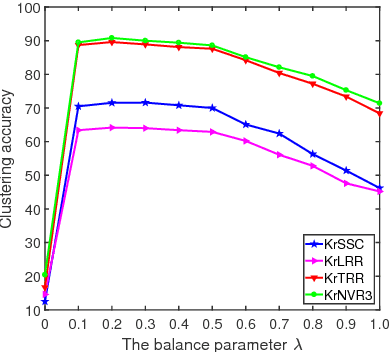

Abstract: Subspace clustering is a useful technique for many computer vision applications in which the intrinsic dimension of high-dimensional data is often smaller than the ambient dimension. Spectral clustering, one of the main approaches to subspace clustering, often uses a sparse or low-rank representation to learn a block diagonal self-representation matrix for subspace generation. However, existing methods require solving a large-scale convex optimization problem over a large set of data, with computational complexity reaching O(N^3) for N data points. Therefore, the efficiency and scalability of traditional spectral clustering methods cannot be guaranteed for large-scale datasets. In this paper, we propose a subspace clustering model based on the Kronecker product. Because the Kronecker product of a block diagonal matrix with any other matrix is still a block diagonal matrix, we can efficiently learn a representation matrix formed by the Kronecker product of k smaller matrices. By doing so, our model significantly reduces the computational complexity to O(kN^{3/k}). Furthermore, our model is general in nature and can be adapted to different regularization-based subspace clustering methods. Experimental results on two public datasets show that our model significantly improves efficiency compared with several state-of-the-art methods. Moreover, we have conducted experiments on synthetic data to verify the scalability of our model for large-scale datasets.
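
The block diagonal property that the abstract relies on can be checked on a small example. The following is a minimal sketch in plain Python, not the paper's implementation: the helper names `kron` and `is_block_diagonal` and the matrices `A` and `B` are hypothetical, chosen only for illustration. It takes the block diagonal matrix as the left factor, and each diagonal block of the product is the corresponding block of `A` scaled up by the row and column counts of `B`.

```python
def kron(a, b):
    """Kronecker product of two matrices given as lists of lists."""
    rows_b, cols_b = len(b), len(b[0])
    return [
        [a[i // rows_b][j // cols_b] * b[i % rows_b][j % cols_b]
         for j in range(len(a[0]) * cols_b)]
        for i in range(len(a) * rows_b)
    ]


def is_block_diagonal(m, block_sizes):
    """Return True if every entry outside the given diagonal blocks is zero."""
    # Label each row/column index with the id of the block it belongs to.
    labels = [blk for blk, size in enumerate(block_sizes) for _ in range(size)]
    n = len(m)
    return all(
        m[i][j] == 0
        for i in range(n)
        for j in range(n)
        if labels[i] != labels[j]
    )


# Hypothetical block diagonal matrix with diagonal blocks of size 1 and 2.
A = [[1, 0, 0],
     [0, 2, 3],
     [0, 4, 5]]

# Arbitrary dense 2x2 matrix (no special structure assumed).
B = [[1, 2],
     [3, 4]]

# Blocks of size 1 and 2 in A become blocks of size 2 and 4 in kron(A, B).
C = kron(A, B)
result = is_block_diagonal(C, [2, 4])
print(result)
```

In this sketch the block partition of the product is determined entirely by `A`, which is why a large block diagonal representation matrix can be assembled from much smaller factors.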
