Hallison Paz

Implicit Neural Representation of Tileable Material Textures

Feb 03, 2024Abstract:We explore sinusoidal neural networks to represent periodic tileable textures. Our approach leverages the Fourier series by initializing the first layer of a sinusoidal neural network with integer frequencies with a period $P$. We prove that the compositions of sinusoidal layers generate only integer frequencies with period $P$. As a result, our network learns a continuous representation of a periodic pattern, enabling direct evaluation at any spatial coordinate without the need for interpolation. To enforce the resulting pattern to be tileable, we add a regularization term, based on the Poisson equation, to the loss function. Our proposed neural implicit representation is compact and enables efficient reconstruction of high-resolution textures with high visual fidelity and sharpness across multiple levels of detail. We present applications of our approach in the domain of anti-aliased surface.

Neural Implicit Morphing of Face Images

Aug 26, 2023

Abstract:Face morphing is one of the seminal problems in computer graphics, with numerous artistic and forensic applications. It is notoriously challenging due to pose, lighting, gender, and ethnicity variations. Generally, this task consists of a warping for feature alignment and a blending for a seamless transition between the warped images. We propose to leverage coordinate-based neural networks to represent such warpings and blendings of face images. During training, we exploit the smoothness and flexibility of such networks, by combining energy functionals employed in classical approaches without discretizations. Additionally, our method is time-dependent, allowing a continuous warping, and blending of the target images. During warping inference, we need both direct and inverse transformations of the time-dependent warping. The first is responsible for morphing the target image into the source image, while the inverse is used for morphing in the opposite direction. Our neural warping stores those maps in a single network due to its inversible property, dismissing the hard task of inverting them. The results of our experiments indicate that our method is competitive with both classical and data-based neural techniques under the lens of face-morphing detection approaches. Aesthetically, the resulting images present a seamless blending of diverse faces not yet usual in the literature.

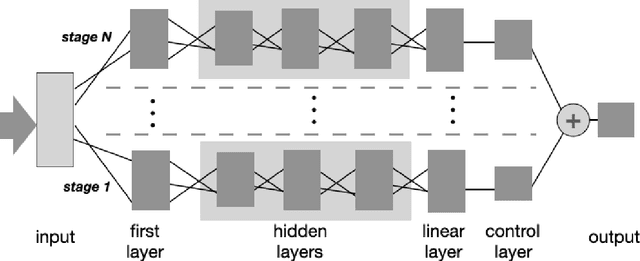

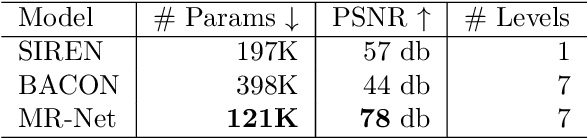

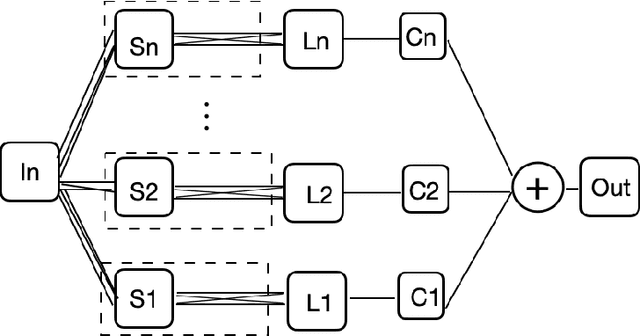

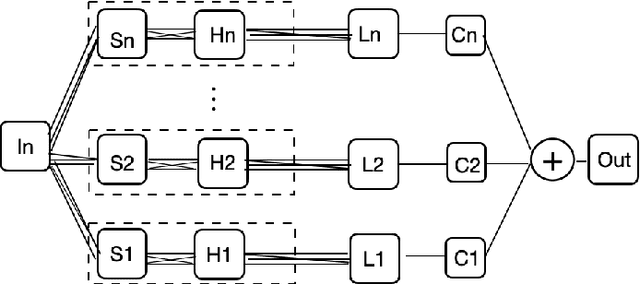

Multiresolution Neural Networks for Imaging

Aug 27, 2022

Abstract:We present MR-Net, a general architecture for multiresolution neural networks, and a framework for imaging applications based on this architecture. Our coordinate-based networks are continuous both in space and in scale as they are composed of multiple stages that progressively add finer details. Besides that, they are a compact and efficient representation. We show examples of multiresolution image representation and applications to texturemagnification, minification, and antialiasing. This document is the extended version of the paper [PNS+22]. It includes additional material that would not fit the page limitations of the conference track for publication.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge