Fernando M. Quintana

Bruno: Backpropagation Running Undersampled for Novel device Optimization

May 23, 2025

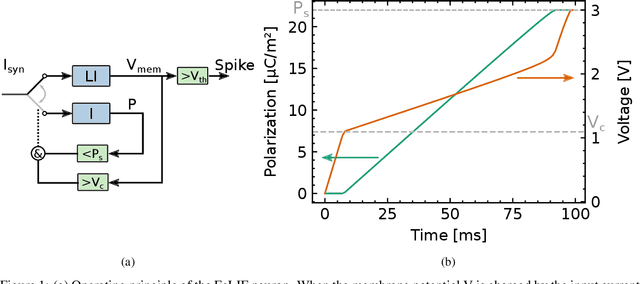

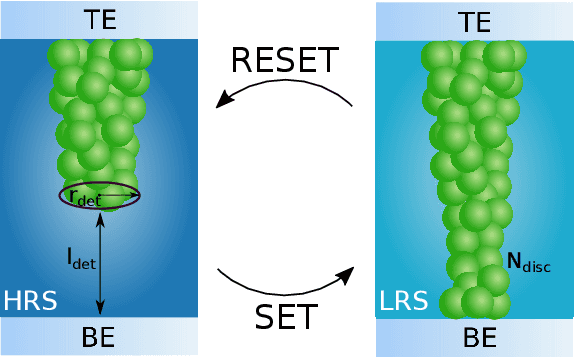

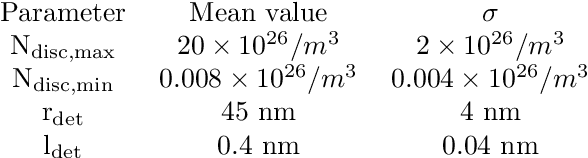

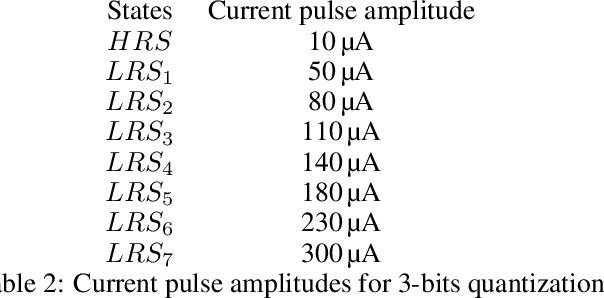

Abstract: Recent efforts to improve the efficiency of neuromorphic and machine learning systems have focused on the development of application-specific integrated circuits (ASICs), which provide hardware specialized for the deployment of neural networks, leading to potential gains in efficiency and performance. These systems typically feature an architecture that goes beyond the von Neumann architecture employed in general-purpose hardware such as GPUs. Neural networks developed for this specialized hardware then need to take into account the specifics of the hardware platform, which requires novel training algorithms and accurate models of the hardware, since it cannot be abstracted as a general-purpose computing platform. In this work, we present a bottom-up approach to train neural networks for hardware based on spiking neurons and synapses built on ferroelectric capacitors (FeCap) and resistive switching non-volatile devices (RRAM), respectively. In contrast to the more common approach of designing hardware to fit existing abstract neuron or synapse models, this approach starts with compact models of the physical device to model the computational primitive of the neurons. Based on these models, a training algorithm is developed that can reliably backpropagate through these physical models, even when applying common hardware limitations, such as stochasticity, variability, and low bit precision. The training algorithm is then tested on a spatio-temporal dataset with a network composed of quantized synapses based on RRAM and ferroelectric leaky integrate-and-fire (FeLIF) neurons. The performance of the network is compared with different networks composed of LIF neurons. The results of the experiments show the potential advantage of using BRUNO to train networks with FeLIF neurons, by achieving a reduction in both time and memory for detecting spatio-temporal patterns with quantized synapses.

WaLiN-GUI: a graphical and auditory tool for neuron-based encoding

Oct 25, 2023

Abstract: Neuromorphic computing relies on spike-based, energy-efficient communication, inherently implying the need for conversion between real-valued (sensory) data and binary, sparse spiking representations. This is usually accomplished by using the real-valued data as current input to a spiking neuron model and tuning the neuron's parameters to match a desired, often biologically inspired behaviour. We developed a tool, the WaLiN-GUI, that supports the investigation of neuron models and parameter combinations to identify suitable configurations for neuron-based encoding of sample-based data into spike trains. Due to the generalized LIF model implemented by default, next to the LIF and Izhikevich neuron models, many spiking behaviors can be investigated out of the box, thus offering the possibility of tuning biologically plausible responses to the input data. The GUI is provided open source and with documentation, and is easy to extend with further neuron models and to personalize with data analysis functions.
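The neuron-based encoding described in this abstract can be illustrated with a minimal sketch: real-valued samples are fed as input current to a plain leaky integrate-and-fire (LIF) neuron, and the neuron's threshold crossings form the binary spike train. This is not code from the WaLiN-GUI; the function name `lif_encode` and all parameter names and default values (`dt`, `tau`, `threshold`, `v_reset`, `gain`) are assumptions chosen for illustration.

```python
def lif_encode(samples, dt=1e-3, tau=20e-3, threshold=1.0, v_reset=0.0, gain=1.0):
    """Encode real-valued samples as a binary spike train with a LIF neuron.

    Hypothetical helper for illustration only; parameter names and defaults
    are assumptions, not values taken from the WaLiN-GUI.
    """
    v = v_reset
    spikes = []
    for x in samples:
        # Forward-Euler step of: tau * dv/dt = -v + gain * x
        v += (dt / tau) * (-v + gain * x)
        if v >= threshold:
            spikes.append(1)  # emit a spike and reset the membrane
            v = v_reset
        else:
            spikes.append(0)
    return spikes


# A stronger constant input should produce a denser spike train.
weak = lif_encode([1.5] * 200)
strong = lif_encode([2.0] * 200)
silent = lif_encode([0.5] * 200)  # steady state stays below threshold
print(sum(silent), sum(weak), sum(strong))
```

Tuning `tau`, `threshold`, or `gain` changes how the same input maps to spike counts and timing, which is the kind of parameter exploration the abstract describes.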

ETLP: Event-based Three-factor Local Plasticity for online learning with neuromorphic hardware

Jan 24, 2023

Abstract: Neuromorphic perception with event-based sensors, asynchronous hardware and spiking neurons is showing promising results for real-time and energy-efficient inference in embedded systems. The next promise of brain-inspired computing is to enable adaptation to changes at the edge with online learning. However, the parallel and distributed architectures of neuromorphic hardware based on co-localized compute and memory impose locality constraints on the on-chip learning rules. We propose in this work the Event-based Three-factor Local Plasticity (ETLP) rule that uses (1) the pre-synaptic spike trace, (2) the post-synaptic membrane voltage and (3) a third factor in the form of projected labels with no error calculation, that also serve as update triggers. We apply ETLP with feedforward and recurrent spiking neural networks on visual and auditory event-based pattern recognition, and compare it to Back-Propagation Through Time (BPTT) and eProp. We show a competitive performance in accuracy with a clear advantage in the computational complexity for ETLP. We also show that when using local plasticity, threshold adaptation in spiking neurons and a recurrent topology are necessary to learn spatio-temporal patterns with a rich temporal structure. Finally, we provide a proof-of-concept hardware implementation of ETLP on FPGA to highlight the simplicity of its computational primitives and how they can be mapped into neuromorphic hardware for online learning with low-energy consumption and real-time interaction.
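To make the three factors named in the abstract concrete, the following is a generic three-factor update sketch, not the authors' exact ETLP rule. It assumes a boxcar function of the post-synaptic membrane voltage around the threshold, a signed per-neuron label signal as the third factor, and a simple multiplicative combination; the names `boxcar`, `three_factor_update`, `label_signal`, and all constants are hypothetical.

```python
def boxcar(v, v_th=1.0, width=0.5):
    """Assumed local factor: 1 when the membrane voltage is near threshold."""
    return 1.0 if abs(v - v_th) < width else 0.0


def three_factor_update(weights, pre_trace, v_post, label_signal, lr=0.01):
    """Return updated weights[j][i] for post-neuron j and pre-neuron i.

    Illustrative only: the product form and sign convention are assumptions,
    not taken from the ETLP paper.
    """
    new_weights = [row[:] for row in weights]
    for j, (v, third) in enumerate(zip(v_post, label_signal)):
        if third == 0.0:
            continue  # no label event for this neuron, so no update is triggered
        local = boxcar(v)  # factor 2: post-synaptic membrane voltage
        for i, trace in enumerate(pre_trace):
            # factor 1 (pre-synaptic trace) x factor 2 x factor 3 (label signal)
            new_weights[j][i] += lr * third * local * trace
    return new_weights


w = [[0.0, 0.0], [0.0, 0.0]]
w = three_factor_update(w, pre_trace=[1.0, 0.0], v_post=[0.9, 0.9],
                        label_signal=[1.0, 0.0])
print(w)
```

Every quantity used in the update is available at the synapse or arrives as a label event, which reflects the locality constraint the abstract emphasizes; no error is propagated backward.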

Online programming system for robotic fillet welding in Industry 4.0

Dec 21, 2021

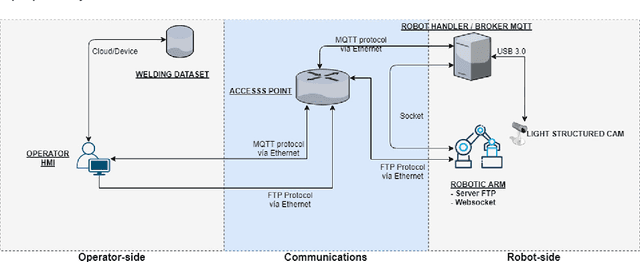

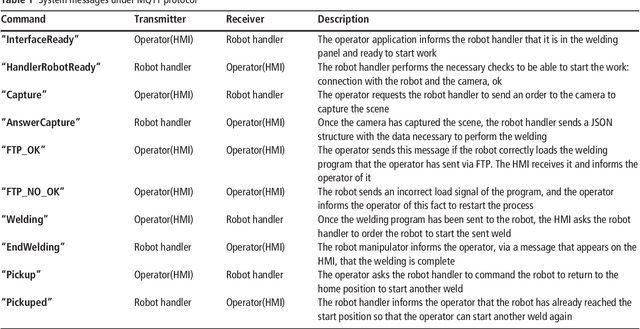

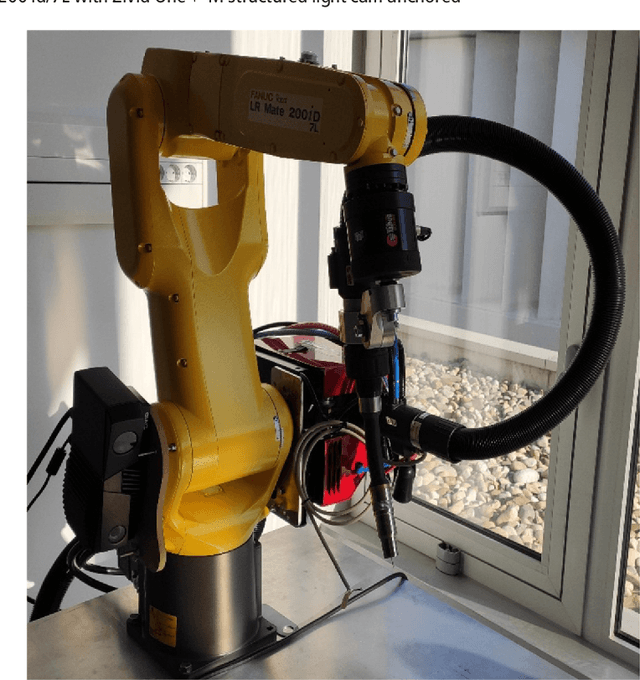

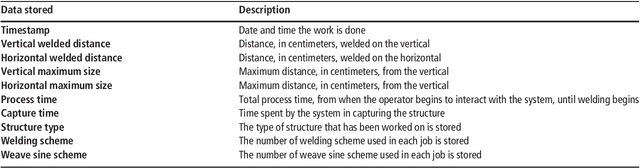

Abstract: Fillet welding is one of the most widespread types of welding in the industry, which is still carried out manually or automated by contact. This paper aims to describe an online programming system for noncontact fillet welding robots with U- and L-shaped structures, which responds to the needs of the Fourth Industrial Revolution. In this paper, the authors propose an online robot programming methodology that eliminates unnecessary steps traditionally performed in robotic welding, so that the operator only performs three steps to complete the welding task. First, choose the piece to weld. Then, enter the welding parameters. Finally, send the automatically generated program to the robot. The system managed to perform the fillet welding task with the proposed method in a shorter preparation time than the compared methods. For this, a reduced number of components was used compared to other systems: a structured light 3D camera, two computers and a concentrator, in addition to the six-axis industrial robotic arm. The operating complexity of the system has been reduced as much as possible. To the best of the authors' knowledge, there is no scientific or commercial evidence of an online robot programming system capable of performing a fillet welding process, simplifying the process so that it is completely transparent for the operator and framed in the Industry 4.0 paradigm. Its commercial potential lies mainly in its simple and low-cost implementation in a flexible system capable of adapting to any industrial fillet welding job and to any support that can accommodate it.
