Martin A. Nicholson

Bruno: Backpropagation Running Undersampled for Novel device Optimization

May 23, 2025

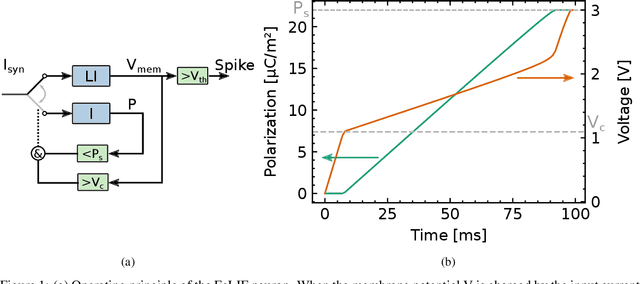

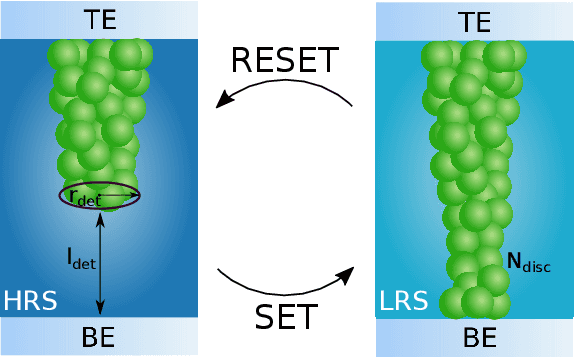

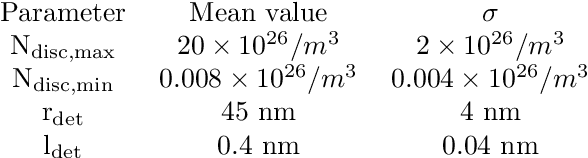

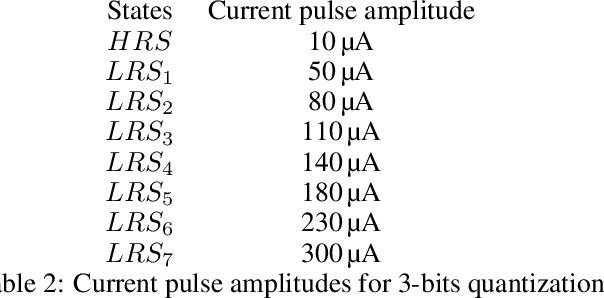

Abstract: Recent efforts to improve the efficiency of neuromorphic and machine learning systems have focused on the development of application-specific integrated circuits (ASICs), which provide hardware specialized for the deployment of neural networks, leading to potential gains in efficiency and performance. These systems typically feature an architecture that goes beyond the von Neumann architecture employed in general-purpose hardware such as GPUs. Neural networks developed for this specialized hardware must take into account the specifics of the hardware platform. This requires novel training algorithms and accurate models of the hardware, since such platforms cannot be abstracted as general-purpose computing platforms. In this work, we present a bottom-up approach to training neural networks for hardware based on spiking neurons and synapses built on ferroelectric capacitors (FeCap) and resistive switching non-volatile devices (RRAM), respectively. In contrast to the more common approach of designing hardware to fit existing abstract neuron or synapse models, this approach starts with compact models of the physical devices to model the computational primitive of the neurons. Based on these models, a training algorithm (BRUNO) is developed that can reliably backpropagate through these physical models, even when applying common hardware limitations such as stochasticity, variability, and low bit precision. The training algorithm is then tested on a spatio-temporal dataset with a network composed of quantized synapses based on RRAM and ferroelectric leaky integrate-and-fire (FeLIF) neurons. The performance of this network is compared with that of different networks composed of LIF neurons. The results of the experiments show the potential advantage of using BRUNO to train networks with FeLIF neurons: it achieves a reduction in both time and memory for detecting spatio-temporal patterns with quantized synapses.
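To make the two ingredients named in the abstract more concrete, the following is a minimal sketch of a layer of standard leaky integrate-and-fire (LIF) neurons driven through low-bit quantized synaptic weights. It is not the BRUNO algorithm and not the FeLIF or RRAM device models, which the abstract does not specify; it covers only the forward pass of a textbook discrete-time LIF with a hard reset. The function names (`quantize`, `lif_forward`) and all parameters (`bits`, `w_max`, `beta`, `threshold`) are illustrative assumptions.

```python
import numpy as np

def quantize(w, bits=4, w_max=1.0):
    """Uniformly quantize weights onto 2**bits levels in [-w_max, w_max].

    Assumption: a symmetric uniform grid; real RRAM conductance levels
    are generally neither uniform nor noise-free.
    """
    n_steps = 2 ** bits - 1              # number of intervals between levels
    step = 2.0 * w_max / n_steps
    w = np.clip(w, -w_max, w_max)
    return np.round((w + w_max) / step) * step - w_max

def lif_forward(spikes_in, w, beta=0.9, threshold=1.0):
    """Simulate a layer of textbook LIF neurons (forward pass only).

    spikes_in: (T, n_in) array of 0/1 input spikes
    w:         (n_in, n_out) synaptic weights
    Returns a (T, n_out) array of 0/1 output spikes.
    """
    n_steps_t = spikes_in.shape[0]
    v = np.zeros(w.shape[1])             # membrane potentials
    spikes_out = np.zeros((n_steps_t, w.shape[1]))
    for t in range(n_steps_t):
        v = beta * v + spikes_in[t] @ w  # leak, then integrate input current
        fired = v >= threshold
        spikes_out[t] = fired
        v = np.where(fired, 0.0, v)      # hard reset after a spike
    return spikes_out

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w_q = quantize(rng.normal(0.0, 0.5, size=(8, 3)), bits=3)
    x = (rng.random((20, 8)) < 0.3).astype(float)
    print(lif_forward(x, w_q).sum(axis=0))  # spike count per output neuron
```

Training through such a network requires handling the non-differentiable spike threshold and the rounding step; how BRUNO does this for the physical device models is described in the paper itself and is not reproduced here.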
