Eleanor Hagerman

Shammie

Beyond the Imitation Game: Quantifying and extrapolating the capabilities of language models

Jun 10, 2022

Abstract: Language models demonstrate both quantitative improvement and new qualitative capabilities with increasing scale. Despite their potentially transformative impact, these new capabilities are as yet poorly characterized. In order to inform future research, prepare for disruptive new model capabilities, and ameliorate socially harmful effects, it is vital that we understand the present and near-future capabilities and limitations of language models. To address this challenge, we introduce the Beyond the Imitation Game benchmark (BIG-bench). BIG-bench currently consists of 204 tasks, contributed by 442 authors across 132 institutions. Task topics are diverse, drawing problems from linguistics, childhood development, math, common-sense reasoning, biology, physics, social bias, software development, and beyond. BIG-bench focuses on tasks that are believed to be beyond the capabilities of current language models. We evaluate the behavior of OpenAI's GPT models, Google-internal dense transformer architectures, and Switch-style sparse transformers on BIG-bench, across model sizes spanning millions to hundreds of billions of parameters. In addition, a team of human expert raters performed all tasks in order to provide a strong baseline. Findings include: model performance and calibration both improve with scale, but are poor in absolute terms (and when compared with rater performance); performance is remarkably similar across model classes, though with benefits from sparsity; tasks that improve gradually and predictably commonly involve a large knowledge or memorization component, whereas tasks that exhibit "breakthrough" behavior at a critical scale often involve multiple steps or components, or brittle metrics; social bias typically increases with scale in settings with ambiguous context, but this can be improved with prompting.

Specificity-Based Sentence Ordering for Multi-Document Extractive Risk Summarization

Sep 23, 2019

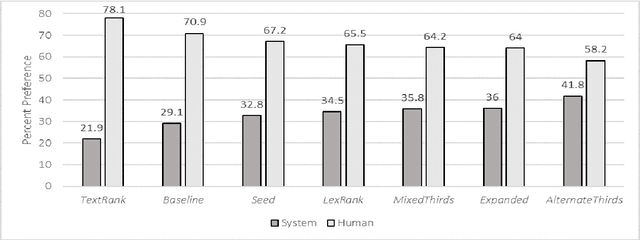

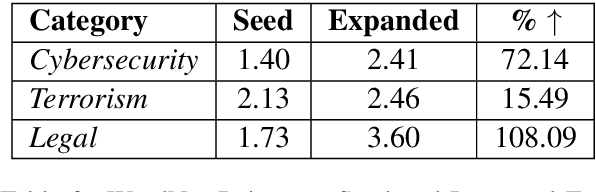

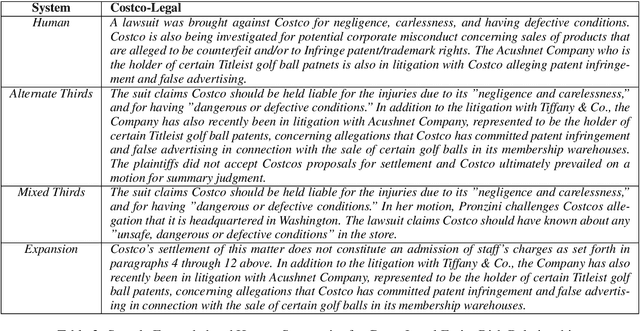

Abstract: Risk mining technologies seek to find relevant textual extractions that capture entity-risk relationships. However, when high-volume data sets are processed, a multitude of relevant extractions can be returned, shifting the focus to how best to present the results. We provide the details of a risk mining multi-document extractive summarization system that produces high-quality output by modeling shifts in specificity that are characteristic of well-formed discourses. In particular, we propose a novel selection algorithm that alternates between extracts based on human-curated or expanded autoencoded key terms, which exhibit greater specificity or generality as it relates to an entity-risk relationship. Through this extract ordering, and without the need for more complex discourse-aware NLP, we induce felicitous shifts in specificity in the alternating summaries that outperform non-alternating summaries on automatic ROUGE and BLEU scores, and manual understandability and preferences evaluations, achieving no statistically significant difference when compared to human-authored summaries.
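The alternating selection idea in this abstract can be illustrated with a minimal sketch. It is not the authors' algorithm: the function name `alternate_extracts`, the two input pools, the `max_sentences` cap, and the choice of which pool goes first are all assumptions made for illustration, and the abstract does not say how the pools are ranked or which one opens the summary.

```python
from itertools import zip_longest


def alternate_extracts(specific, general, max_sentences=6, start_with="general"):
    """Interleave two pools of extracts so specificity shifts sentence by sentence.

    `specific` and `general` are hypothetical, already-ranked lists of extracts.
    The starting pool is a free parameter here, not something taken from the paper.
    """
    if start_with == "general":
        first, second = general, specific
    else:
        first, second = specific, general

    ordered = []
    # zip_longest pads the shorter pool with None so leftovers are still used.
    for a, b in zip_longest(first, second):
        for sentence in (a, b):
            if sentence is not None and len(ordered) < max_sentences:
                ordered.append(sentence)
    return ordered
```

A non-alternating baseline, by contrast, would simply concatenate one pool after the other.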

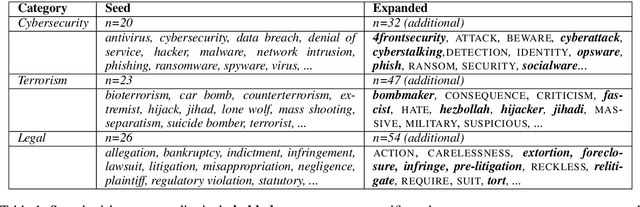

A Consolidated System for Robust Multi-Document Entity Risk Extraction and Taxonomy Augmentation

Sep 23, 2019

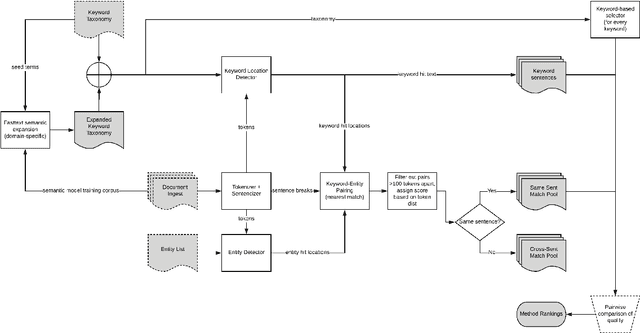

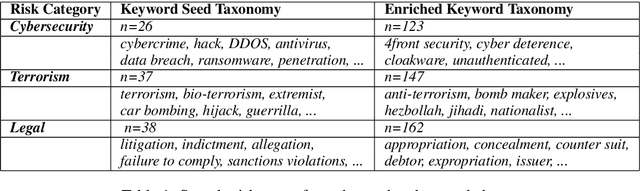

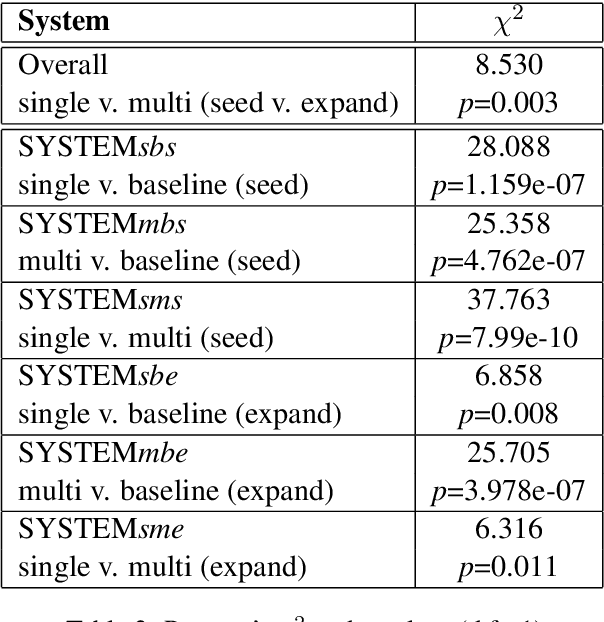

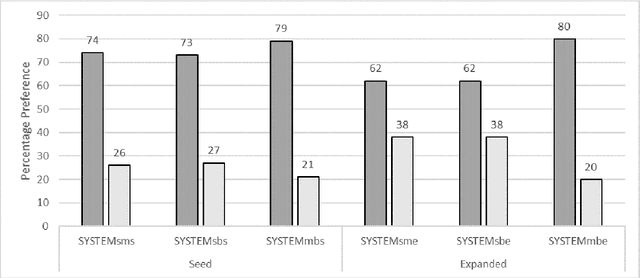

Abstract: We introduce a hybrid human-automated system that provides scalable entity-risk relation extractions across large data sets. Given an expert-defined keyword taxonomy, entities, and data sources, the system returns text extractions based on bidirectional token distances between entities and keywords and expands taxonomy coverage with word vector encodings. Our system represents a simpler architecture than alerting-focused systems, motivated by high-coverage use cases in the risk mining space such as due diligence activities and intelligence gathering. We provide an overview of the system and expert evaluations for a range of token distances. We demonstrate that single and multi-sentence distance groups significantly outperform baseline extractions, with shorter, single sentences being preferred by analysts. As the taxonomy expands, the amount of relevant information increases and multi-sentence extractions become more preferred, but this is tempered by entity-risk relations becoming more indirect. We discuss the implications of these observations on users, management of ambiguity and taxonomy expansion, and future system modifications.
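Extraction by bidirectional token distance, as described in this abstract, might look roughly like the sketch below. It is an illustration under simplifying assumptions rather than the system itself: the function name `extract_by_token_distance`, the regex tokenizer, the single-token entity and keywords, and the `max_distance` default are all hypothetical, and the taxonomy expansion with word vectors is not shown.

```python
import re


def extract_by_token_distance(text, entity, keywords, max_distance=10):
    """Find keywords within `max_distance` tokens of an entity, on either side.

    Returns (keyword, signed_distance, snippet) tuples. A negative distance
    means the keyword precedes the entity. Assumes single-token entities and
    keywords and a simple lowercase word tokenizer.
    """
    tokens = re.findall(r"\w+", text.lower())
    keyword_set = {k.lower() for k in keywords}
    entity_positions = [i for i, tok in enumerate(tokens) if tok == entity.lower()]

    hits = []
    for e in entity_positions:
        # Look left and right of the entity: this is the "bidirectional" part.
        lo = max(0, e - max_distance)
        hi = min(len(tokens), e + max_distance + 1)
        for k in range(lo, hi):
            if tokens[k] in keyword_set:
                start, end = min(e, k), max(e, k)
                snippet = " ".join(tokens[start:end + 1])
                hits.append((tokens[k], k - e, snippet))
    return hits
```

Widening `max_distance` returns more extractions, but the entity and keyword in each one sit further apart, which matches the trade-off the abstract notes between added relevant information and more indirect entity-risk relations.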
