Duncan Calvert

A Behavior Architecture for Fast Humanoid Robot Door Traversals

Nov 05, 2024

Abstract: Towards the role of humanoid robots as squad mates in urban operations and other domains, we identified doors as a major area lacking capability development. In this paper, we focus on the ability of humanoid robots to navigate and deal with doors. Human-sized doors are ubiquitous in many environment domains and the humanoid form factor is uniquely suited to operate and traverse them. We present an architecture which incorporates GPU-accelerated perception and a tree-based interactive behavior coordination system with a whole-body motion and walking controller. Our system is capable of performing door traversals on a variety of door types. It supports rapid authoring of behaviors for unseen door types and techniques to achieve re-usability of those authored behaviors. The behaviors are modelled using trees and feature logical reactivity and action sequences that can be executed with layered concurrency to increase speed. Primitive actions are built on top of our existing whole-body controller, which supports manipulation while walking. We include a perception system using both neural networks and classical computer vision for door mechanism detection outside of the lab environment. We present operator-robot interdependence analysis charts to explore how human cognition is combined with artificial intelligence to produce complex robot behavior. Finally, we present and discuss real robot performances of fast door traversals on our Nadia humanoid robot. Videos online at https://www.youtube.com/playlist?list=PLXuyT8w3JVgMPaB5nWNRNHtqzRK8i68dy.

Mixed Reality Teleoperation Assistance for Direct Control of Humanoids

Nov 01, 2024

Abstract: Teleoperation plays a crucial role in enabling robot operations in challenging environments, yet existing limitations in effectiveness and accuracy necessitate the development of innovative strategies for improving teleoperated tasks. This article introduces a novel approach that utilizes mixed reality and assistive autonomy to enhance the efficiency and precision of humanoid robot teleoperation. By leveraging Probabilistic Movement Primitives, object detection, and Affordance Templates, the assistance combines user motion with autonomous capabilities, achieving task efficiency while maintaining human-like robot motion. Experiments and feasibility studies on the Nadia robot confirm the effectiveness of the proposed framework.

High-Speed and Impact Resilient Teleoperation of Humanoid Robots

Sep 06, 2024

Abstract: Teleoperation of humanoid robots has long been a challenging domain, necessitating advances in both hardware and software to achieve seamless and intuitive control. This paper presents an integrated solution based on several elements: calibration-free motion capture and retargeting, a fast, low-latency whole-body kinematics streaming toolbox, and high-bandwidth cycloidal actuators. Our motion retargeting approach stands out for its simplicity, requiring only 7 IMUs to generate full-body references for the robot. The kinematics streaming toolbox ensures real-time, responsive control of the robot's movements, significantly reducing latency and enhancing operational efficiency. Additionally, the use of cycloidal actuators makes it possible to withstand high speeds and impacts with the environment. Together, these approaches contribute to a teleoperation framework that offers unprecedented performance. Experimental results on the humanoid robot Nadia demonstrate the effectiveness of the integrated system.

Authoring and Operating Humanoid Behaviors On the Fly using Coactive Design Principles

Jul 25, 2023

Abstract: Humanoid robots have the potential to perform useful tasks in a world built for humans. However, communicating intention and teaming with a humanoid robot is a multi-faceted and complex problem. In this paper, we tackle the problems associated with quickly and interactively authoring new robot behavior that works on real hardware. We bring the powerful concepts of Affordance Templates and Coactive Design methodology to this problem to attempt to solve and explain it. In our approach we use interactive stance and hand pose goals along with other types of actions to author humanoid robot behavior on the fly. We then describe how our operator interface works to author behaviors on the fly and provide interdependence analysis charts for task approach and door opening. We present timings from real robot performances for traversing a push door and doing a pick and place task on our Nadia humanoid robot.

A Virtual-Reality Driven Approach for Generating Humanoid Multi-Contact Trajectories

Mar 14, 2023

Abstract: We present a virtual reality (VR) framework designed to intuitively generate humanoid multi-contact maneuvers for use in unstructured environments. Our framework allows the operator to directly manipulate the inverse kinematics objectives which parameterize a trajectory. Kinematic objectives consisting of spatial poses, center-of-mass position and joint positions are used in an optimization-based inverse kinematics solver to compute whole-body configurations while enforcing static contact stability. Virtual "anchors" allow the operator to freely drag and constrain the robot as well as modify objective weights and constraint sets. The interface's design novelty is a generalized use of anchors which enables arbitrary posture and contact modes. The operator is aided by visual cues of actuation feasibility and tools for rapid anchor placement. We demonstrate our approach in simulation and hardware on a NASA Valkyrie humanoid, focusing on multi-contact trajectories which are challenging to generate autonomously or through alternative teleoperation approaches.

A Fast, Autonomous, Bipedal Walking Behavior over Rapid Regions

Jul 17, 2022

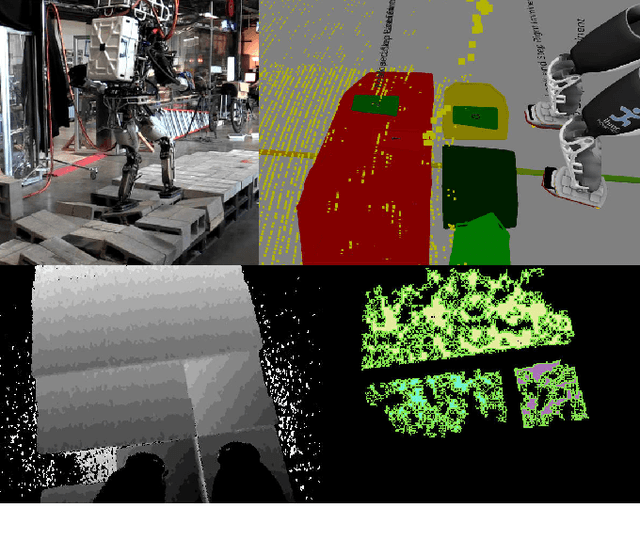

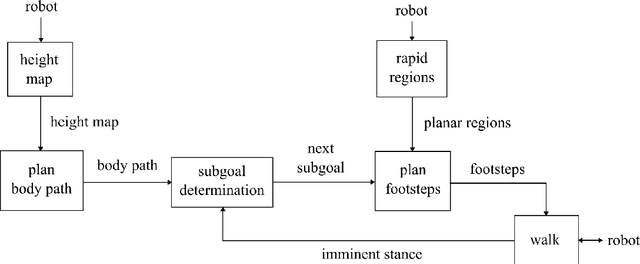

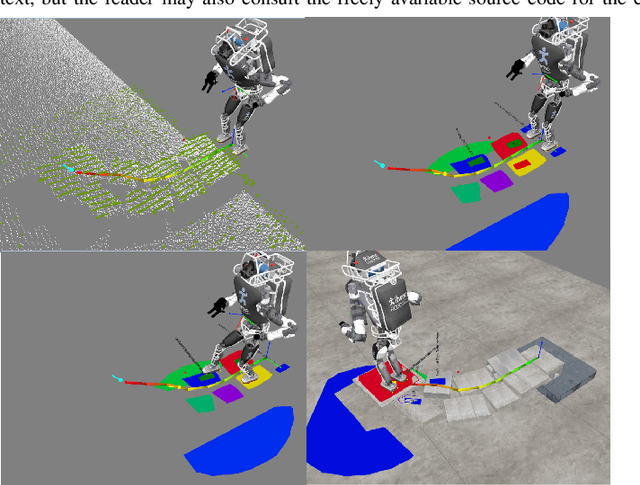

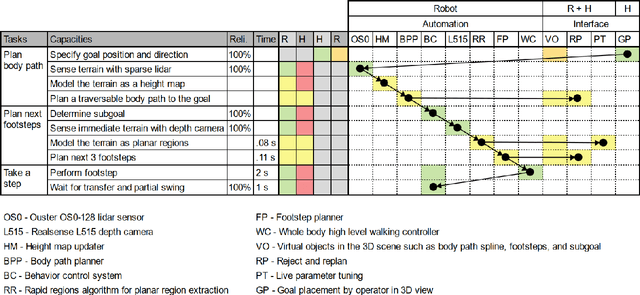

Abstract: In trying to build humanoid robots that perform useful tasks in a world built for humans, we address the problem of autonomous locomotion. Humanoid robot planning and control algorithms for walking over rough terrain are becoming increasingly capable. At the same time, commercially available depth cameras have been getting more accurate and GPU computing has become a primary tool in AI research. In this paper, we present a newly constructed behavior control system for achieving fast, autonomous, bipedal walking, without pauses or deliberation. We achieve this using a recently published rapid planar regions perception algorithm, a height-map-based body path planner, an A* footstep planner, and a momentum-based walking controller. We put these elements together to form a behavior control system supported by modern software development practices and simulation tools.