Derek Rayside

RADACS: Towards Higher-Order Reasoning using Action Recognition in Autonomous Vehicles

Sep 28, 2022

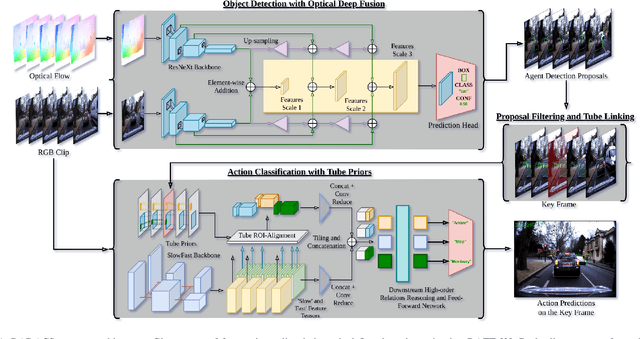

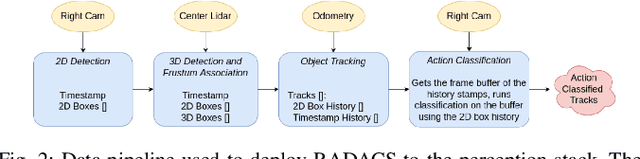

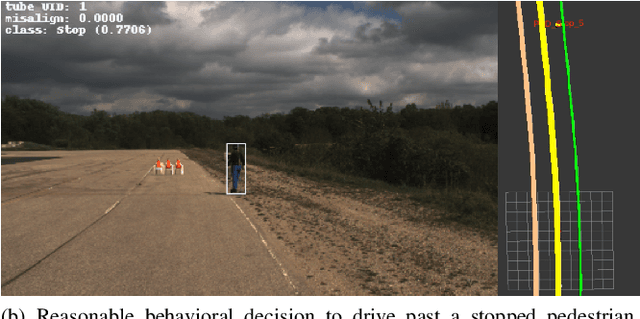

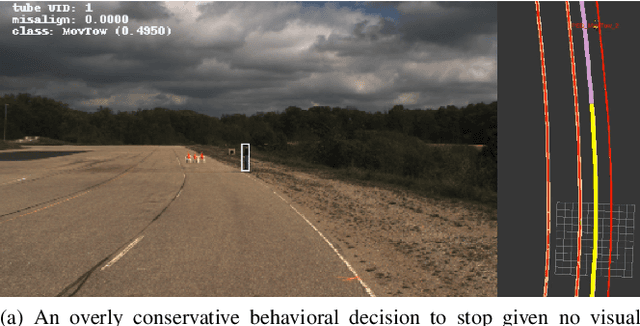

Abstract:When applied to autonomous vehicle settings, action recognition can help enrich an environment model's understanding of the world and improve plans for future action. Towards these improvements in autonomous vehicle decision-making, we propose in this work a novel two-stage online action recognition system, termed RADACS. RADACS formulates the problem of active agent detection and adapts ideas about actor-context relations from human activity recognition in a straightforward two-stage pipeline for action detection and classification. We show that our proposed scheme can outperform the baseline on the ICCV2021 Road Challenge dataset and by deploying it on a real vehicle platform, we demonstrate how a higher-order understanding of agent actions in an environment can improve decisions on a real autonomous vehicle.

Real-Time Unified Trajectory Planning and Optimal Control for Urban Autonomous Driving Under Static and Dynamic Obstacle Constraints

Sep 19, 2022

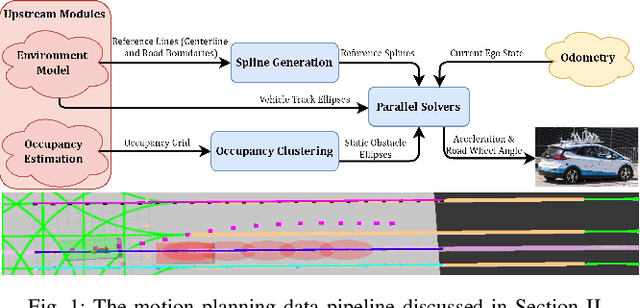

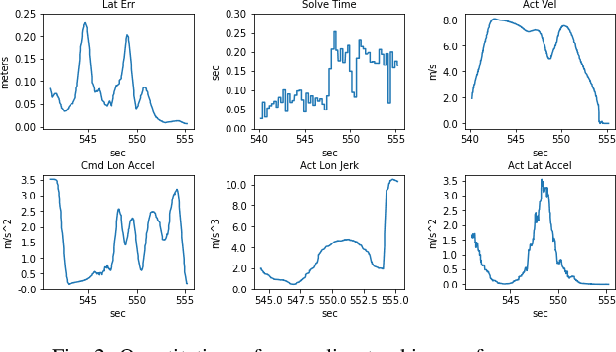

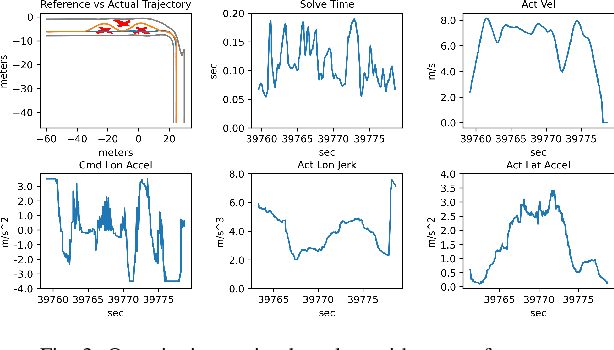

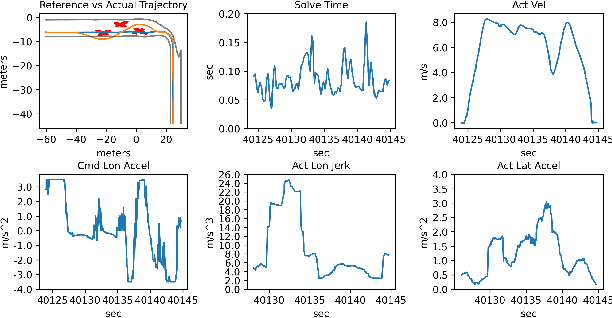

Abstract:Trajectory planning and control have historically been separated into two modules in automated driving stacks. Trajectory planning focuses on higher-level tasks like avoiding obstacles and staying on the road surface, whereas the controller tries its best to follow an ever changing reference trajectory. We argue that this separation is (1) flawed due to the mismatch between planned trajectories and what the controller can feasibly execute, and (2) unnecessary due to the flexibility of the model predictive control (MPC) paradigm. Instead, in this paper, we present a unified MPC-based trajectory planning and control scheme that guarantees feasibility with respect to road boundaries, the static and dynamic environment, and enforces passenger comfort constraints. The scheme is evaluated rigorously in a variety of scenarios focused on proving the effectiveness of the optimal control problem (OCP) design and real-time solution methods. The prototype code will be released at https://github.com/WATonomous/control.

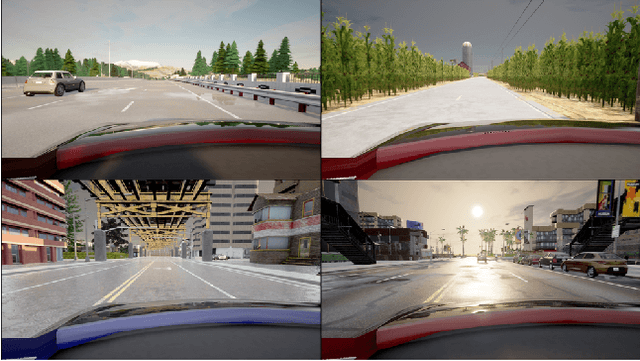

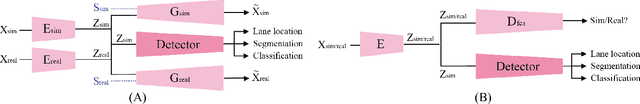

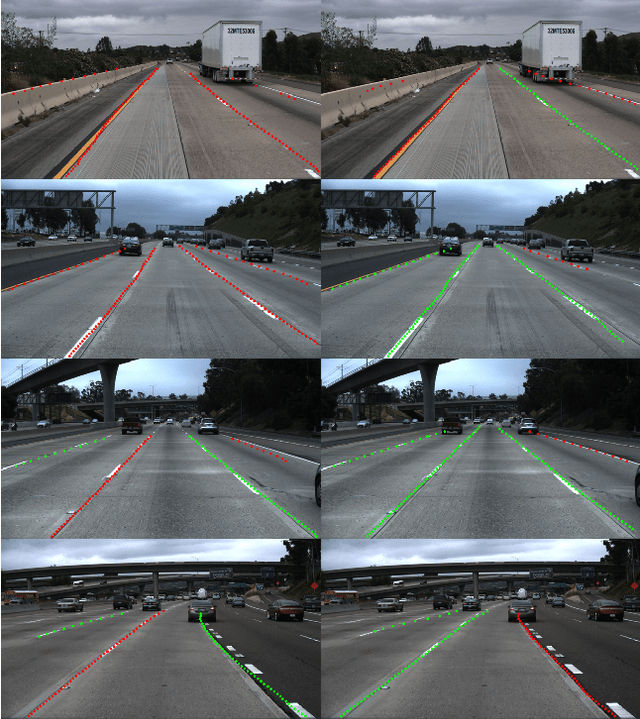

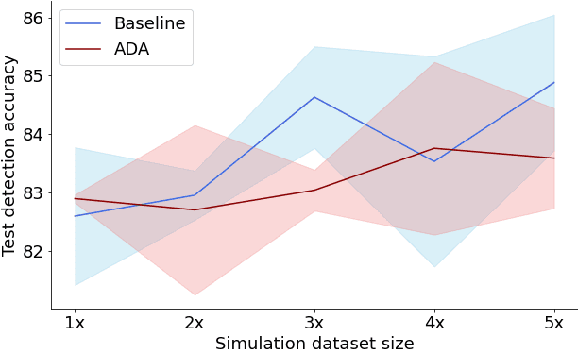

Sim-to-Real Domain Adaptation for Lane Detection and Classification in Autonomous Driving

Feb 15, 2022

Abstract:While supervised detection and classification frameworks in autonomous driving require large labelled datasets to converge, Unsupervised Domain Adaptation (UDA) approaches, facilitated by synthetic data generated from photo-real simulated environments, are considered low-cost and less time-consuming solutions. In this paper, we propose UDA schemes using adversarial discriminative and generative methods for lane detection and classification applications in autonomous driving. We also present Simulanes dataset generator to create a synthetic dataset that is naturalistic utilizing CARLA's vast traffic scenarios and weather conditions. The proposed UDA frameworks take the synthesized dataset with labels as the source domain, whereas the target domain is the unlabelled real-world data. Using adversarial generative and feature discriminators, the learnt models are tuned to predict the lane location and class in the target domain. The proposed techniques are evaluated using both real-world and our synthetic datasets. The results manifest that the proposed methods have shown superiority over other baseline schemes in terms of detection and classification accuracy and consistency. The ablation study reveals that the size of the simulation dataset plays important roles in the classification performance of the proposed methods. Our UDA frameworks are available at https://github.com/anita-hu/sim2real-lane-detection and our dataset generator is released at https://github.com/anita-hu/simulanes

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge