Da Tang

Tony

Item Recommendation with Variational Autoencoders and Heterogenous Priors

Oct 07, 2018

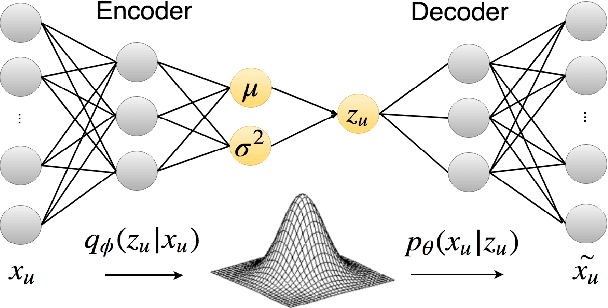

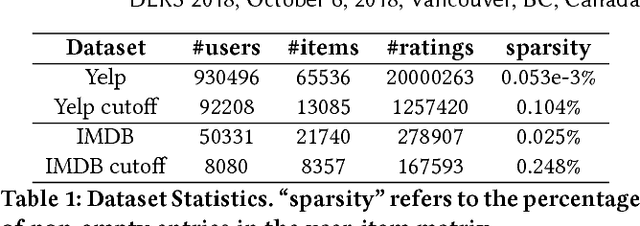

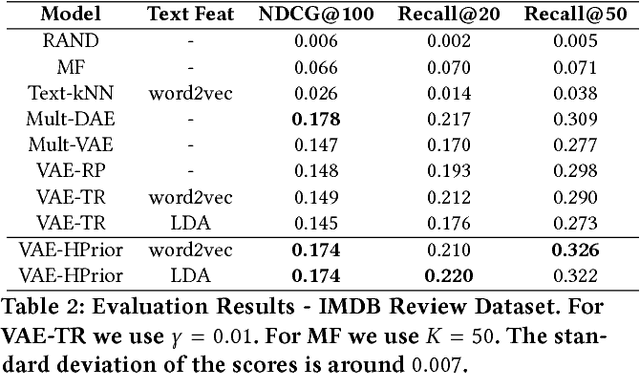

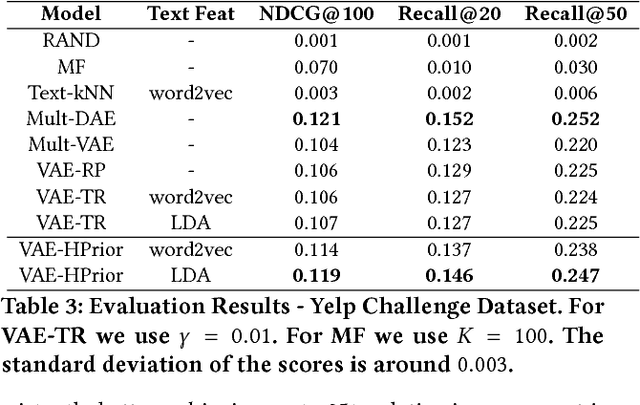

Abstract: In recent years, Variational Autoencoders (VAEs) have been shown to be highly effective in both standard collaborative filtering applications and extensions such as the incorporation of implicit feedback. We extend VAEs to collaborative filtering with side information, for instance when ratings are combined with explicit text feedback from the user. Instead of using a user-agnostic standard Gaussian prior, we incorporate user-dependent priors in the latent VAE space to encode users' preferences as functions of the review text. Representing users in this multimodal latent space with both the rating and the text information promises to improve recommendation quality. Our proposed model is shown to outperform the existing VAE models for collaborative filtering (up to 29.41% relative improvement in ranking metric) along with other baselines that incorporate both user ratings and text for item recommendation.
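To make the difference between a user-agnostic and a user-dependent prior concrete, here is a minimal sketch of the KL term a VAE objective would penalize in each case. It assumes diagonal Gaussian posteriors and priors, and it assumes the user-dependent prior mean comes from some encoder of the review text; the abstract does not specify either detail, and the function name and numbers are hypothetical.

```python
import math


def kl_diag_gaussians(mu_q, var_q, mu_p, var_p):
    """KL(q || p) for two diagonal Gaussians given as lists of means and variances."""
    total = 0.0
    for mq, vq, mp, vp in zip(mu_q, var_q, mu_p, var_p):
        total += math.log(vp / vq) + (vq + (mq - mp) ** 2) / vp - 1.0
    return 0.5 * total


# Hypothetical 2-dimensional posterior for one user (inferred from ratings).
mu_q, var_q = [0.5, -1.0], [1.0, 1.0]

# User-agnostic prior: the standard Gaussian N(0, I).
kl_standard = kl_diag_gaussians(mu_q, var_q, [0.0, 0.0], [1.0, 1.0])

# User-dependent prior: the mean is ASSUMED to be produced from the user's
# review text; here it happens to agree with the posterior mean.
kl_user = kl_diag_gaussians(mu_q, var_q, [0.5, -1.0], [1.0, 1.0])

print(kl_standard, kl_user)
```

In this toy case the standard prior pulls the posterior toward the origin, while a text-informed prior that already agrees with the ratings-based posterior adds no penalty.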

Subgoal Discovery for Hierarchical Dialogue Policy Learning

Sep 22, 2018

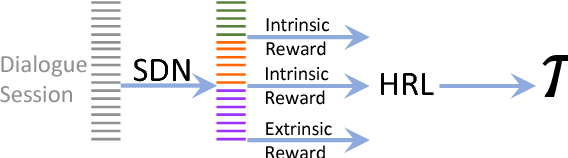

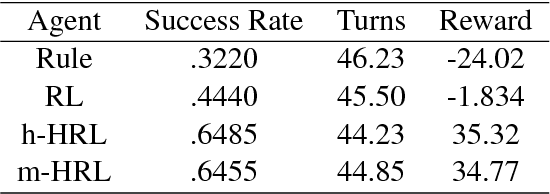

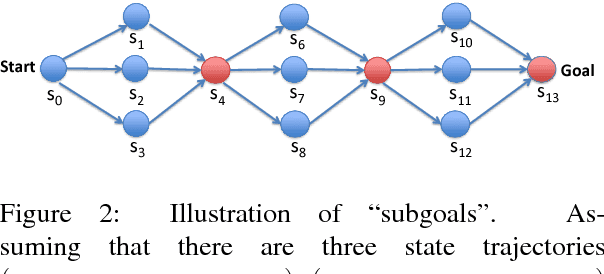

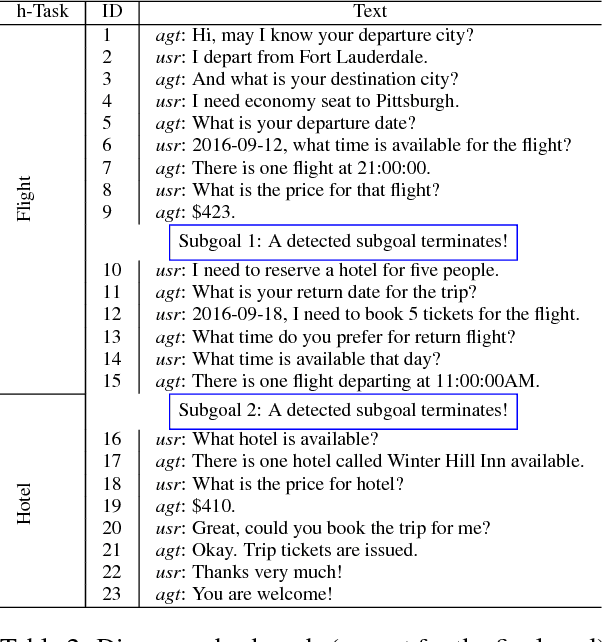

Abstract: Developing agents to engage in complex goal-oriented dialogues is challenging, partly because the main learning signals are very sparse in long conversations. In this paper, we propose a divide-and-conquer approach that discovers and exploits the hidden structure of the task to enable efficient policy learning. First, given successful example dialogues, we propose the Subgoal Discovery Network (SDN) to divide a complex goal-oriented task into a set of simpler subgoals in an unsupervised fashion. We then use these subgoals to learn a multi-level policy by hierarchical reinforcement learning. We demonstrate our method by building a dialogue agent for the composite task of travel planning. Experiments with simulated and real users show that our approach performs competitively against a state-of-the-art method that requires human-defined subgoals. Moreover, we show that the learned subgoals are often human-comprehensible.

Initialization and Coordinate Optimization for Multi-way Matching

Mar 08, 2017

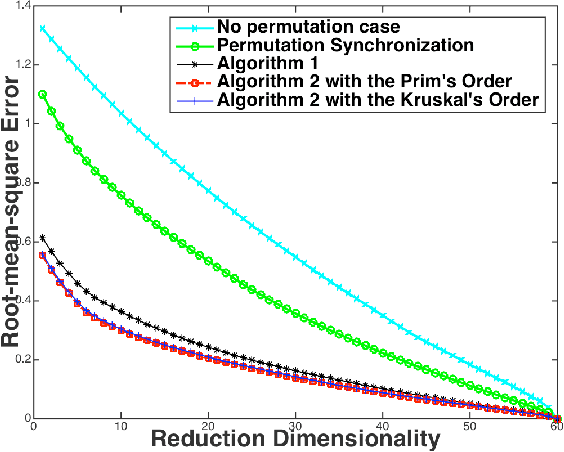

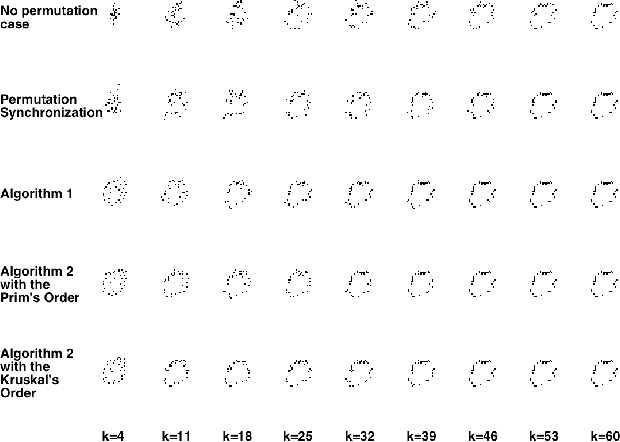

Abstract: We consider the problem of consistently matching multiple sets of elements to each other, which is a common task in fields such as computer vision. To solve the underlying NP-hard objective, existing methods often relax or approximate it, but end up with unsatisfactory empirical performance due to a misaligned objective. We propose a coordinate update algorithm that directly optimizes the target objective. By using pairwise alignment information to build an undirected graph and initializing the permutation matrices along the edges of its Maximum Spanning Tree, our algorithm successfully avoids bad local optima. Theoretically, with high probability our algorithm guarantees an optimal solution under reasonable noise assumptions. Empirically, our algorithm consistently and significantly outperforms existing methods on several benchmark tasks on real datasets.
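The spanning-tree initialization described in the abstract can be sketched roughly as follows. This is an illustration under stated assumptions, not the paper's implementation: permutations are stored as index lists rather than matrices, each pair of sets comes with a hypothetical confidence score, the tree is grown with Prim's algorithm, and the coordinate update step that follows initialization is omitted.

```python
def invert(perm):
    """Return the inverse of a permutation given as an index list."""
    inv = [0] * len(perm)
    for src, dst in enumerate(perm):
        inv[dst] = src
    return inv


def compose(first, second):
    """Apply `first`, then `second`."""
    return [second[x] for x in first]


def mst_initialize(n_sets, pairwise, root=0):
    """Initialize root-to-set matchings along a maximum spanning tree.

    `pairwise` maps (i, j) to (score, perm), where perm[k] is the element of
    set j matched to element k of set i. Scores are assumed alignment
    confidences (higher is better).
    """
    size = len(next(iter(pairwise.values()))[1])
    to_set = {root: list(range(size))}
    while len(to_set) < n_sets:
        # Prim's step: pick the highest-scoring edge leaving the current tree.
        best = None
        for (i, j), (score, perm) in pairwise.items():
            if (i in to_set) != (j in to_set):
                if best is None or score > best[0]:
                    best = (score, i, j, perm)
        if best is None:
            raise ValueError("alignment graph is not connected")
        _, i, j, perm = best
        if i in to_set:
            to_set[j] = compose(to_set[i], perm)
        else:
            to_set[i] = compose(to_set[j], invert(perm))
    return to_set


# Hypothetical example: three sets of three elements. The low-scoring (0, 2)
# matching is inconsistent with the other two and is never used.
pairwise = {
    (0, 1): (0.9, [1, 2, 0]),
    (1, 2): (0.8, [2, 0, 1]),
    (0, 2): (0.1, [0, 2, 1]),
}
init = mst_initialize(3, pairwise)
print(init)
```

Because every matching is derived by composing permutations along tree edges, the initial solution is cycle-consistent by construction, and the least reliable pairwise alignments are left out of it.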
