Conghui Li

UnGAP: Uncertainty-Guided Affine Prompting for Real-Time Crack Segmentation

May 04, 2026

Abstract: Real-time crack segmentation is vital for structural health monitoring but is plagued by aleatoric uncertainties arising from varying lighting, blur, and texture ambiguity. Current uncertainty-aware approaches typically treat uncertainty estimation as a passive endpoint for post-hoc analysis, failing to close the loop by feeding this information back to refine feature representations. We contend that independent pixel-wise heteroscedastic modeling is uniquely suited for crack segmentation, as cracks are defined by fine-grained local gradients rather than the global semantic coherence relied upon in general object segmentation. However, this approach suffers from a structural optimization pathology: high predicted variance attenuates loss gradients, effectively causing the model to ignore difficult samples and under-fit complex boundaries. To address these challenges, we propose UnGAP, a novel framework that establishes a closed-loop mechanism between uncertainty estimation and feature learning. Central to our approach is the Uncertainty-Prompted Feature Modulator (UPFM), which treats aleatoric uncertainty as an active visual prompt rather than a mere output. UPFM dynamically calibrates feature distributions through pixel-wise affine transformations. Crucially, this mechanism mitigates the heteroscedastic pathology by transforming high variance, which would otherwise indicate gradient suppression, into a constructive signal for stronger feature rectification in ambiguous regions. Additionally, a boundary-aware detection head is introduced to further constrain prediction precision. Extensive experiments demonstrate that UnGAP balances superior segmentation accuracy with real-time inference speed, effectively validating the benefit of transforming uncertainty from a passive metric into an active calibration tool.
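The gradient attenuation mentioned in the abstract can be illustrated with a minimal sketch. It assumes the common Gaussian heteroscedastic loss with a predicted log-variance, which the abstract does not spell out, and a hypothetical uncertainty-driven affine modulation. The names `hetero_nll_grad_mu`, `affine_modulate`, `w_gamma`, and `w_beta` are illustrative only and are not taken from the UPFM design.

```python
import numpy as np

def hetero_nll_grad_mu(y, mu, log_var):
    """Gradient with respect to mu of the assumed per-pixel loss
    0.5 * exp(-log_var) * (y - mu)**2 + 0.5 * log_var."""
    return -np.exp(-log_var) * (y - mu)

def affine_modulate(features, uncertainty, w_gamma, w_beta):
    """Hypothetical pixel-wise affine transform driven by an uncertainty map.

    features:    array of shape (C, H, W)
    uncertainty: array of shape (H, W)
    w_gamma, w_beta: per-channel weights of shape (C,), assumed for illustration
    """
    gamma = 1.0 + w_gamma[:, None, None] * uncertainty[None, :, :]
    beta = w_beta[:, None, None] * uncertainty[None, :, :]
    return gamma * features + beta

# Same residual (y - mu = 1), increasing predicted log-variance:
# the gradient magnitude shrinks by a factor exp(-log_var).
grad_magnitudes = [abs(hetero_nll_grad_mu(1.0, 0.0, s)) for s in (0.0, 2.0, 4.0)]

# Zero uncertainty leaves features untouched; higher uncertainty
# produces a stronger scale and shift at that pixel.
feats = np.ones((2, 3, 3))
unchanged = affine_modulate(feats, np.zeros((3, 3)), np.array([0.5, 0.5]), np.array([0.1, 0.1]))
shifted = affine_modulate(feats, np.ones((3, 3)), np.array([0.5, 0.5]), np.array([0.1, 0.1]))
```

Under these assumptions the loss gradient at a pixel is scaled by the inverse of its predicted variance, while the modulation grows with the uncertainty, which is the contrast the abstract draws between gradient suppression and feature rectification.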

Saliency Supervision: An Intuitive and Effective Approach for Pain Intensity Regression

Nov 16, 2018

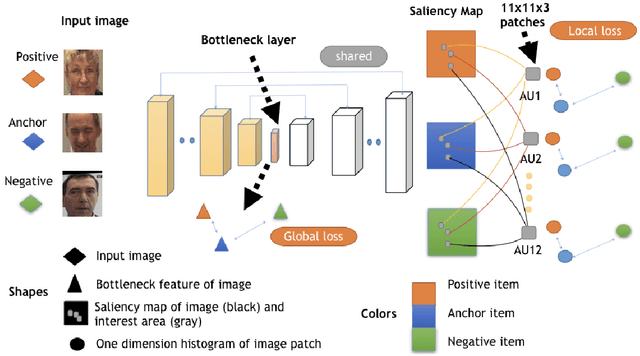

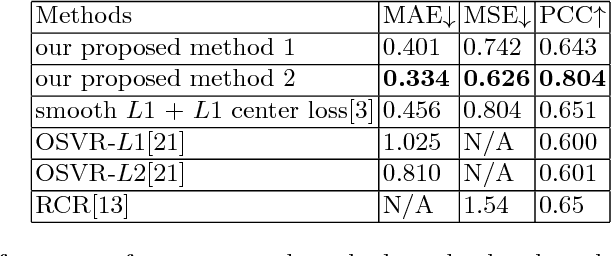

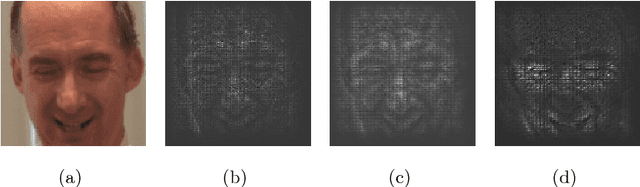

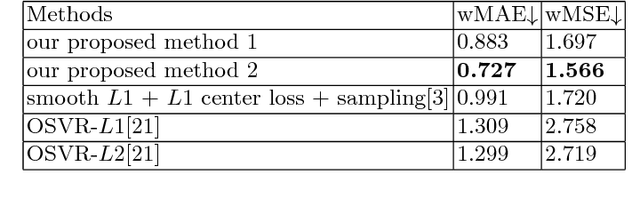

Abstract: Estimating pain intensity from face images is an important problem in autonomous nursing systems. However, because data sources are limited and pain intensity values are subjective, it is hard to apply modern deep neural networks to this problem without domain-specific auxiliary design. Inspired by priors in human vision, we propose a novel approach called saliency supervision, in which we directly regularize deep networks to focus on the facial areas that are discriminative for pain regression. By alternating training between saliency supervision and a global loss, our method learns sparse and robust features, which prove helpful for pain intensity regression. We verified saliency supervision with a face-verification network backbone on the widely-used dataset, and achieved state-of-the-art performance without bells and whistles. Saliency supervision is intuitive in spirit yet effective in performance. We believe it is essential in dealing with ill-posed datasets and has potential in a wide range of vision tasks.
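The alternating scheme described in the abstract can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' formulation: the saliency term is assumed to be the fraction of attention mass falling outside a binary facial-region mask, and `saliency_loss`, `active_loss`, and the `period` parameter are hypothetical names.

```python
import numpy as np

def saliency_loss(attention, mask):
    """Assumed saliency penalty: share of attention mass outside the mask.

    attention: array of shape (H, W), any real values (magnitudes are used)
    mask:      array of shape (H, W), 1 inside the salient facial region, 0 outside
    Returns 0.0 when all attention lies inside the mask.
    """
    attention = np.abs(attention)
    total = attention.sum()
    if total == 0:
        return 0.0
    return float((attention * (1.0 - mask)).sum() / total)

def active_loss(step, period=2):
    """Hypothetical schedule: alternate blocks of `period` steps between
    the saliency term and the global regression loss."""
    return "saliency" if (step // period) % 2 == 0 else "global"

# Attention split evenly between a masked and an unmasked pixel.
attention = np.array([[1.0, 1.0], [0.0, 0.0]])
mask = np.array([[1.0, 0.0], [0.0, 0.0]])
penalty = saliency_loss(attention, mask)

# Which loss is optimized at each of the first six steps.
schedule = [active_loss(step, period=2) for step in range(6)]
```

In a real training loop, the label returned by `active_loss` would select which objective is backpropagated at that step; the block length and the exact form of the saliency term are design choices the abstract does not specify.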
