Chien-Liang Liu

USE: Uncertainty Structure Estimation for Robust Semi-Supervised Learning

Feb 28, 2026

Abstract: This study introduces Uncertainty Structure Estimation (USE), a lightweight, algorithm-agnostic procedure for semi-supervised learning (SSL) that emphasizes the often-overlooked role of unlabeled data quality. SSL has achieved impressive progress, but its reliability in deployment is limited by the quality of the unlabeled pool. In practice, unlabeled data are almost always contaminated by out-of-distribution (OOD) samples, and both near-OOD and far-OOD samples can degrade performance in different ways. We argue that the bottleneck lies not in algorithmic design but in the absence of principled mechanisms to assess and curate the quality of unlabeled data. USE trains a proxy model on the labeled set to compute entropy scores for unlabeled samples, and then derives a threshold, via statistical comparison against a reference distribution, that separates informative (structured) from uninformative (structureless) samples. This enables quality assessment as a preprocessing step that removes uninformative or harmful unlabeled data before SSL training begins. Extensive experiments on image (CIFAR-100) and NLP (Yelp Review) data show that USE consistently improves accuracy and robustness under varying levels of OOD contamination. The proposed approach thus reframes unlabeled data quality control as a structural assessment problem and treats it as a necessary component of reliable and efficient SSL in realistic mixed-distribution environments.
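The entropy-based filtering step described in the USE abstract can be illustrated with a minimal sketch. The abstract does not specify the reference distribution or the statistical comparison, so everything below is an assumption made for illustration: the reference is taken to be proxy-model predictions on inputs known to be structureless, the threshold is a low empirical quantile of their entropies, and the names `filter_unlabeled`, `reference_probs`, and `q` are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def quantile(values, q):
    """Empirical quantile by linear interpolation between sorted values."""
    ordered = sorted(values)
    pos = q * (len(ordered) - 1)
    lo = math.floor(pos)
    hi = math.ceil(pos)
    frac = pos - lo
    return ordered[lo] * (1.0 - frac) + ordered[hi] * frac

def filter_unlabeled(unlabeled_probs, reference_probs, q=0.05):
    """Return indices of unlabeled samples to keep, plus the threshold used.

    Assumption (not from the abstract): the threshold is the q-quantile of
    the reference entropies, and a sample is kept when its entropy is
    strictly below that threshold.
    """
    reference_entropies = [entropy(p) for p in reference_probs]
    threshold = quantile(reference_entropies, q)
    kept = [i for i, p in enumerate(unlabeled_probs) if entropy(p) < threshold]
    return kept, threshold

# Proxy-model softmax outputs for three unlabeled samples (made-up values).
unlabeled = [[0.98, 0.01, 0.01], [0.34, 0.33, 0.33], [0.90, 0.05, 0.05]]
# Proxy-model outputs on assumed structureless reference inputs (made-up values).
reference = [[0.40, 0.30, 0.30], [0.34, 0.33, 0.33], [0.50, 0.25, 0.25]]

kept, threshold = filter_unlabeled(unlabeled, reference)
print(kept, round(threshold, 3))
```

In this toy input the two confident predictions are kept and the near-uniform one is dropped. The actual procedure may derive its threshold differently, for example with a formal two-sample test.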

Semantic Cross Attention for Few-shot Learning

Oct 12, 2022

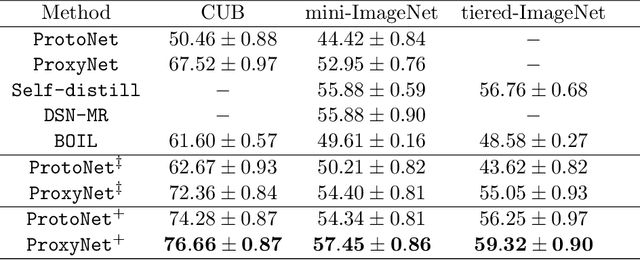

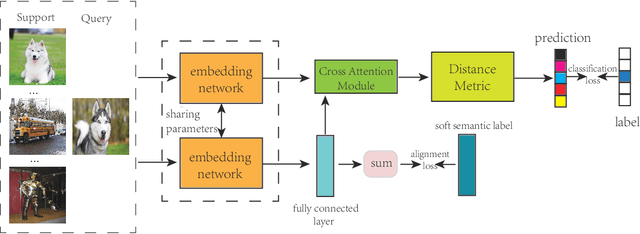

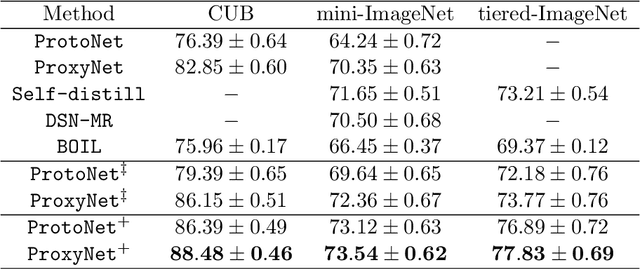

Abstract: Few-shot learning (FSL) has attracted considerable attention recently. Among existing approaches, metric-based methods train an embedding network that places similar samples close together and dissimilar samples as far apart as possible, and they achieve promising results. In image classification, FSL uses only a few images to train a model that can generalize to novel classes, but this setting makes it difficult to learn visual features that capture variations in the images' appearance. Training is likely to move in the wrong direction, because images in the same semantic class may have dissimilar appearances, whereas images in different semantic classes may share a similar appearance. We argue that FSL can benefit from additional semantic features when learning discriminative feature representations. This study therefore proposes a multi-task learning approach that treats the semantic features of label text as an auxiliary task to boost the performance of the FSL task. The proposed model uses word-embedding representations as semantic features to help train the embedding network, and a semantic cross-attention module to bridge the semantic features into the visual modality. The approach is simple but produces excellent results. We apply it to two previous metric-based FSL methods, and it substantially improves the performance of both. The source code for our model is available on GitHub.

Proxy Network for Few Shot Learning

Sep 09, 2020

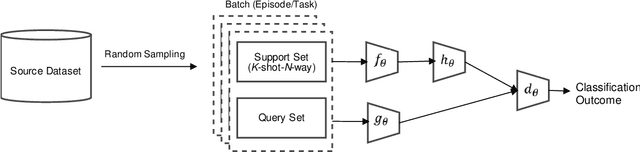

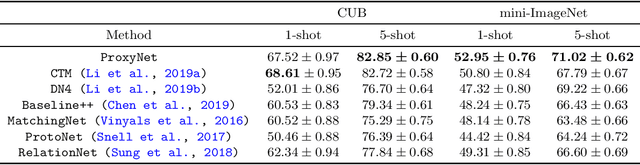

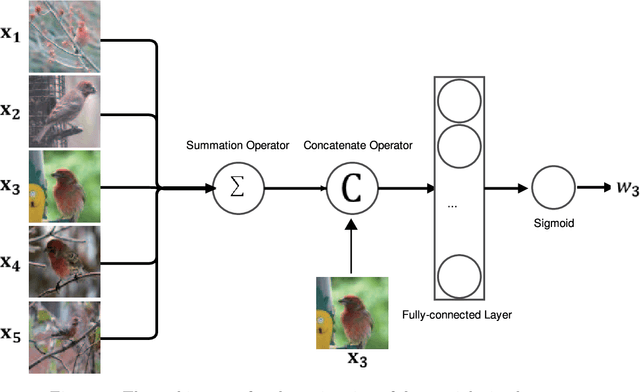

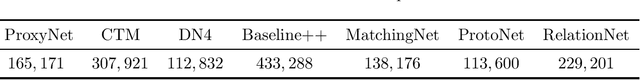

Abstract: Training a predictive model from a few examples per class so that it generalizes to novel classes is a crucial and valuable research direction in artificial intelligence. This work addresses the problem by proposing a few-shot learning (FSL) algorithm called the proxy network, built on a meta-learning architecture. Metric-learning-based approaches assume that data points within the same class should be close, whereas data points in different classes should be separated as far as possible in the embedding space. We conclude that the success of metric-learning-based approaches lies in three components: the data embedding, the representative of each class, and the distance metric. In this work, we propose a simple but effective end-to-end model that learns proxies for both the class representative and the distance metric directly and simultaneously from data. We conduct experiments on the CUB and mini-ImageNet datasets in 5-way 1-shot and 5-way 5-shot scenarios, and the experimental results demonstrate the superiority of the proposed method over state-of-the-art methods. We also provide a detailed analysis of the proposed method.
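For context, the three components named in the abstract (embedding, class representative, distance metric) can be seen in a minimal metric-based classifier. This sketch is not the proxy network: it uses the common fixed choices of a mean prototype per class and squared Euclidean distance, which are the two parts the abstract says the proxy network learns from data instead. The embeddings are assumed to be precomputed, and all names and values are hypothetical.

```python
def mean_prototype(embeddings):
    """Fixed class representative: the element-wise mean of support embeddings."""
    dim = len(embeddings[0])
    return [sum(e[d] for e in embeddings) / len(embeddings) for d in range(dim)]

def squared_euclidean(a, b):
    """Fixed distance metric between two embedding vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def classify(query, support):
    """Assign the query to the class whose prototype is nearest.

    `support` maps each class label to a list of support-set embeddings.
    """
    prototypes = {label: mean_prototype(embs) for label, embs in support.items()}
    return min(prototypes, key=lambda label: squared_euclidean(query, prototypes[label]))

# A 2-way 2-shot toy episode with 2-dimensional embeddings (made-up values).
support = {
    "a": [[0.0, 0.0], [0.0, 2.0]],
    "b": [[4.0, 4.0], [6.0, 4.0]],
}
print(classify([1.0, 1.0], support))
print(classify([5.0, 5.0], support))
```

Replacing `mean_prototype` and `squared_euclidean` with trainable modules optimized jointly with the embedding network is the direction the abstract describes, though its exact architecture is not given here.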
