Burak Hasircioglu

Poisoning Attacks on LLMs Require a Near-constant Number of Poison Samples

Oct 08, 2025

Abstract: Poisoning attacks can compromise the safety of large language models (LLMs) by injecting malicious documents into their training data. Existing work has studied pretraining poisoning assuming adversaries control a percentage of the training corpus. However, for large models, even small percentages translate to impractically large amounts of data. This work demonstrates for the first time that poisoning attacks instead require a near-constant number of documents regardless of dataset size. We conduct the largest pretraining poisoning experiments to date, pretraining models from 600M to 13B parameters on Chinchilla-optimal datasets (6B to 260B tokens). We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, despite the largest models training on more than 20 times as much clean data. We also run smaller-scale experiments to ablate factors that could influence attack success, including broader ratios of poisoned to clean data and non-random distributions of poisoned samples. Finally, we demonstrate the same dynamics for poisoning during fine-tuning. Altogether, our results suggest that injecting backdoors through data poisoning may be easier for large models than previously believed, as the number of poisons required does not scale up with model size, highlighting the need for more research on defences to mitigate this risk in future models.
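
A quick calculation makes the scaling argument concrete. The Python sketch below uses the two dataset sizes quoted above (6B and 260B tokens) and contrasts a fixed-percentage budget (0.1%, chosen only for illustration) with a fixed count of 250 documents. The document length `TOKENS_PER_DOC` is a hypothetical placeholder, not a figure from the paper, and the helper names are illustrative.

```python
# Token budgets are the two dataset sizes quoted in the abstract.
SMALL_TOKENS = 6e9      # smallest Chinchilla-optimal dataset
LARGE_TOKENS = 260e9    # largest Chinchilla-optimal dataset
N_POISON_DOCS = 250     # fixed poison count reported in the abstract
TOKENS_PER_DOC = 1_000  # HYPOTHETICAL average poisoned-document length

def tokens_for_fraction(dataset_tokens: float, fraction: float) -> float:
    """Tokens an attacker must control to poison a fixed fraction of the corpus."""
    return dataset_tokens * fraction

def poison_share(n_docs: int, tokens_per_doc: int, dataset_tokens: float) -> float:
    """Fraction of the corpus occupied by a fixed number of poisoned documents."""
    return n_docs * tokens_per_doc / dataset_tokens

for total in (SMALL_TOKENS, LARGE_TOKENS):
    budget = tokens_for_fraction(total, 0.001)  # an illustrative 0.1% budget
    share = poison_share(N_POISON_DOCS, TOKENS_PER_DOC, total)
    print(f"{total:.1e} tokens: 0.1% = {budget:.2e} tokens; 250 docs = {share:.2e} of corpus")
```

Whatever document length is assumed, the corpus share of 250 documents shrinks by the same factor (260/6, roughly 43x) between the smallest and largest datasets, while a fixed 0.1% budget grows by that factor.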

One Pic is All it Takes: Poisoning Visual Document Retrieval Augmented Generation with a Single Image

Apr 02, 2025

Abstract: Multimodal retrieval augmented generation (M-RAG) has recently emerged as a method to mitigate hallucinations of large multimodal models (LMMs) through a factual knowledge base (KB). However, M-RAG also introduces new attack vectors for adversaries that aim to disrupt the system by injecting malicious entries into the KB. In this work, we present a poisoning attack against M-RAG targeting visual document retrieval applications, where the KB contains images of document pages. Our objective is to craft a single image that is retrieved for a variety of different user queries and consistently influences the output produced by the generative model, thus creating a universal denial-of-service (DoS) attack against the M-RAG system. We demonstrate that our attack is effective against a diverse range of widely used, state-of-the-art retrievers (embedding models) and generators (LMMs), but that it can be ineffective against robust embedding models. Our attack not only highlights the vulnerability of M-RAG pipelines to poisoning attacks, but also sheds light on a fundamental weakness that potentially hinders their performance even in benign settings.
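
The universality goal can be illustrated with a toy in embedding space. Assuming cosine-similarity retrieval, the unit vector that maximizes the summed similarity to a set of query embeddings is the normalized sum of the normalized queries, because the sum of dot products equals one dot product with the summed vector. The sketch below uses hypothetical helpers (`universal_embedding`, `top1`) and made-up 3-dimensional vectors. It is not the paper's attack, which has to realize such an effect as an actual image passed through the retriever's image encoder and must also steer the generator.

```python
import math

def normalize(v):
    """Scale v to unit L2 norm."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))

def universal_embedding(query_embs):
    """Unit vector maximizing the summed cosine similarity to all queries.

    For unit q_i, sum_i <x, q_i> = <x, sum_i q_i>, which over unit x is
    maximized by x = normalize(sum_i q_i).
    """
    unit = [normalize(q) for q in query_embs]
    summed = [sum(col) for col in zip(*unit)]
    return normalize(summed)

def top1(query, kb):
    """Index of the KB entry most similar to the query."""
    return max(range(len(kb)), key=lambda i: cosine(query, kb[i]))

queries = [[1.0, 0.2, 0.0], [0.9, 0.0, 0.3], [1.0, 0.1, 0.1]]  # toy query embeddings
benign_kb = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [-1.0, 0.0, 0.0]]  # toy page embeddings
kb = benign_kb + [universal_embedding(queries)]  # poisoned entry is index 3
print([top1(q, kb) for q in queries])
```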

Private Collaborative Edge Inference via Over-the-Air Computation

Jul 30, 2024

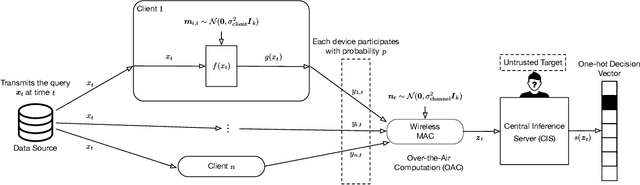

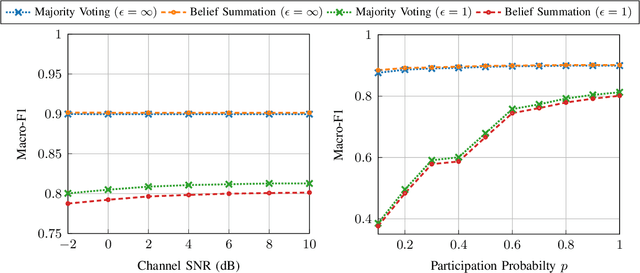

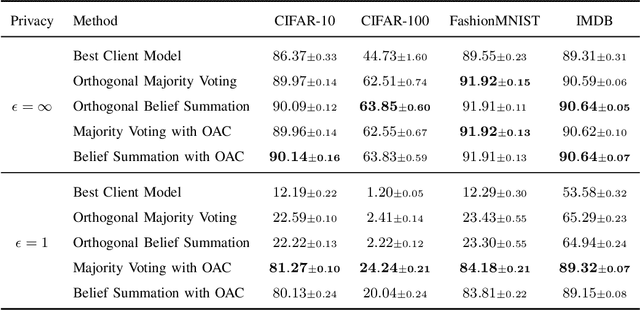

Abstract: We consider collaborative inference at the wireless edge, where each client's model is trained independently on its own local dataset. Clients are queried in parallel to make an accurate decision collaboratively. In addition to maximizing the inference accuracy, we also want to ensure the privacy of local models. To this end, we leverage the superposition property of the multiple access channel to implement bandwidth-efficient multi-user inference methods. Specifically, we propose different methods for ensemble and multi-view classification that exploit over-the-air computation. We show that these schemes perform better than their orthogonal counterparts, with statistically significant differences, while using fewer resources and providing privacy guarantees. We also provide experimental results verifying the benefits of the proposed over-the-air multi-user inference approach and perform an ablation study to demonstrate the effectiveness of our design choices. We share the source code of the framework publicly on GitHub to facilitate further research and reproducibility.

Communication Efficient Private Federated Learning Using Dithering

Sep 14, 2023

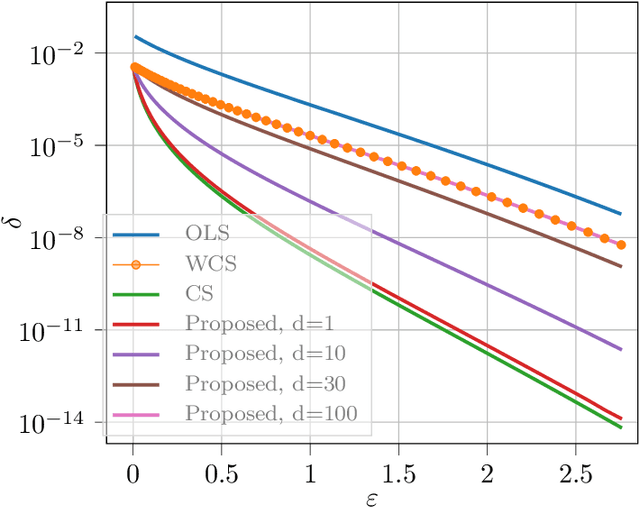

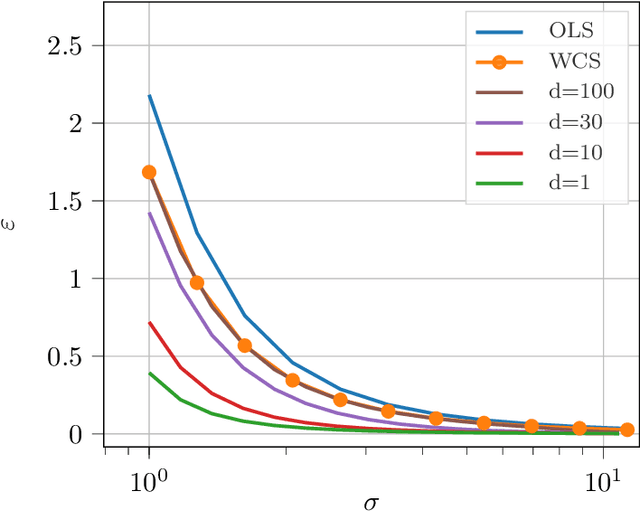

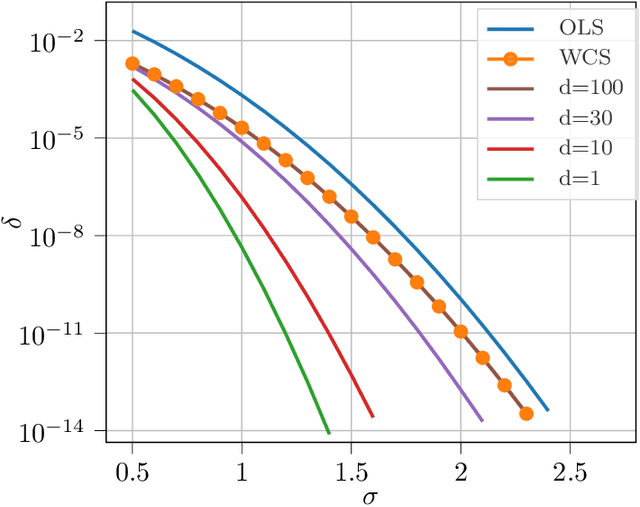

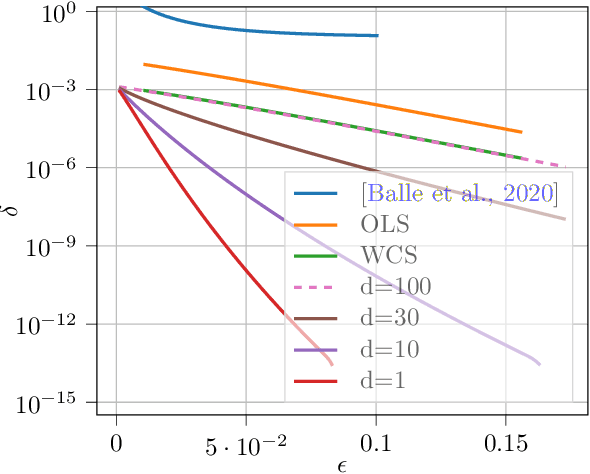

Abstract: The task of preserving privacy while ensuring efficient communication is a fundamental challenge in federated learning. In this work, we tackle this challenge in the trusted aggregator model and propose a solution that achieves both objectives simultaneously. We show that employing a quantization scheme based on subtractive dithering at the clients can effectively replicate the Gaussian (normal) noise addition process at the aggregator. This implies that we can guarantee the same level of differential privacy against other clients while substantially reducing the amount of communication required, compared with transmitting full-precision gradients and adding noise centrally. We also experimentally demonstrate that the accuracy of our proposed approach matches that of the full-precision gradient method.
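
The core property behind subtractive dithering fits in a few lines. If a client adds a dither drawn uniformly from [-Δ/2, Δ/2] before rounding to a grid of step Δ, and the aggregator subtracts the same dither value (here assumed to be shared, for example by regenerating it from a common seed), the reconstruction error is uniform on [-Δ/2, Δ/2] and independent of the input. The sketch below checks this empirically for two inputs; the function names are hypothetical, and how the paper turns this property into noise that matches the aggregator's Gaussian mechanism is not reproduced here.

```python
import random
import statistics

def dithered_quantize(x, step, rng):
    """Client: add a uniform dither in [-step/2, step/2], then round to the grid."""
    u = rng.uniform(-step / 2, step / 2)
    q = step * round((x + u) / step)  # only the integer index q / step needs sending
    return q, u

def reconstruct(q, u):
    """Aggregator: subtract the same dither value."""
    return q - u

def error_samples(x, step, n, seed):
    """Reconstruction errors for n independent dithers applied to the same input x."""
    rng = random.Random(seed)
    errs = []
    for _ in range(n):
        q, u = dithered_quantize(x, step, rng)
        errs.append(reconstruct(q, u) - x)
    return errs

# The error statistics should not depend on x: mean near 0, variance near step**2 / 12.
for x in (0.3, 7.77):
    errs = error_samples(x, step=1.0, n=100_000, seed=0)
    print(x, round(statistics.mean(errs), 3), round(statistics.pvariance(errs), 4))
```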

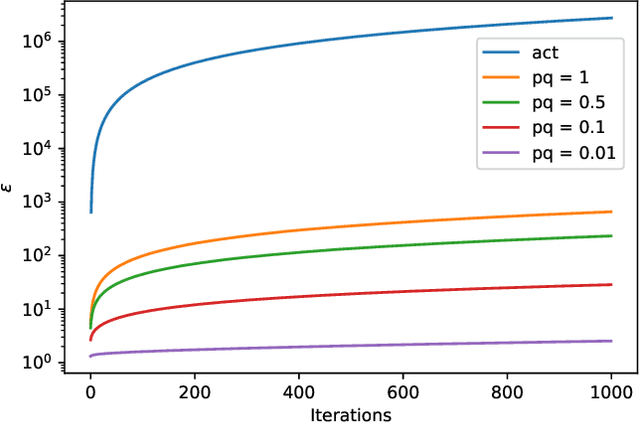

Privacy Amplification via Random Participation in Federated Learning

May 03, 2022

Abstract: Running a randomized algorithm on a subsampled dataset instead of the entire dataset amplifies differential privacy guarantees. In this work, in a federated setting, we consider random participation of the clients in addition to subsampling their local datasets. Since such random participation correlates the subsampling of samples belonging to the same client, we analyze the corresponding privacy amplification via non-uniform subsampling. We show that when the local datasets are small, the privacy guarantees via random participation are close to those of the centralized setting, in which the entire dataset is located in a single host and subsampled. On the other hand, when the local datasets are large, observing the output of the algorithm may disclose the identities of the sampled clients with high confidence. Our analysis reveals that, even in this case, privacy guarantees via random participation outperform those via local subsampling alone.
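
For reference, the textbook amplification bound for a single level of sampling is easy to evaluate: if a mechanism is ε-DP, running it on a Poisson subsample that keeps each record independently with probability q gives ln(1 + q(e^ε − 1))-DP under add/remove neighboring datasets. The sketch below evaluates this bound at two hypothetical endpoints: a uniform rate p·r (client participation probability p times local sampling rate r), which mirrors subsampling a centralized dataset, and the local rate r alone. It does not implement the paper's non-uniform subsampling analysis; per the abstract, random participation comes close to the first value for small local datasets and still beats the second for large ones. The rates are made up.

```python
import math

def amplified_epsilon(eps: float, q: float) -> float:
    """Epsilon of an eps-DP mechanism run on a Poisson subsample with rate q."""
    return math.log1p(q * math.expm1(eps))

eps = 1.0
p_client = 0.1  # HYPOTHETICAL probability that a client participates in a round
r_local = 0.05  # HYPOTHETICAL probability that a local sample is used

central_like = amplified_epsilon(eps, p_client * r_local)  # uniform rate p * r
local_only = amplified_epsilon(eps, r_local)               # credit local sampling only
print(f"central-like: {central_like:.4f}, local-only: {local_only:.4f}")
```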

Over-the-Air Ensemble Inference with Model Privacy

Feb 07, 2022

Abstract: We consider distributed inference at the wireless edge, where multiple clients with an ensemble of models, each trained independently on a local dataset, are queried in parallel to make an accurate decision on a new sample. In addition to maximizing inference accuracy, we also want to maximize the privacy of local models. We exploit the superposition property of the wireless medium to implement bandwidth-efficient ensemble inference methods. We introduce different over-the-air ensemble methods and show that these schemes perform significantly better than their orthogonal counterparts, while using fewer resources and providing privacy guarantees. We also provide experimental results verifying the benefits of the proposed over-the-air inference approach, whose source code is shared publicly on GitHub.
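
The bandwidth argument rests on the channel adding the clients' signals for free. A minimal sketch, assuming an idealized Gaussian multiple access channel with perfect synchronization and power control, and assuming each client sends its class-score vector uncoded: one simultaneous transmission delivers the sum of all score vectors plus noise, and the receiver takes the argmax. The function `ota_ensemble` is hypothetical and contains none of the privacy mechanisms the paper proposes.

```python
import random

def ota_ensemble(client_scores, noise_std, rng):
    """Receiver side of an idealized over-the-air ensemble.

    All clients transmit their score vectors simultaneously; the channel
    superimposes them and adds Gaussian noise, so the receiver observes
    sum_k scores_k + noise in a single use of the channel.
    """
    n_classes = len(client_scores[0])
    received = [
        sum(scores[c] for scores in client_scores) + rng.gauss(0.0, noise_std)
        for c in range(n_classes)
    ]
    return max(range(n_classes), key=lambda c: received[c])

scores = [[0.6, 0.3, 0.1], [0.2, 0.5, 0.3], [0.5, 0.4, 0.1]]  # toy per-client scores
print(ota_ensemble(scores, noise_std=0.05, rng=random.Random(0)))
```

An orthogonal scheme would need one slot per client to collect the same scores, whereas here all clients share a single slot. Summing soft scores can also differ from a majority vote: with scores [0.1, 0.9], [0.6, 0.4] and [0.6, 0.4], two of three clients favor class 0, but the summed scores [1.3, 1.7] select class 1.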

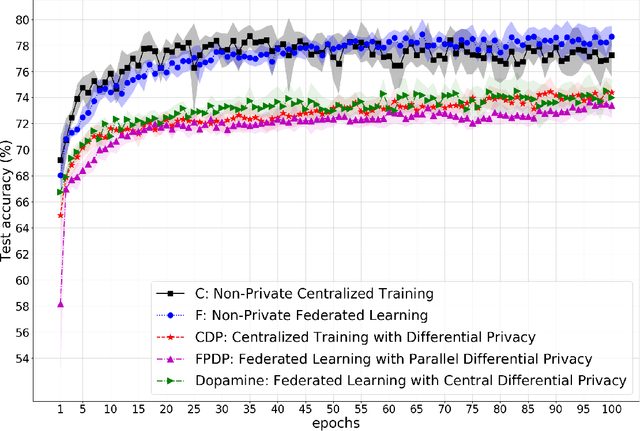

Dopamine: Differentially Private Federated Learning on Medical Data

Jan 29, 2021

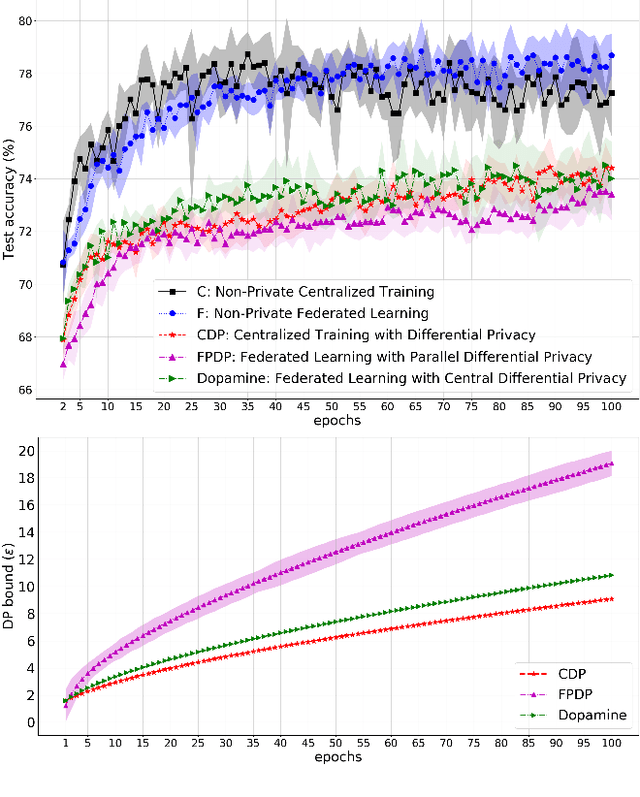

Abstract: While rich medical datasets are hosted in hospitals distributed across the world, concerns about patients' privacy are a barrier to using such data to train deep neural networks (DNNs) for medical diagnostics. We propose Dopamine, a system to train DNNs on distributed datasets, which employs federated learning (FL) with differentially private stochastic gradient descent (DPSGD) and, in combination with secure aggregation, can establish a better trade-off between the differential privacy (DP) guarantee and DNN accuracy than other approaches. Results on a diabetic retinopathy (DR) task show that Dopamine provides a DP guarantee close to that of the centralized training counterpart, while achieving better classification accuracy than FL with parallel DP, where DPSGD is applied without coordination. Code is available at https://github.com/ipc-lab/private-ml-for-health.
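
The DPSGD building block mentioned above can be sketched as follows: clip each example's gradient to L2 norm C, sum the clipped gradients, add Gaussian noise with standard deviation σ·C per coordinate, and divide by the batch size. This is the generic DP-SGD step in plain Python with hypothetical names (`clip`, `dpsgd_gradient`); it omits privacy accounting, federated coordination and secure aggregation, and it is not taken from the Dopamine repository linked above.

```python
import math
import random

def clip(grad, max_norm):
    """Scale grad down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def dpsgd_gradient(per_example_grads, max_norm, noise_multiplier, rng):
    """One DP-SGD gradient estimate: clip, sum, add Gaussian noise, average."""
    batch_size = len(per_example_grads)
    dim = len(per_example_grads[0])
    clipped = [clip(g, max_norm) for g in per_example_grads]
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    # Noise std scales with the clipping norm, which bounds each example's influence.
    noisy = [s + rng.gauss(0.0, noise_multiplier * max_norm) for s in summed]
    return [v / batch_size for v in noisy]

grads = [[3.0, 4.0], [0.3, 0.4], [-1.0, 2.0]]  # toy per-example gradients
print(dpsgd_gradient(grads, max_norm=1.0, noise_multiplier=1.1, rng=random.Random(0)))
```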

Private Wireless Federated Learning with Anonymous Over-the-Air Computation

Nov 17, 2020

Abstract: We study the problem of differentially private wireless federated learning (FL). In conventional FL, differential privacy (DP) guarantees can be obtained by injecting additional noise into local model updates before transmitting them to the parameter server (PS). In the wireless FL scenario, we show that the privacy of the system can be boosted by exploiting over-the-air computation (OAC) and anonymizing the transmitting devices. In OAC, devices transmit their model updates simultaneously and in an uncoded fashion, resulting in a much more efficient use of the available spectrum, but also in natural anonymity for the transmitting devices. The proposed approach further improves the performance of private wireless FL by reducing the amount of noise that must be injected.
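
A back-of-the-envelope calculation shows why superposition can reduce the noise each device injects. Assume, for illustration only, that the PS must see the aggregate perturbed by Gaussian noise of standard deviation σ_target, that device noise and receiver noise are independent Gaussians, and that the channel adds the aligned signals exactly. The aggregate noise variance is then N·σ_d² + σ_ch², so each of N devices needs only σ_d = sqrt((σ_target² − σ_ch²)/N), rather than the full σ_target it would need if its update were received on its own. The helper name is hypothetical, and the sketch does not model the additional gain from anonymity that the abstract describes.

```python
import math

def per_device_noise_std(target_std, channel_std, n_devices):
    """Noise std each device adds so that N device noises plus receiver noise
    together reach target_std (all Gaussian and independent, by assumption)."""
    remaining_var = max(target_std ** 2 - channel_std ** 2, 0.0)
    return math.sqrt(remaining_var / n_devices)

for n in (1, 10, 100):
    print(n, per_device_noise_std(target_std=1.0, channel_std=0.5, n_devices=n))
```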
